2023:Data Export (Export Definition): Difference between revisions

Created page with "<blockquote style="font-size:125%"> '''''Data Export''''' is one of the '''''Export Types''''' available when configuring an '''''Export Behavior'''''. It exports extracted document data over a '''Data Connection''', allowing users to export data to a SQL or ODBC compliant database. </blockquote> {|cellpadding="10" cellspacing="5" |-style="background-color:#36b0a7; color:white" |style="font-size:14pt"|'''FYI''' | Prior to version 2021, extracted data was exported using..." |

|||

| Line 98: | Line 98: | ||

* The extracted index data, which will be exported to a database. | * The extracted index data, which will be exported to a database. | ||

|| [[File:database_export_001.gif]] | || [[File:database_export_001.gif]] | ||

|} | |} | ||

| Line 181: | Line 173: | ||

#* "YTD" | #* "YTD" | ||

| | | | ||

[[File: | [[File:2023_Data-Export_02_HowTo_01_01.png]] | ||

|- | |- | ||

|valign=top| | |valign=top| | ||

| Line 206: | Line 198: | ||

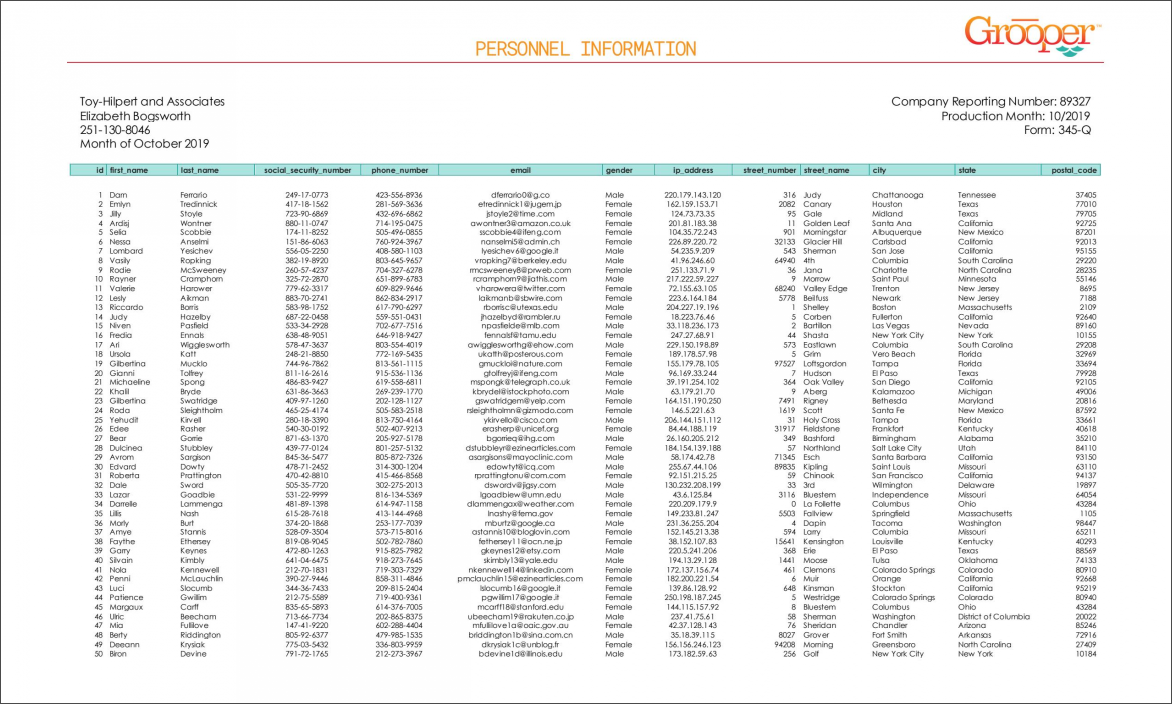

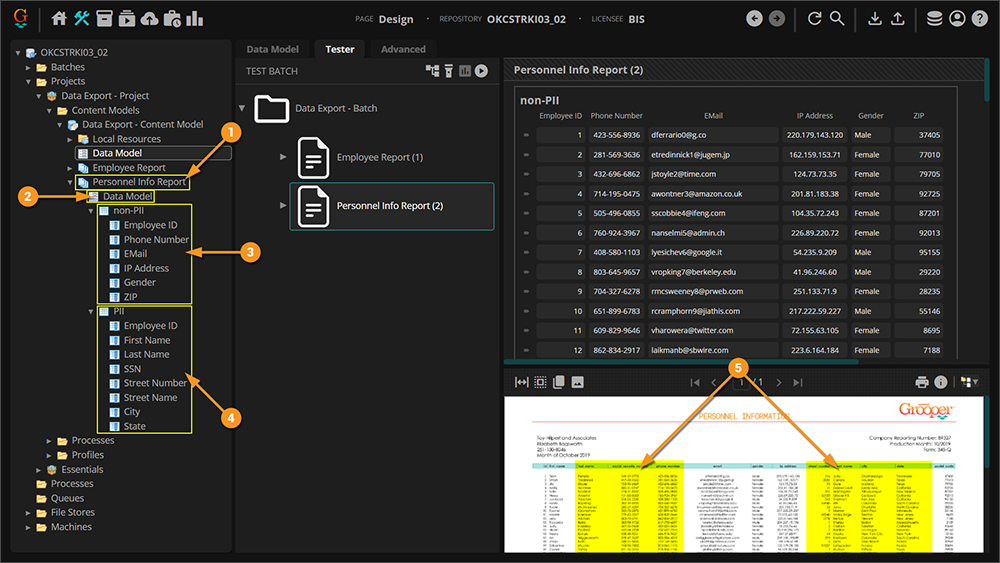

# This set-up uses a single table extractor to collect each row on the document, but reports it to two different '''Data Table''' objects in Grooper (the "non-PII" '''Data Table''' and the "PII" '''Data Table'''). This will ultimately allow us to parse data from these columns, and place the PII related information into a separate database. | # This set-up uses a single table extractor to collect each row on the document, but reports it to two different '''Data Table''' objects in Grooper (the "non-PII" '''Data Table''' and the "PII" '''Data Table'''). This will ultimately allow us to parse data from these columns, and place the PII related information into a separate database. | ||

| | | | ||

[[File: | [[File:2023_Data-Export_02_HowTo_01_02.png]] | ||

|} | |} | ||

| Line 226: | Line 218: | ||

| style="vertical-align:top; width:40%" | | | style="vertical-align:top; width:40%" | | ||

=== Option 1 === | === Option 1 === | ||

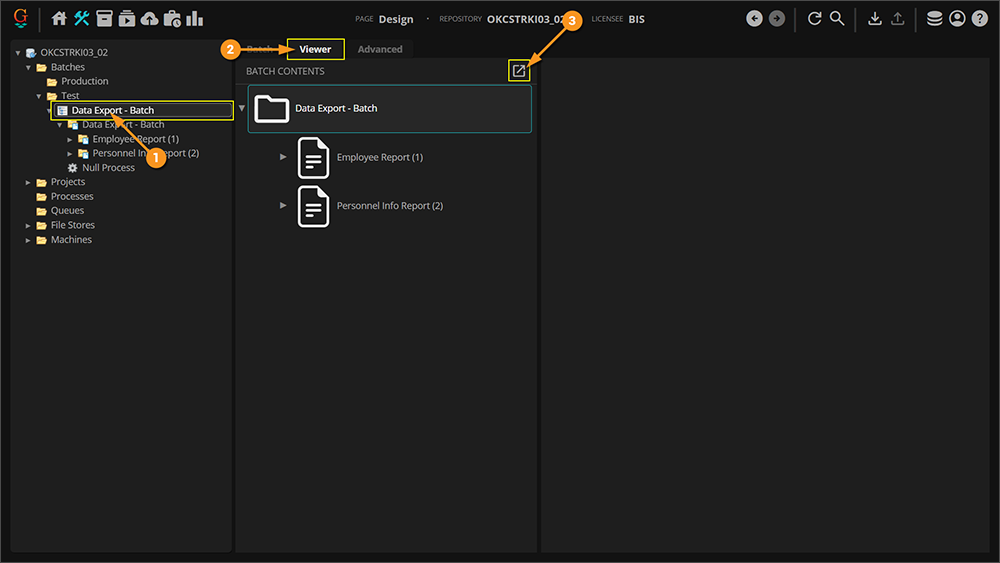

# First, navigate to | # First, navigate to the '''Batch''' object in the node tree. | ||

# From there, click on the "Viewer" tab. | |||

# Click the "Open in review page" button. | |||

# From there, click on the " | |||

# | |||

| | | | ||

[[File: | [[File:2023_Data-Export_02_HowTo_01_03.png]] | ||

|- | |- | ||

| style="vertical-align:top; width:40%" | | |||

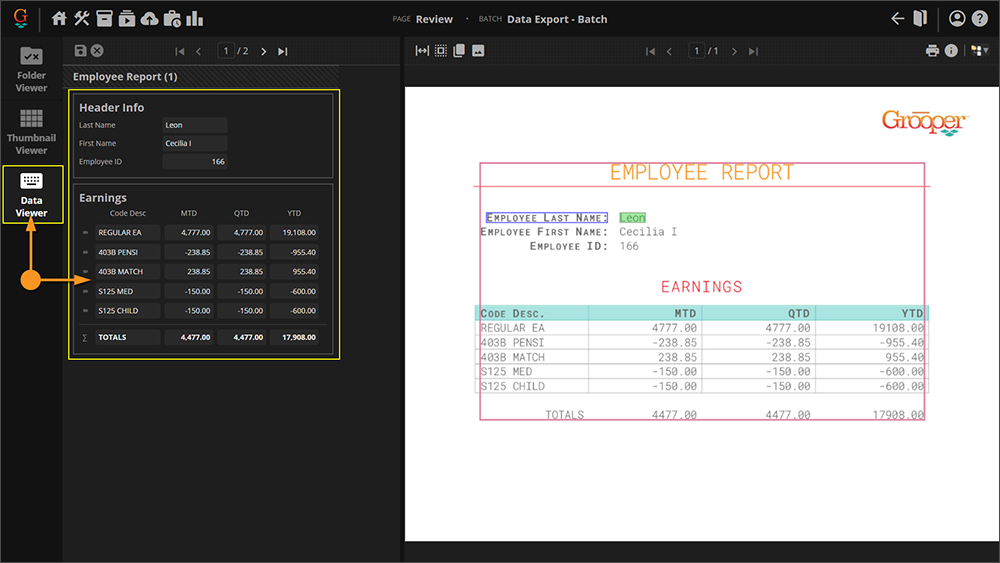

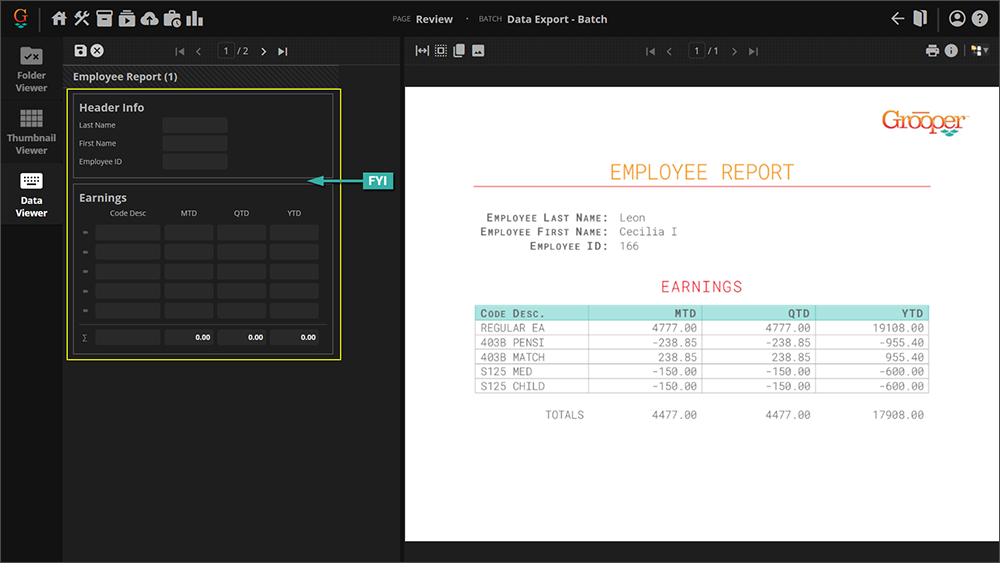

Click the "Data Viewer" tab and from there you can review review the data. | |||

| | |||

[[File:2023_Data-Export_02_HowTo_01_04.png]] | |||

|- | |||

|valign=top| | |valign=top| | ||

{|cellpadding="10" cellspacing="5" | {|cellpadding="10" cellspacing="5" | ||

| Line 243: | Line 239: | ||

|} | |} | ||

| | | | ||

[[File: | [[File:2023_Data-Export_02_HowTo_01_05.png]] | ||

|} | |} | ||

| Line 252: | Line 248: | ||

Another means of verifying is to actually view the file created by the '''Extract''' activity and stored in the Grooper repository's file store location. | Another means of verifying is to actually view the file created by the '''Extract''' activity and stored in the Grooper repository's file store location. | ||

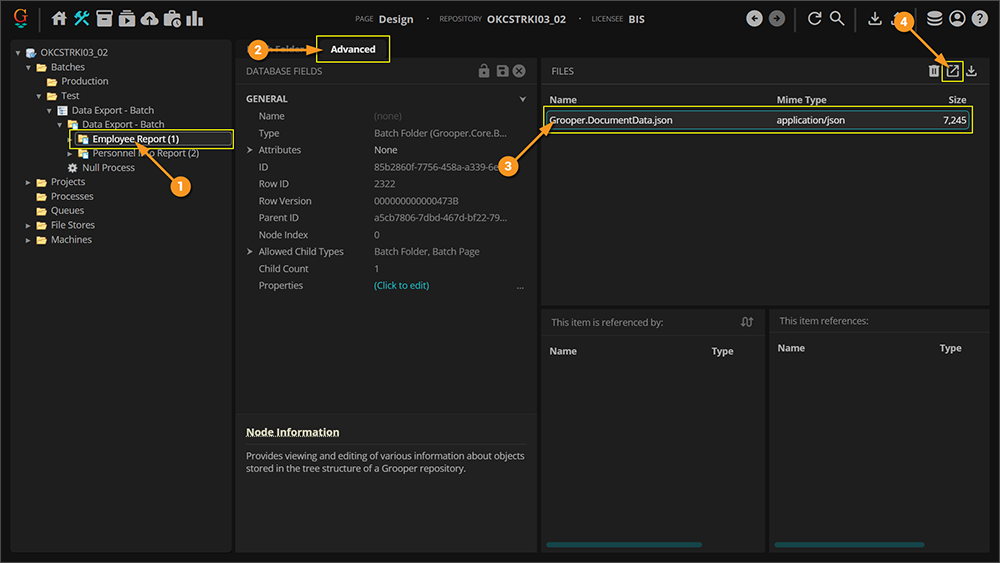

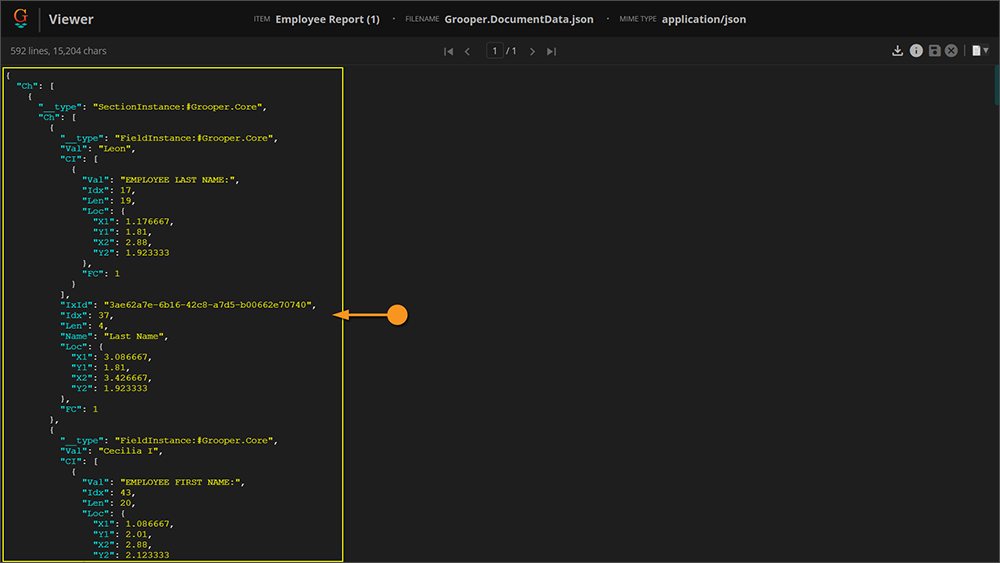

# | # In this case, select a '''Batch Folder''' that is a child of the '''Batch'''. In this case the "Employee Report(1)" is selected. | ||

# Click the "Advanced" tab. | # Click the "Advanced" tab. | ||

# Click the " | # Select the JSON file in the files panel. | ||

# Click the "Display file" button. | |||

| | |||

[[File:2023_Data-Export_02_HowTo_01_06.png]] | |||

|- | |||

| style="vertical-align:top; width:40%" | | |||

A new tab will open in your browser and you will be shown the contents of the JSON file. This is the file the '''Extract''' activity generates when it processes a '''Batch Folder'''. It serializes all the extracted '''Data Elements'''' values for the document's '''Data Model'''. | |||

| | | | ||

[[File: | [[File:2023_Data-Export_02_HowTo_01_07.png]] | ||

|} | |} | ||

</tab> | </tab> | ||

Revision as of 12:19, 20 October 2023

Data Export is one of the Export Types available when configuring an Export Behavior. It exports extracted document data over a Data Connection, allowing users to export data to a SQL or ODBC compliant database.

| FYI |

Prior to version 2021, extracted data was exported using the Database Export activity. With the introduction of Export Behaviors in version 2021, this changed. Data is now exported to databases using the Export activity and one or more Data Export behavior definitions. |

About

|

You may download and import the file below into your own Grooper environment (version 2021). This contains Batches with the example document(s) and a Content Model discussed in this article |

The most important goal of Grooper is to deliver accurate data to line of business systems that allow the information to be integrated into impactful business decisioning. Tables in databases remain, to this day, one of the main vessels by which this information is stored. Data Export is one of the main ways to deliver data collected in Grooper.

|

Data Export allows us to take a document... |

...and its extracted data... |

...and map that data to column locations in a database table. |

There are three important things to understand when using and configuring Data Export to export data to a database:

- The Export activity.

- Data Elements

- Data Connections

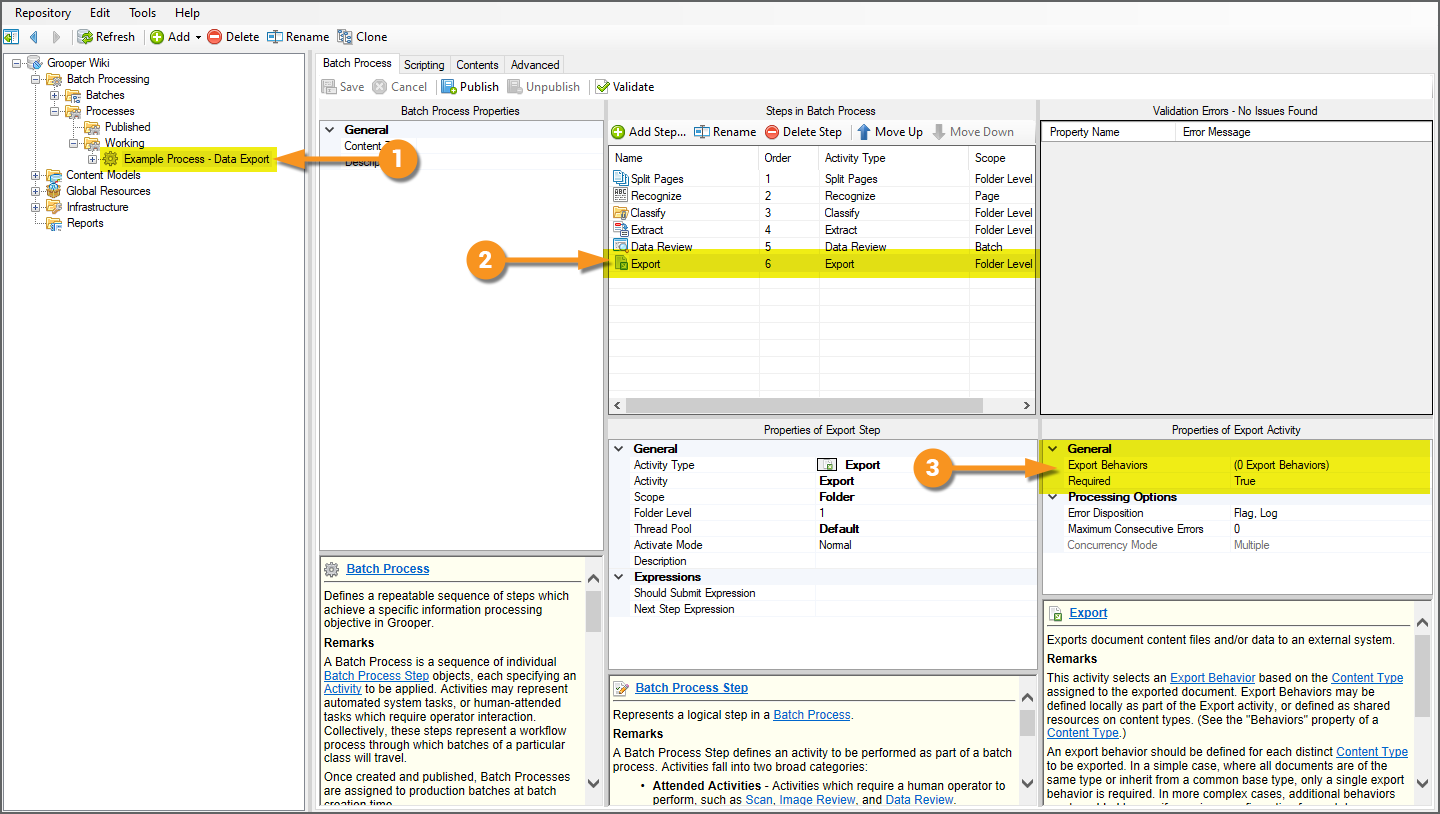

The Export Activity

Grooper's Export activity is the mechanism by which Grooper-processed document content is delivered to an external storage platform. Export configurations are defined by adding Export Type definitions to Export Behaviors. Data Export is the Export Type designed to export Batch Folder document data collected by the Extract activity to a Microsoft SQL Server or ODBC-compliant database server.

For more information on configuring Export Behaviors, please visit the full Export activity article.

Data Elements

Data Export is the chief delivery device for "collection" elements. Data is collected in Grooper by executing the Extract activity, extracting values from a Batch Folder according to its classified Document Type's Data Model.

A Data Model in Grooper is a digital representation of document data targeted for extraction, defining the data structure for a Content Type in a Content Model. Data Models are objects comprised of Data Element objects, including:

- Data Fields used to target single field values on a document.

- Data Tables and their child Data Columns used to target tabular data on a document.

- Data Sections used to divide a document into sections to simplify extraction logic and/or target repeating sections of extractable Data Elements on a single document.

With Data Models and their child Data Elements configured, Grooper collects values using the Extract activity.

Depending on the Content Type hierarchy in a Content Model and/or Data Element hierarchy in a Data Model, there will be a collection, or "set", of values for varying data scope of a fully extracted Data Model's hierarchy. That may be the full data scope of the Data Model, including any inherited Data Elements inherited from parent Data Models. It may be a narrower scope of Data Elements like a child Data Section comprised of its own child Data Fields.

Understanding this will be important as Data Export has the ability to take full advantage of Grooper's hierarchical data modeling to flatten complex and inherited data structures. Understanding Data Element hierarchy and scope will also be critical when exporting data from a single document to multiple different database tables to ensure the right data exports to the right places.

Data Connections

Data Export uses a configured Data Connection object to establish a link to SQL or ODBC compliant database tables in a database and intelligently populate said tables. Once this connection is established, collected Data Elements can be mapped to corresponding column locations in one or multiple database tables. Much of Data Export's configuration is assigning these data mappings. The Data Connection presents these mappable data endpoints to Grooper as well as allowing data content to flow from Grooper to the database table when the Export activity processes each Batch Folder in a Batch.

Furthermore, not only can Grooper connect to existing databases using a Data Connection, but it can create whole new databases as well as database tables once a connection to the database server is established.

We discuss how to create Data Connections, add a new database from a Data Connection, and add a new database table from a Data Connection in the #Configuring a Data Connection tutorial below.

| FYI | We have improved integration with PostgreSQL, Db2, MySQL, and Oracle using the ODBC connection type in version 2021!

While not fully supported in previous versions of Grooper, you can now connect to these data sources with a Data Connection seamlessly in Grooper, allowing for full database export operations via Data Export. |

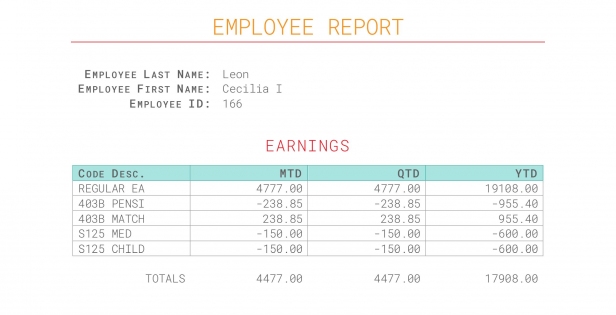

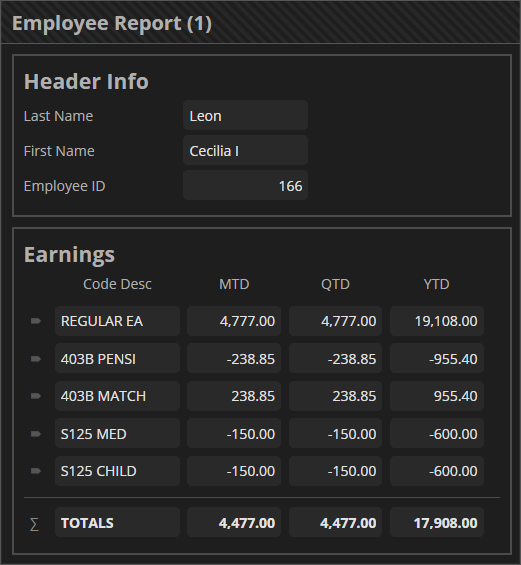

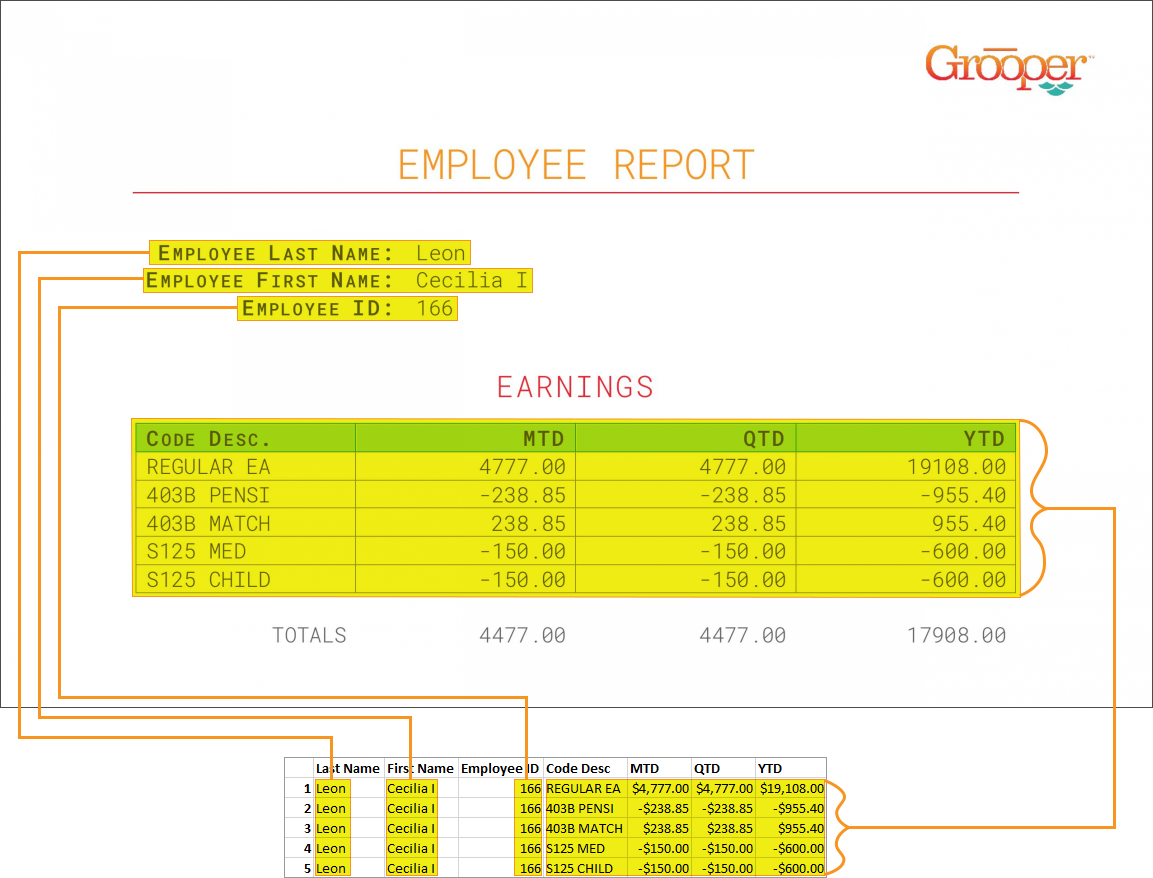

How To

Understanding the Forms

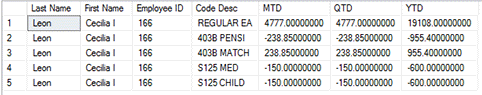

Document 1: Employee ReportThe thing to understand about this document is some of its data share a "one-to-many" relationship. Some of the data is described as "single instance" data. These are individual fields like "Employee Last Name", "Employee First Name" and "Employee ID". For each document, there is only one value for each of these fields. These values are only listed once, and hence only collected once during extraction. Some of the data, however, is described as "multi-instance" data. The "Earnings" table displays a dynamic amount of rows, for which there may be a varying number of data for its columns ("Code Desc", "MTD", "QTD", "YTD") depending on how many rows are in the table. There are multiple instances of the "YTD" value for the whole table (and therefore the whole document). The single instance data, as a result of only being listed once on the document, will only be collected once, but needs to be married to each row of information from the table, in one way or another. The "one" "Employee ID" value, for example, pertains to the "many" different table rows. This document is meant to show how to flatten data structures. While the single instance data is only collected once, it will be reported many times upon exporting to a database table. |

|||

Document 2: Personnel Information ReportThe second document is essentially one big table of personnel information (name, address, email, phone number and the like). While we ultimately want to collect data from all rows in this table, there are potentially two sets of information here. Some of it is generic personnel information, but some of it is "personally identifiable information" or PII. This information should be protected for legal reasons. As a result, we will export collected data to two database tables (with the assumption that the second table is "protected".) This document is meant to demonstrate how to export to multiple tables via one Export Behavior.

|

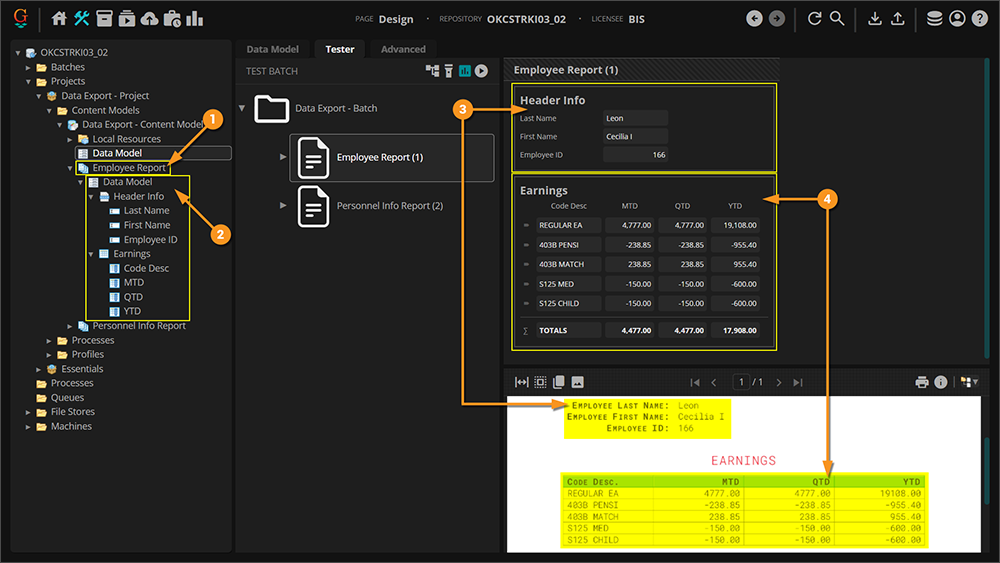

Understanding the Content Model

The Content Model provided for this tutorial is named "Example Model - Data Export". This Content Model is designed to extract the data for these two different kinds of documents, each represented by its own Document Type.

The Employee Report Document Type

|

|

The Personnel Info Report Document Type

|

Verifying Index Data

Before the Database Export activity can send data, it must have data!

It's easy to get in the habit of testing extraction on a Data Field or a Data Model and feel good about the results, but it must be understood that the information displayed when doing so is in memory, or temporary. When testing a Data Export configuration, it's a good idea to ensure extracted data is actually present for document Batch Folders whose data you want to export.

When the Extract activity runs, it executes all extraction logic for the Data Model tied to a Batch Folder's classified Document Type. For each Batch Folder document, it creates "Index Data" and marries it to the Batch Folder via a JSON file called Grooper.DocumentData.json.

A couple of ways to verify its existence are as follows:

Option 1

|

|||

|

Click the "Data Viewer" tab and from there you can review review the data. |

|||

|

Option 2Another means of verifying is to actually view the file created by the Extract activity and stored in the Grooper repository's file store location.

|

|

|

A new tab will open in your browser and you will be shown the contents of the JSON file. This is the file the Extract activity generates when it processes a Batch Folder. It serializes all the extracted Data Elements' values for the document's Data Model. |

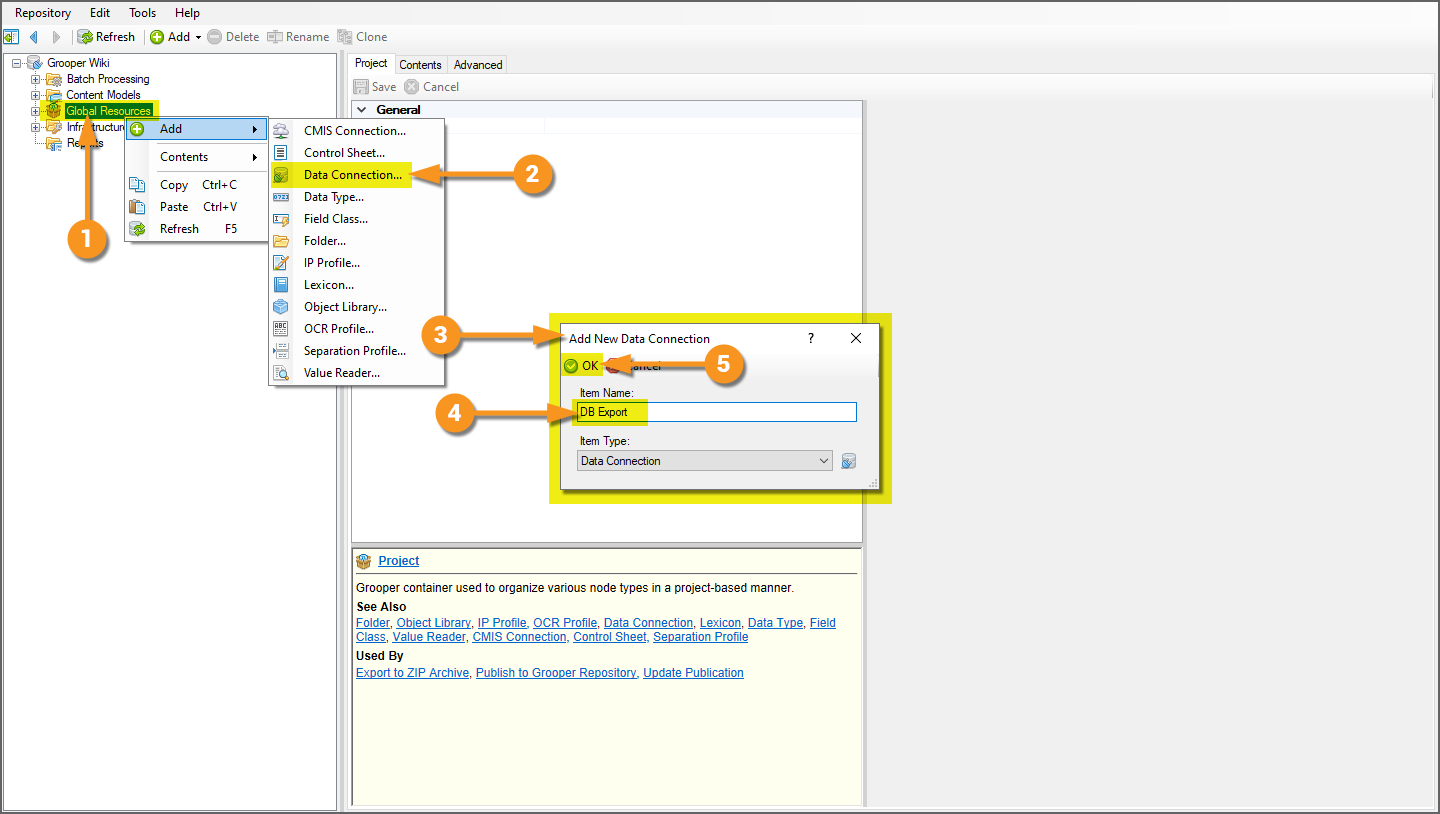

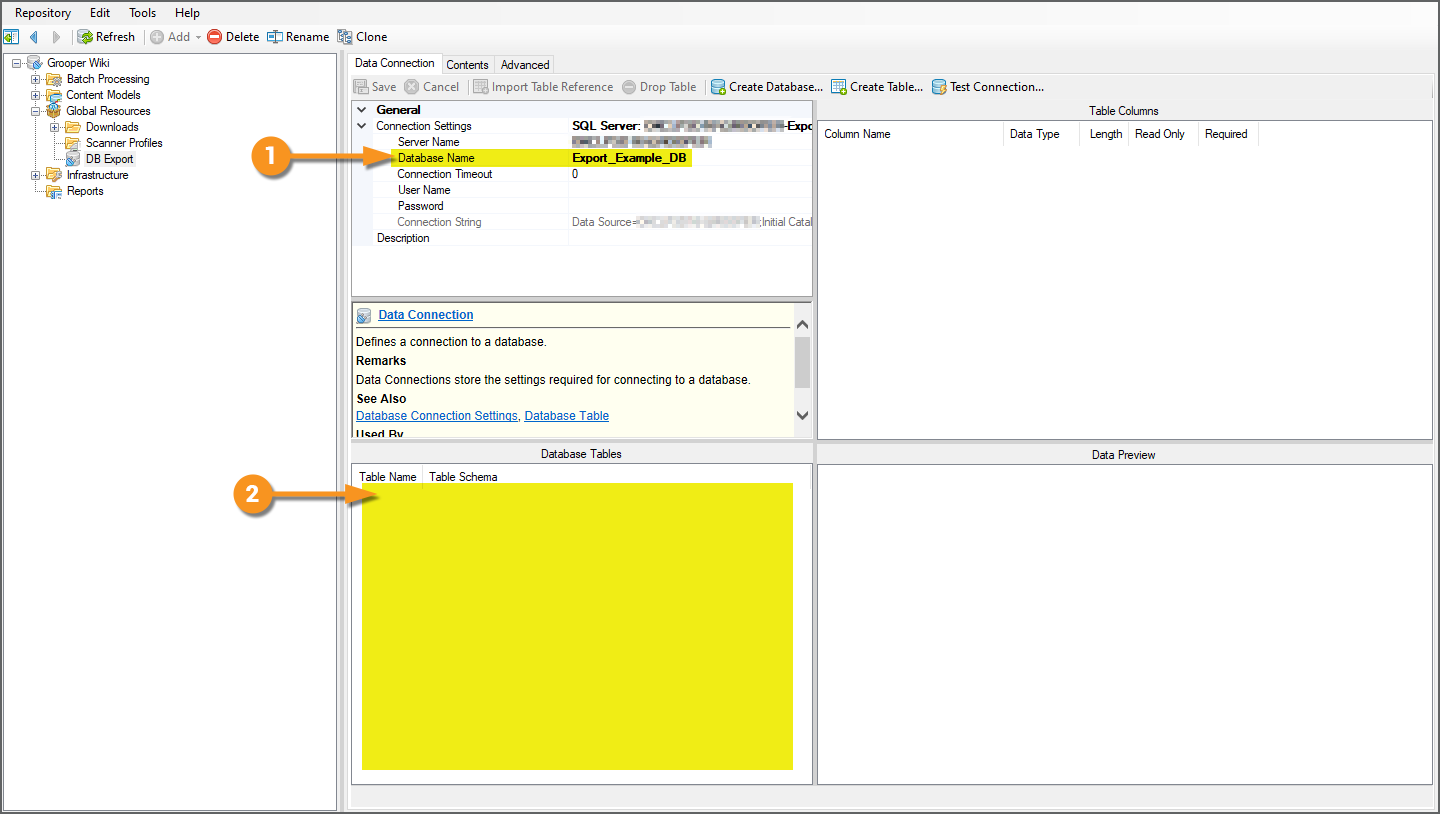

Configuring a Data Connection

In order for the Data Export to run, it first needs an established connection to a database and subsequent table(s).

Grooper can connect to an existing database and import references to its tables using a Data Connection object. Once connected to the database server, you can even have Grooper create a database AND create tables based on Data Model structures present in Grooper!

In the following tutorial we will cover how to:

- Create a new Data Connection object.

- Connect to a database server using the Data Connection.

- Create a new database from the Data Connection.

- Create a database table using the Data Models in our example Content Model.

It is worth noting that this article cannot tell you specifics about permissions in your own environment. The configuration for this article uses Microsoft SQL Server and has given the active Active Directory user full DB Admin privileges to the SQL environment.

Create a Data Connection

|

New Data Connections are added to the Global Resources folder of the Node Tree.

|

|

|

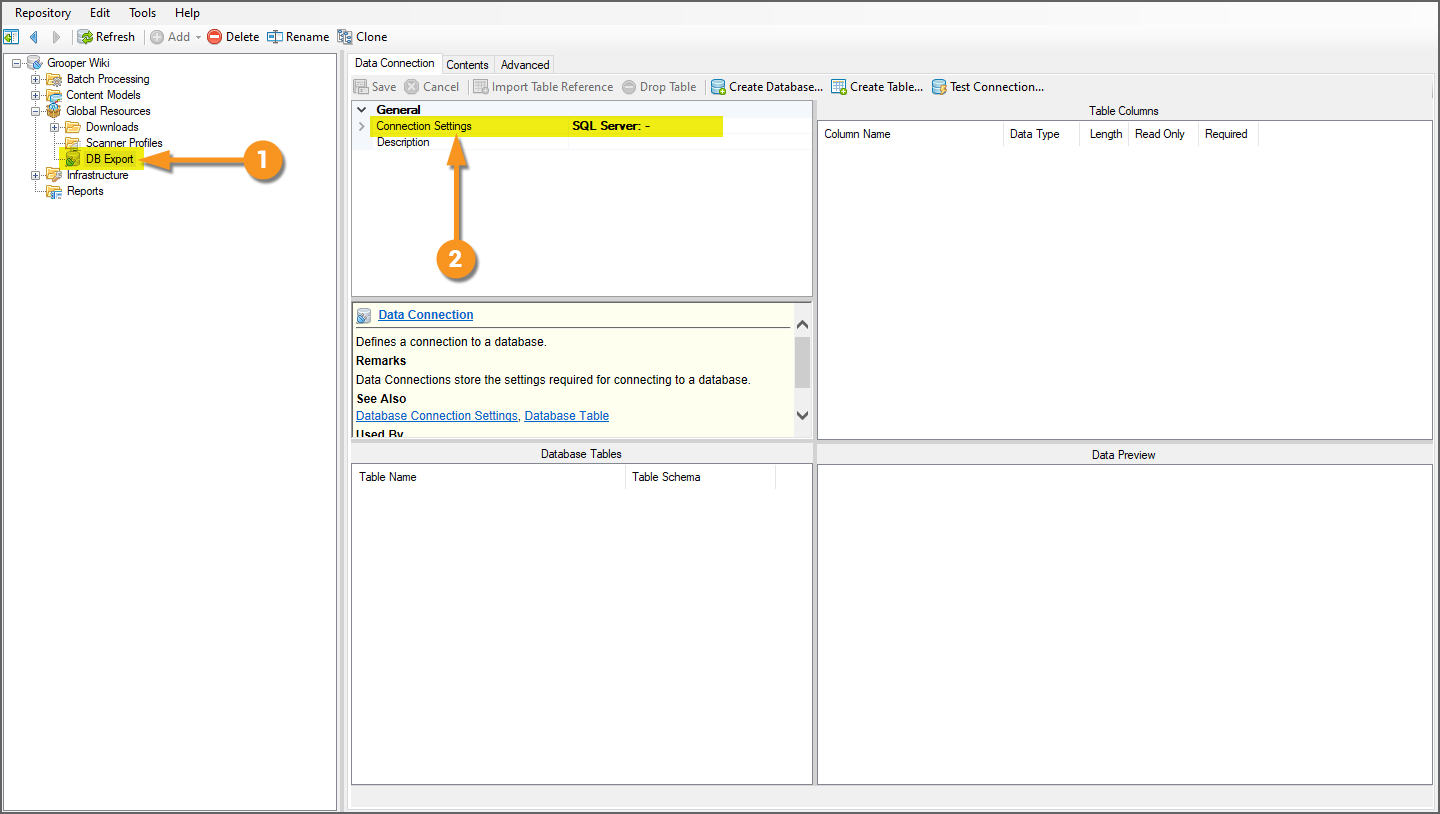

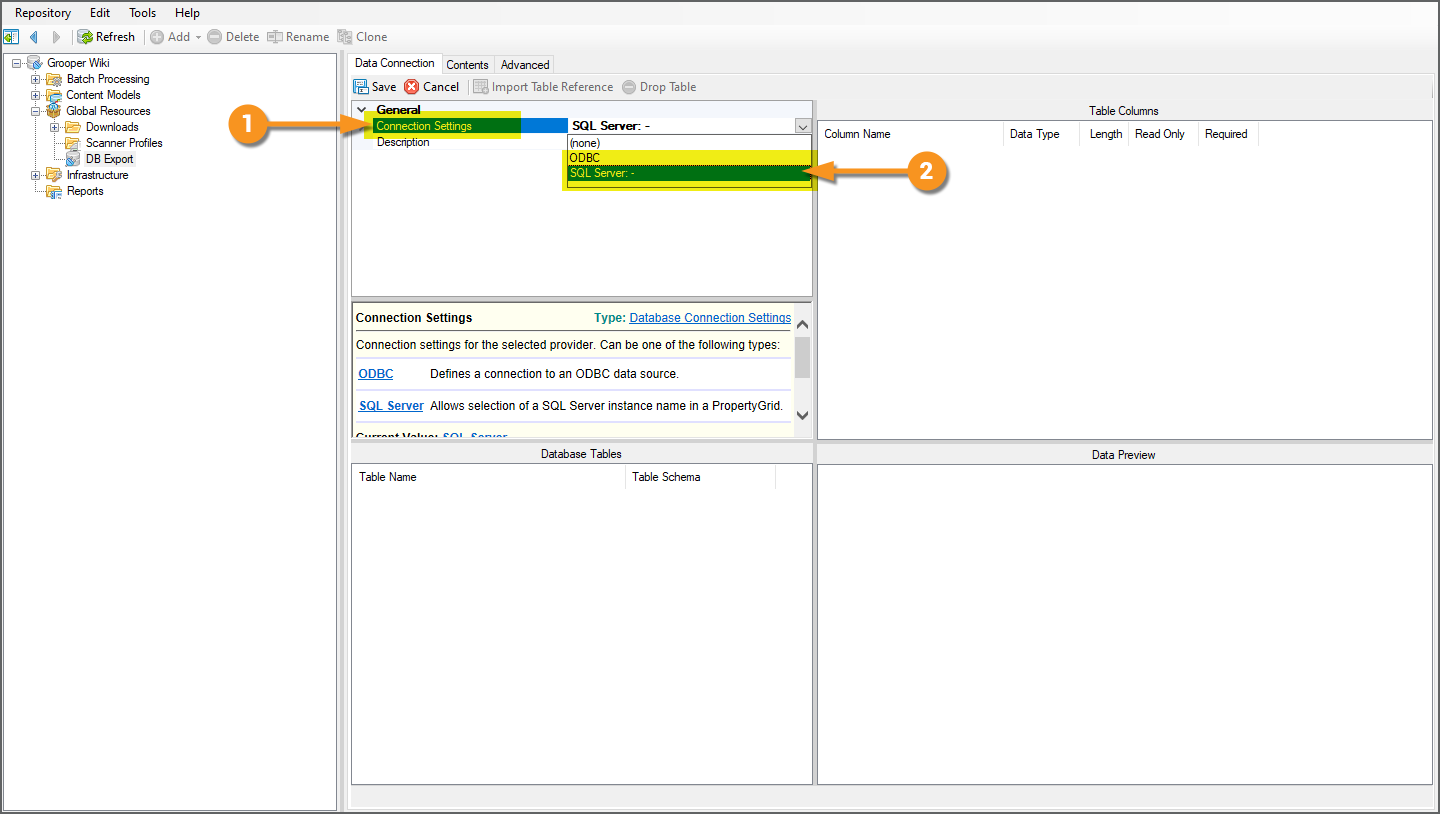

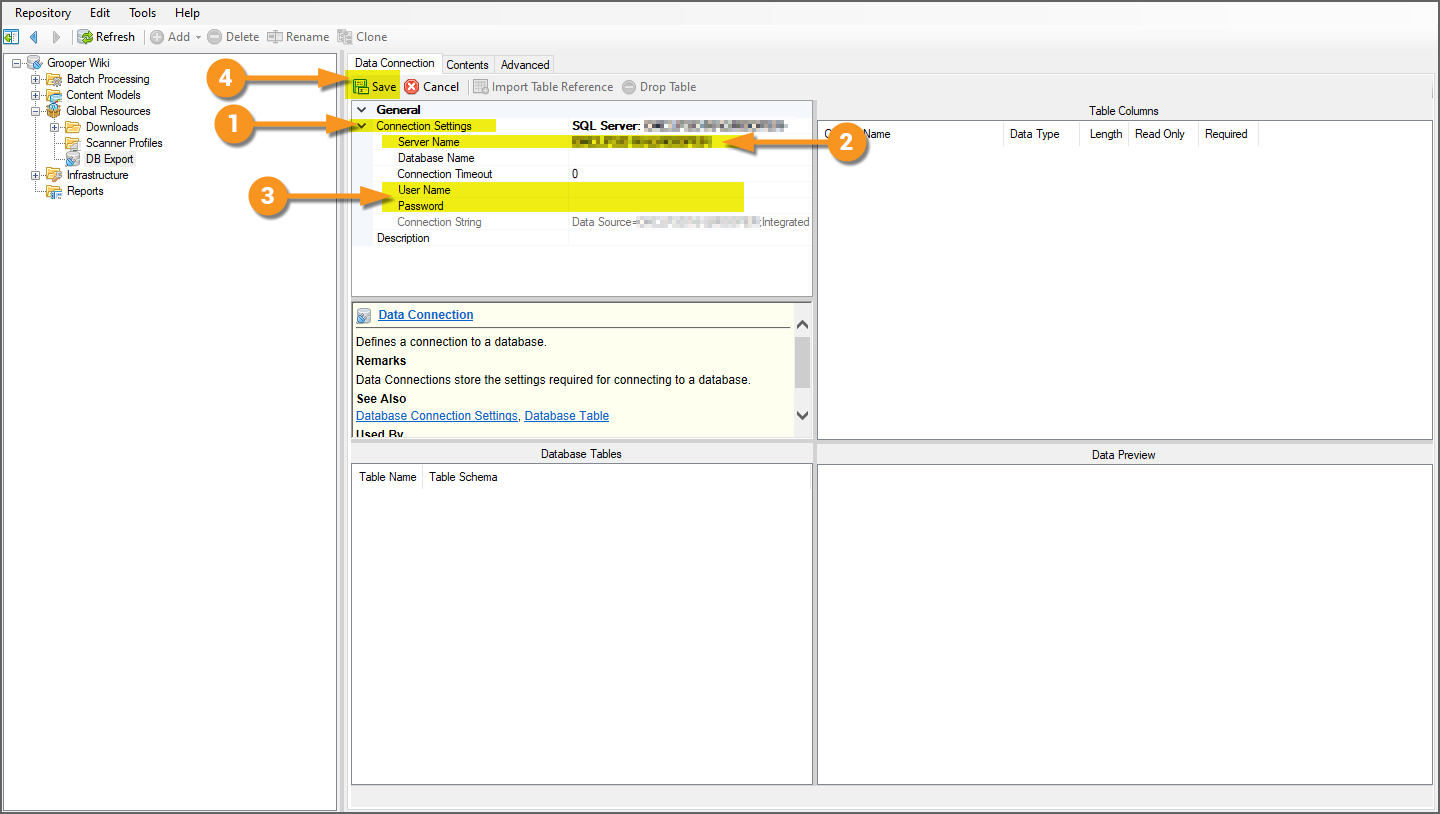

Configure Connection Settings

|

Regardless whether you want to connect to an existing database or create a new one from Grooper, your first step is always the same. You must first connect to a database server. Grooper can connect to Microsoft SQL servers or any ODBC (Open Database Connectivity) compliant data source. For the purposes of this tutorial, we will connect to a SQL server.

|

|

|

Next, we need to define settings to access the database server. All you really need to do this is the server's name and access rights.

|

|

|

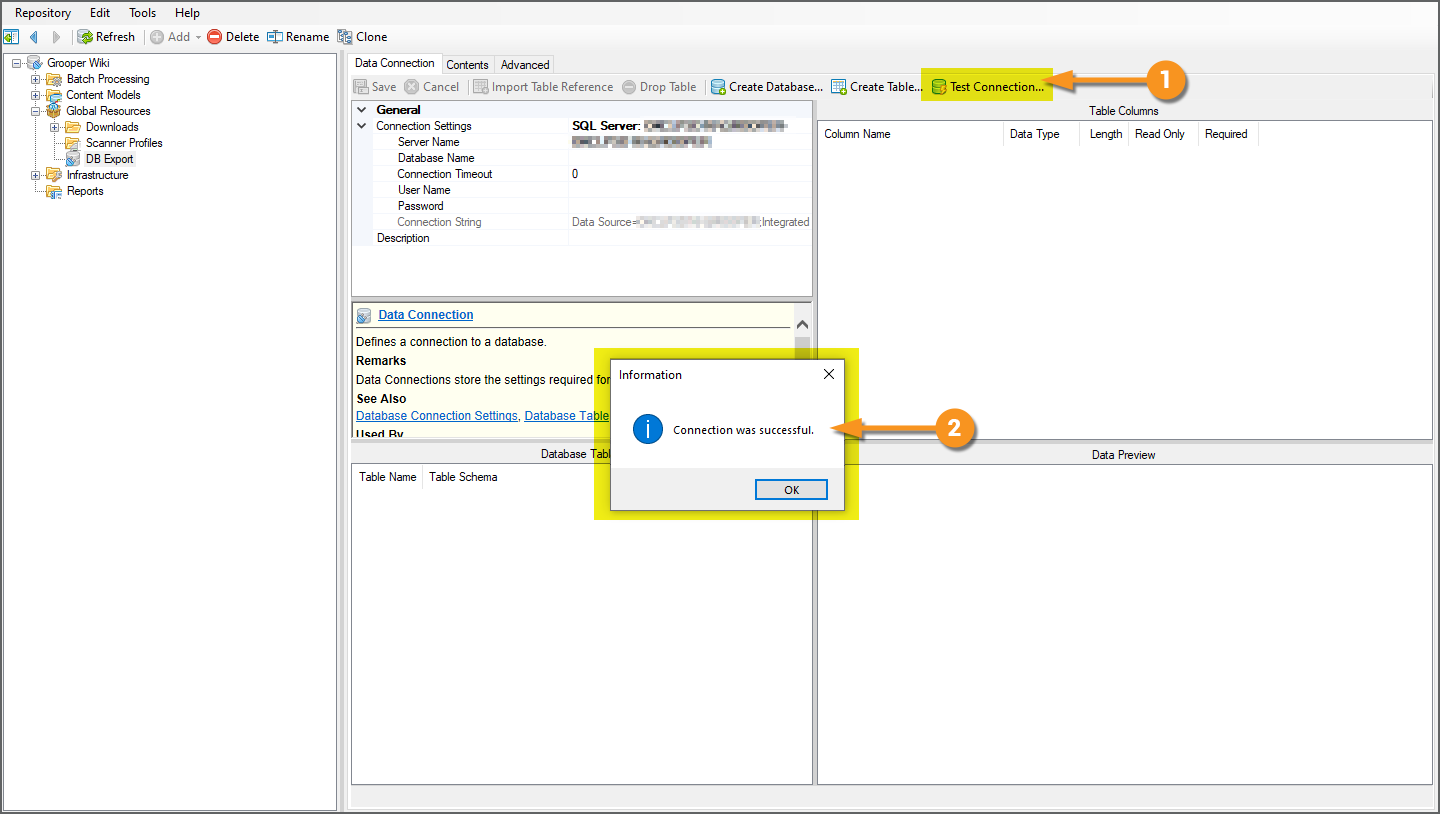

That's it! You're officially connected to the database server now. We can now connect to existing databases, import references to their tables (Keep this in the back of your mind. This will be important later.), create new databases, and new database tables.

|

At this point, you have two options:

- Connect to an existing database

- Create a new database

|

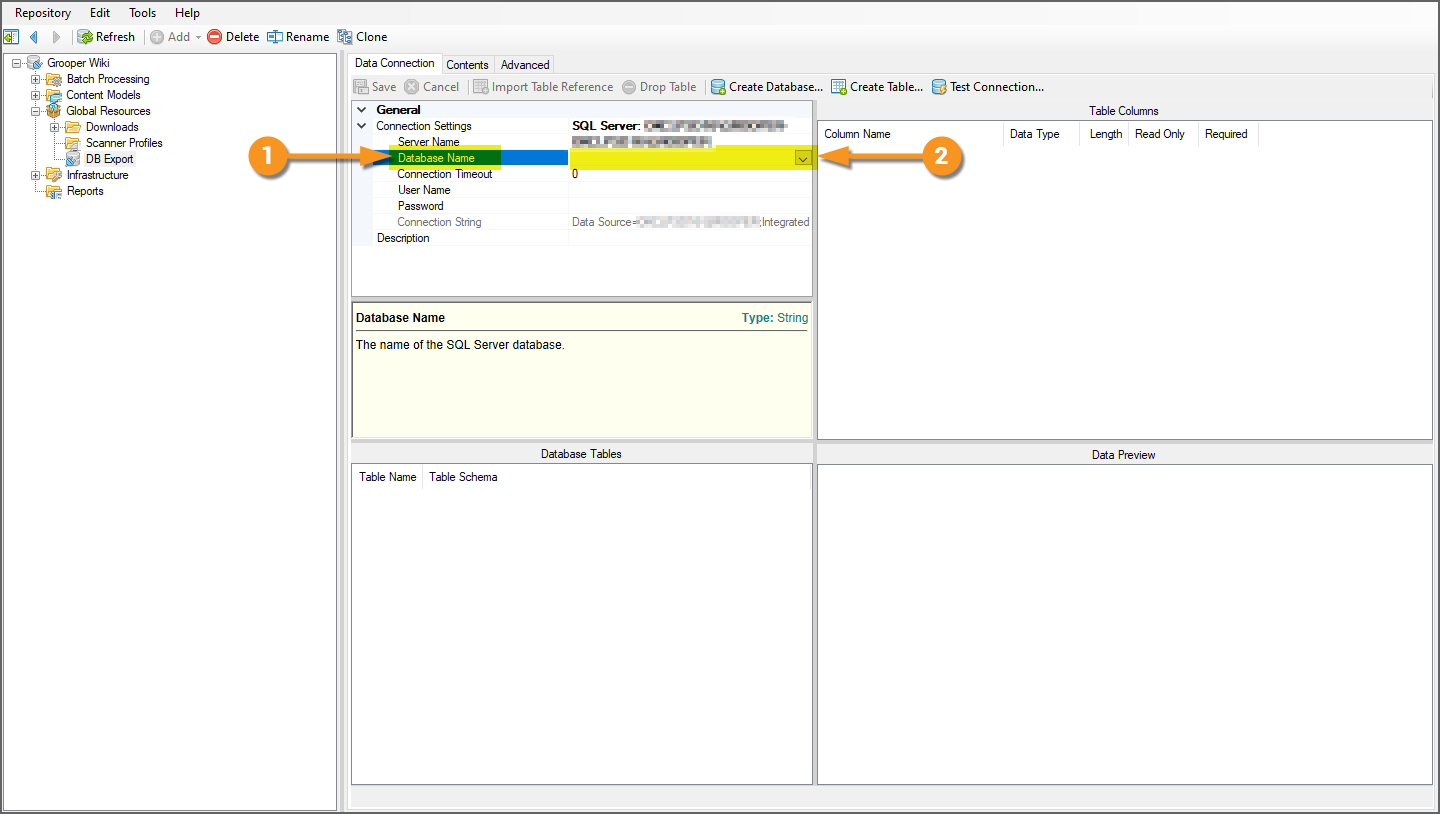

If you wanted to connect to an existing database, it's very easy.

|

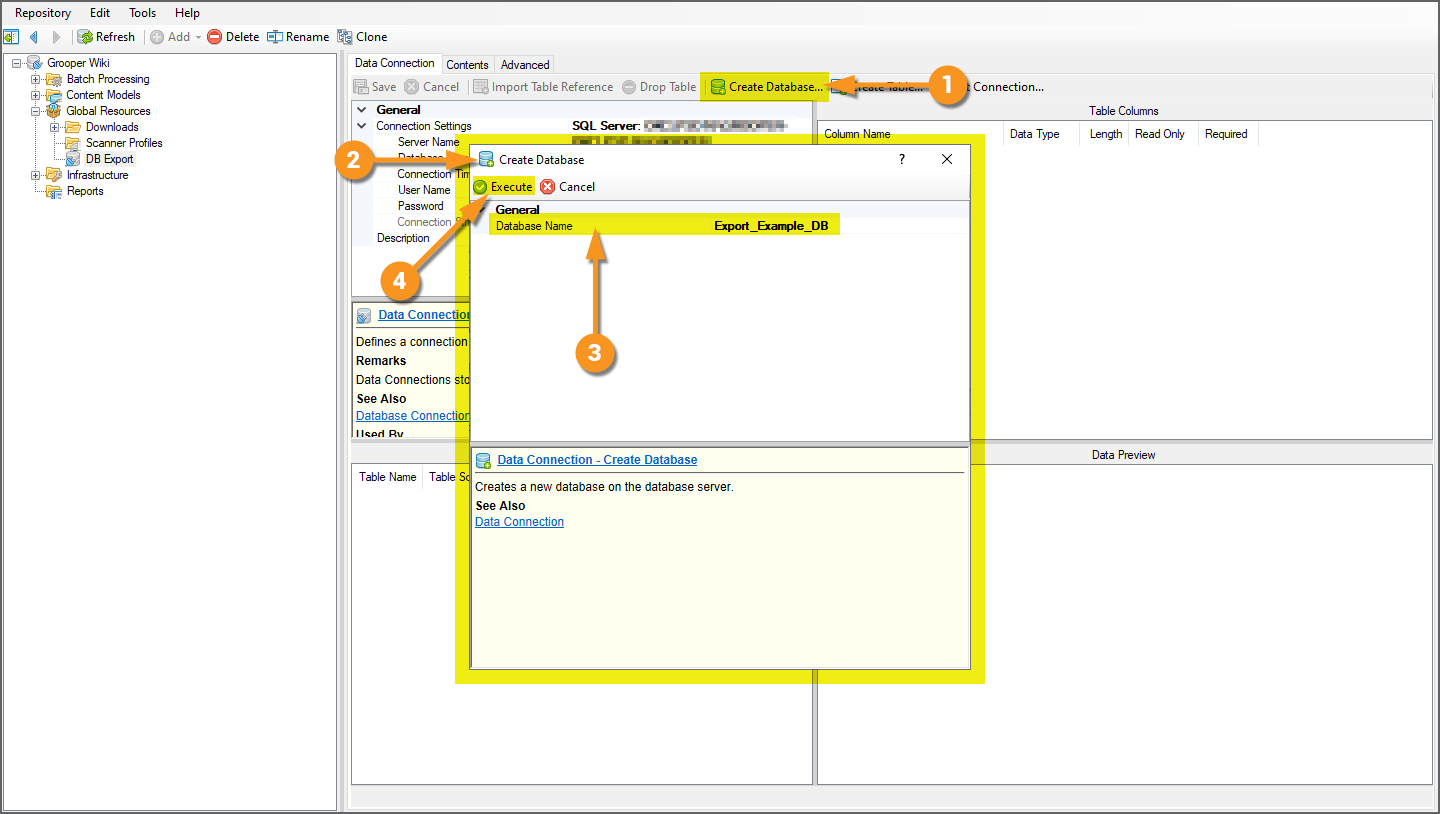

However, now that we're connected to the database, you can also create a brand new database!

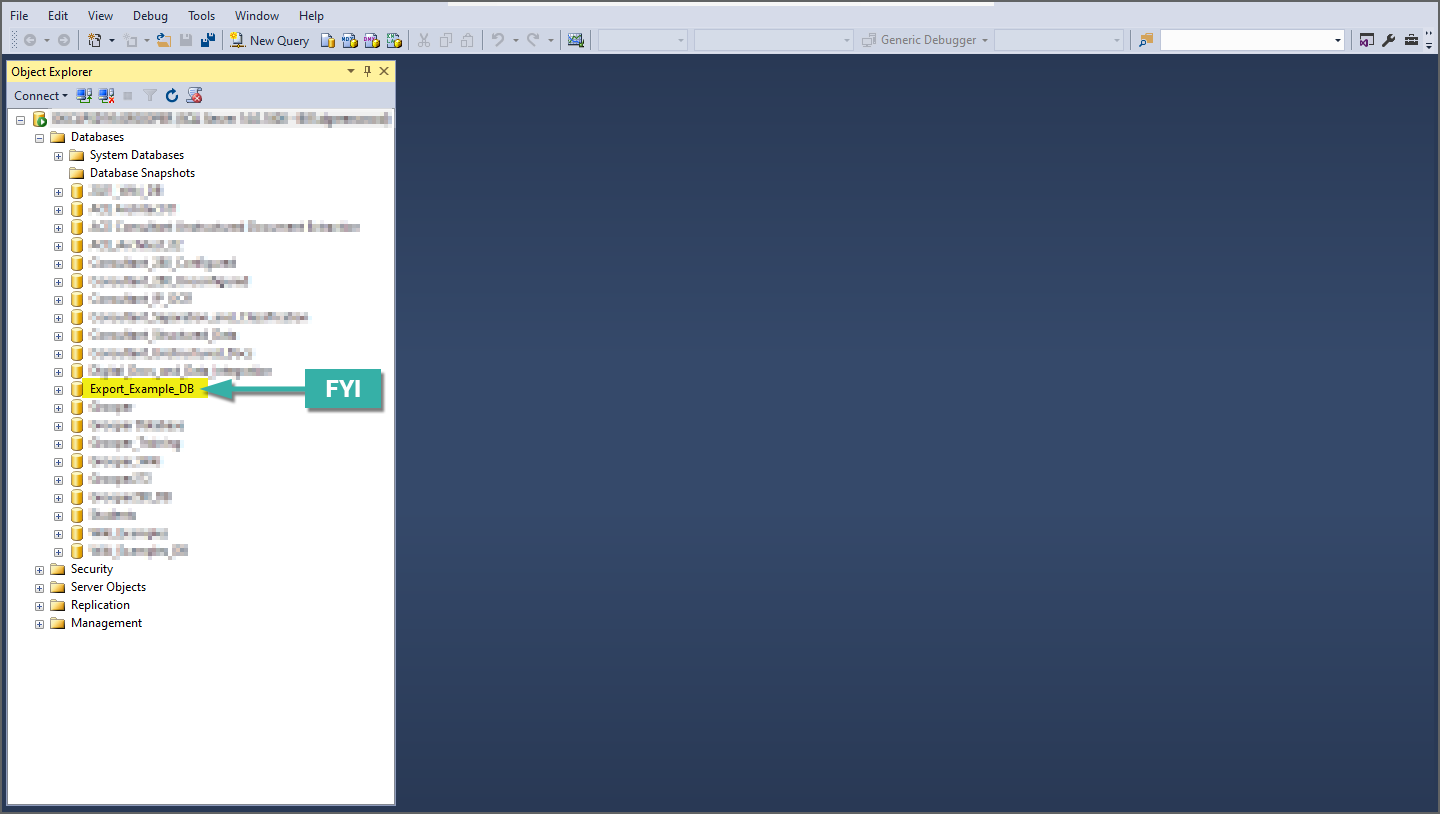

Create a New Database from the Data Connection

|

|||

|

|||

|

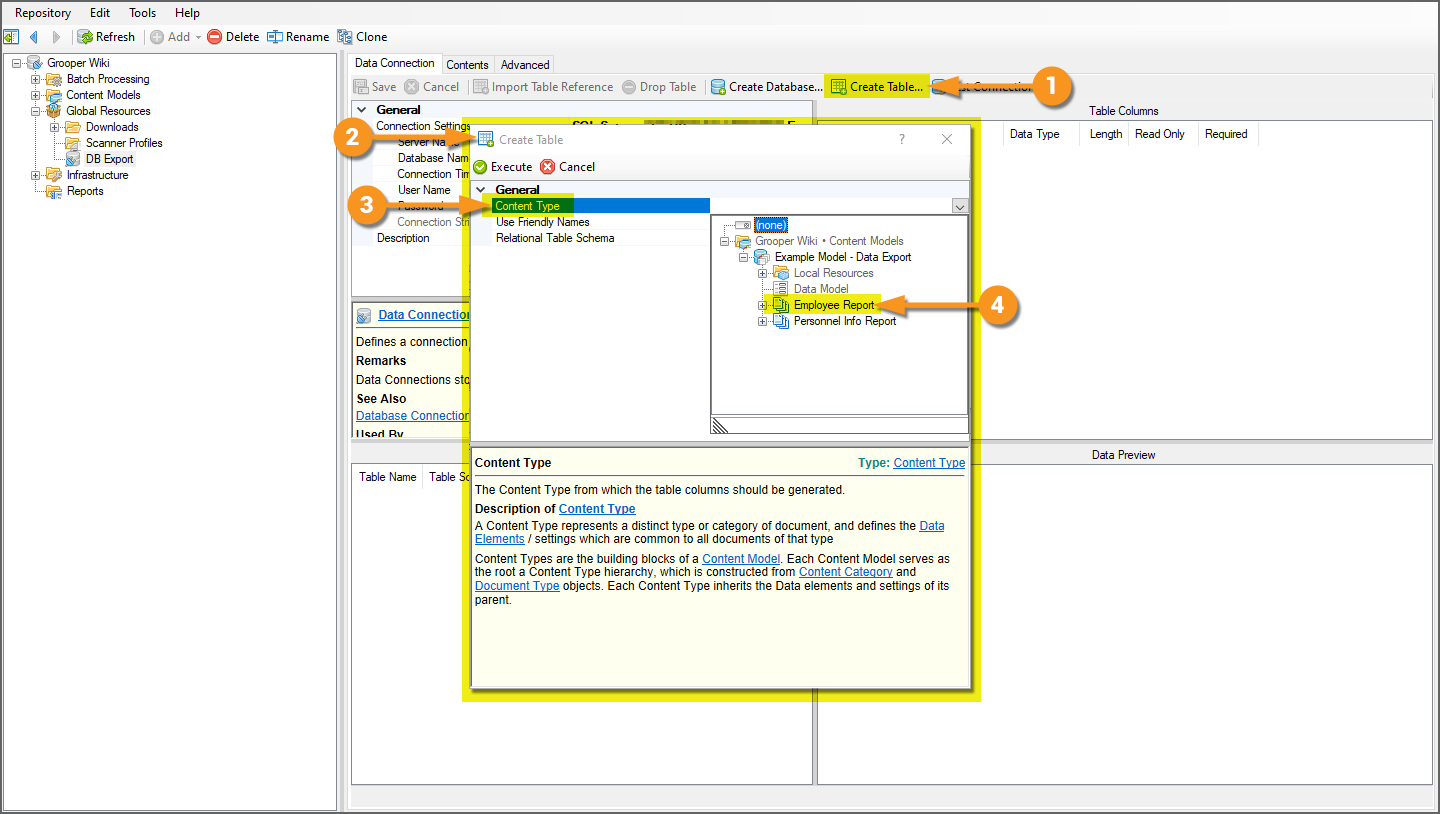

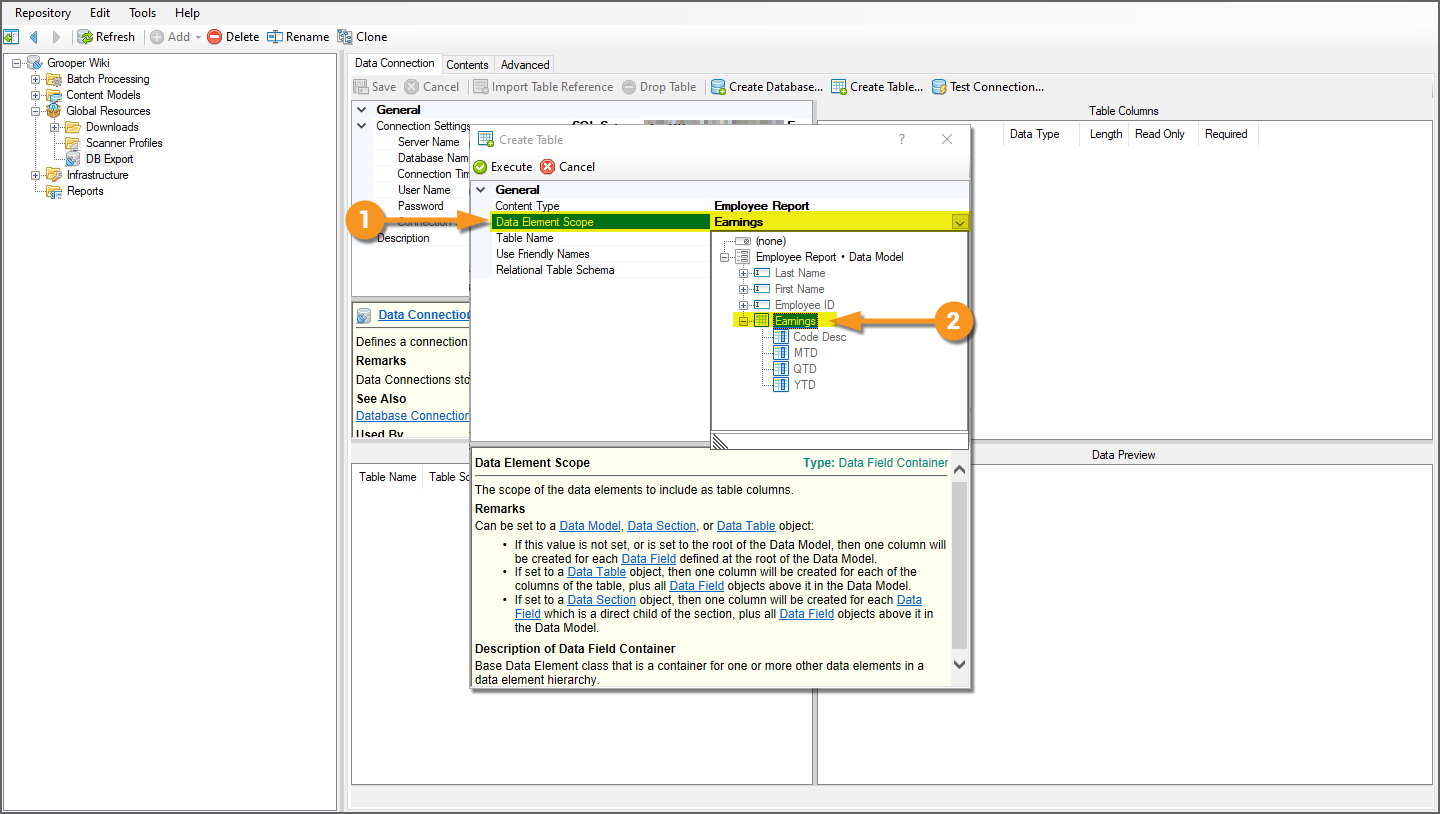

Create Database Tables from the Data Connection

We will end up creating three database tables by the end of this section:

- A table for the "Employee Report" Document Type's extracted Data Elements with its "one-to-many" related data elements flattened to a single table structure.

- A table for the "Personnel Info Report" Document Type's extracted "non-PII" Data Table.

- A table for the "Personnel Info Report" Document Type's extracted "PII" Data Table.

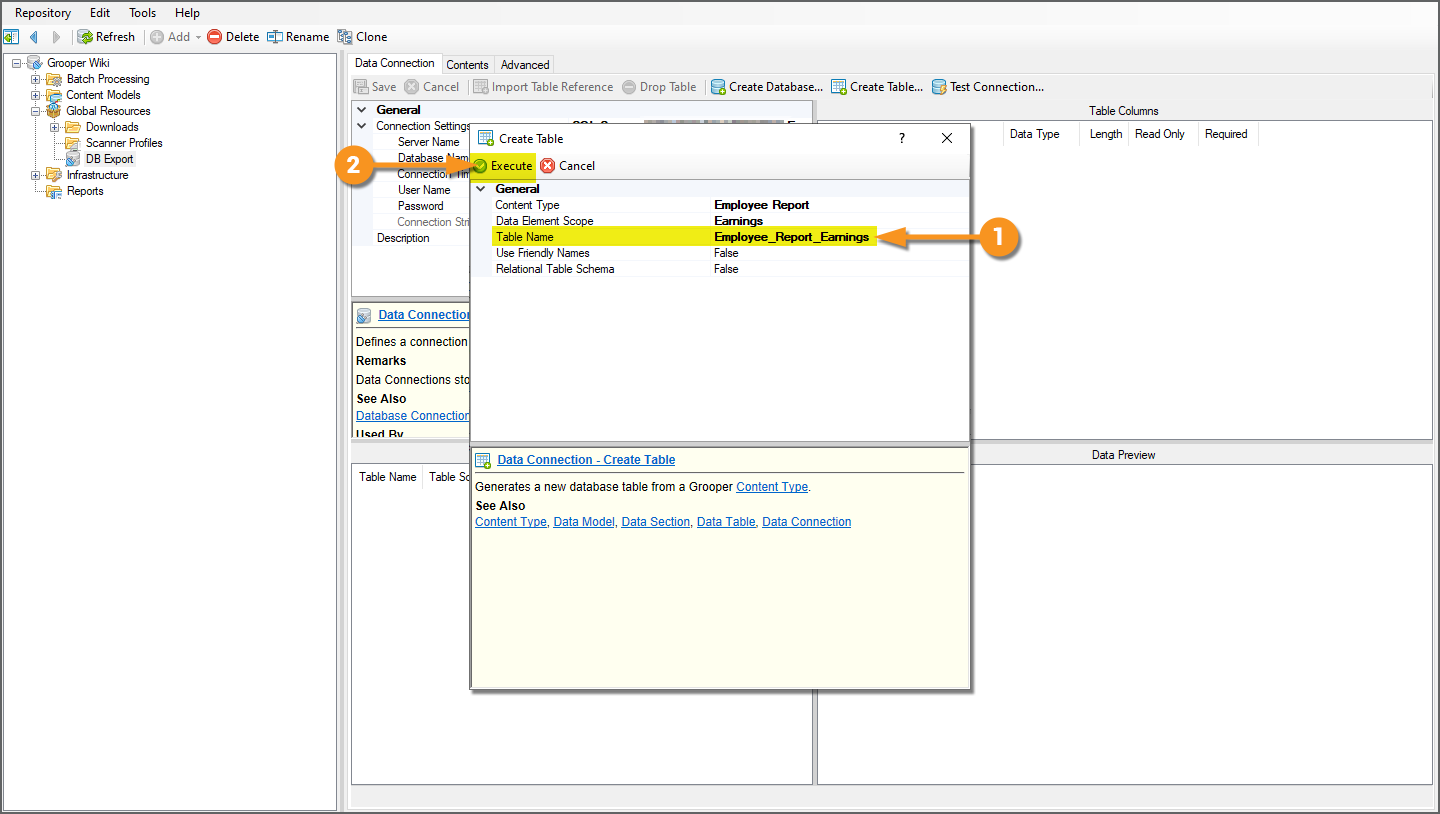

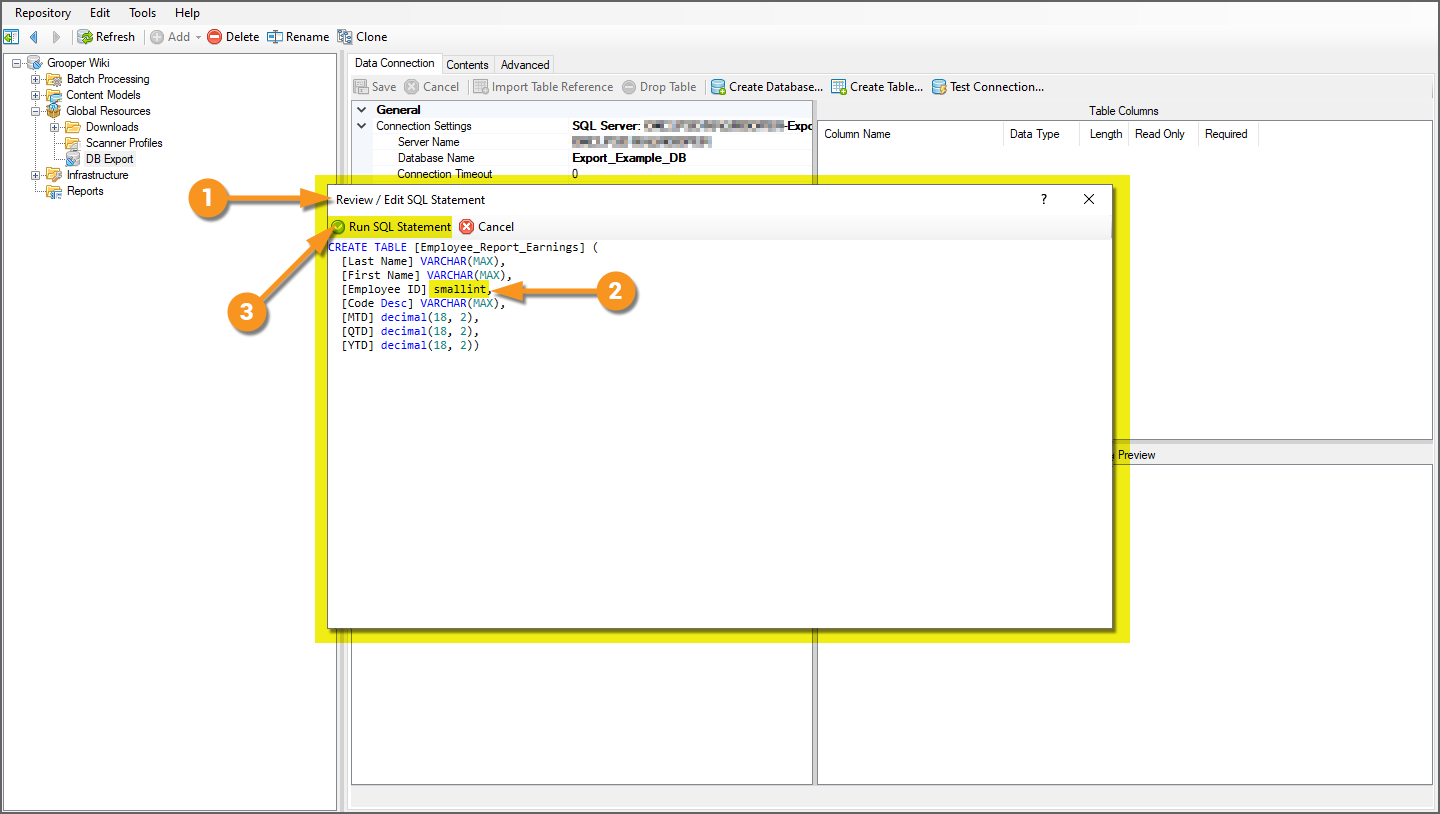

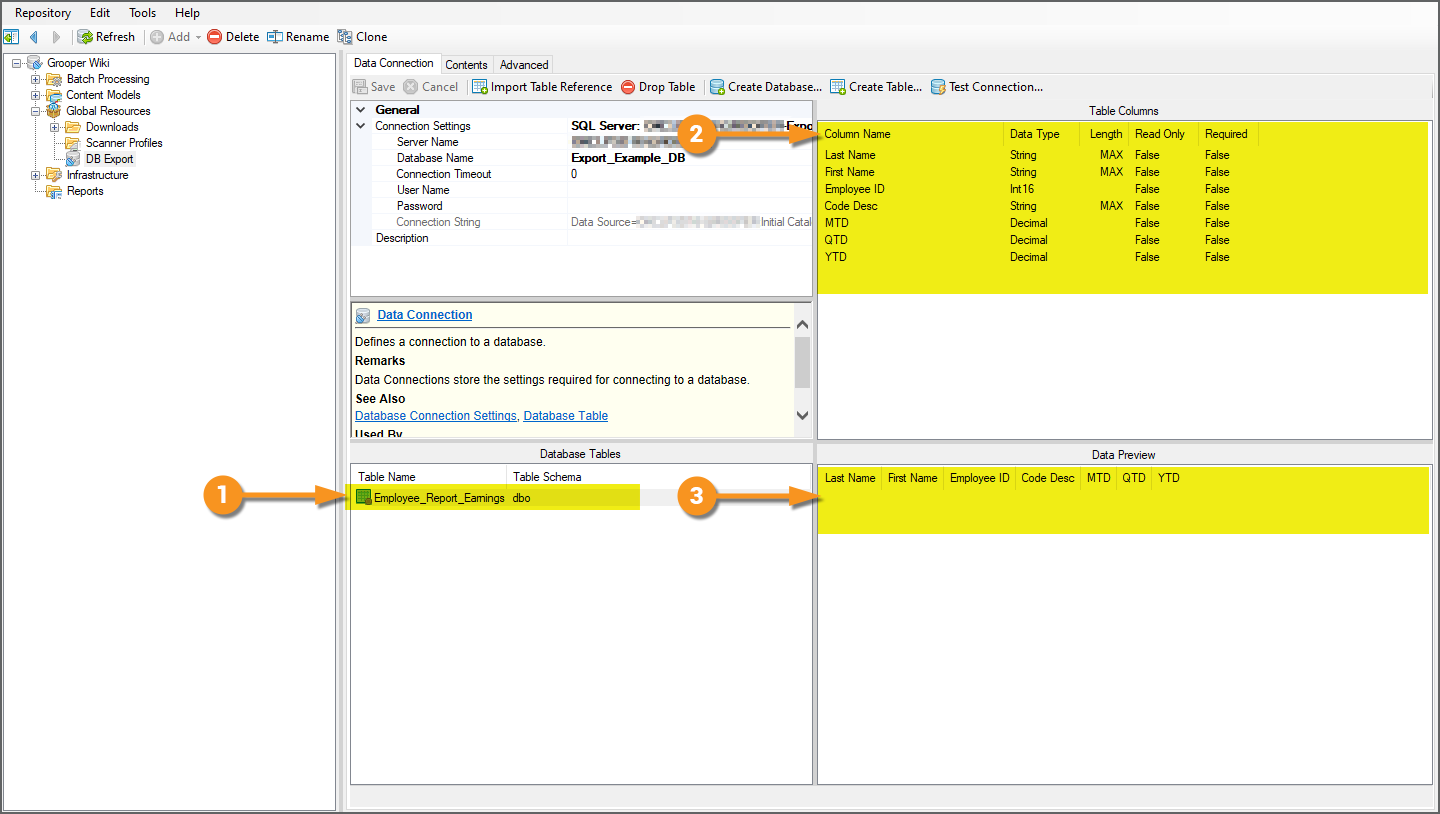

Table 1: Employee Report Data

|

|

|

|

|

|

|

|

|

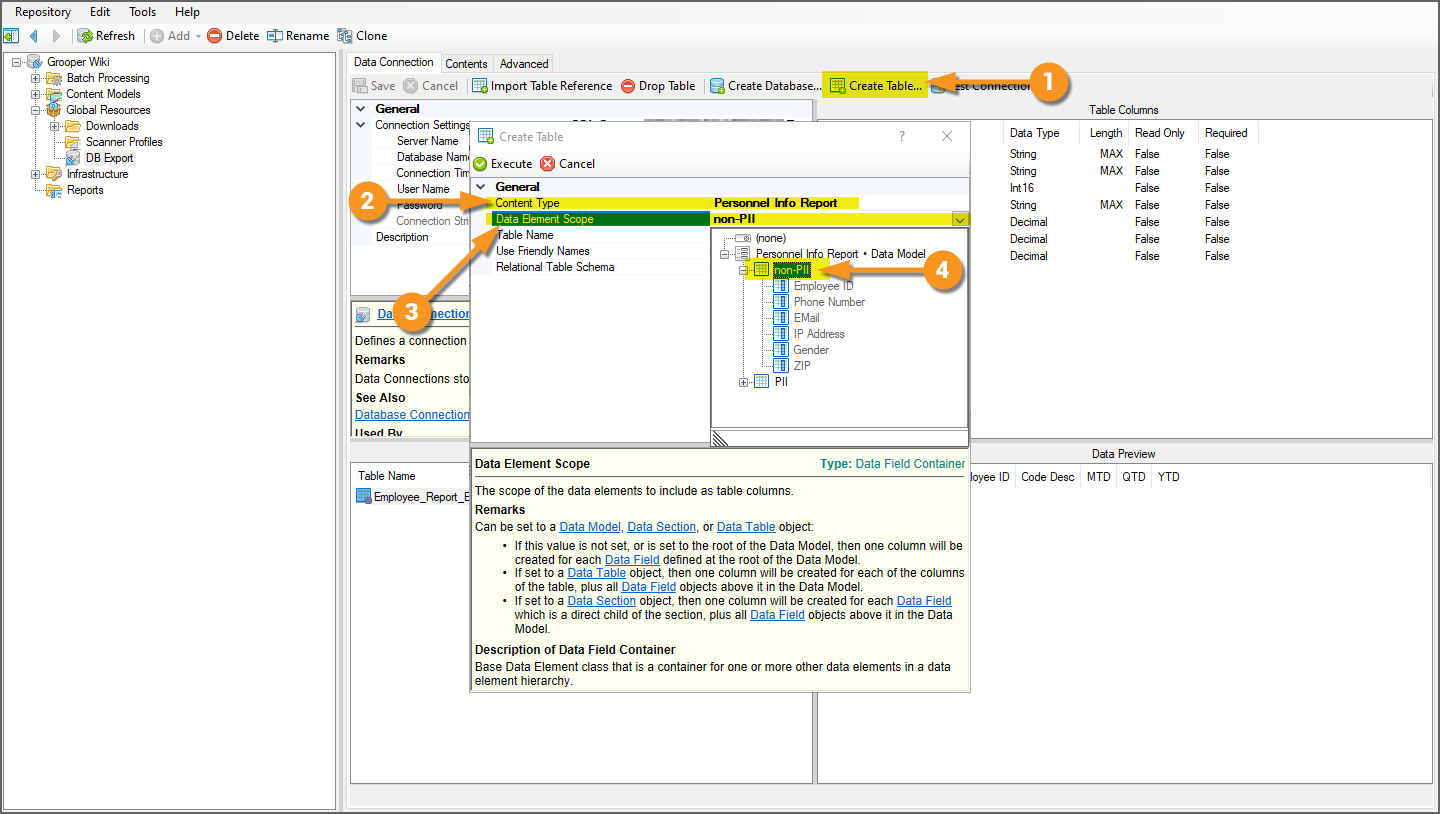

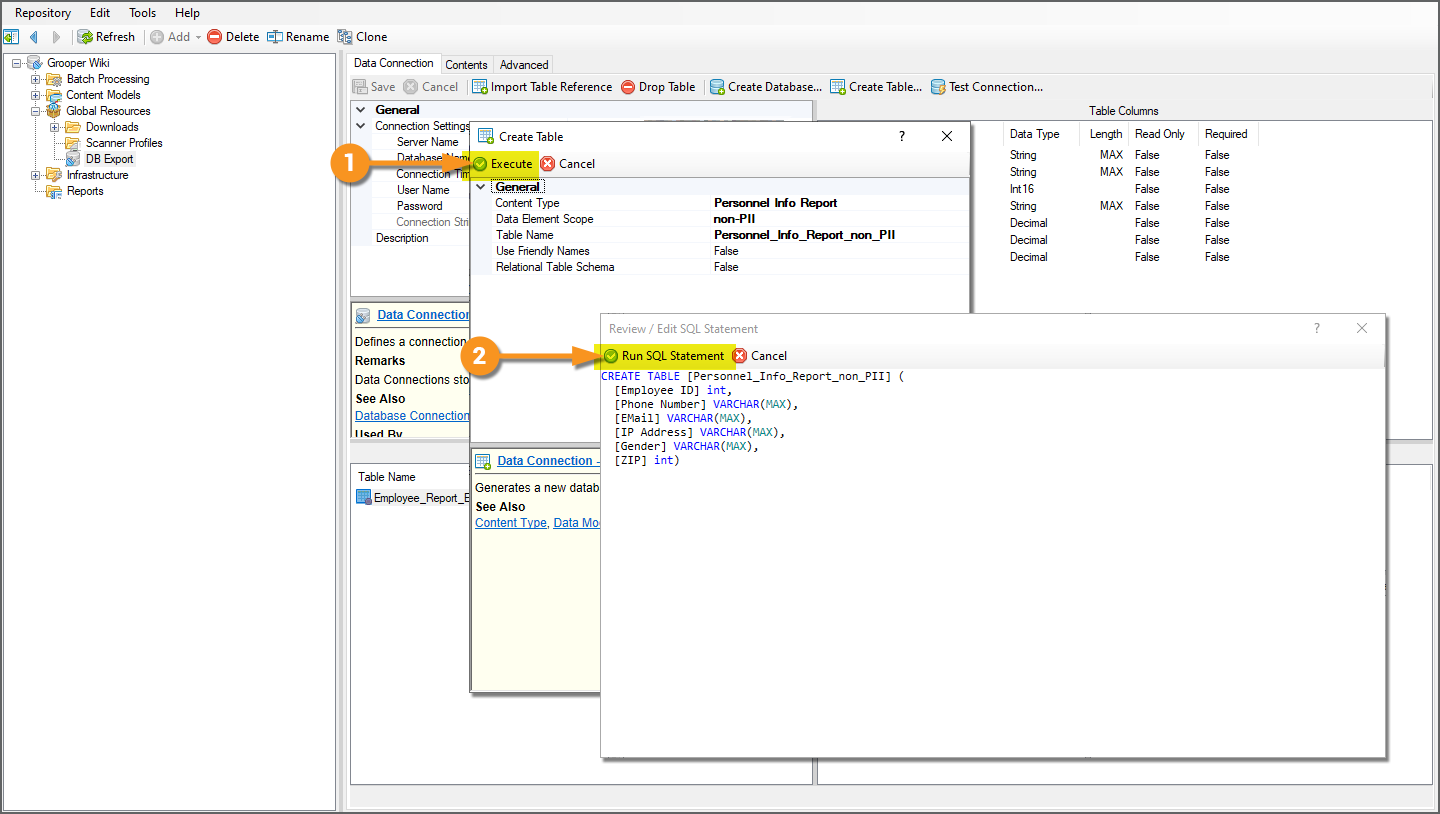

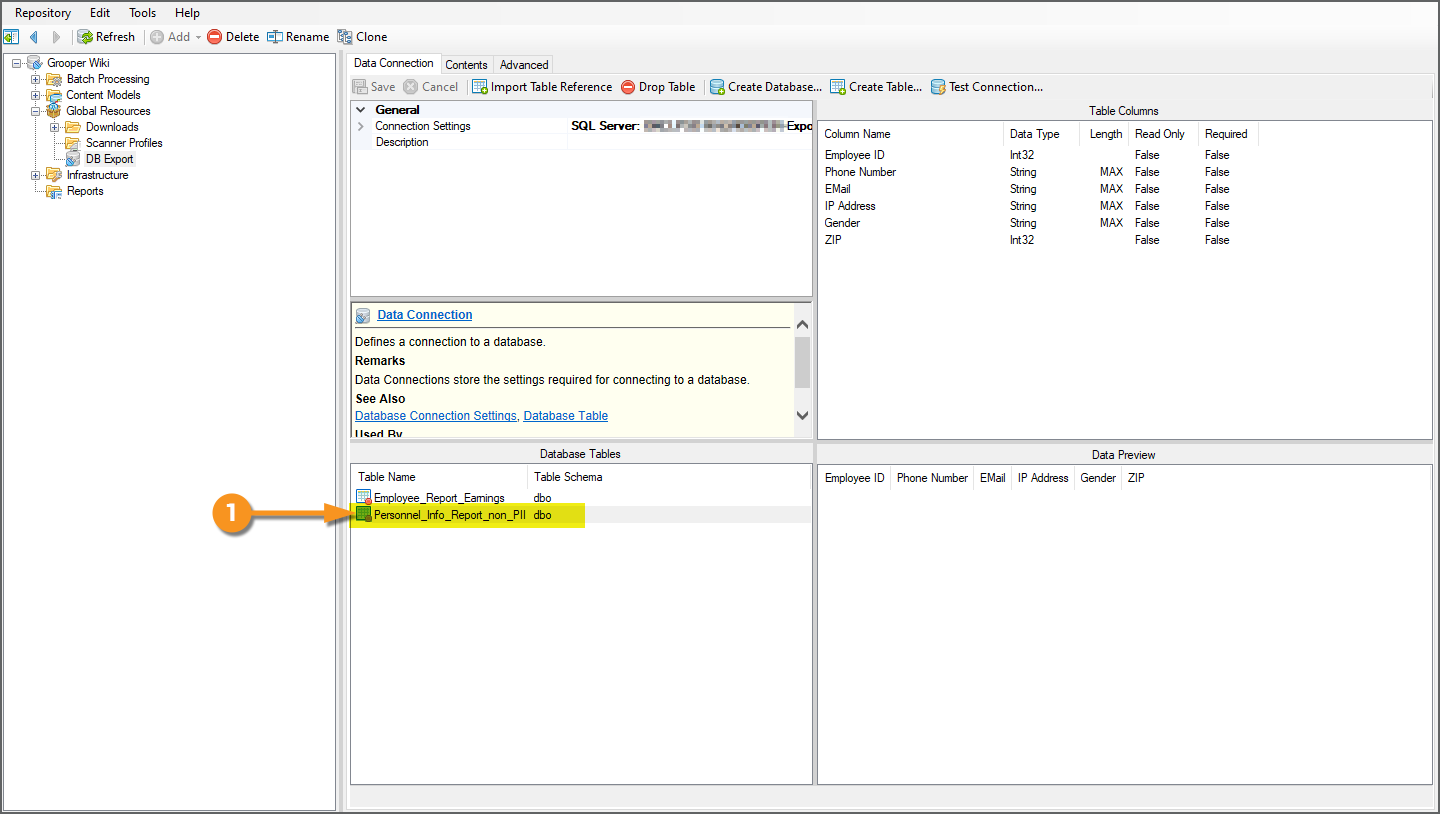

Table 2: Personnel Info Report non-PII Data

For the other two tables, it's mostly a repeat of the same steps, just taking care to select the appropriate Content Type and Data Element scope.

|

|

|

|

|

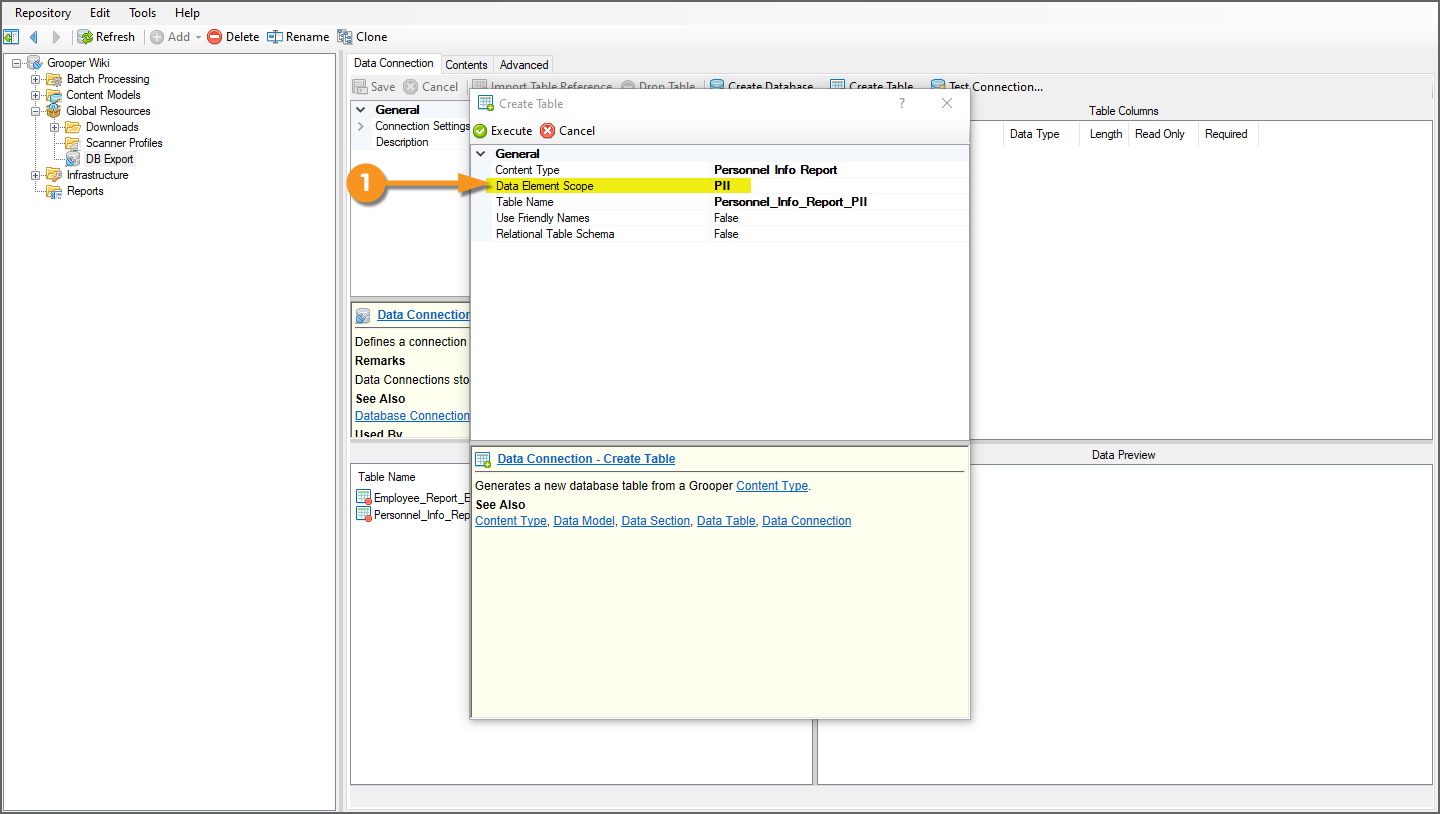

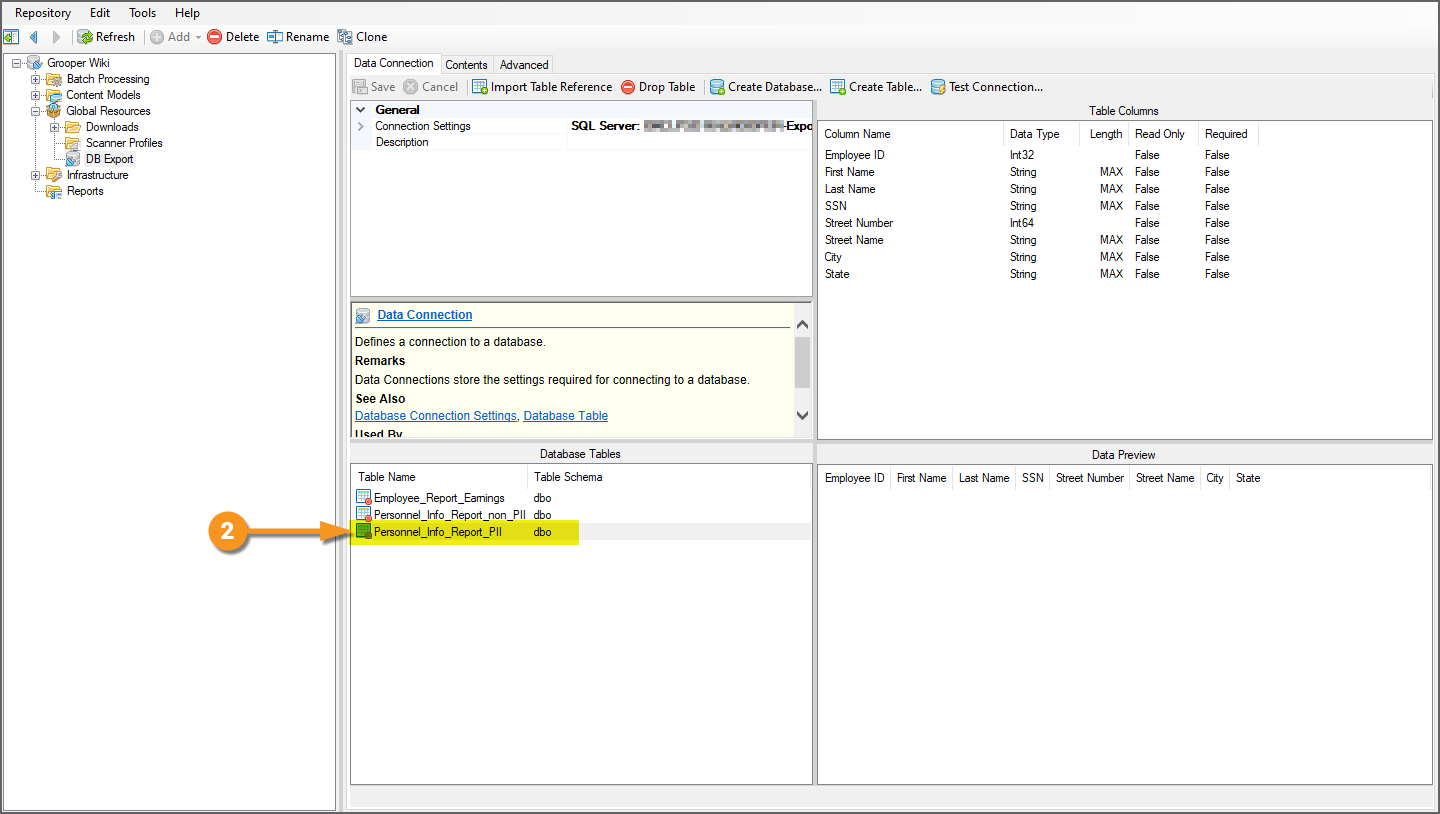

Table 3: Personnel Info Report PII Data

|

This database table can be created with the exact same steps as described above with just one key difference:

|

|

|

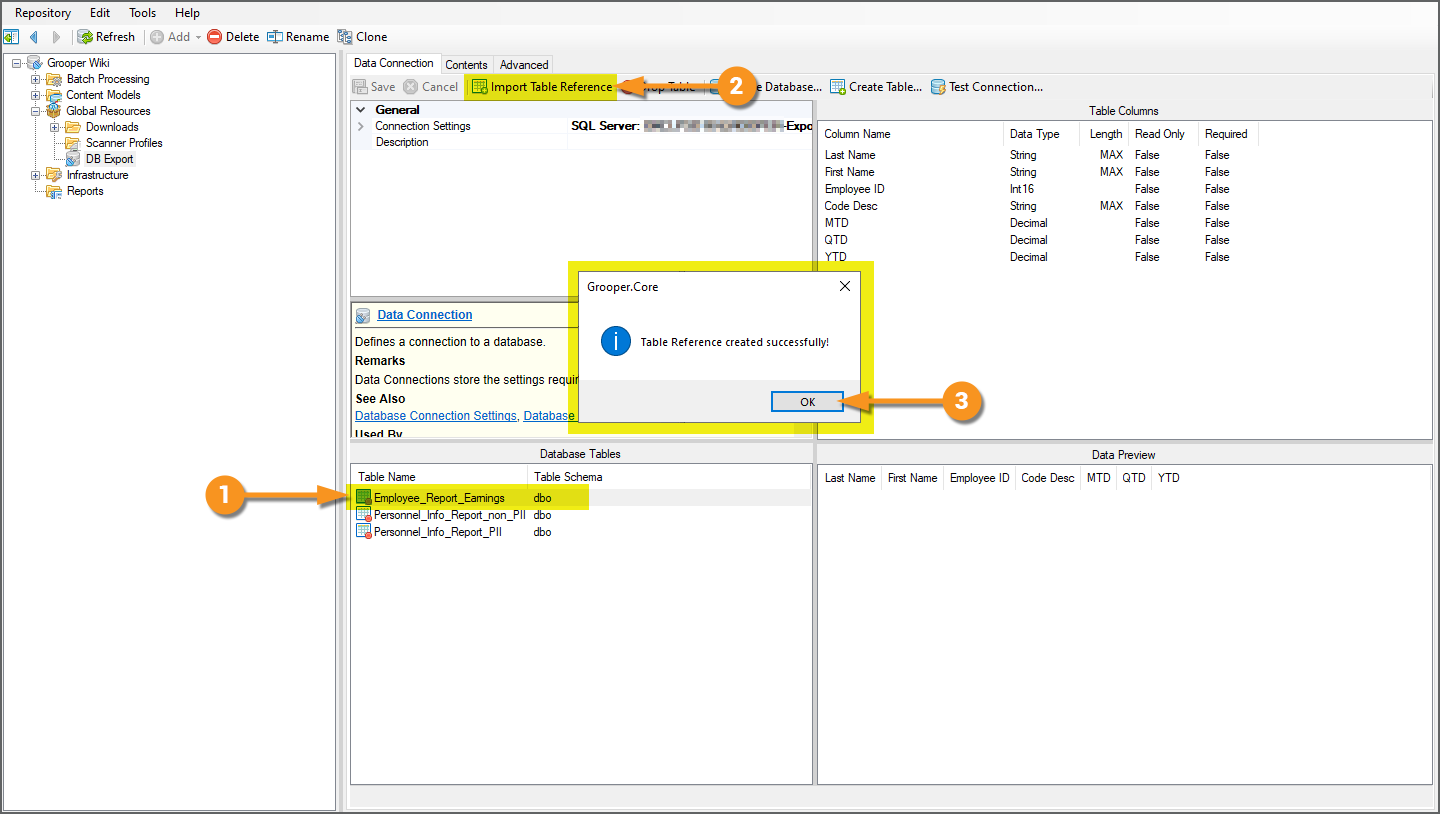

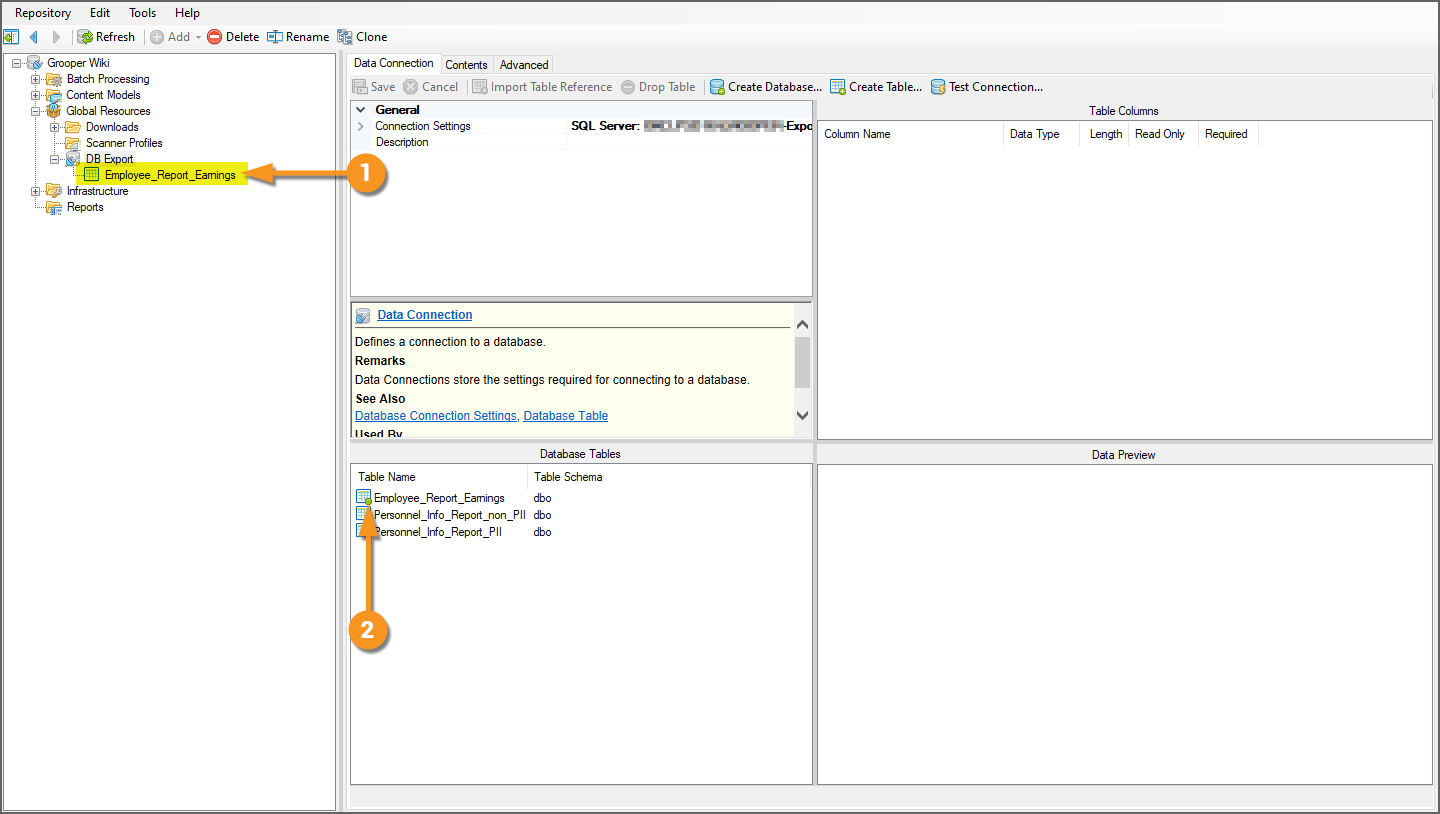

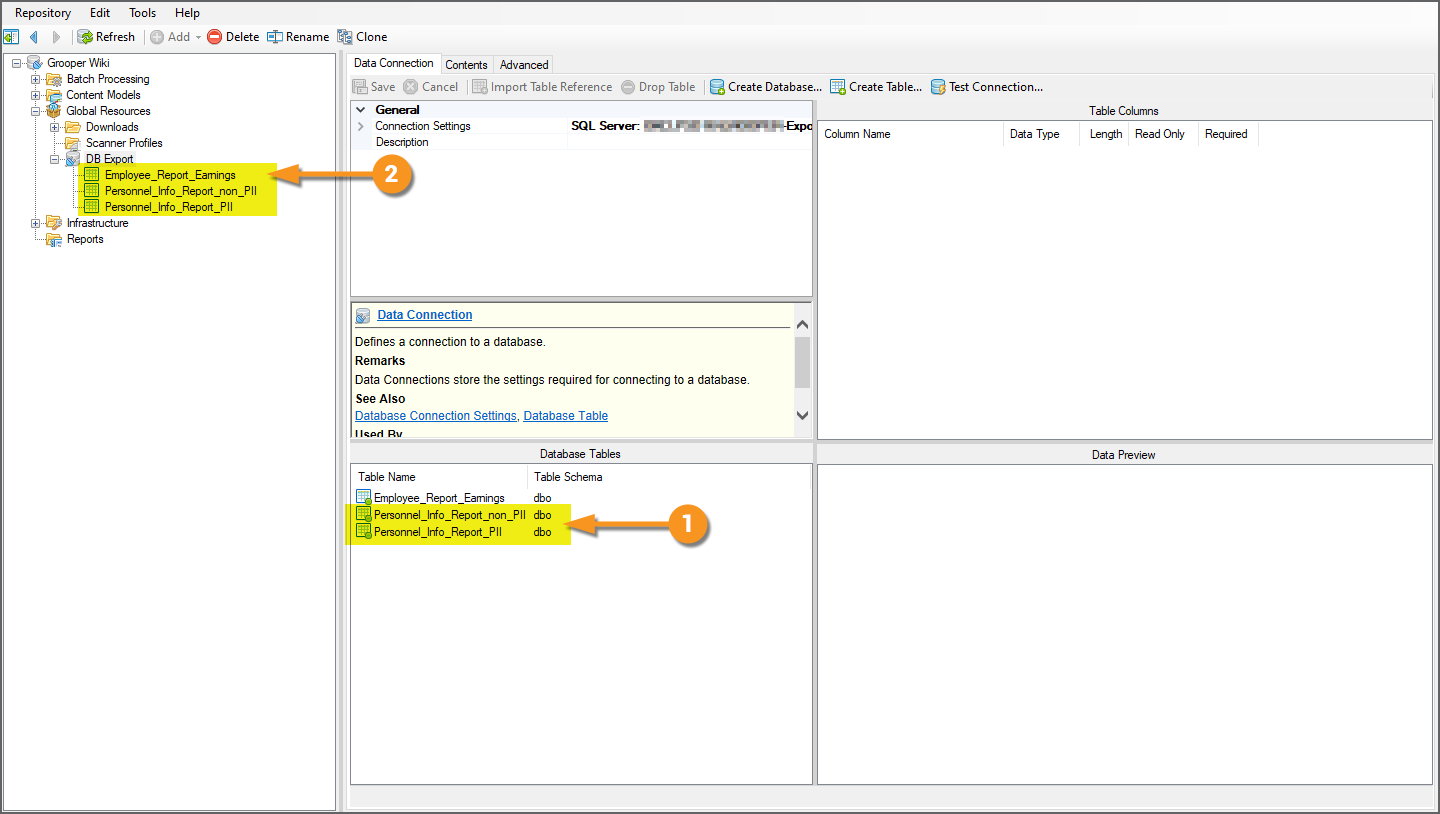

Import Table References

Before we can export data to these newly created tables, we must import their table references. This part is critical in order for Grooper to interact with a database table, whether to export data using Data Export or perform a Database Lookup operation. Importing the table references will give Grooper an object it can reference when mapping data between Grooper and the database table, ultimately allowing for data to flow from extracted Batch Folders to the database table.

|

Importing a table reference is as simple as a click of a button.

|

|

|

|

|

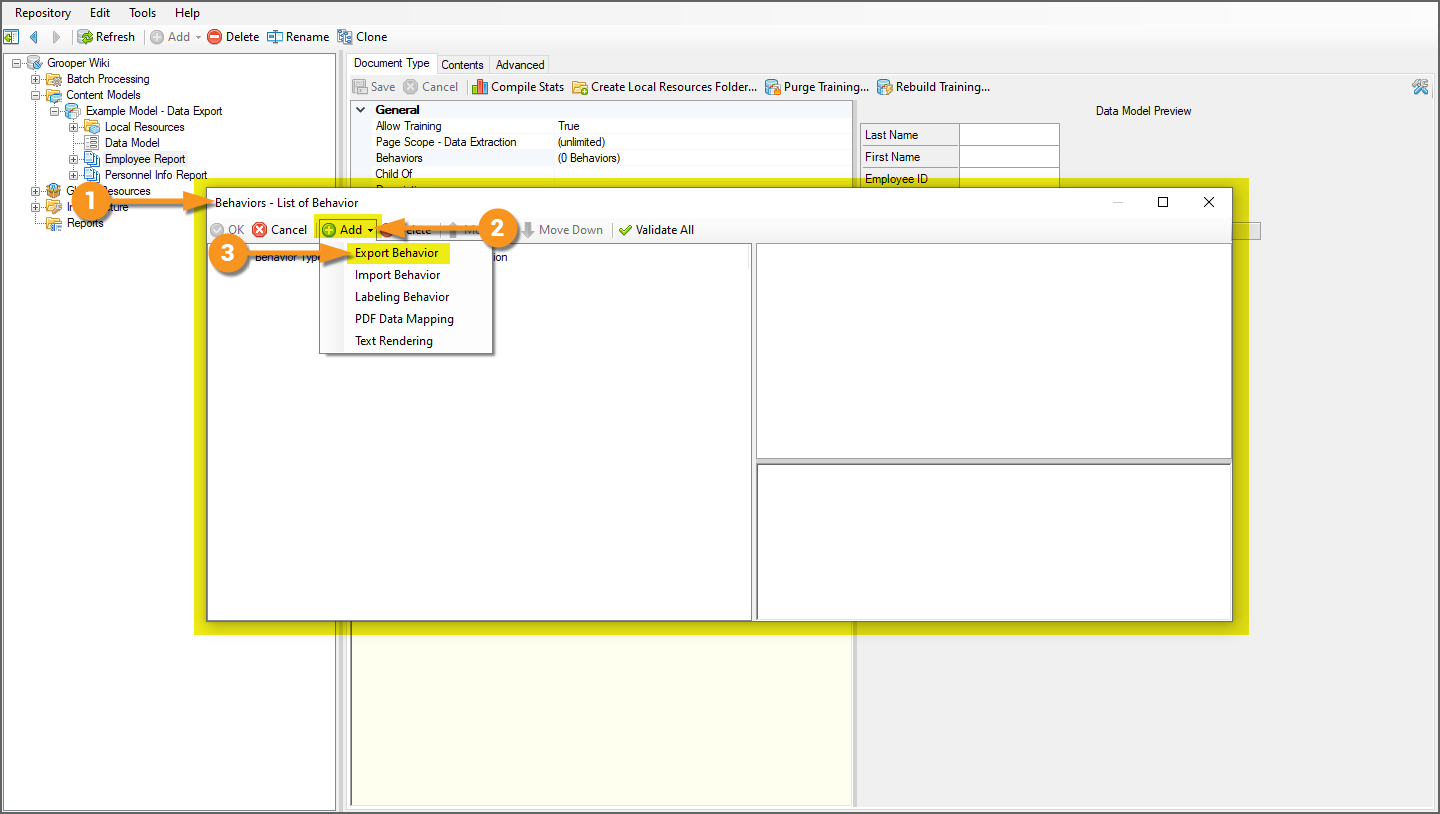

Configuring Export Behaviors for Data Export

Data Export is one of the Export Type options when configuring an Export Behavior. Export Behaviors control what document content for a Batch Folder is exported where, according to its classified Document Type. As such, in order to configure a Data Export, you must first configure an Export Behavior for a Content Type (a Content Model or its child Content Categories or Document Types).

In our case, we want to perform two different kinds of export, depending on the document Batch Folder's classified Document Type.

- For the "Employee Report" Document Type, we want to export its collected Data Elements to our first database table.

- For the "Personnel Info Report" Document Type, we want to export its collected Data Elements (which are collected using an entirely different Data Model and have an entirely different data structure) to our second and third database table.

The basic idea behind Export Behaviors is, based on kind of document you're looking at, you can tell Grooper how you want to export it.

Export Behaviors can be configured in one of two ways:

- Using the Behaviors property of a Content Type object

- A Content Model

- A Content Category

- Or, a Document Type

- As part of the Export activity's property configuration

When the Export activity processes each Batch Folder it will execute the Export Behaviors, according to their configuration settings.

| FYI | In general, users will choose to configure Export Behaviors either on the Content Type object it applies to or local to the Export activity step in a Batch Process.

This may just boil down to personal preference. There is no functional difference between an Export Behavior configured on a Content Type or an Export Behavior configured on an Export Step, upon completing their configuration. In either case, they will accomplish the same goal. However, it is possible to configure Export Behaviors, in both locations. If you do this, you will need to understand the Export activity's Shared Behavior Mode property options. This will effect if and how two Export Behaviors configured for the same Content Type will execute. Please visit the Export article for more information. |

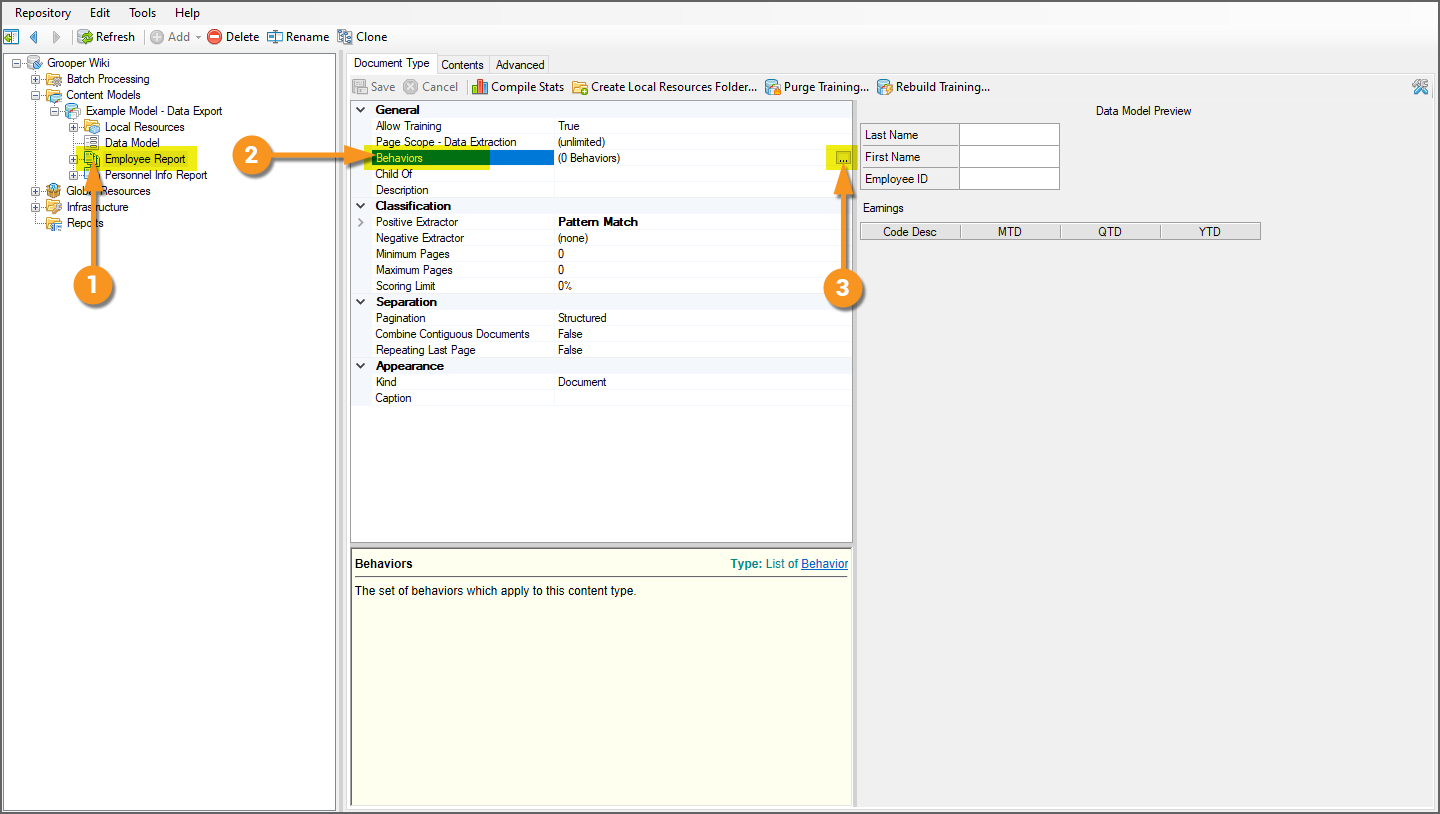

Add an Export Behavior

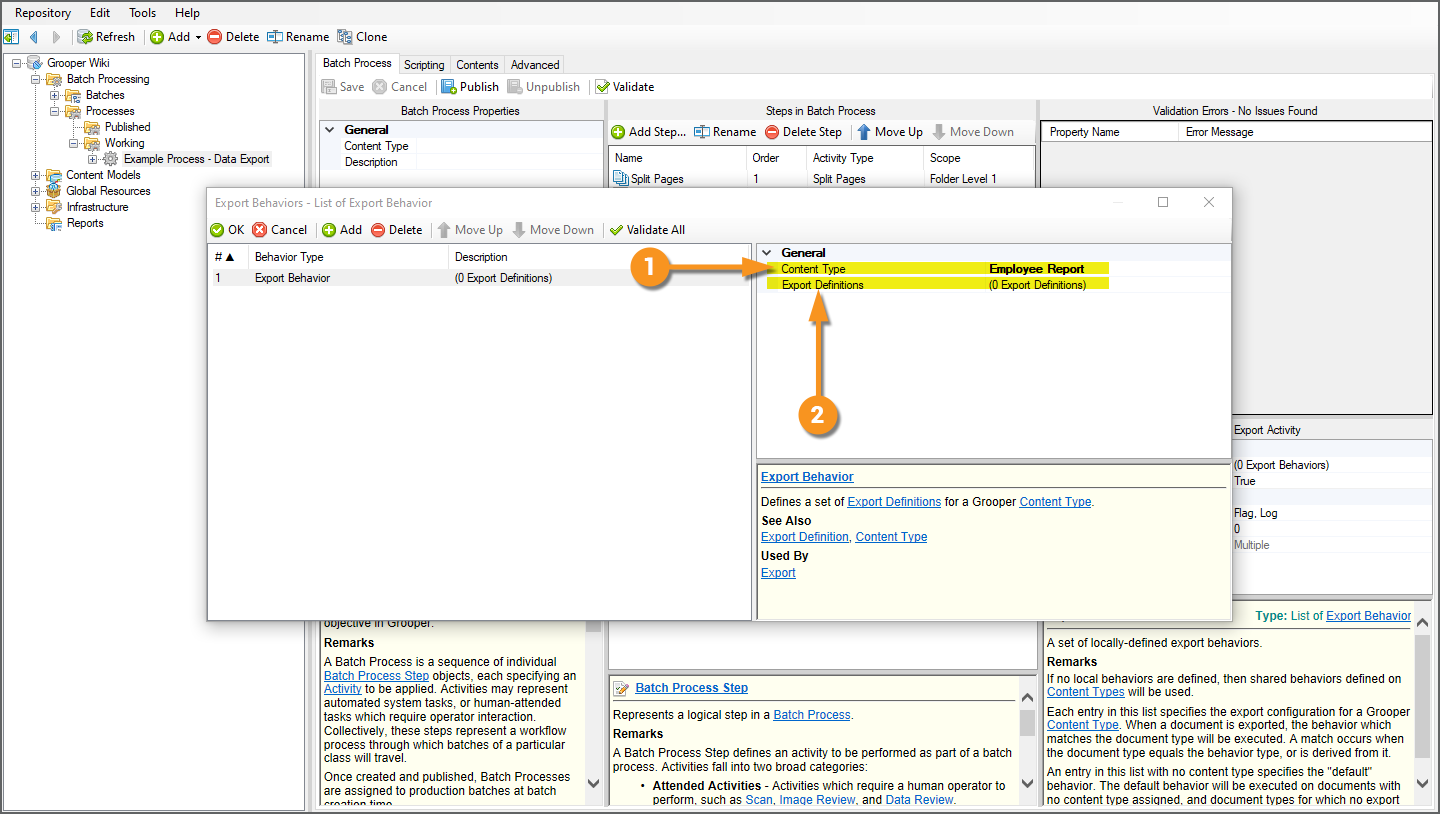

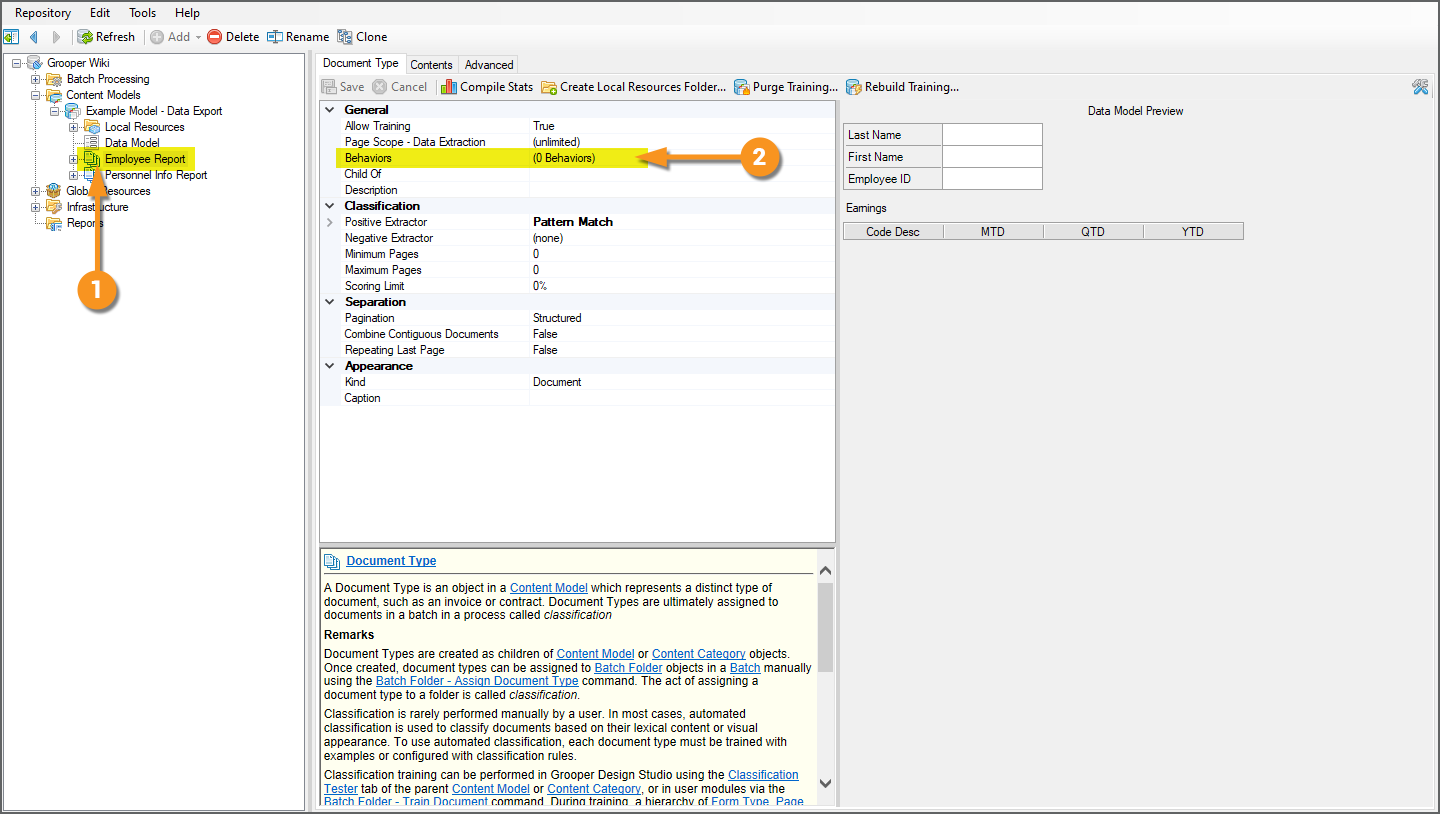

Option 1: Content Type Export Behaviors

|

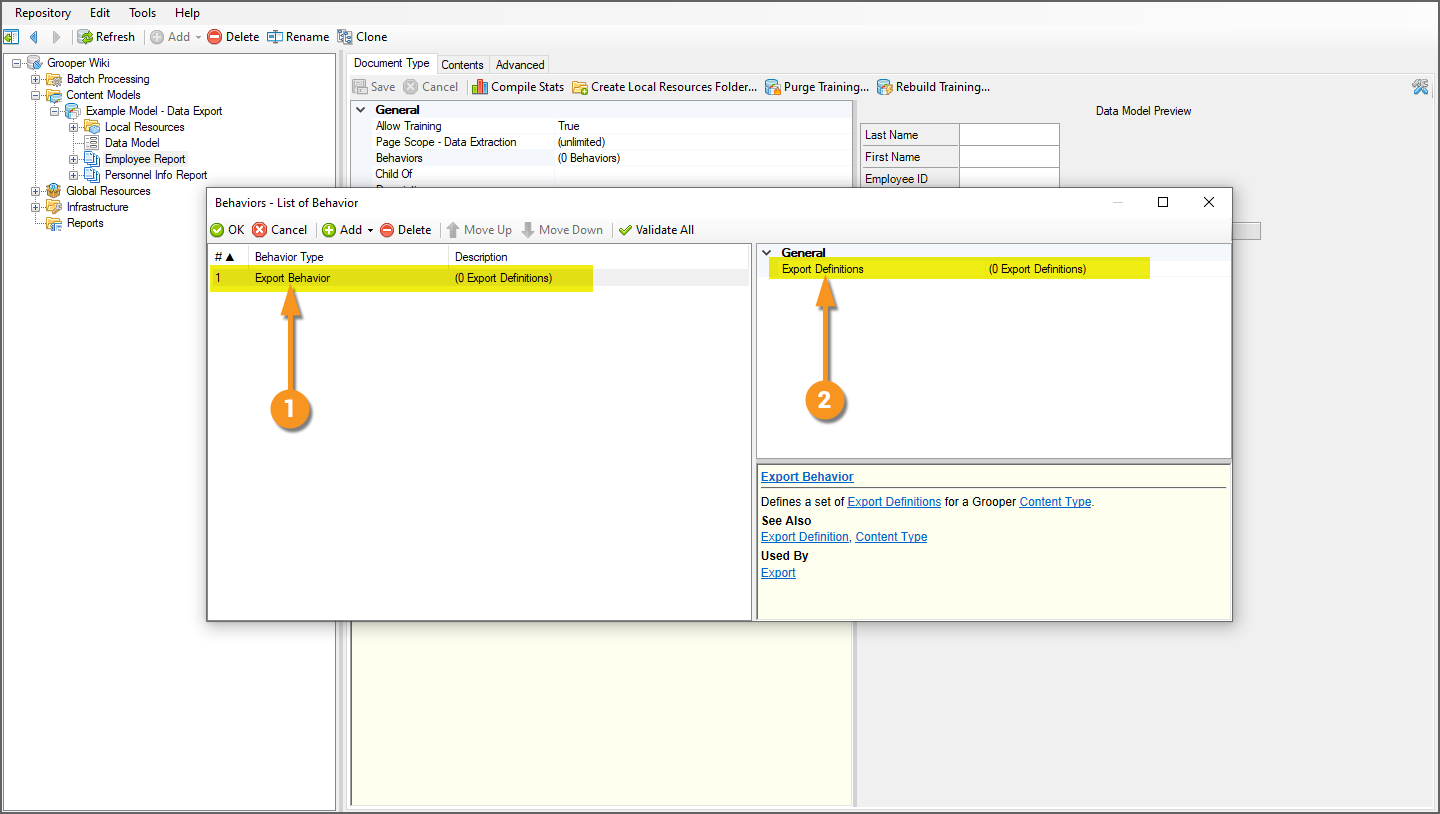

An Export Behavior configuration can be added to any Content Type object (i.e. Content Models, Content Categories, and Document Types) using its Behaviors property. Doing so will control how a Document Type "behaves" upon export.

|

|

|

|

|

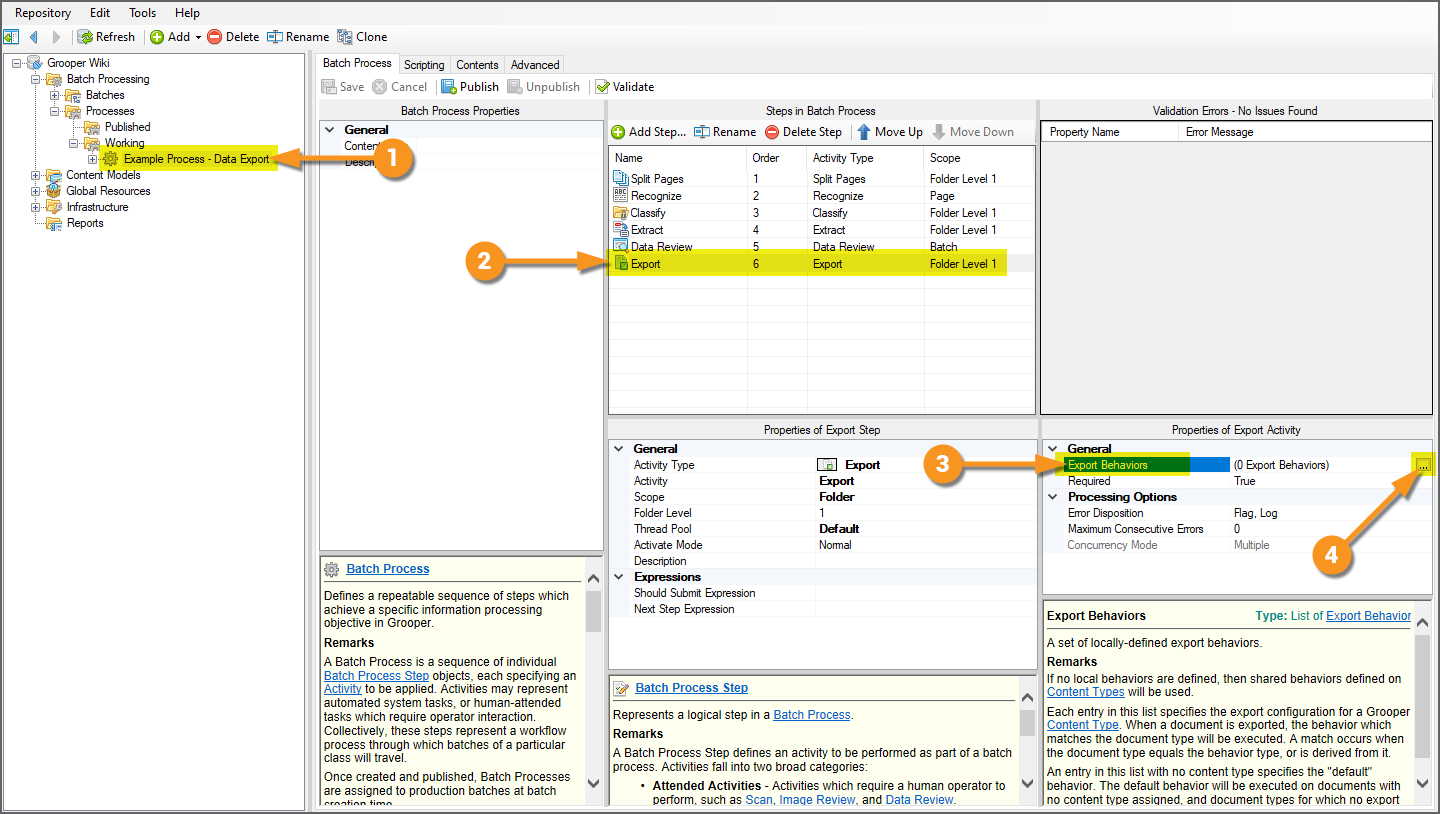

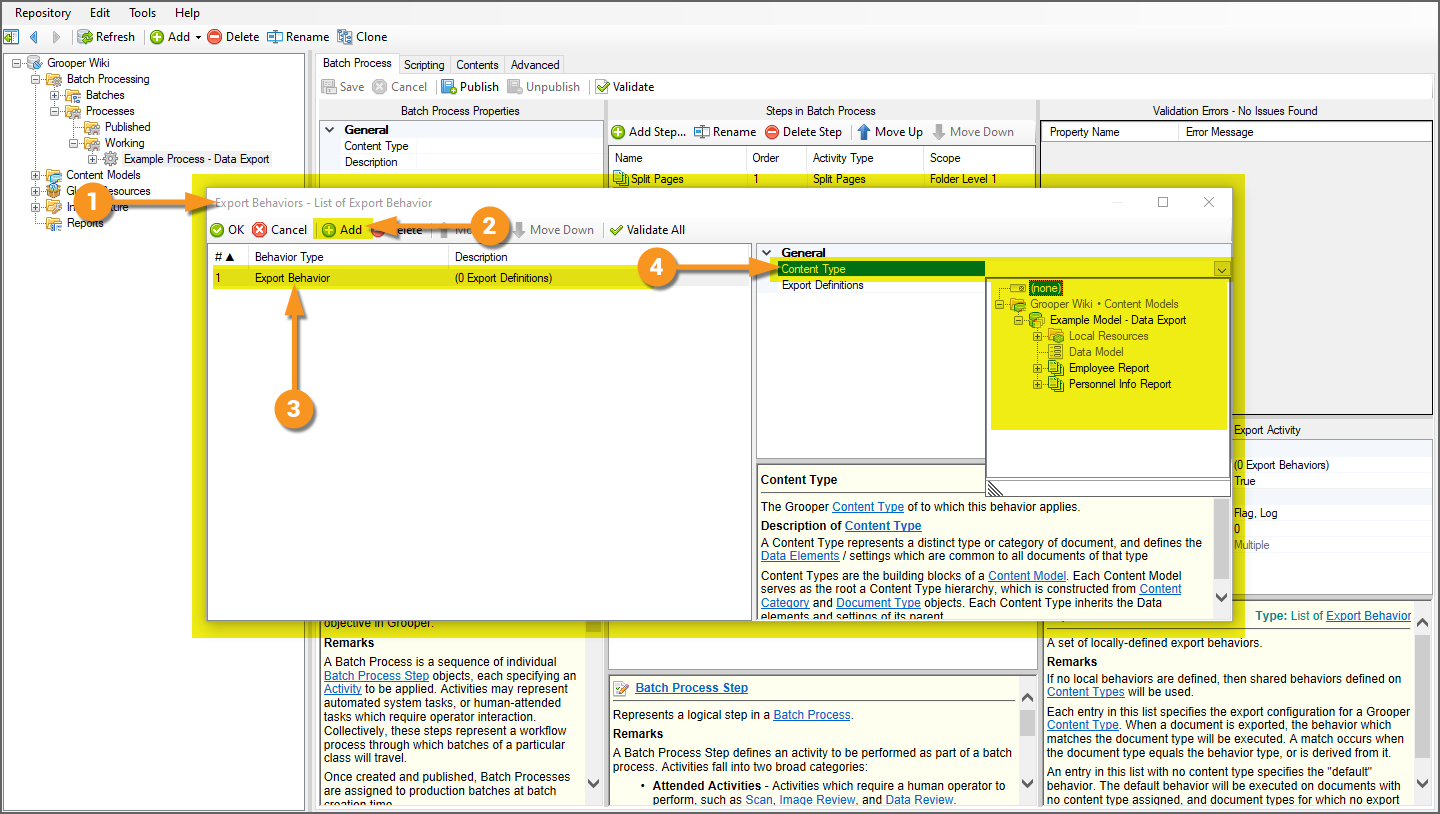

Option 2: Export Activity Export Behaviors

|

Export Behaviors can also be configured as part of the Export activity's configuration. These are called "local" Export Behaviors. They are local to the Export activity step in the Batch Process. PLEASE NOTE that the following instructions are only to be followed if you choose to set Export Behaviors on the Batch Process Step instead of on the Content Type itself. You do not need to follow these instructions if you choose to set the Export Behaviors on the Content Types, which is how this article will continue. It's worth understanding that if you set Export Behaviors on Content Types themselves, you as a result do not need to choose which Content Type the Export Behavior will apply to. However, when setting Export Behaviors on Batch Process Steps, the first thing you configure after adding the Export Behavior is which Content Type the behavior should apply to. You can set multiple Export Behaviors that affect multiple Content Types on the Batch Process Step, or you can configure individual Export Behaviors on individual Content Types. Again, in the case of this article, we will be setting the Export Behaviors on each Content Type, instead of on the Batch Process Step.

|

|

|

|

|

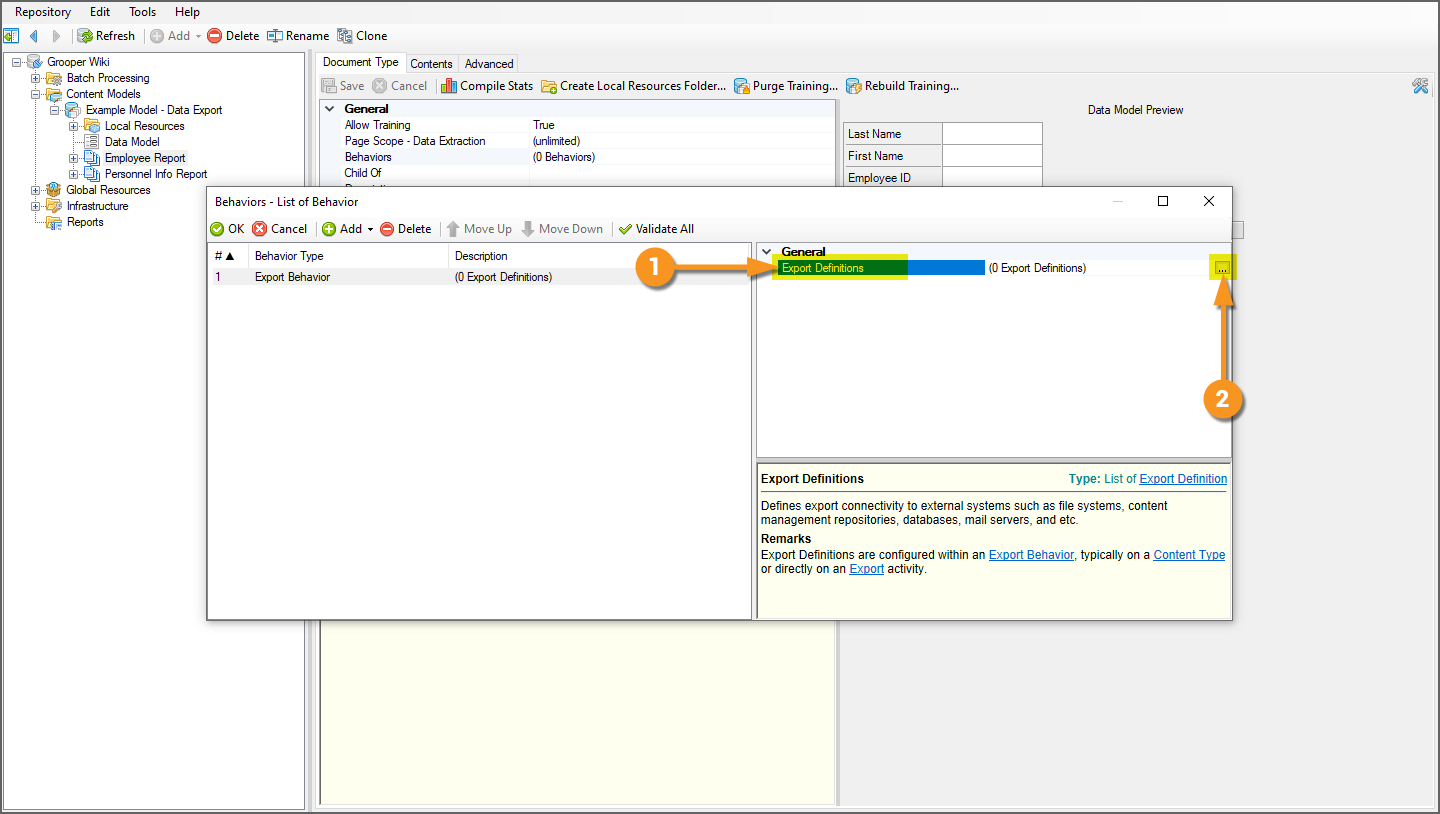

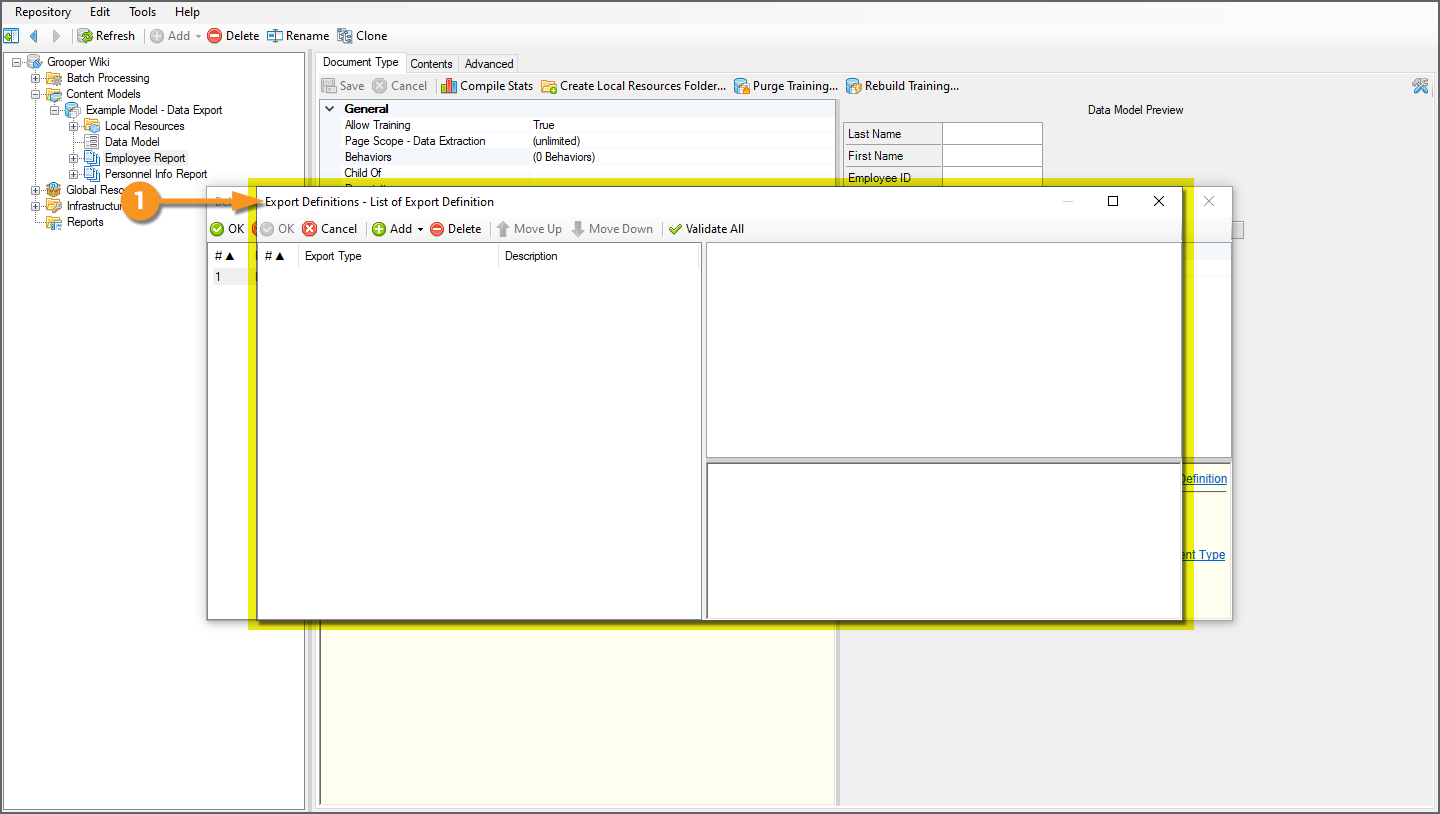

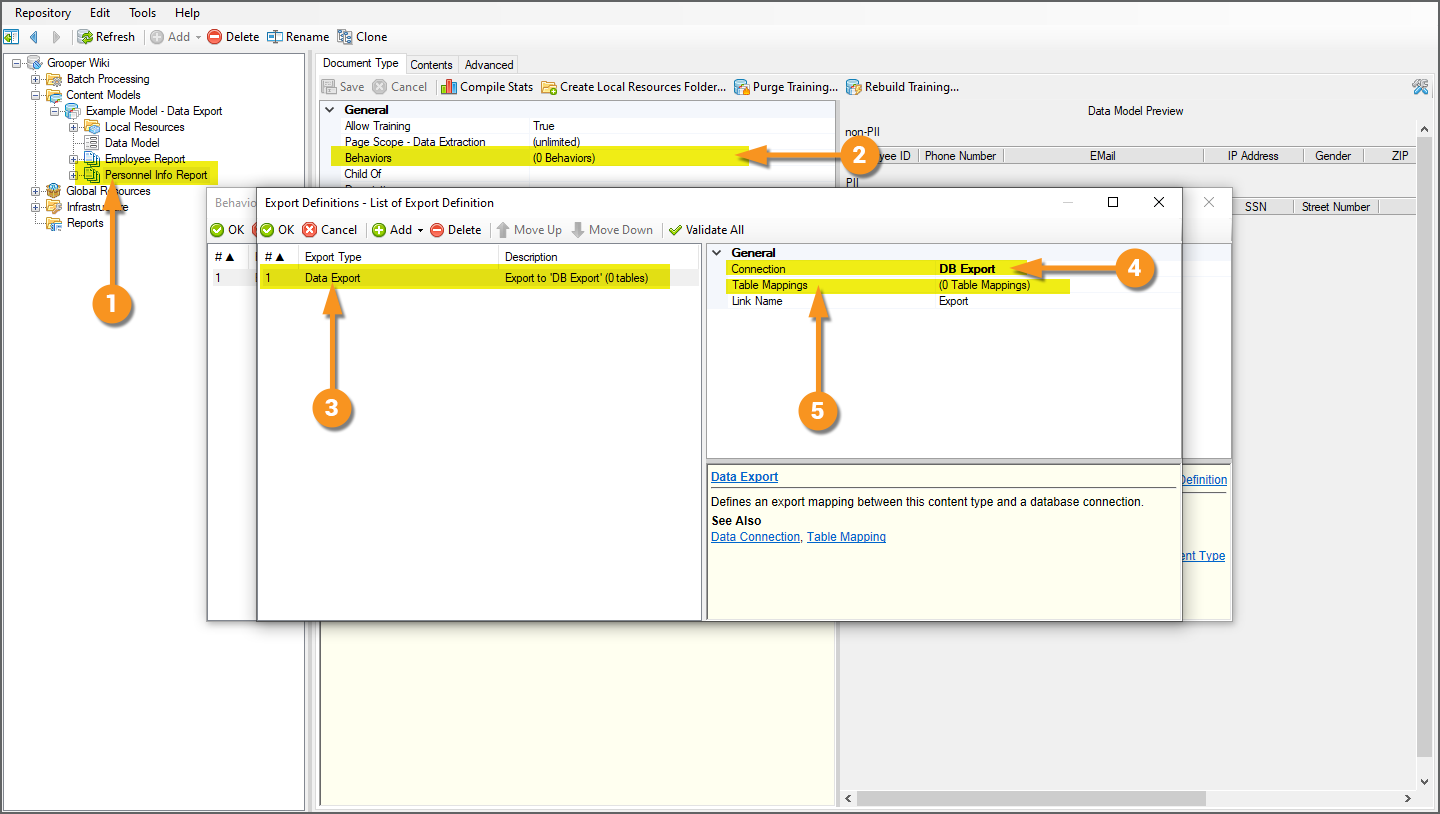

Add an Export Definition

|

|

|

Regardless if you choose to configure the Export Behavior on a Content Type object, or if you configure it local to to Export activity's configuration, your next step is adding an Export Definition.

|

|

|

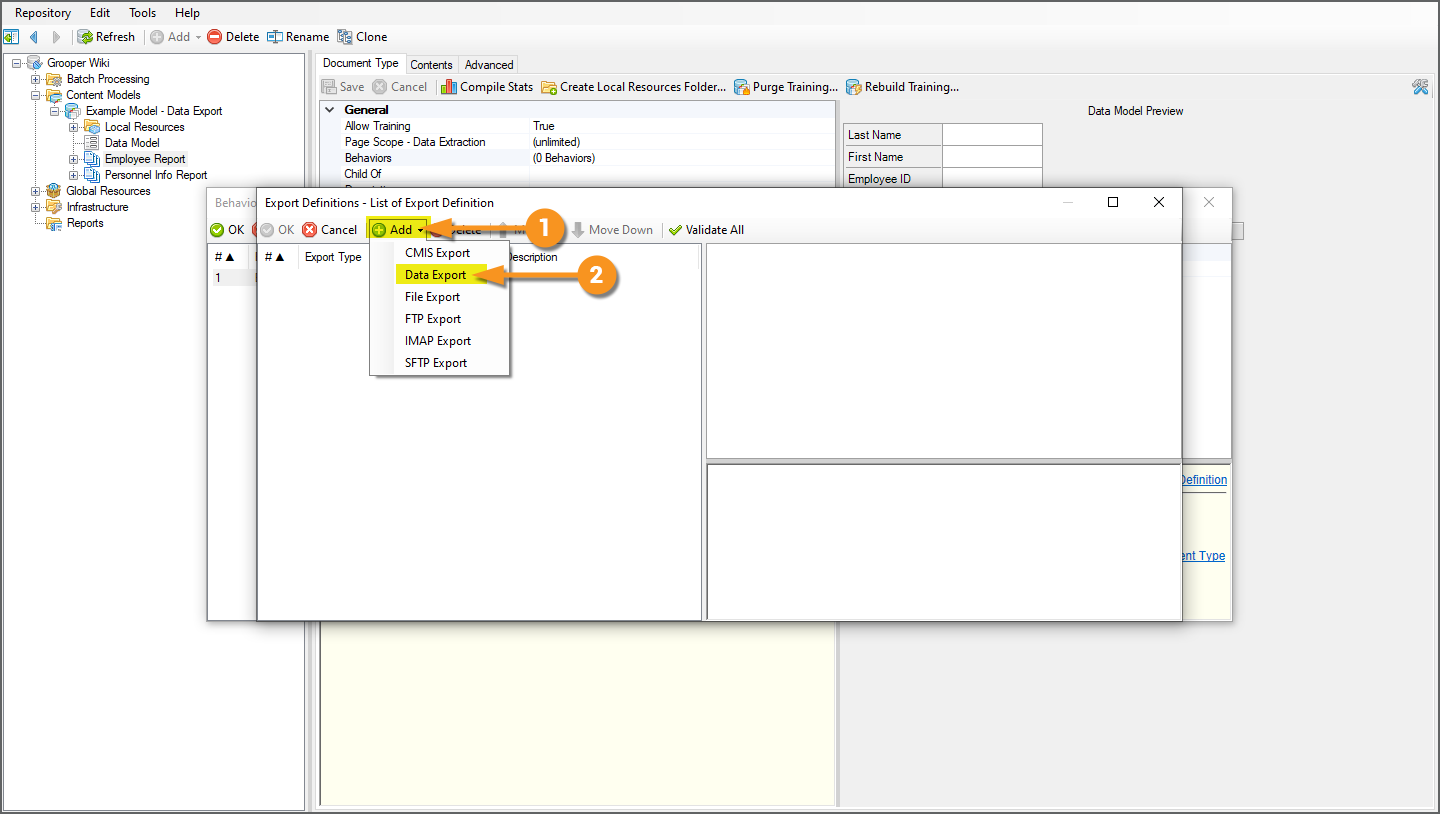

Add a Data Export

Export Definitions functionally determine three things:

- Location - Where the document content ends up upon export. In other words, the storage platform you're exporting to.

- Content - What document content is exported: image content, full text content, and/or extracted data content.

- Format - What format the exported content takes, such as a PDF file or XML data file.

|

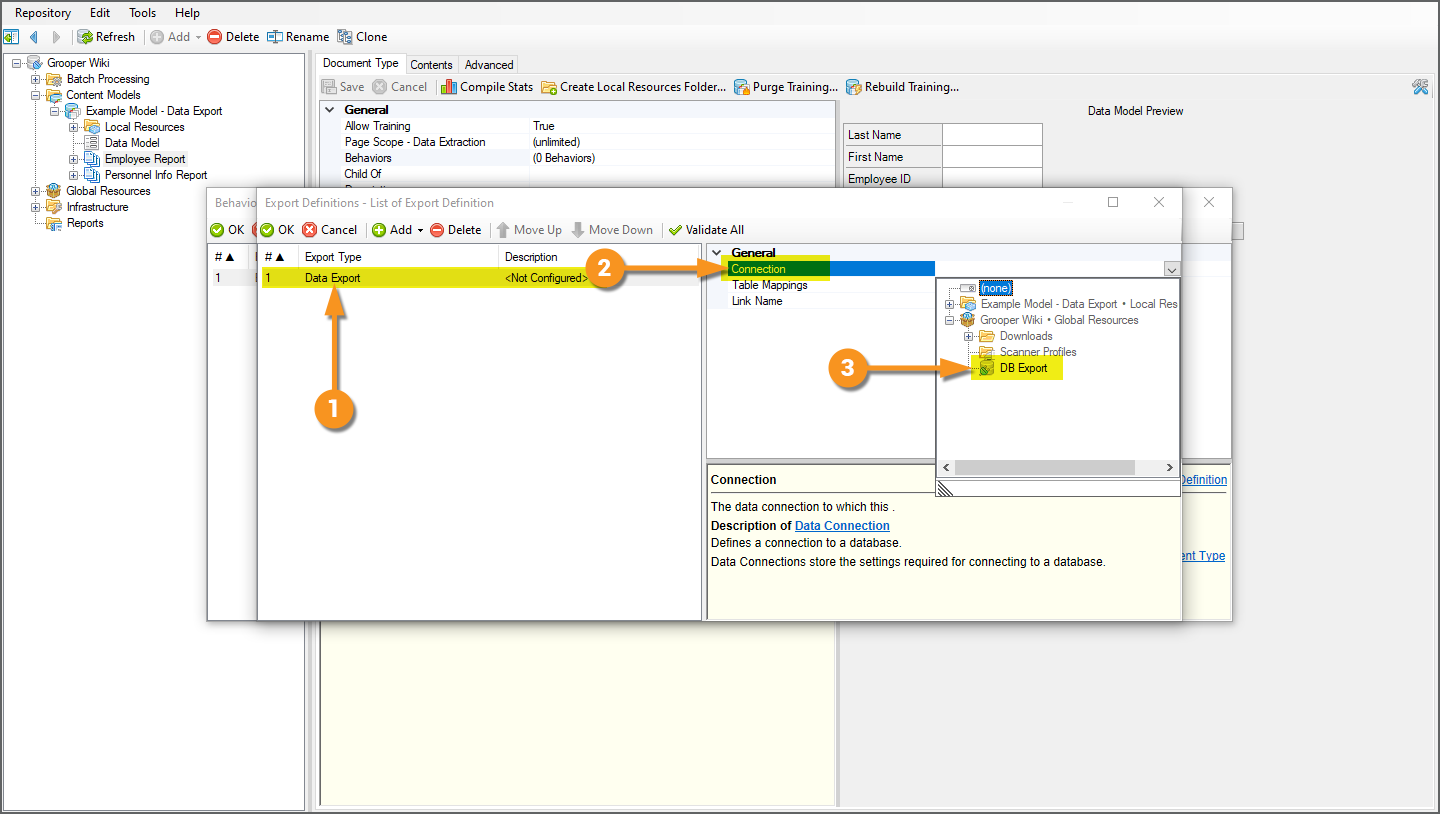

Export Definitions do this by adding one or more Export Type configurations to the definition list. The Export Type you choose determines how you want to export content to which platform. In our case, we want to use a Data Connection to export extracted document data ("Content") to a database table ("Location" and "Format"). We will add a Data Export to the definition list.

|

|

|

|

|

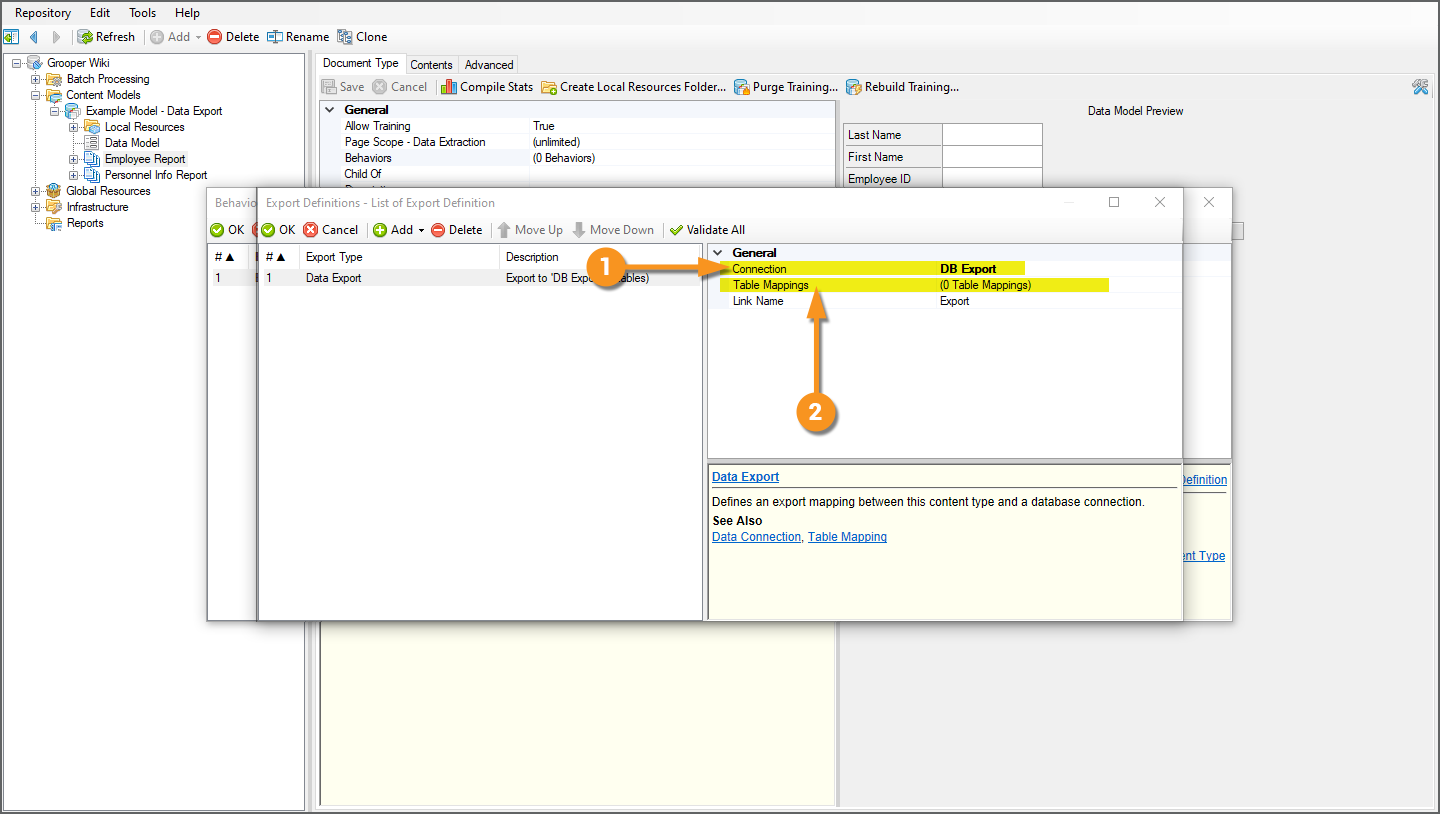

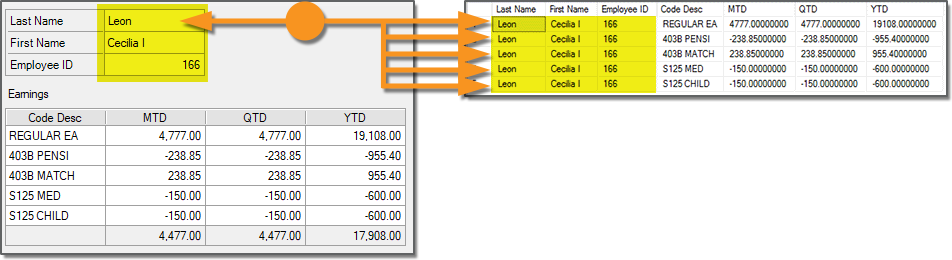

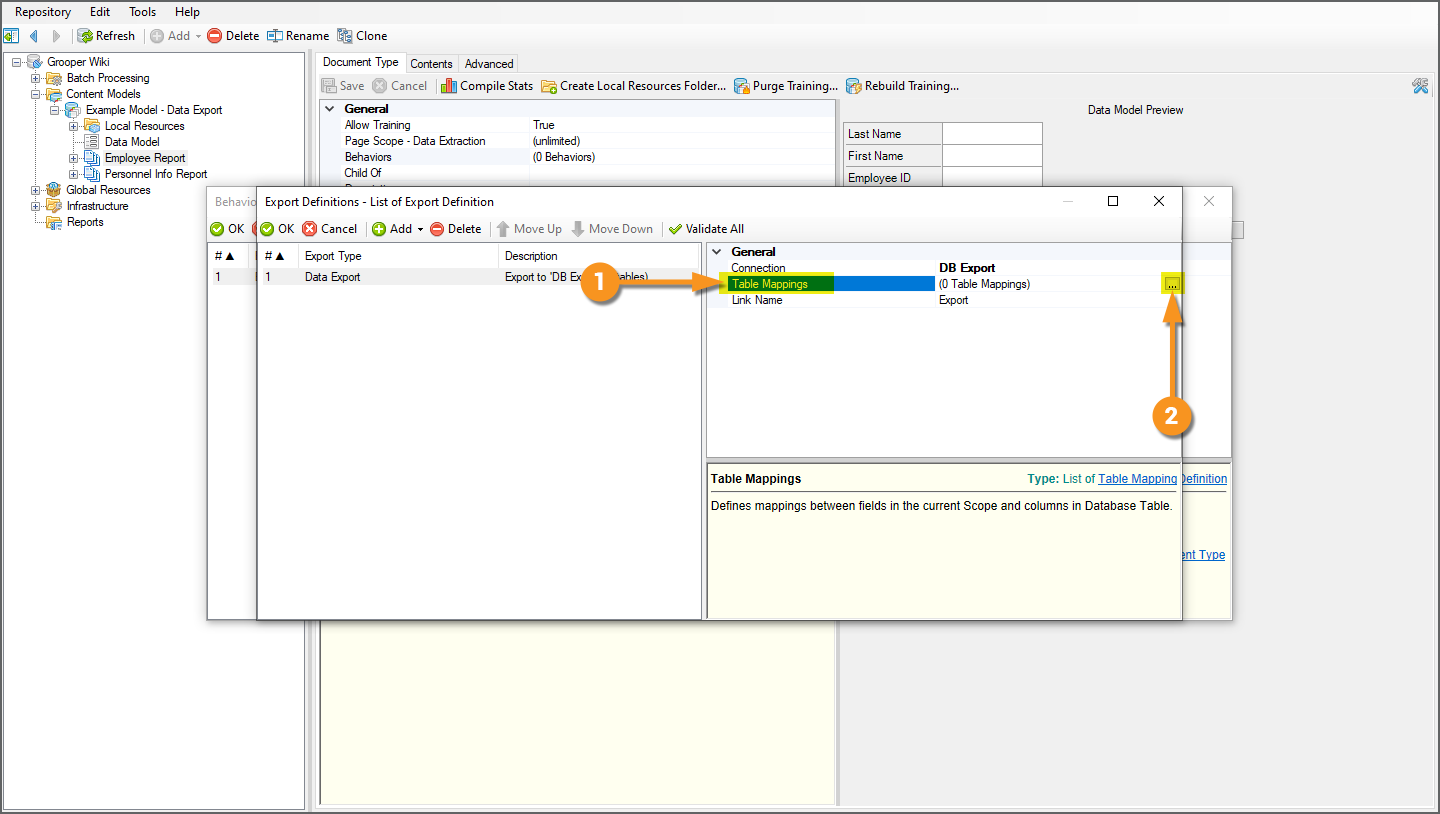

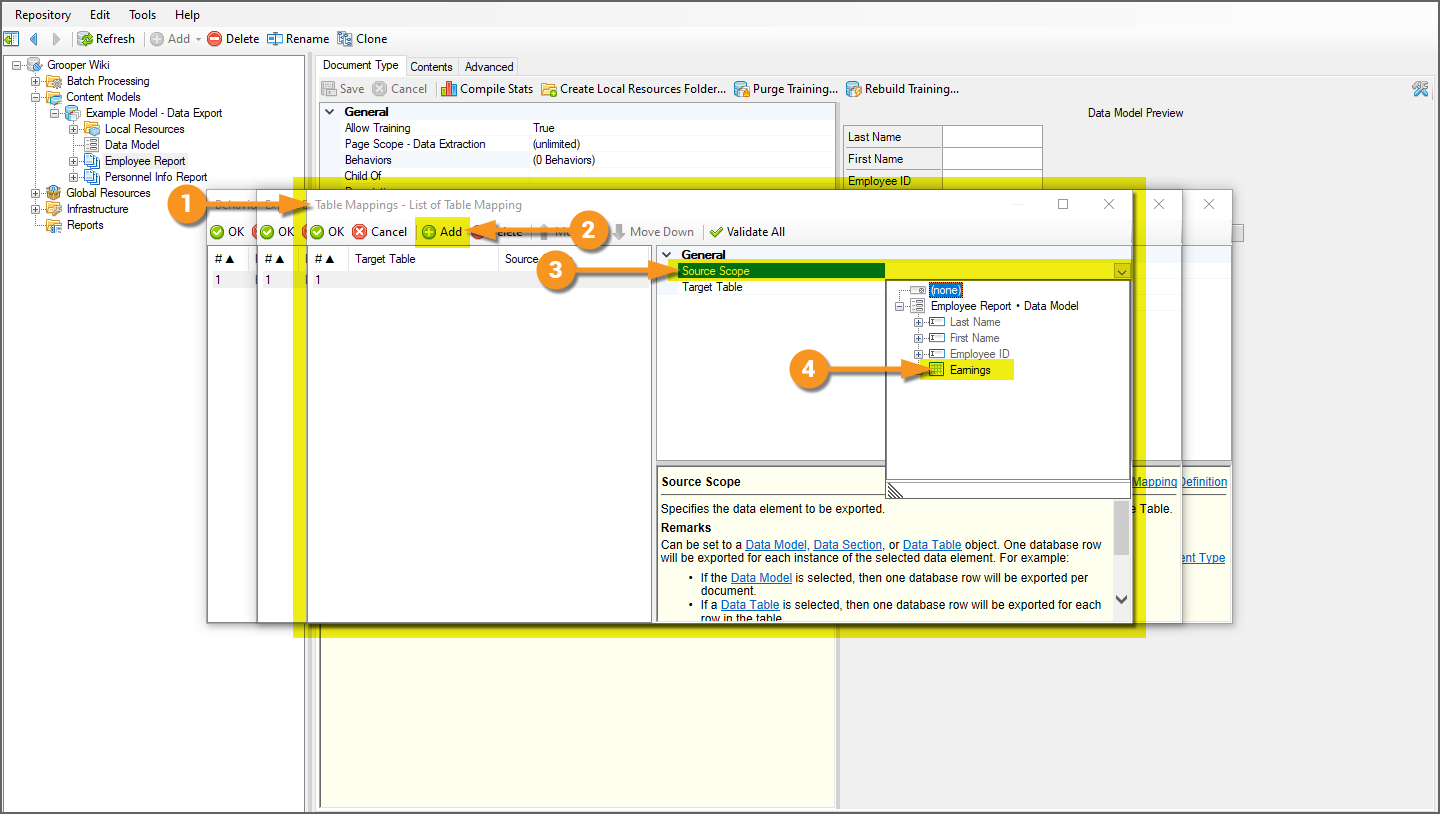

Table Mappings Example 1: Flattening a Data Model

|

We will continue configuring our "Employee Report" Document Type's Data Export first. For this document, we have to deal with a "one-to-many" relationship between data that exists once on the document, and the dynamic data in the multiple rows in the "Earnings" table. For this example, we will flatten the Data Model's element hierarchy. By flattening the Data Model, we can output all the document's data to a single database, despite the fact some of its data is present once on the document (the single instance Data Field' values) and some is more dynamic (mutli-instance Data Column values from our Data Table). We will essentially re-output the single instance values, marrying them to every row instance output for the table on the document. Think of the single instance values as "document level". These single values ("Last Name", "First Name", "Employee ID") pertain to the whole document. Therefore, they also pertain to every row of information in the "Earnings" table. |

|

We accomplish this feat through Table Mappings. This will allow us to hook up the right Data Elements in a Data Model to the right database table columns upon exporting collected values. Much like data hierarchy scope was important when we created this database, so will it be when we configure Table Mappings for export.

|

|

|

|

|

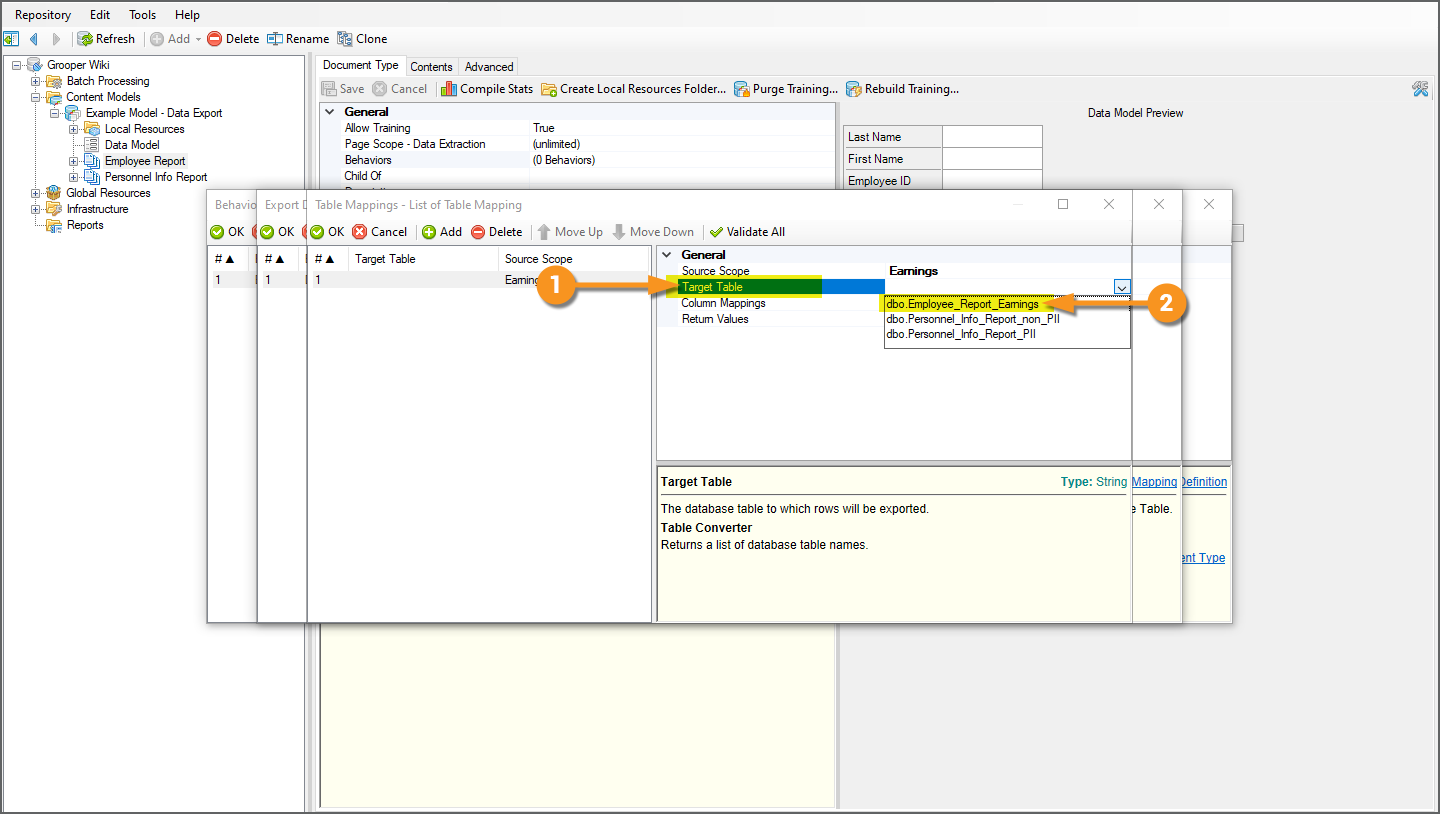

Next we need to define which database table we're mapping to. There are now three databases in the database connected to our Data Connection. Each of their table references have been imported to Grooper. We need to pick which one we want to use.

|

|

|

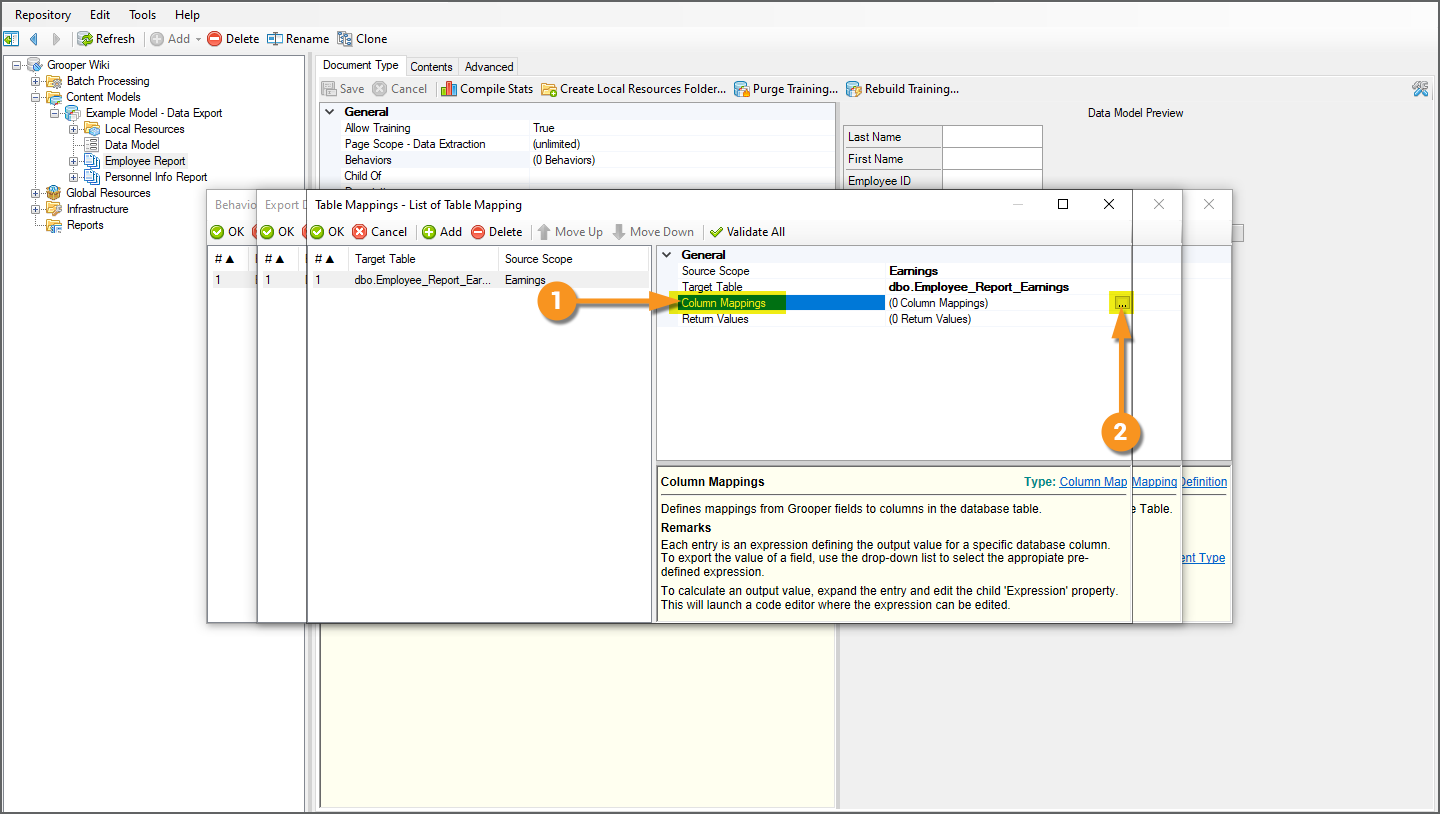

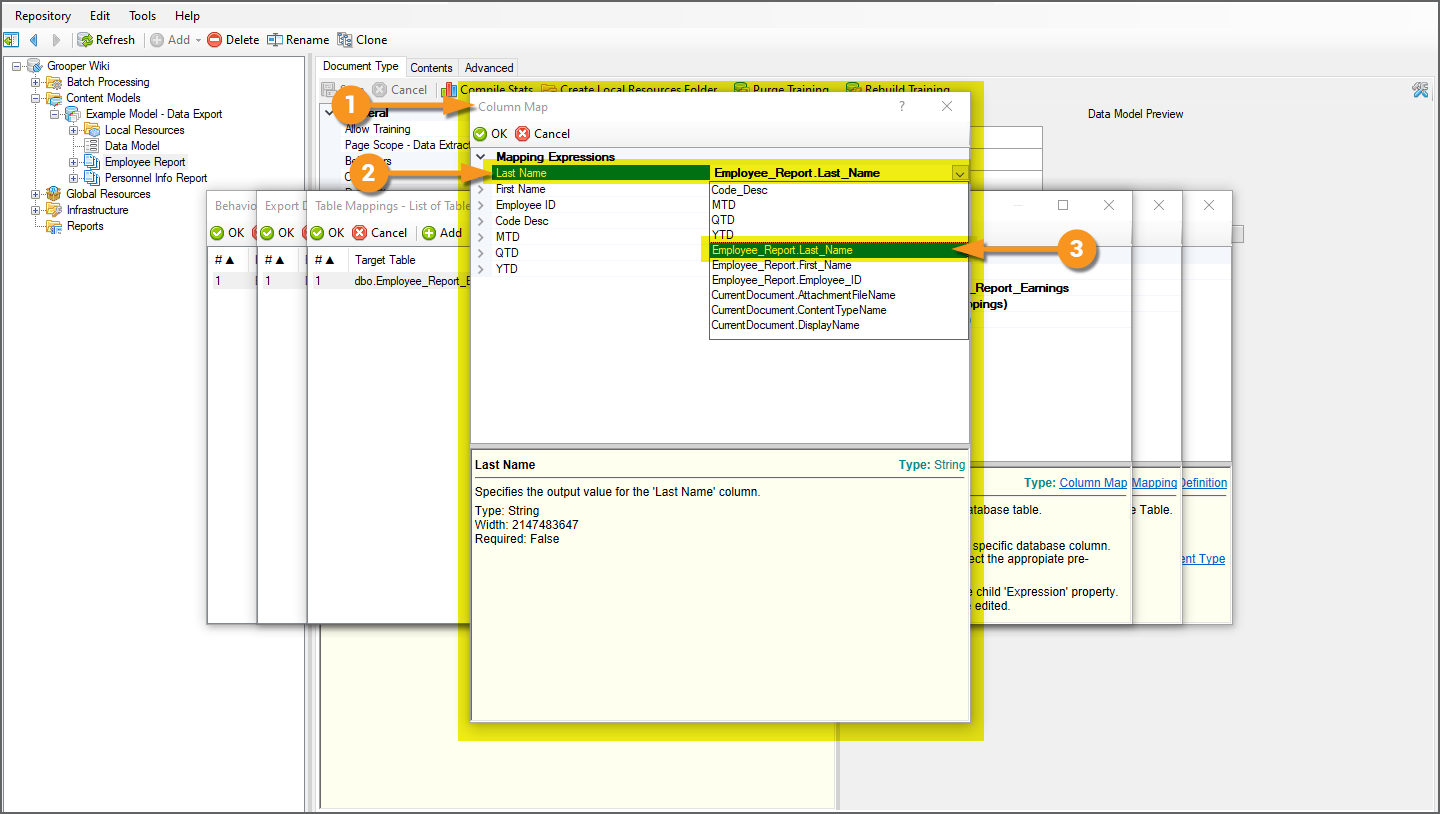

Now that we have a source data scope selected (where we're mapping from) and a target database table selected (where we're mapping to), we will map the Data Elements in our Data Model's scope to columns in the targeted database table.

|

|

|

|

|

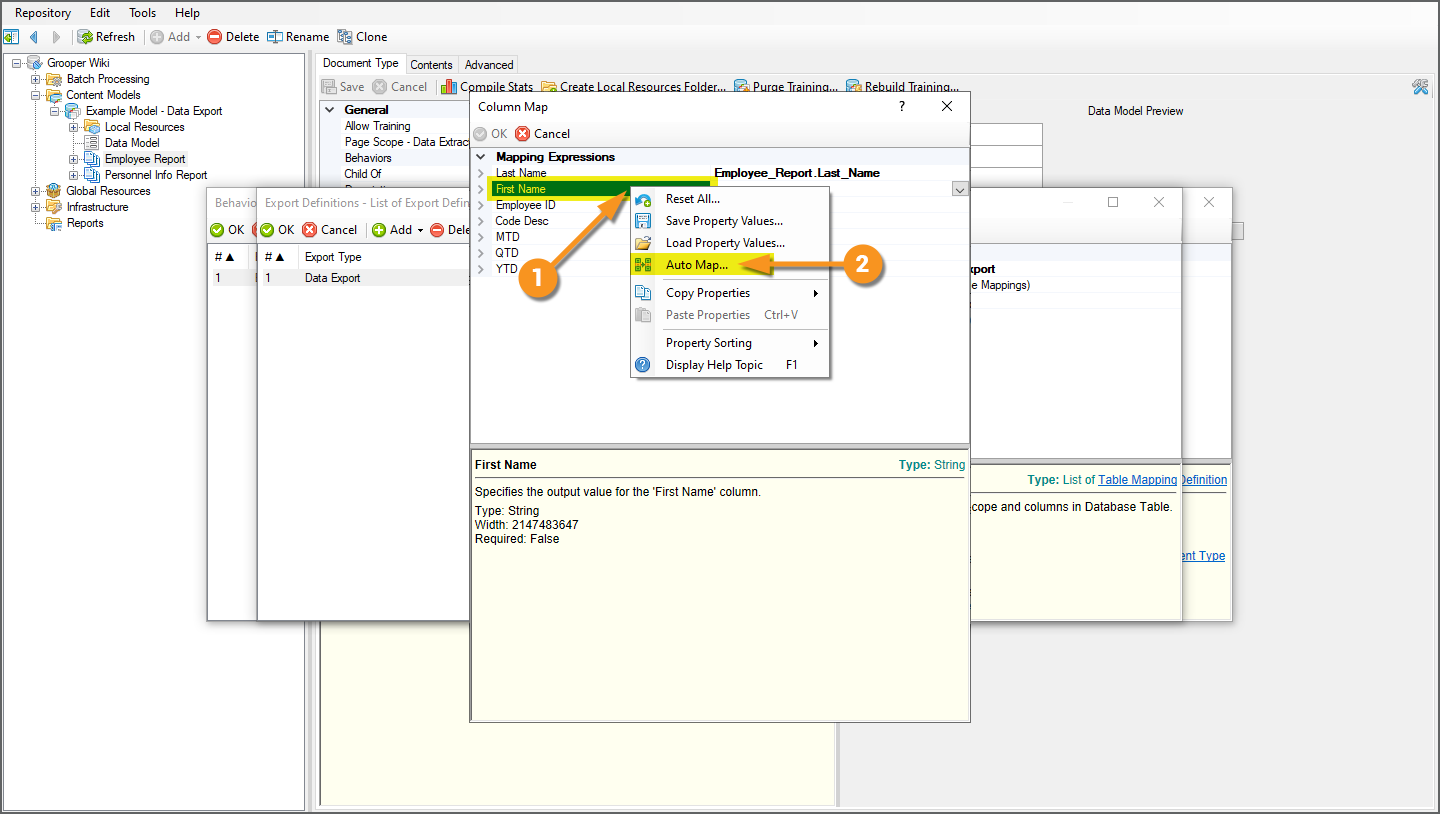

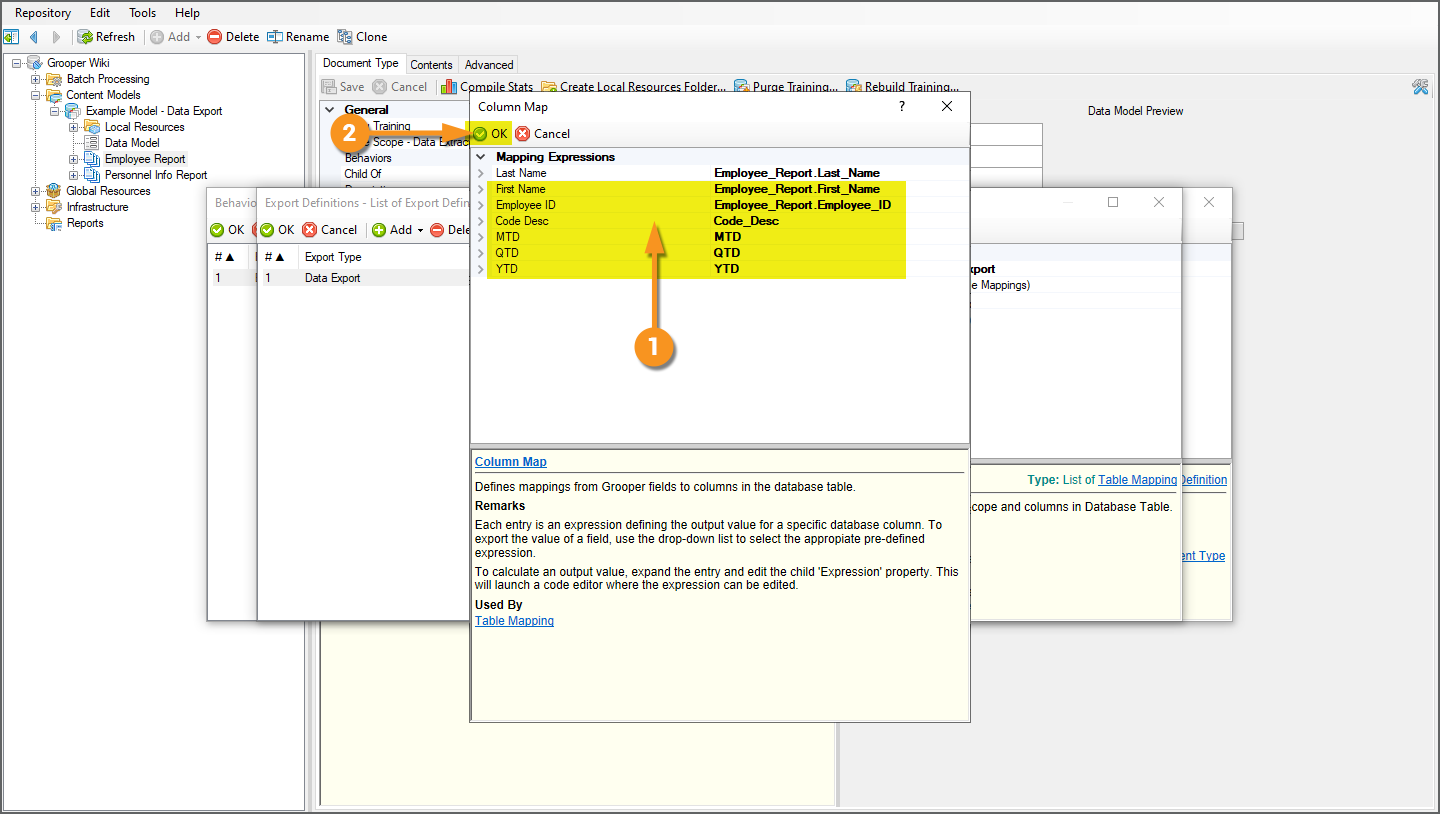

There is also a mapping shortcut to automatically assing mappings if a Data Element's name matches a database column's name.

|

|

|

|

|

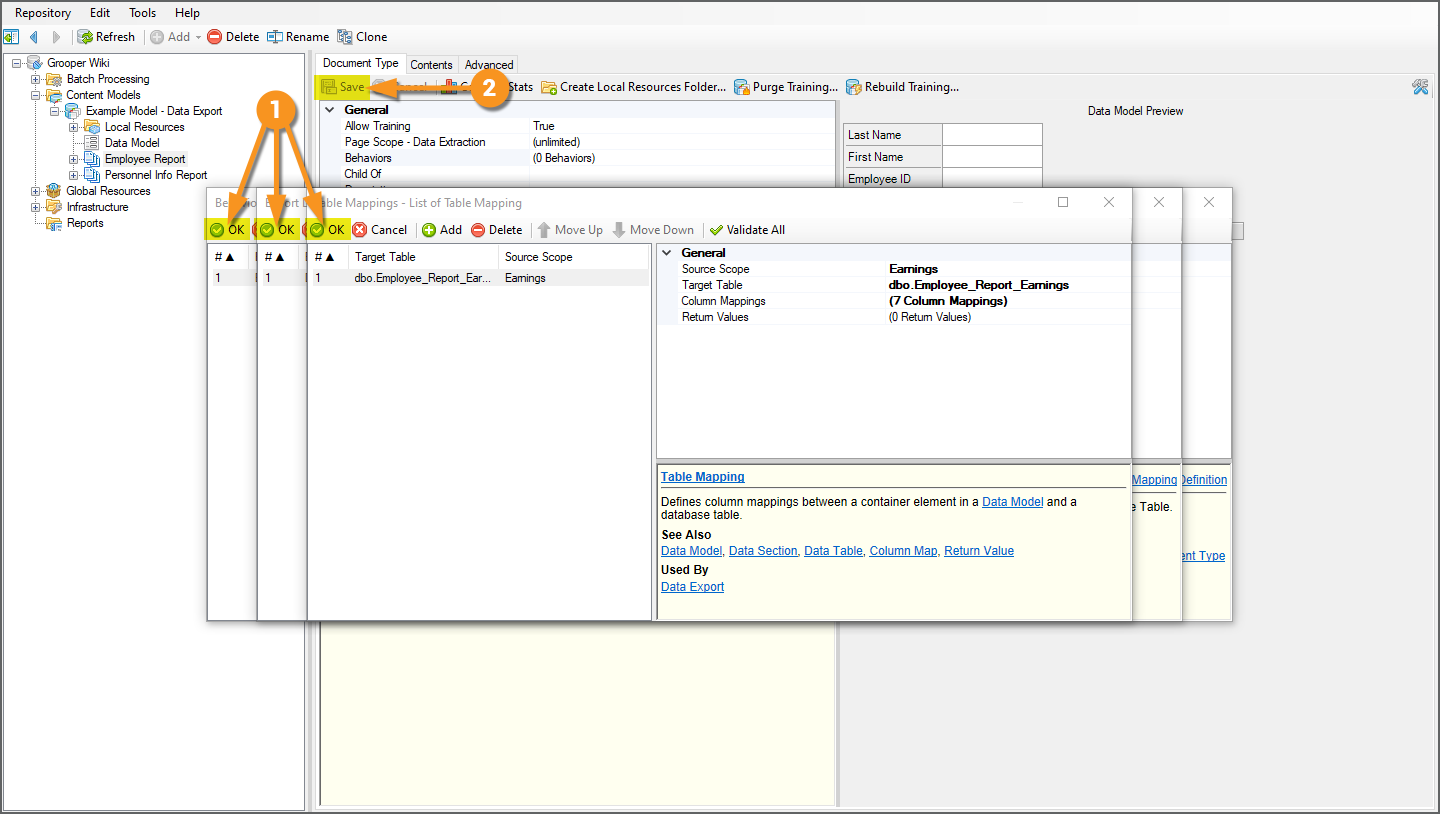

We now have everything we need to export data from "Employee Report" documents to a database, using Data Export.

With this Export Behavior in place, whenever the Export activity processes an "Employee Report" Batch Folder, data will be exported to the database as we've designed through these settings. Before testing the Export step, in the next tab, we will configure another Data Export for the "Personnel Info Report" Document Type with its own set of database table mappings to export their extracted data to two different database tables. |

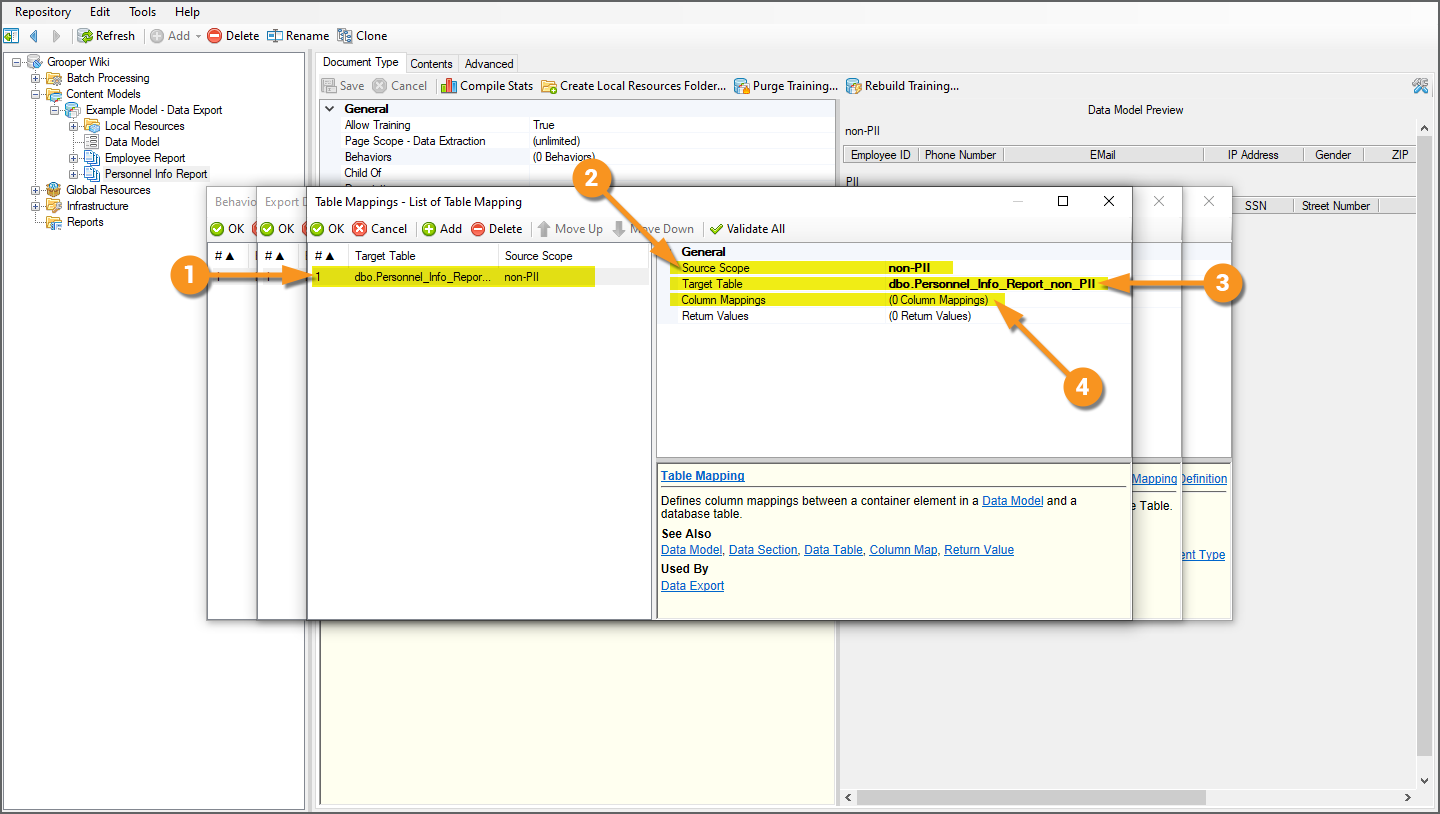

Table Mappings Example 2: Exporting to Multiple Database Tables

Despite the fact you can only set up one Export Behavior per Content Type, you can add as many Export Definitions as you need.

In this example, we will take the extracted data from our "Personnel Info Report" Document Type and export PII information to one database table and non-PII information to a different table, using two Data Export definitions.

|

|||

|

|||

|

|||

|

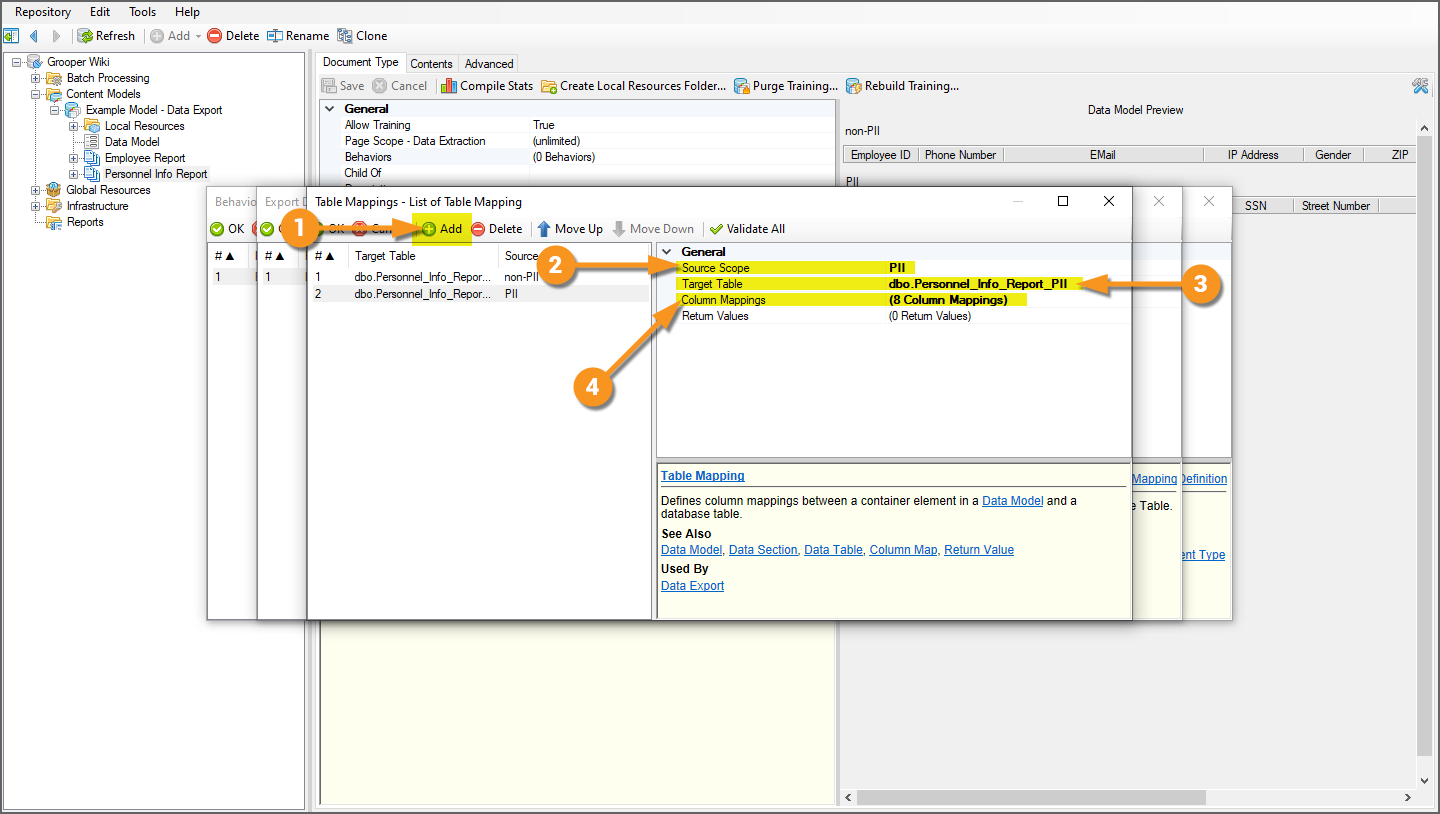

Now we just need to set up mappings for the "PII" Data Table. In this case, we're exporting to the same database, just two different database tables within it. Since we're already collecting mappings for the database connected to using the "DB Export" Data Connection, all we need to do as add another set of Table Mappings.

|

|||

|

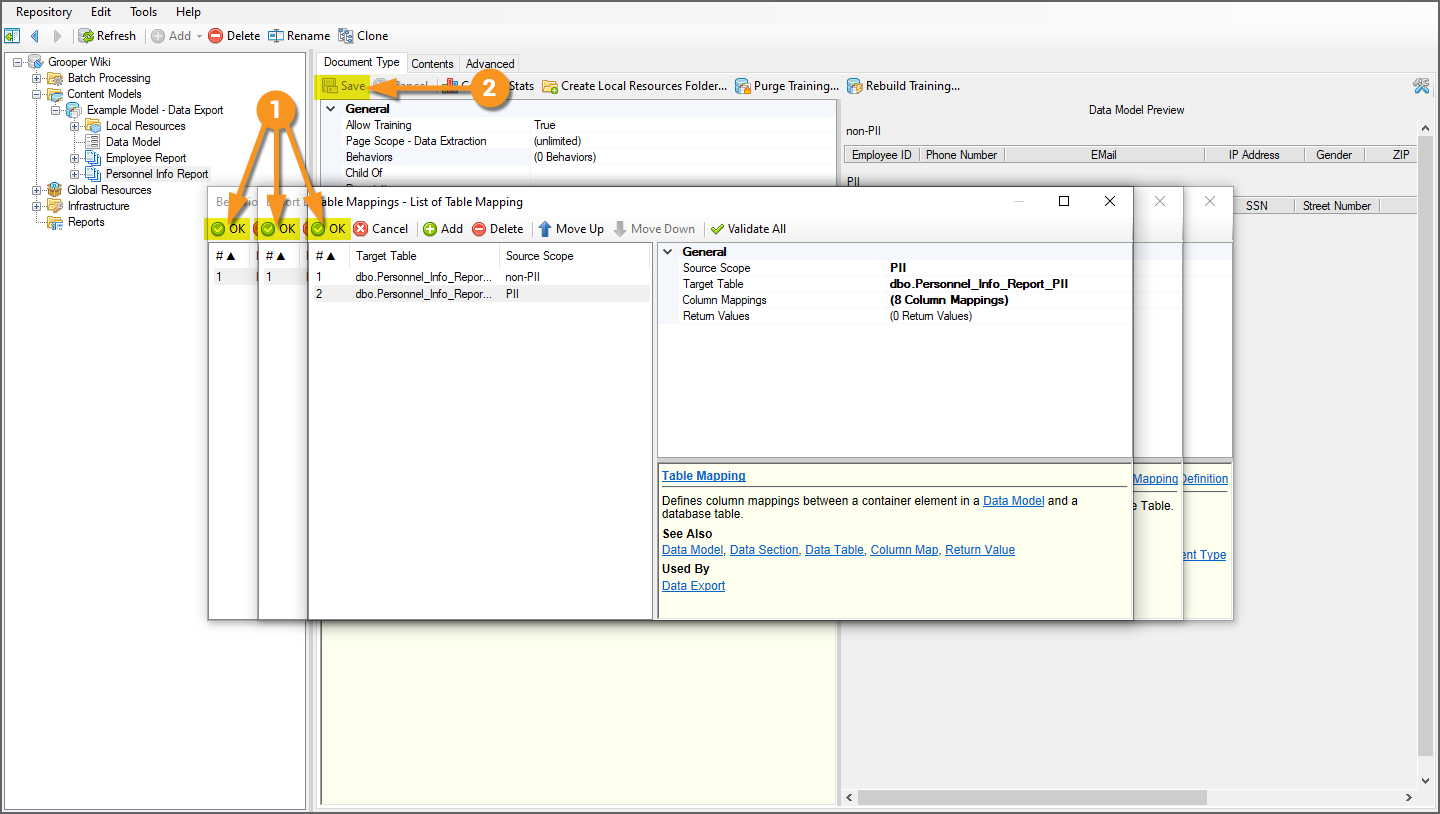

We now have everything we need to export data from "Personnel Info Report" documents to two tables in a database, using Data Export.

Next, we will apply the Export activity to the document Batch Folders in our sample Batch, which will export data according to their Document Type's Export Behavior using the Data Export definitions. |

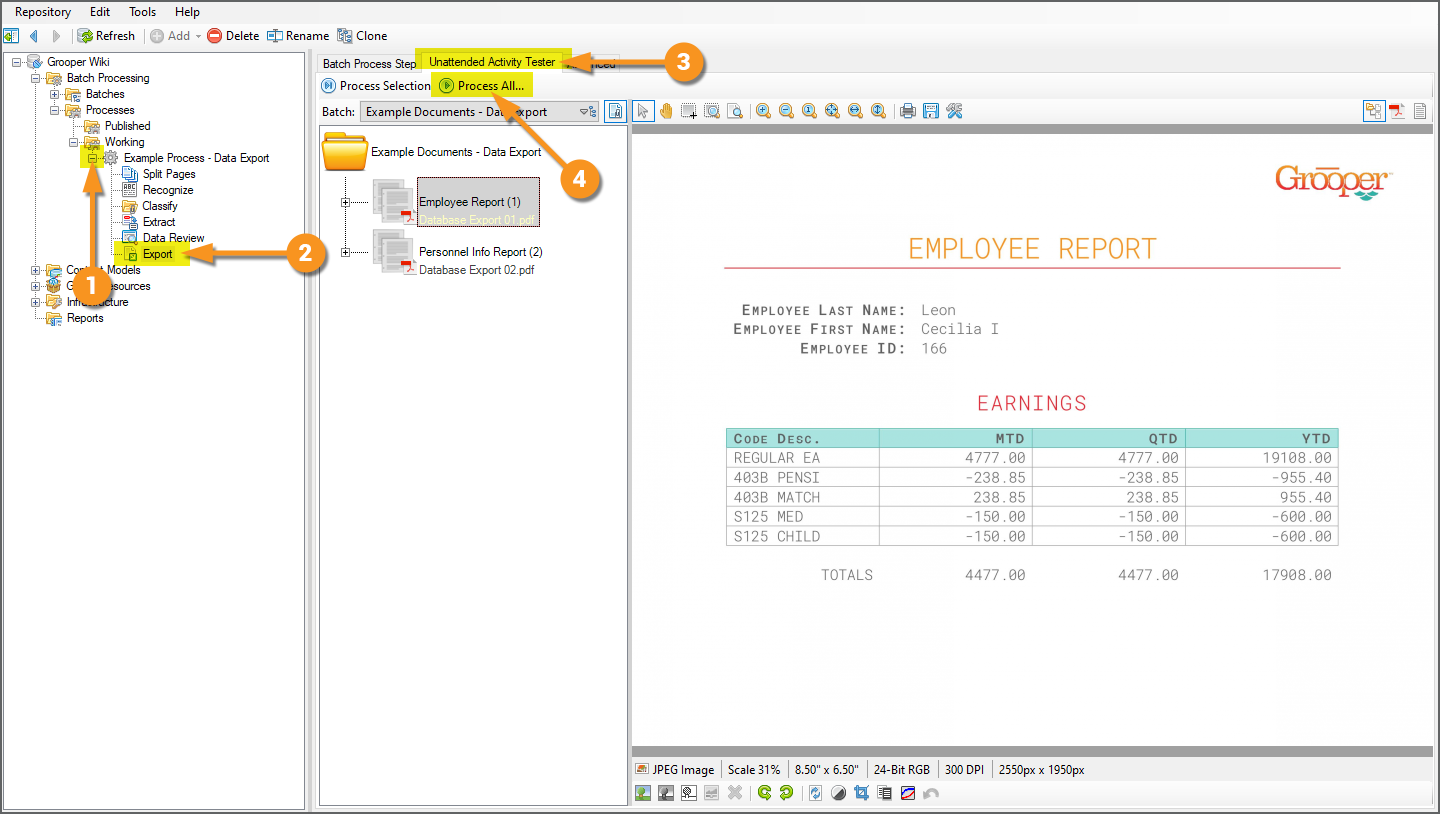

Applying the Export Activity and Reviewing the Results

Process the Export Step

|

With the two Export Behaviors configured, we can now test our export. Export Behaviors are executed by the Export activity.

|

|

|

We will test our export using the Export activity's "Unattended Activity Tester" tab.

|

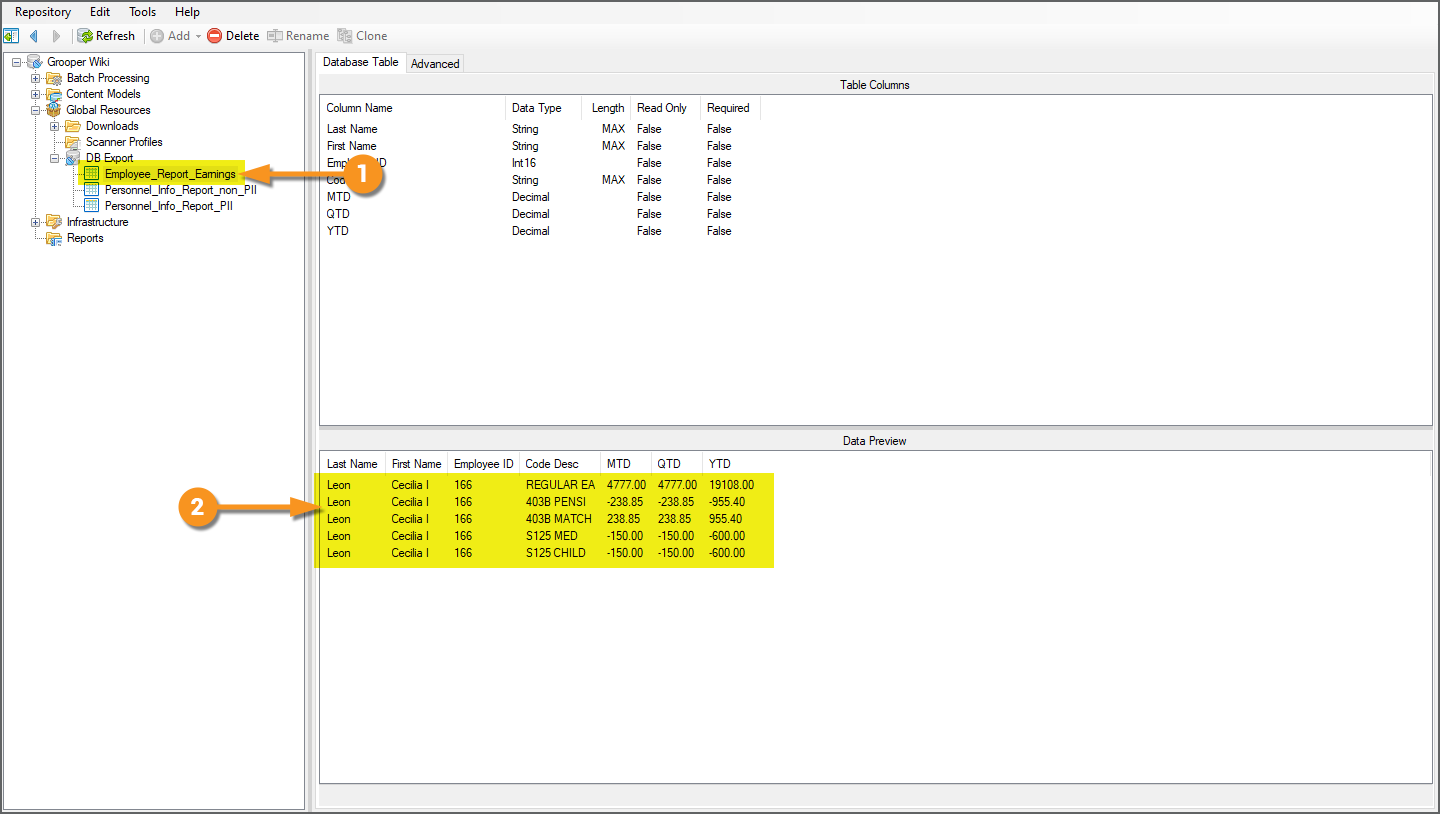

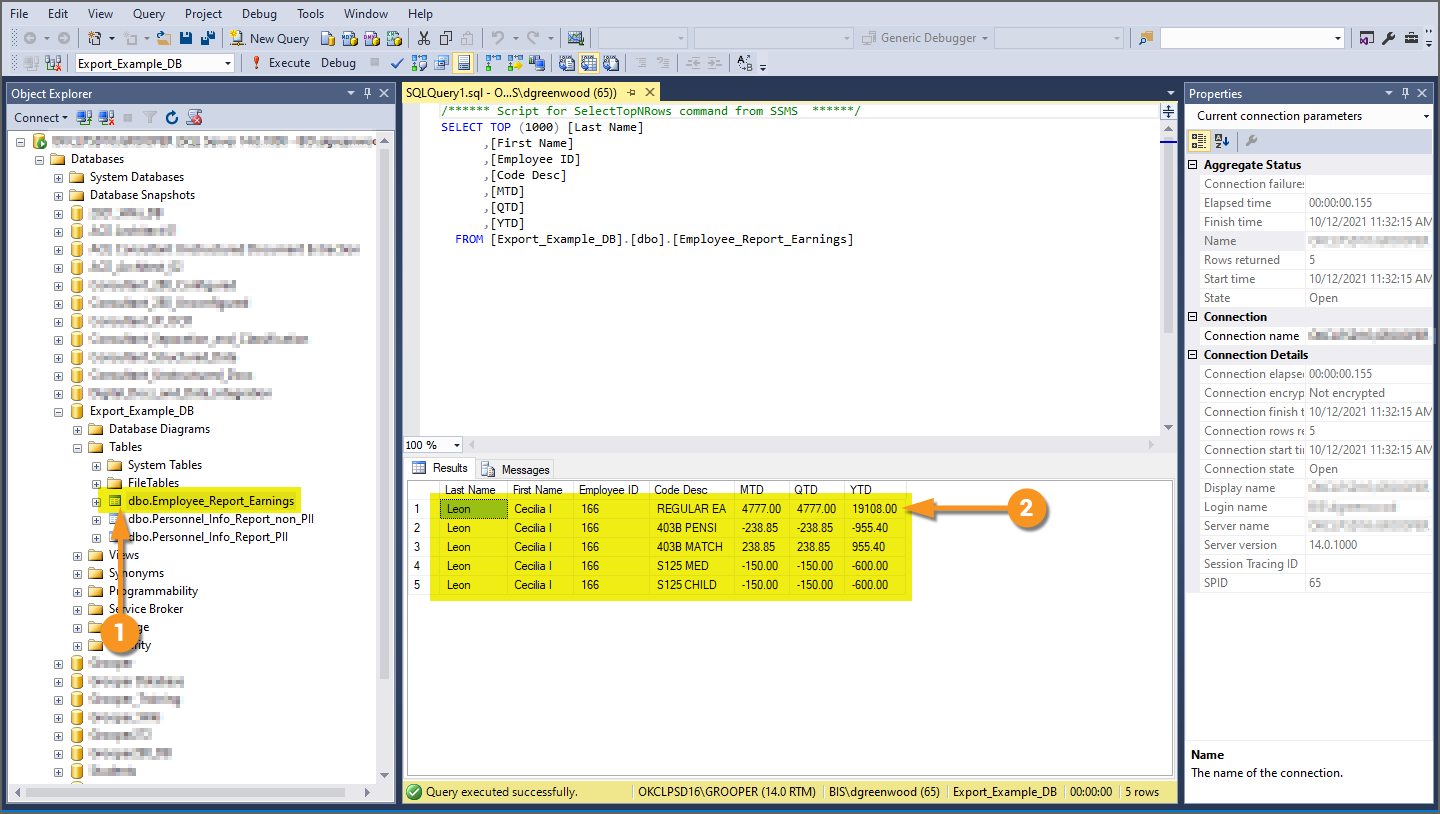

Review the Export

You can easily review our exported data in one of two ways:

- In the "Data Preview" panel of any of the Database Table reference objects.

- Connecting the database table in Microsoft SQL Server Management Studio.

|

|

|

Using SQL Server Management Studio, we can also verify the data was exported.

|