2023:GPT Integration (Concept)

Dgreenwood moved page GPT Integration - 2023 to 2023:GPT Integration without leaving a redirect.


Revision as of 12:50, 28 December 2023

OpenAI GPT integration in Grooper allows users to leverage modern AI technology to meet their document data integration needs.

OpenAI's GPT model has made waves in the world of computing. Our Grooper developers recognized its potential to grow Grooper's capabilities. Adding this functionality allows users to explore creative solutions for processing their documents with this advanced technology.

ABOUT

GPT (Generative Pre-trained Transformer) integration can be used for three things in Grooper:

- Extraction - Prompt the GPT model to return information it finds in a document.

- Classification - GPT has been trained on a massive corpus of information, which gives it a lot of potential for classifying documents. The idea is that because the model has already seen so much text, less training should be required in Grooper.

- Lookup - With a GPT Lookup you can provide information collected from a model in Grooper as @variables in a prompt to have GPT generate data.

In this article you will be shown how Grooper leverages GPT for the aforementioned methods. Some example use cases will be given to demonstrate a basic approach. Given the nature of the way this technology works, it will be up to the user to get creative about how this can be used for their needs.

Things to Consider

Before moving forward it would be prudent to mention a few things about GPT and how to use it.

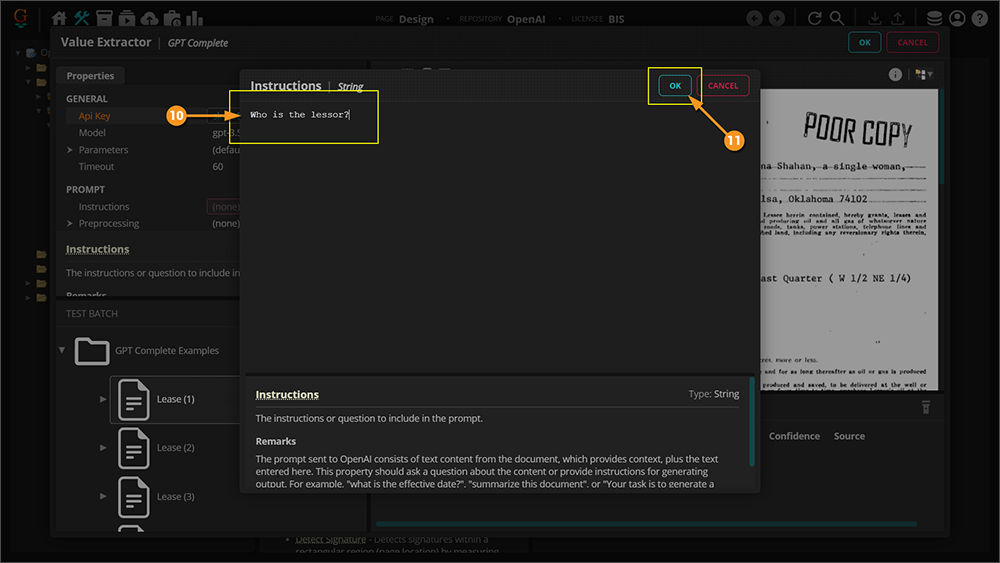

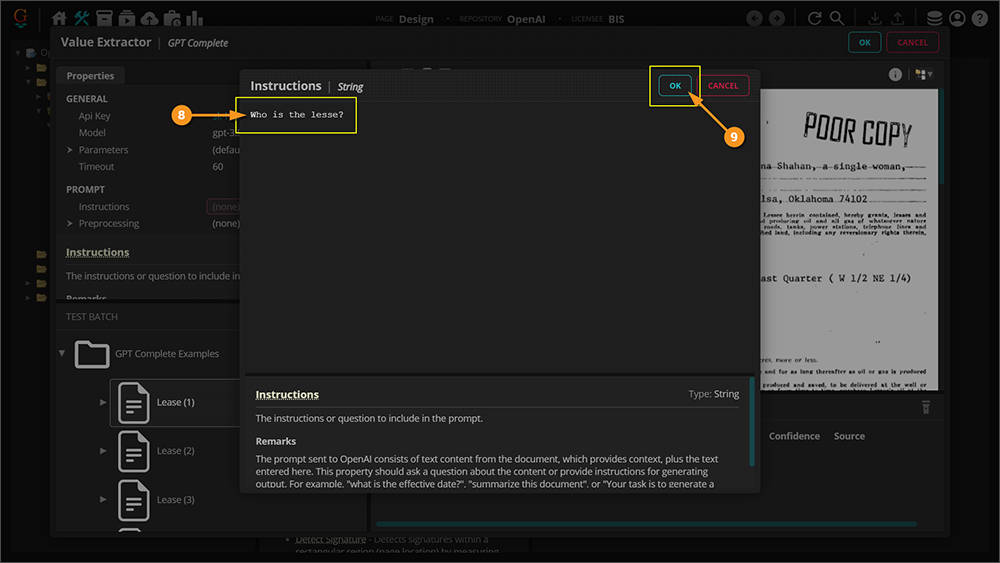

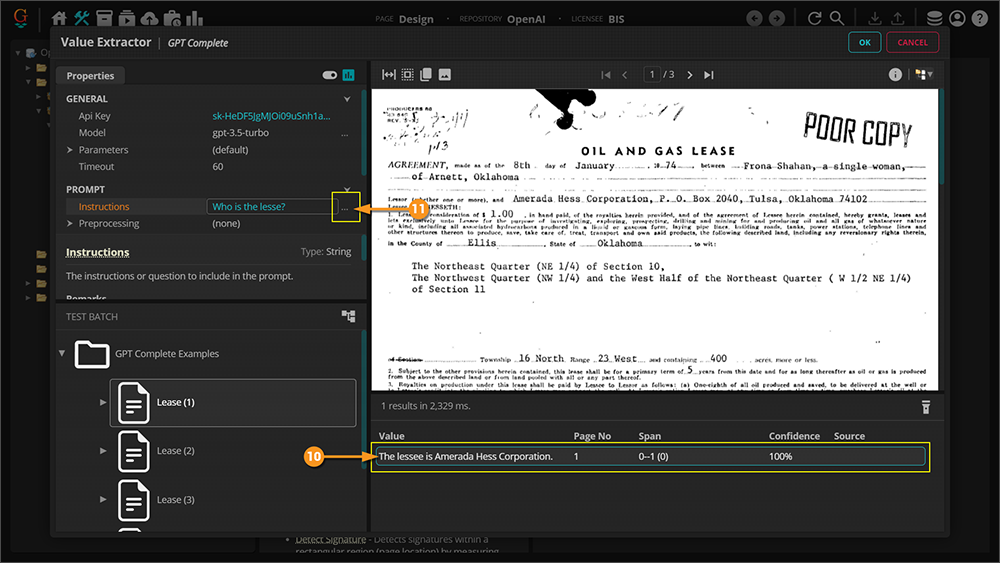

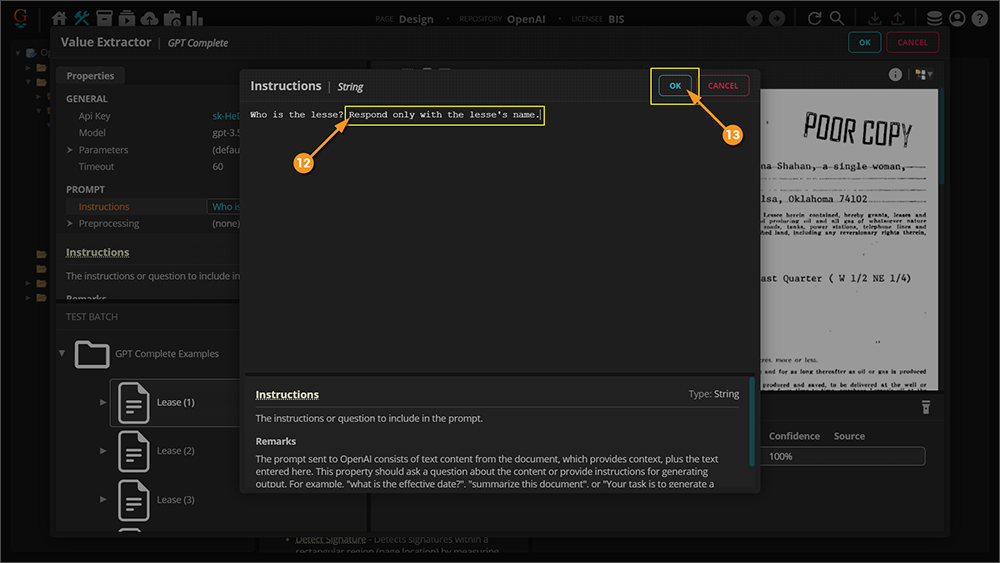

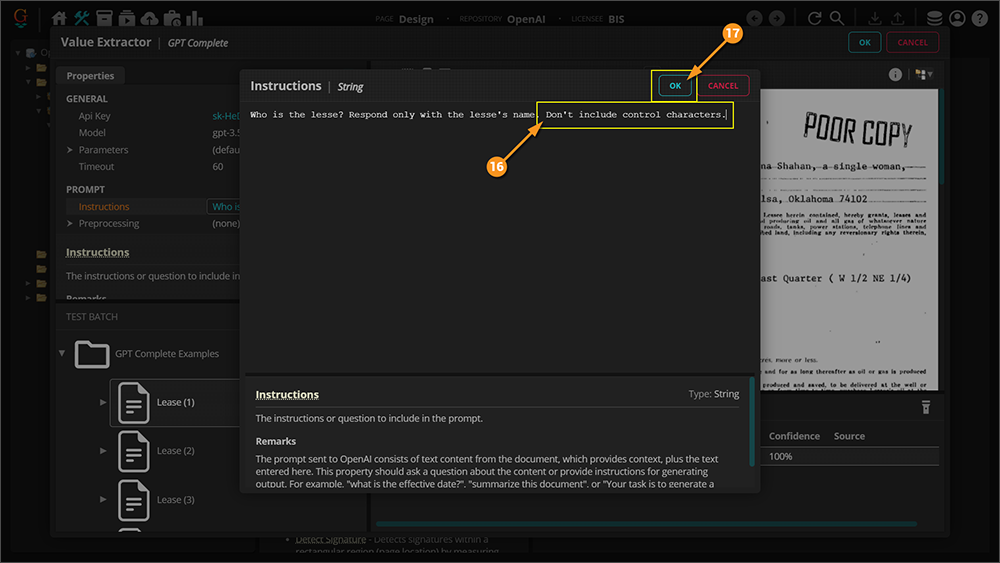

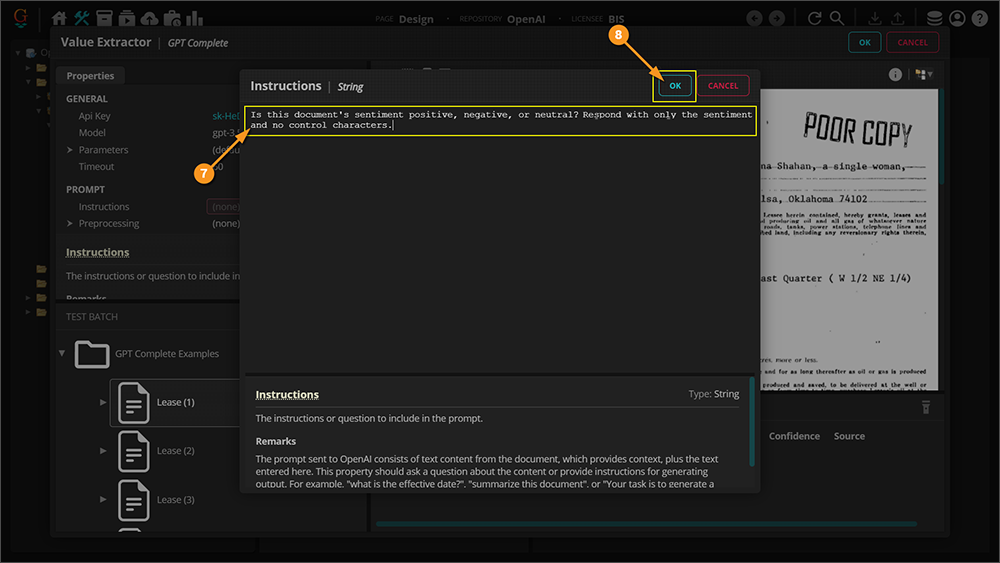

Prompt Engineering

The first thing to consider is how to structure a good prompt so that you get the results you expect. There is a bit of an art to doing this well. GPT can tell bad jokes and write accidentally hilarious poems about your life, but it can also help you do your job better. The catch: you need to help it do its job better, too. At its most basic level, OpenAI's GPT-3 and GPT-4 predict text based on an input called a prompt. To get the best results, you need to write a clear prompt with ample context. Later in this article, when the GPT Complete Value Extractor is demonstrated, you will see an example of prompt engineering.

Follow this link, or perhaps even this one, for more information on prompt engineering.

Tokens and Pricing

Another consideration is how GPT pricing works. You are charged for the "tokens" used when interacting with GPT. The prompt you write, the document text you send along with it, and the result returned to you all count toward token consumption. Keep this in mind as you build and use GPT in your models.
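As a rough illustration of how the prompt, the document text, and the response all add up, the sketch below estimates tokens with the approximate 4-characters-per-token rule for English text (the same rule of thumb quoted for Max Response Length later in this article) and multiplies by per-token prices. The function names and the prices are hypothetical placeholders, not Grooper or OpenAI APIs; real token counts come from the model's tokenizer, and current prices are on OpenAI's pricing page.

```python
import math

# Rule of thumb for English text; a real tokenizer gives exact counts.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate how many tokens a piece of text will consume."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def estimate_cost(instructions: str, document_text: str, response: str,
                  input_price_per_1k: float, output_price_per_1k: float) -> float:
    """Estimate the charge for one request.

    The instructions and the document text are both sent as input tokens;
    the returned result is billed as output tokens. Prices are hypothetical
    placeholders expressed per 1,000 tokens.
    """
    input_tokens = estimate_tokens(instructions) + estimate_tokens(document_text)
    output_tokens = estimate_tokens(response)
    return ((input_tokens / 1000) * input_price_per_1k
            + (output_tokens / 1000) * output_price_per_1k)


document_text = "x" * 8000  # stand-in for a document's extracted text
cost = estimate_cost("What is the effective date?", document_text, "January 1, 2023",
                     input_price_per_1k=0.01, output_price_per_1k=0.03)
print(f"Estimated cost: ${cost:.4f}")
```

In a sketch like this the document text dominates the total, which is why limiting how much content is sent per request tends to matter more than shortening the instructions.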

Follow this link for more information on what tokens are.

Follow this link for more information on GPT pricing.

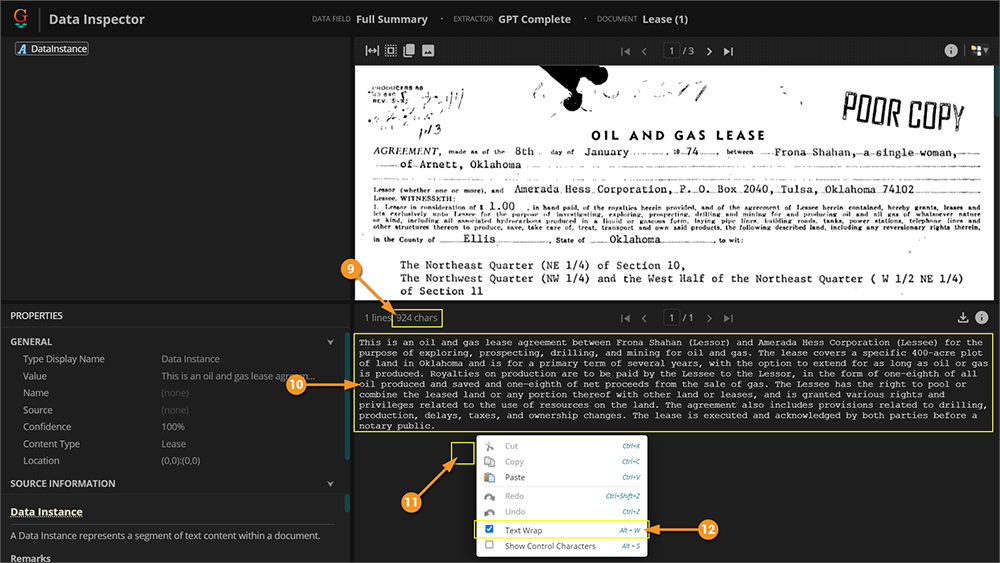

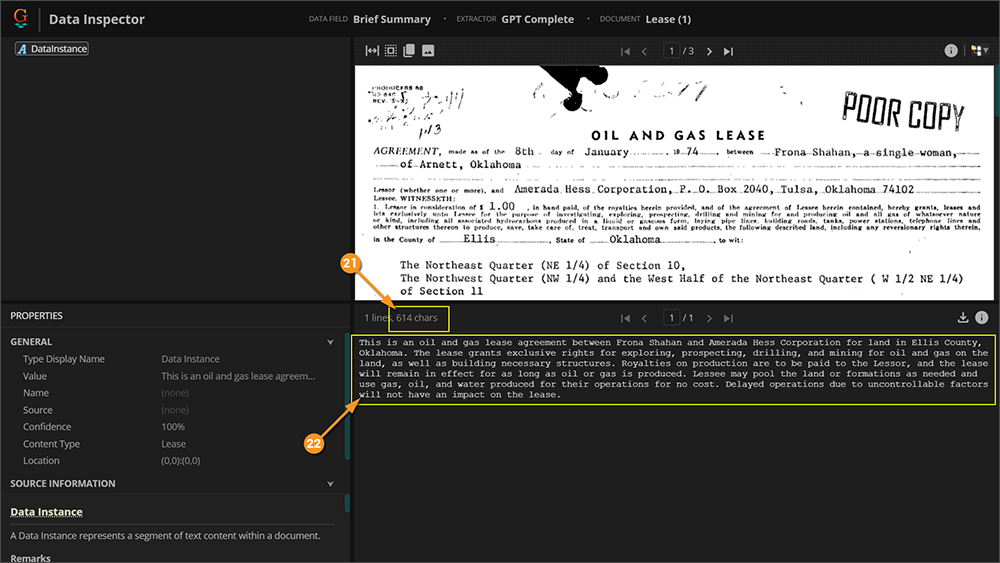

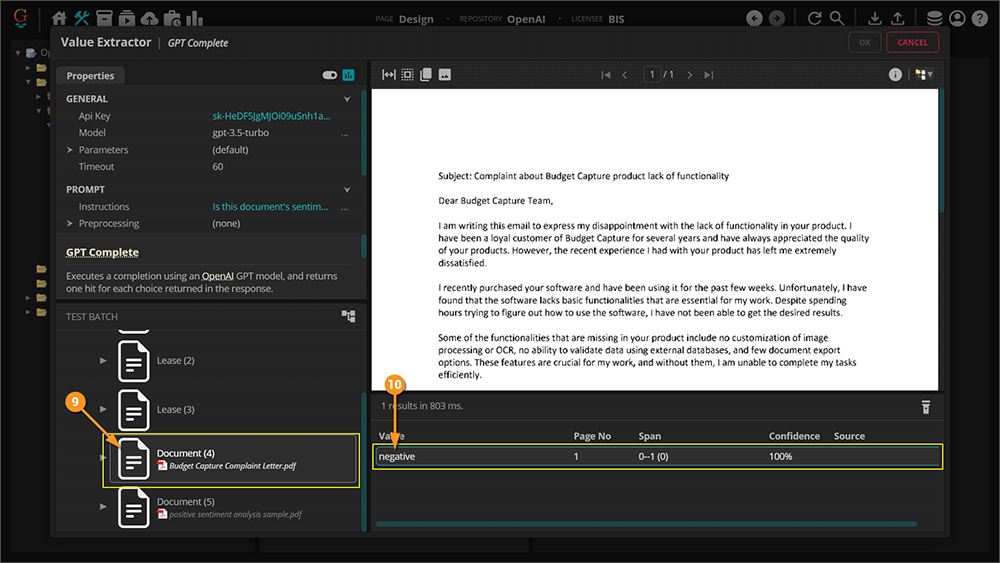

Location Data for Data Extraction

The final consideration concerns the GPT Complete Value Extractor type (more on this soon). If you have used Grooper before, you are probably familiar with how a returned value is highlighted with a green box in the document viewer. One of the main strengths of Grooper's text synthesis is that it collects location information for each character, which makes this highlighting possible. The GPT model does not consider location information when generating its results, so values collected with this method will not be highlighted on the document. The main impact is on your ability to validate information returned by the GPT model.

How To

With the discussion of concepts out of the way, it is time to get into Grooper and see how and where to use the GPT integration.

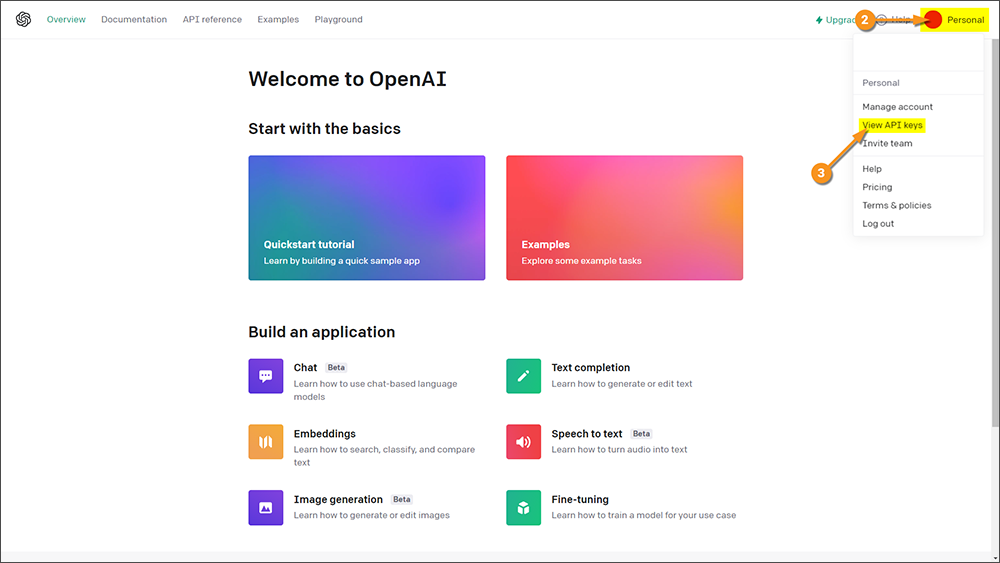

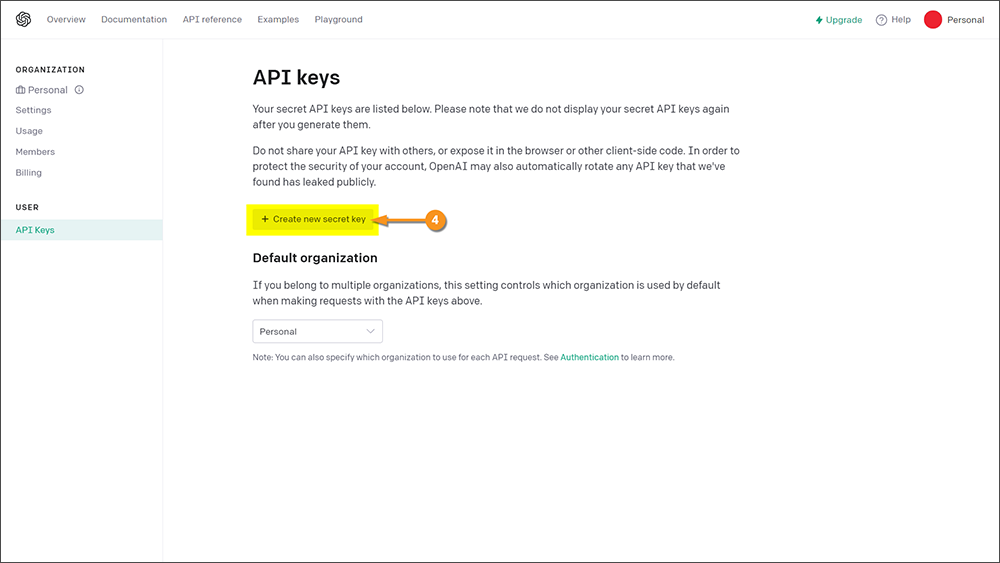

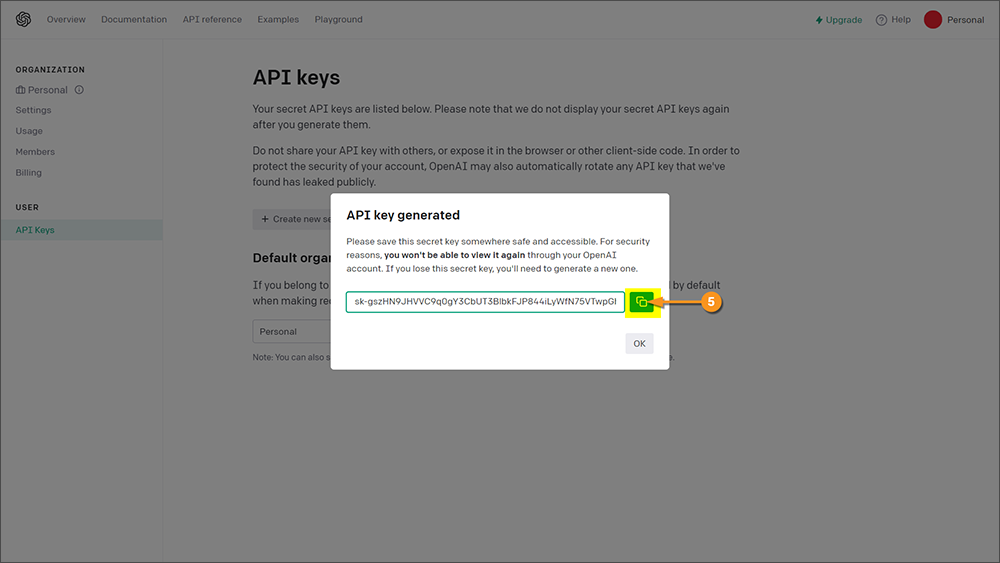

Obtain an API Key

Grooper can integrate with OpenAI's GPT models because OpenAI provides a web API. All you need in order to use Grooper's GPT functionality is an API key. Here you will learn how to obtain an API key so you can start using GPT with Grooper.

|

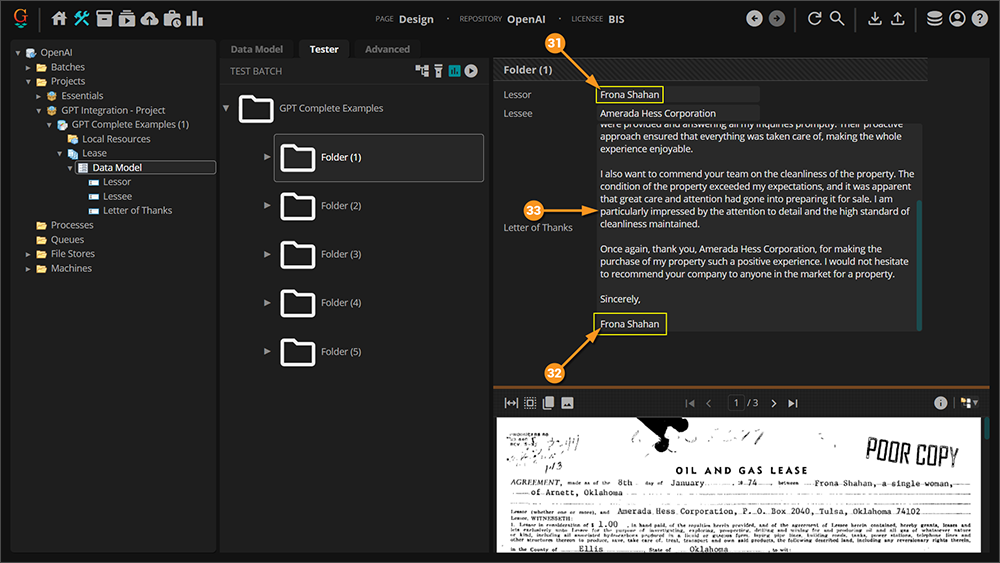

|

|

|

|

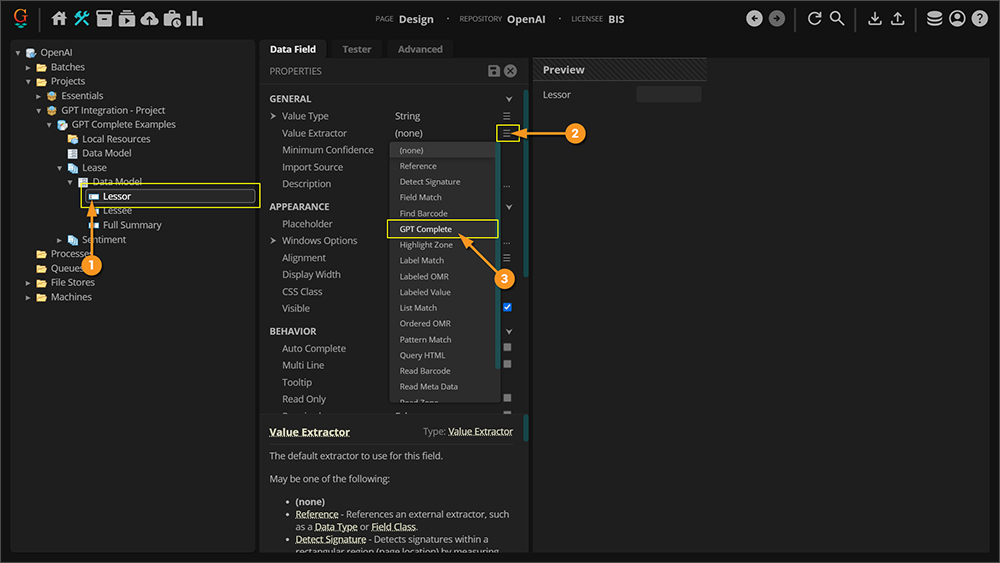

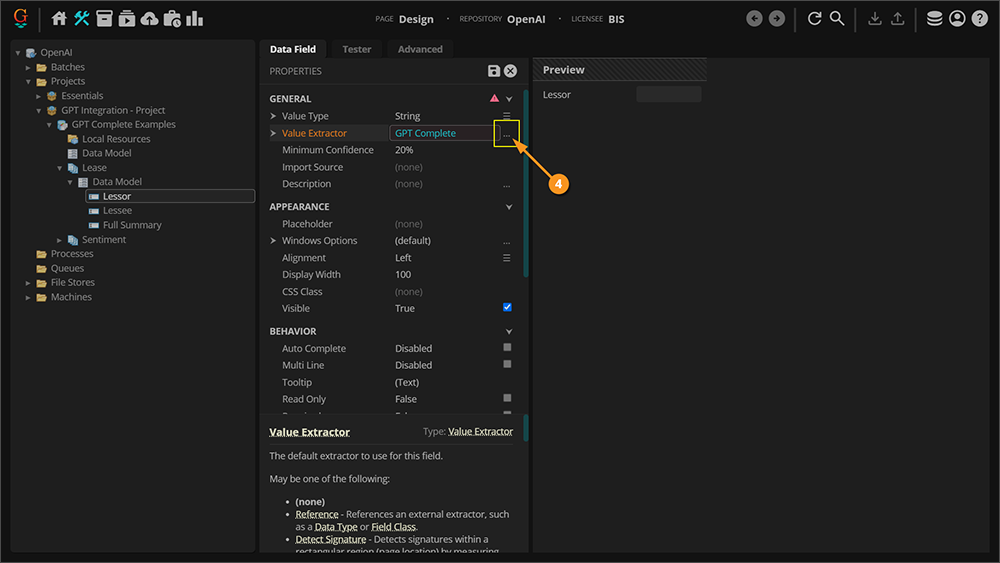

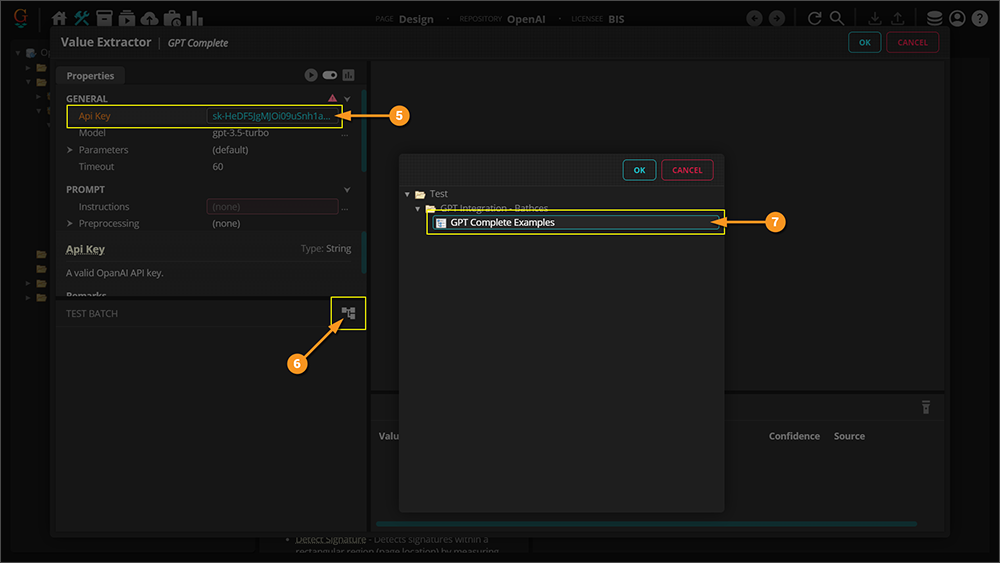

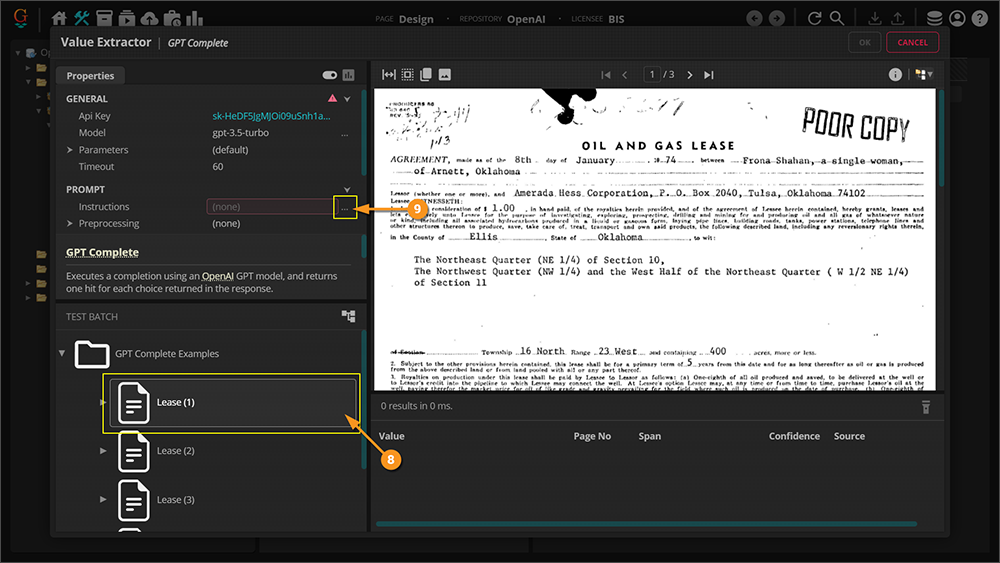

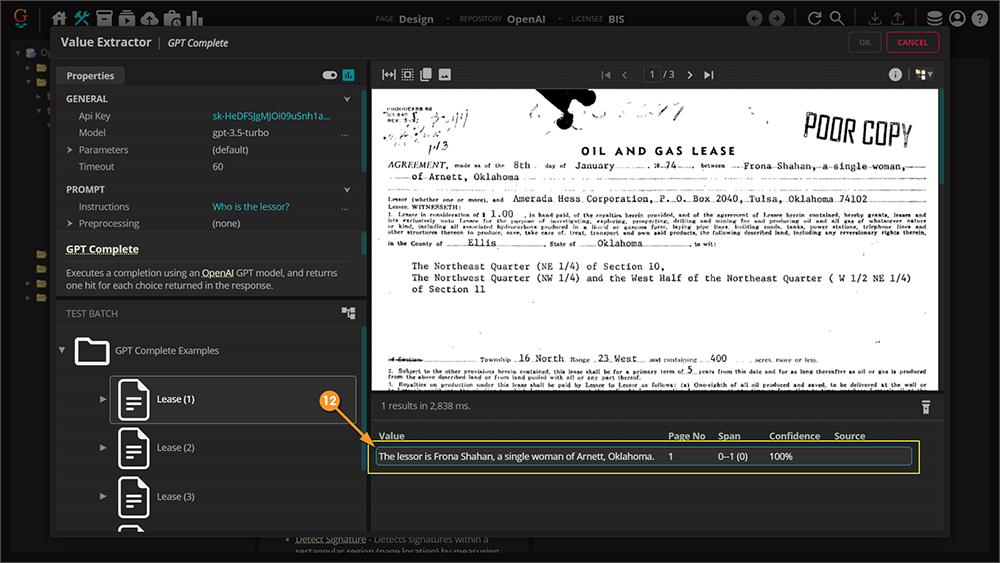

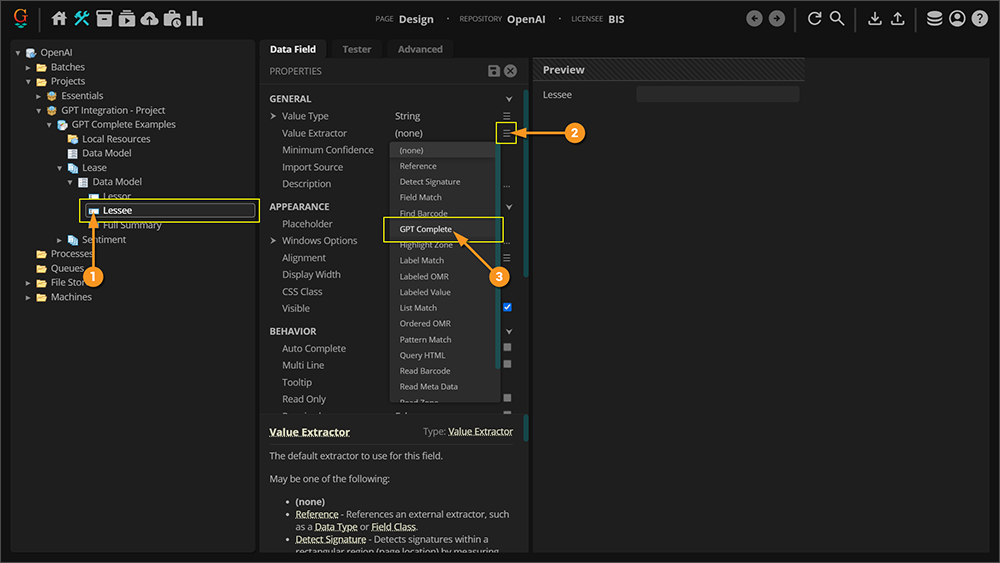

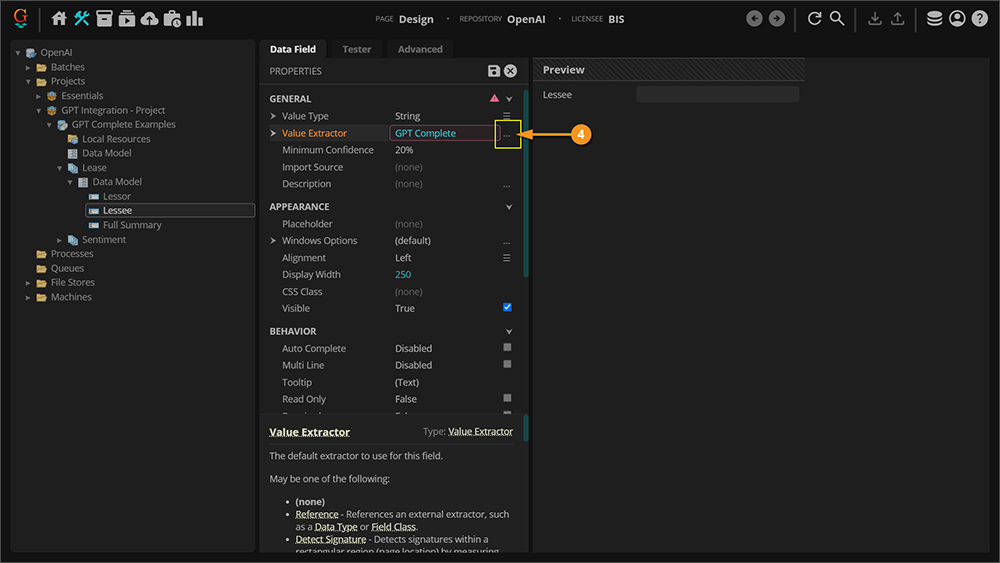

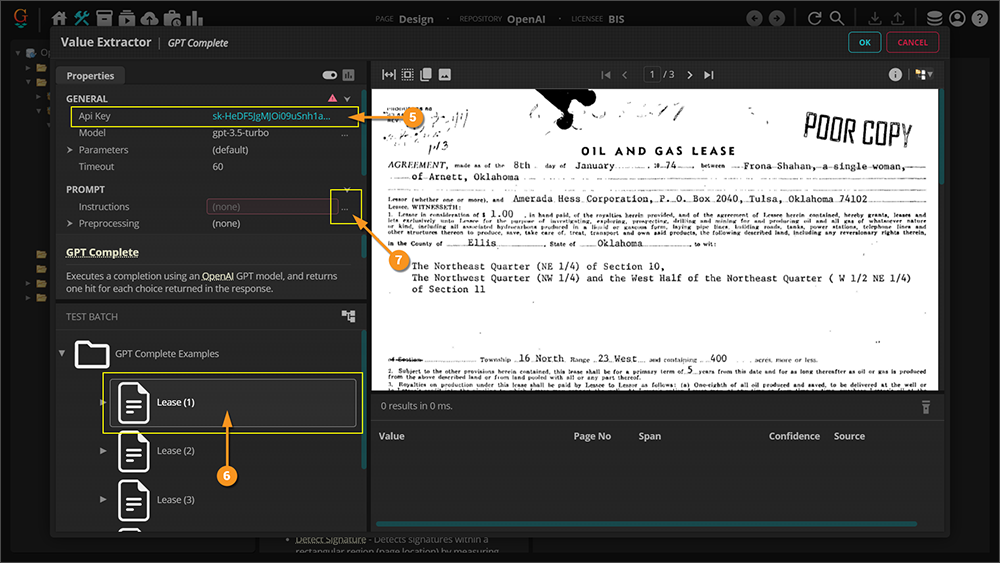

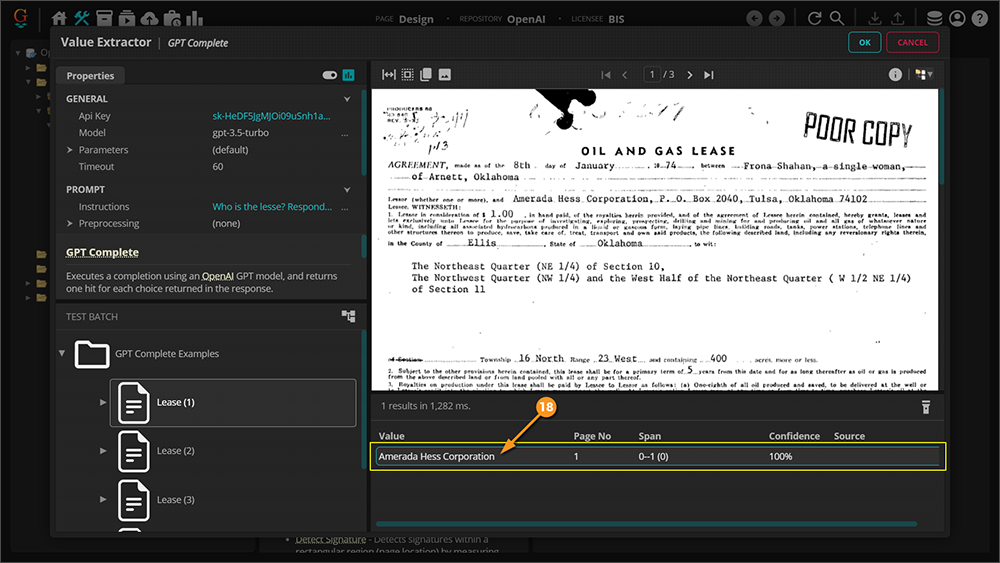

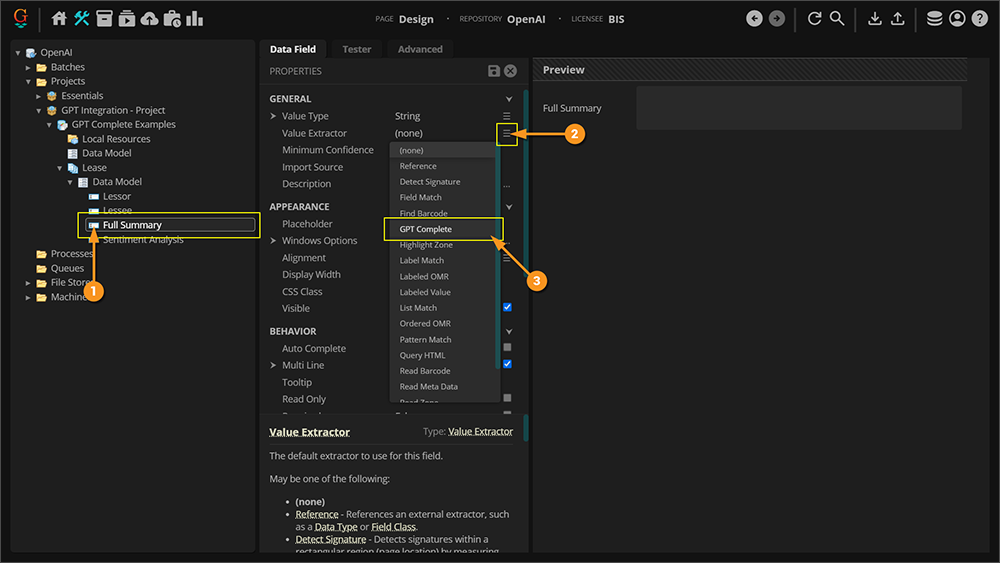

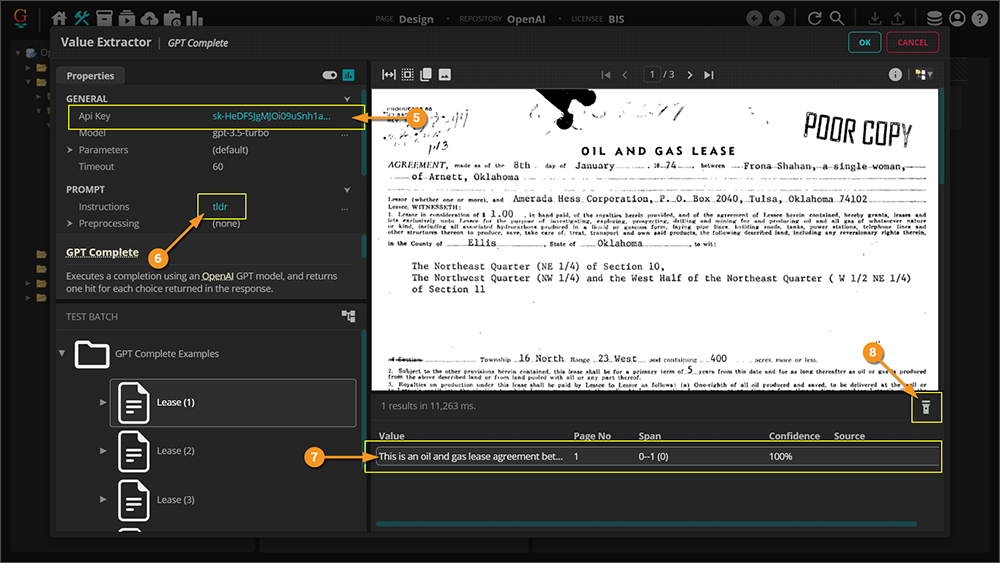

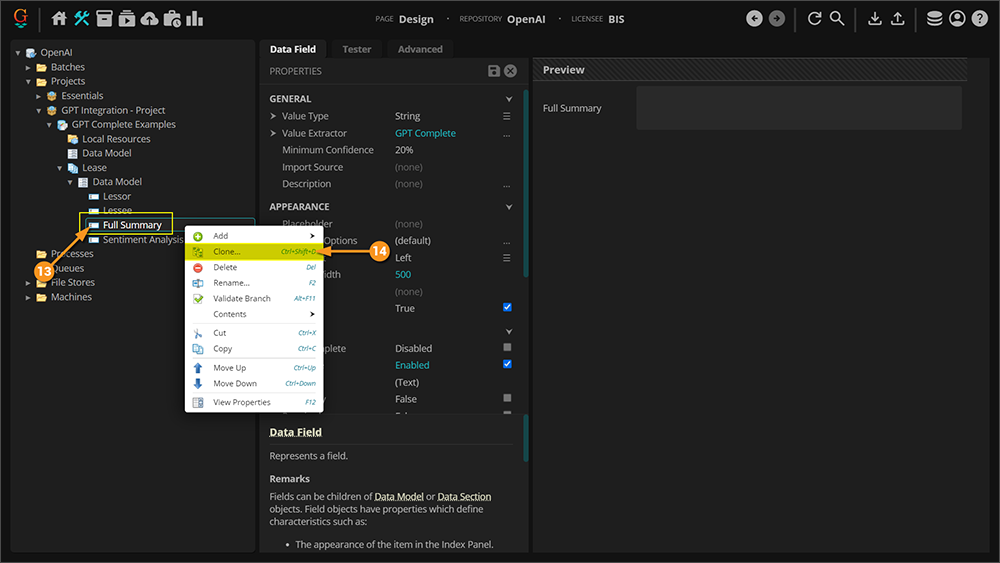

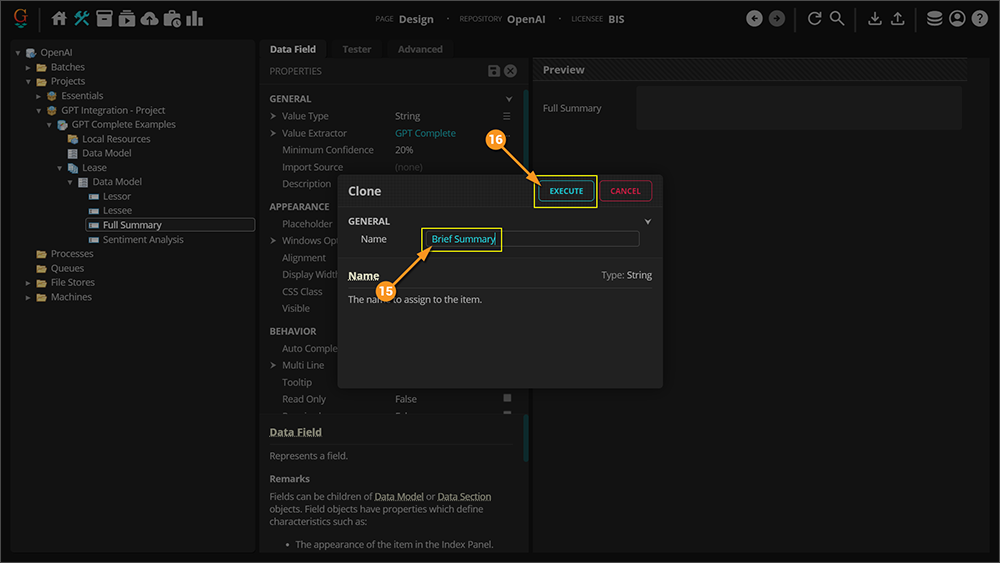

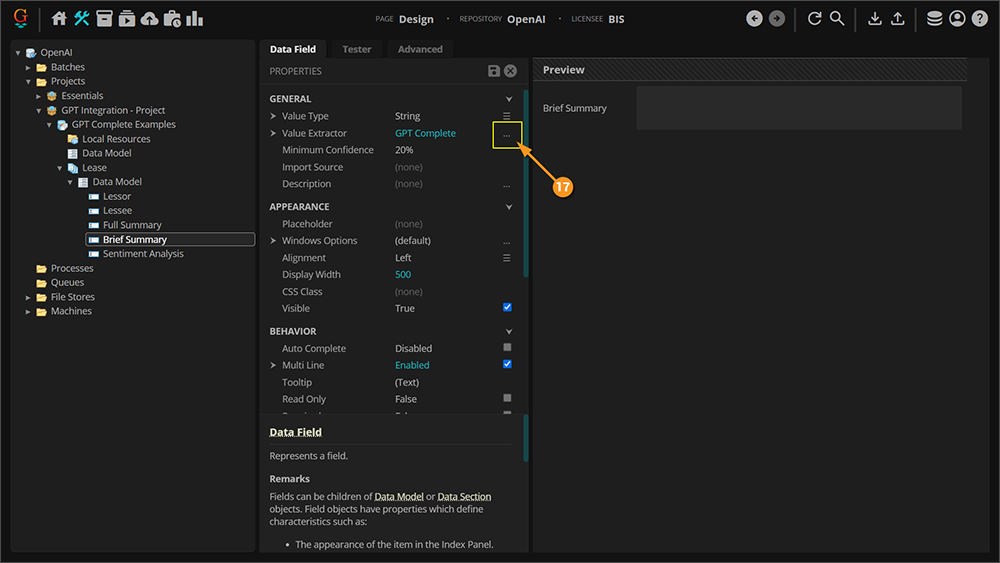

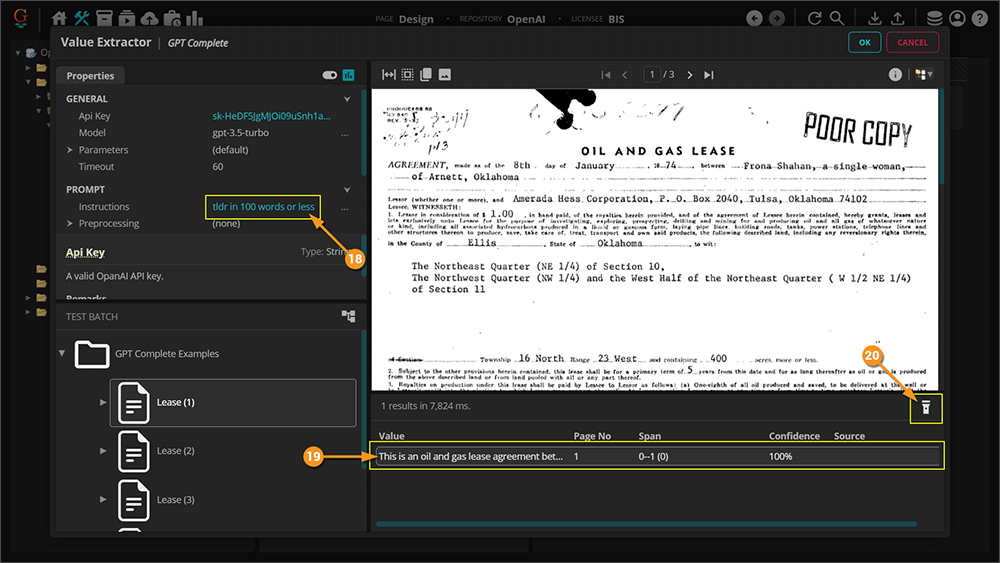

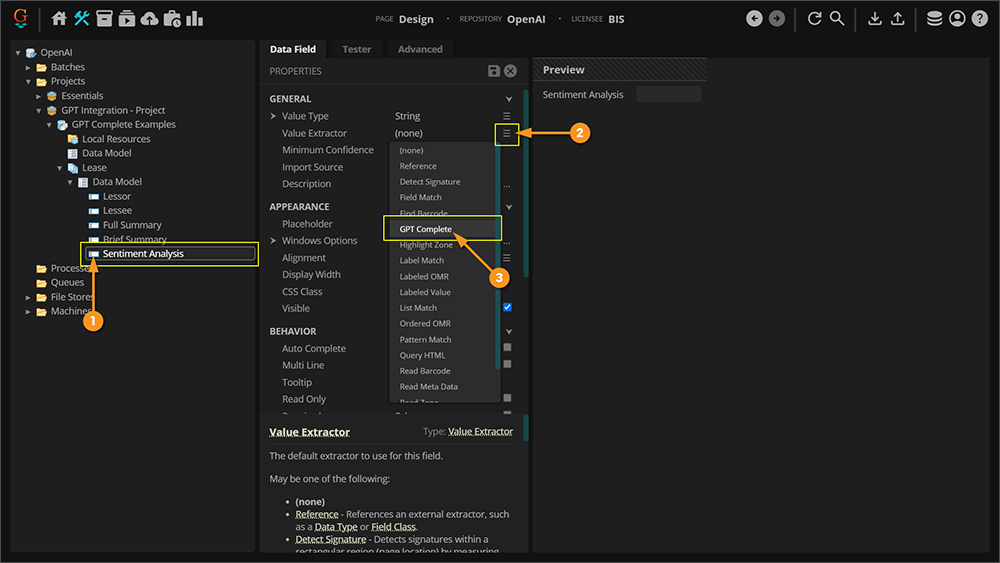

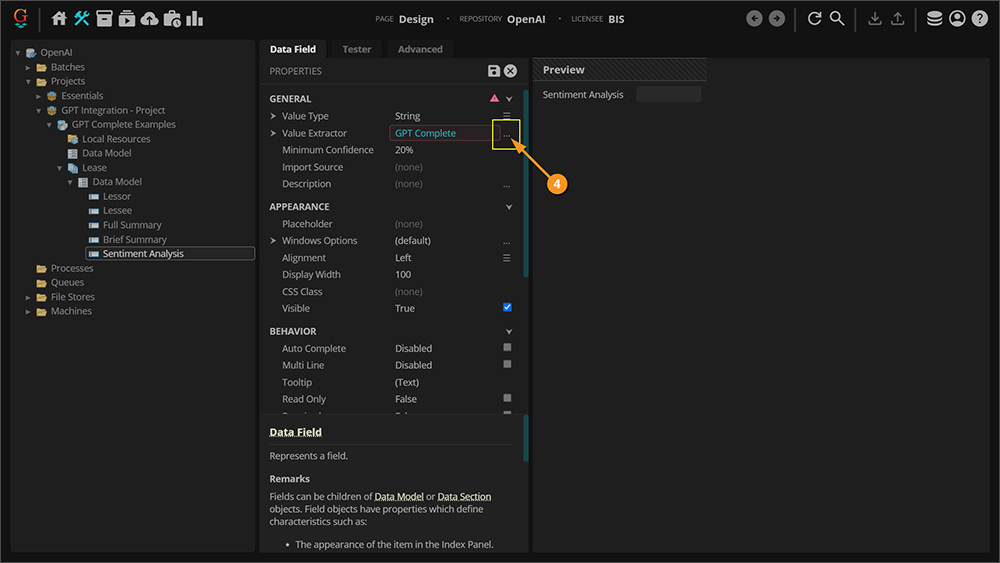

Extraction - GPT Complete

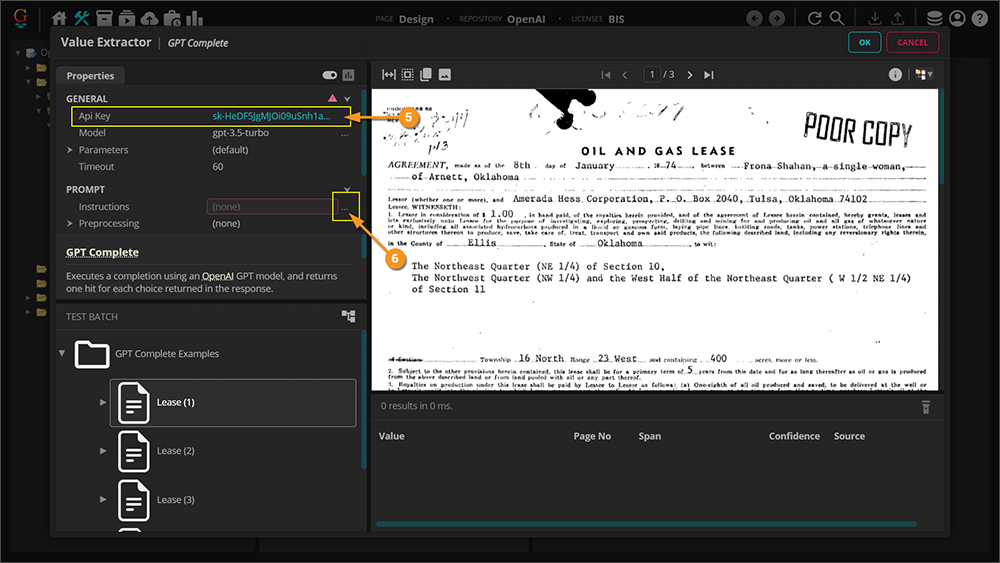

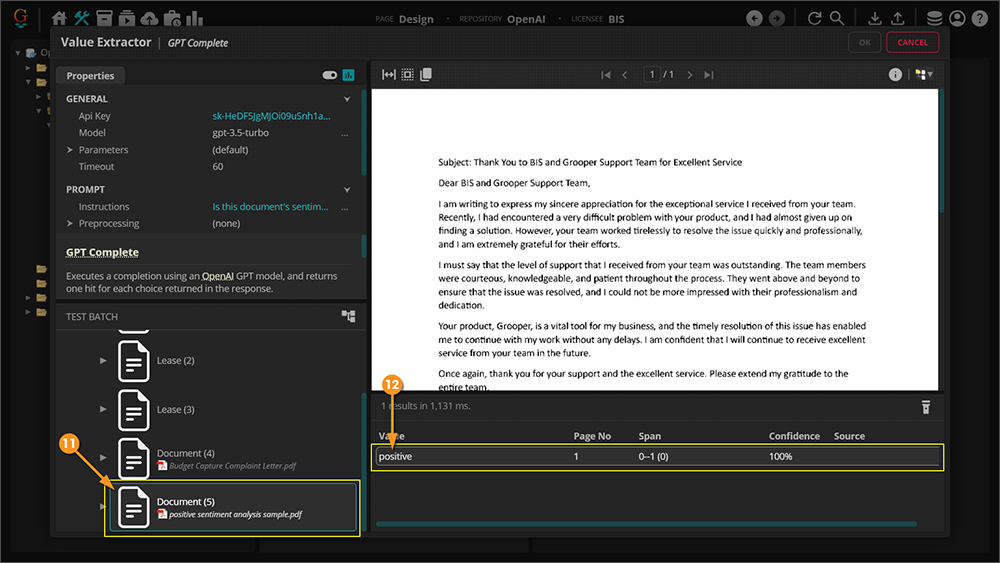

GPT Complete is a Value Extractor type that was added in Grooper 2023. It is the setting you choose to use GPT integration on an extractor. Below are some examples of configuration and use. You should be able to follow along using the GPT Integration zip files (a Batch and a Project) included with this article. Begin by following the instructions; the properties are explained in detail afterward.

It is also worth noting that the examples given below ARE NOT a comprehensive list. They are only a few examples of extraction prompts, meant to get you thinking about what can be done. It is highly recommended that you not only reference the materials linked above, but also spend time experimenting and testing. Good luck!


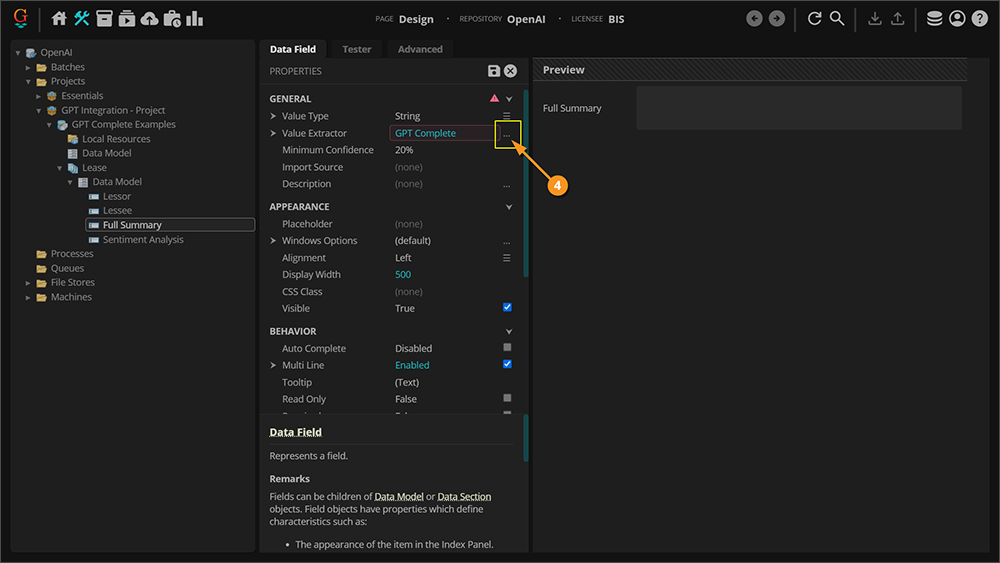

Value Extractor Properties

Before moving on to seeing how the GPT model is used for classification in Grooper let's take a look at the properties used in the GPT Complete Value Extractor.

API Key

You must fill this property with a valid API key from OpenAI in order to use GPT integration with Grooper. See the Obtain an API Key section above for instructions on how to get a key.

Model

The API key you use determines which GPT models are available to you. Models differ in size, training data, capabilities, and fine-tuning potential, all of which affect the text they generate. GPT-3's larger size and training data, in particular, can result in more sophisticated, diverse, and contextually appropriate text than GPT-2. However, the quality of the generated text also depends on other factors, such as prompt engineering, the input provided, and the requirements of your use case. GPT-4 is the latest version as of this writing and takes the GPT model even further.

Temperature

In the context of text generation with language models like GPT, the temperature parameter controls the randomness of the generated text. It is applied during sampling, when the model selects the next word or token based on its predicted probabilities.

When generating text, the language model assigns probabilities to different words or tokens based on their likelihood of occurring next in the context of the input text. The temperature parameter is used to scale these probabilities before sampling from them. A higher temperature value (e.g., 1.0) makes the probabilities more uniform and increases randomness, resulting in more varied and diverse text. On the other hand, a lower temperature value (e.g., 0.2) makes the probabilities more concentrated and biased towards the most likely word, resulting in more deterministic and focused text.

For example, with a higher temperature setting, the model may generate sentences like:

- "The weather is hot and sunny. I love to go swimming or hiking."

With a lower temperature setting, the model may generate sentences like:

- "The weather is hot. I love to go swimming."

The choice of temperature depends on the desired output. Higher values are useful when you want more creativity and diversity in the generated text, but they may lead to less coherent or nonsensical sentences. Lower values are useful when you want more deterministic and focused text, but they may result in repetitive or overly conservative output. Temperature is a hyperparameter that can be tuned to achieve the desired balance between randomness and coherence.
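The scaling described above is commonly implemented by dividing the model's raw scores (logits) by the temperature before applying a softmax. The sketch below shows that technique on four made-up scores; it is a generic illustration, not OpenAI's internal code, and the scores are hypothetical.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw model scores (logits) into probabilities, scaled by temperature.

    Dividing by a temperature below 1 sharpens the distribution toward the
    most likely token; a temperature above 1 flattens it. A temperature of 0
    is usually treated as "always pick the top token" and is not handled here.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [score / temperature for score in logits]
    largest = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - largest) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for four candidate next tokens
logits = [2.0, 1.0, 0.5, 0.1]
for t in (0.2, 1.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

At 0.2 nearly all of the probability lands on the first token, while at 1.0 the other candidates keep a meaningful share, which is the "focused versus varied" trade-off described above.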

TopP

TopP, also known as "nucleus sampling," is a text generation technique that limits which tokens the model may choose from, balancing diversity against coherence. It is often used as an alternative to traditional approaches such as plain random sampling or greedy decoding in language models such as GPT-2 and GPT-3.

In TopP sampling, instead of sampling from the entire probability distribution of possible next words or tokens, the model narrows the choices to a subset of the most likely options. The subset is determined dynamically by a probability threshold, denoted "p": the model keeps the most likely tokens until their cumulative probability mass reaches p, and prunes the remaining lower-probability tokens from the selection.

Mathematically, given a probability distribution over all possible words or tokens, TopP sampling works as follows:

- Sort the tokens by probability in descending order.

- Calculate the cumulative sum of the probabilities from highest to lowest.

- Stop as soon as the cumulative sum reaches the threshold "p". So 0.1 means only the tokens comprising the top 10% probability mass are considered.

- Renormalize the probabilities of the tokens kept so far and sample the next token from that set.
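The steps above can be sketched in a few lines of Python. The token list and probabilities are hypothetical; `top_p_filter` returns the kept tokens (the "nucleus") with renormalized probabilities, and `sample_top_p` draws one token from them. This is a generic illustration of nucleus sampling, not OpenAI's implementation.

```python
import random


def top_p_filter(probs, p):
    """Return the nucleus: the smallest set of token indices whose
    probabilities add up to at least p, with renormalized probabilities."""
    # Sort token indices by probability, highest first
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    # Accumulate probability mass until the threshold p is reached
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    # Renormalize the kept probabilities so they sum to 1
    total = sum(probs[i] for i in kept)
    return {i: probs[i] / total for i in kept}


def sample_top_p(probs, p, rng=random):
    """Sample one token index from the nucleus."""
    nucleus = top_p_filter(probs, p)
    tokens = list(nucleus)
    weights = [nucleus[i] for i in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


vocab = ["hot", "warm", "sunny", "purple"]  # hypothetical candidates
probs = [0.5, 0.3, 0.15, 0.05]
print(top_p_filter(probs, 0.1))  # only "hot" survives
print(top_p_filter(probs, 0.9))  # "purple" is pruned
print(vocab[sample_top_p(probs, 0.9, random.Random(0))])
```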

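Compared with greedy decoding, which always picks the single most likely token, TopP sampling produces more diverse text because it can select among several plausible tokens, and it introduces randomness into the selection process. This prevents the model from becoming overly deterministic or repetitive, while pruning the low-probability tail keeps it from choosing very unlikely tokens. Lower values of p make the output more focused; higher values allow more variety. OpenAI's documentation generally recommends adjusting either Temperature or TopP, but not both.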

Presence Penalty

The presence penalty is a setting that discourages the model from reusing words or tokens that have already appeared in the generated text. It aims to reduce redundancy and promote diversity in the output.

In the OpenAI API, the presence penalty is applied at generation time, not during training: every token that has already appeared at least once has its score reduced by a fixed amount before the next token is sampled. The size of the reduction does not depend on how many times the token has appeared; a single appearance is enough.

The presence penalty encourages the model to move on to new words and topics, avoid repetitive patterns, and use a wider vocabulary. It helps prevent redundant text, which can be undesirable in tasks such as story generation or summarization.

The magnitude of the presence penalty can be tuned to control how much repetition is allowed. OpenAI accepts values between -2.0 and 2.0: a higher value results in stricter avoidance of repetition, while a lower value allows more repetition (negative values actively encourage it). The presence penalty can be combined with other settings, such as Temperature and TopP, to improve the quality and diversity of the generated text.

Frequency Penalty

The frequency penalty is similar to the presence penalty, but it scales with how often a token has already appeared. Each time a token is repeated in the generated text, its score is reduced further, so frequently repeated words become progressively less likely. This decreases the model's likelihood of repeating the same line verbatim. Like the presence penalty, it is applied at generation time and accepts values between -2.0 and 2.0 in the OpenAI API.
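OpenAI's API documentation has described both penalties as one adjustment to the logit (raw score) of each candidate token j before sampling: `mu[j] - c[j] * alpha_frequency - (c[j] > 0) * alpha_presence`, where `c[j]` is how many times token j has already been generated. The sketch below applies that published formula to a few hypothetical tokens and scores; it is an illustration, not Grooper code.

```python
from collections import Counter


def apply_penalties(logits, generated_tokens, presence_penalty, frequency_penalty):
    """Adjust next-token scores for tokens that already appeared.

    logits: dict mapping candidate token -> raw score (mu[j])
    generated_tokens: tokens produced so far, used to count c[j]
    """
    counts = Counter(generated_tokens)
    adjusted = {}
    for token, score in logits.items():
        c = counts[token]  # 0 if the token has not appeared yet
        # Frequency penalty grows with every repeat; presence penalty is flat
        adjusted[token] = (score
                           - c * frequency_penalty
                           - (1.0 if c > 0 else 0.0) * presence_penalty)
    return adjusted


logits = {"swimming": 2.0, "hiking": 1.5, "reading": 1.0}  # hypothetical
history = ["swimming", "swimming", "hiking"]
print(apply_penalties(logits, history, presence_penalty=0.5, frequency_penalty=0.0))
print(apply_penalties(logits, history, presence_penalty=0.0, frequency_penalty=0.5))
```

With only the presence penalty, "swimming" and "hiking" each lose 0.5 regardless of how often they appeared; with only the frequency penalty, "swimming" loses twice as much as "hiking" because it appeared twice.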

Remaining Properties

The remaining properties are fairly straightforward and require less description than the previous ones.

- Timeout - The amount of time, in seconds, to wait for a response from the web service before raising a timeout error.

- Instructions - The instructions or question to include in the prompt. The prompt sent to OpenAI consists of text content from the document, which provides context, plus the text entered here. This property should ask a question about the content or provide instructions for generating output. For example, "what is the effective date?", "summarize this document", or "Your task is to generate a comma-separated list of assignors".

- Preprocessing (Paragraph Marking, Tab Marking, Vertical Tab Marking, Ignore Control Characters) - Put simply, these tools insert (or remove) control characters to give textual context to information that would otherwise be spatial. GPT has no awareness of where the text you feed it sits on the page. As a person, you can look at a table of information and understand it visually; GPT cannot. However, control characters such as tabs or paragraph marks carry some of that layout in the text itself, which increases the chance that GPT will interpret tables and similar structures correctly.

- Overflow Disposition - Specifies the behavior when the document content is longer than the context length of the selected model. May be one of the following:

  - Truncate - The content will be truncated to fit the model's context length.

  - Split - The content will be split into chunks which fit the model's context length. One result will be returned for each chunk.

- Context Extractor - An optional extractor which filters the document content included in the prompt. All Value Extractor types are available.

- Max Response Length - The maximum length of the output, in tokens. 1 token is equivalent to approximately 4 characters for English text. Increasing this value decreases the maximum size of the context.

- Maximum Content Length - The maximum amount of content from the document to be included, in tokens.
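To make Overflow Disposition and Max Response Length more concrete, the sketch below reserves room for the instructions and the response, truncates or splits the document text to fit what is left, and builds one request body per chunk. Assumptions: token counts use the rough 4-characters-per-token rule, the content is placed before the instructions in a single user message (the exact prompt layout Grooper sends is not documented here), and the request fields follow the public OpenAI Chat Completions API (`model`, `messages`, `max_tokens`, `temperature`, `top_p`, `presence_penalty`, `frequency_penalty`). All function names are hypothetical.

```python
import json
import math

# Rough English-text approximation; a real tokenizer gives exact counts.
CHARS_PER_TOKEN = 4


def estimate_tokens(text):
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def content_budget_tokens(context_length, max_response_length, instructions):
    """Tokens left for document content after reserving room for the
    instructions and the response. A larger max_response_length leaves
    less room for content."""
    return max(0, context_length - max_response_length - estimate_tokens(instructions))


def apply_overflow(text, budget_tokens, disposition):
    """Return the content chunks to send, one request per chunk."""
    max_chars = budget_tokens * CHARS_PER_TOKEN
    if max_chars <= 0:
        return []
    if disposition == "Truncate":
        # Keep only what fits; text after the cutoff is never seen by GPT.
        return [text[:max_chars]]
    if disposition == "Split":
        # Consecutive chunks that each fit; one result comes back per chunk.
        return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]
    raise ValueError(f"unknown overflow disposition: {disposition}")


def build_request(model, chunk, instructions, max_response_length,
                  temperature=0.0, top_p=1.0, presence_penalty=0.0, frequency_penalty=0.0):
    """Assemble a Chat Completions request body for one chunk
    (hypothetical layout: document content first, then the instructions)."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": f"{chunk}\n\n{instructions}"}],
        "max_tokens": max_response_length,
        "temperature": temperature,
        "top_p": top_p,
        "presence_penalty": presence_penalty,
        "frequency_penalty": frequency_penalty,
    }


# Usage with made-up numbers: a 4,096-token context and a 256-token response limit.
instructions = "What is the effective date?"
document_text = "This Agreement is effective as of January 1, 2023. " * 1000
budget = content_budget_tokens(4096, 256, instructions)
chunks = apply_overflow(document_text, budget, "Split")
bodies = [build_request("gpt-3.5-turbo", c, instructions, 256) for c in chunks]
print(f"{budget} tokens of content per request, {len(chunks)} request(s)")
print(json.dumps(bodies[0])[:200])
```

Splitting keeps every part of the document in play at the cost of one request (and one set of tokens) per chunk, while truncating costs a single request but silently drops everything past the cutoff.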

Classification - GPT Embeddings

GPT Embeddings should be considered a BETA feature.

- This feature was recently added by the development team without a specific use case in mind.

- Rather, it was developed in response to ChatGPT's growing popularity.

- While it should work in theory, with no specific use case originating the feature, it has not been extensively tested.

- As new use cases emerge that are suited for this feature, this section's documentation will be expanded.

The GPT Embeddings classification method is a training-based classification approach that uses "embeddings" to tell one document from another. An embedding is a vector (list) of numbers. You can determine how different two embeddings are from the distance between their vectors. A small distance between embeddings suggests they are highly related. A large distance between embeddings suggests they are less related.

When using GPT Embeddings to classify documents, you train the Content Model by giving Grooper example documents for each Document Type. The GPT model generates embeddings from the text content of each trained document, and these become the trained embeddings for its Document Type. When documents are classified (using the Classify activity), embeddings from the unclassified document are compared to the trained embeddings for each Document Type. Documents are then assigned the Document Type with the most similar embeddings.
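A minimal sketch of that comparison, assuming each Document Type is summarized by the average (centroid) of its trained documents' embeddings and that similarity is measured with cosine similarity, which OpenAI recommends for its embeddings. Whether Grooper averages the trained examples or compares against each one individually is an assumption here, and the Document Type names and three-dimensional vectors are toy values (real embeddings have far more dimensions).

```python
import math


def cosine_similarity(a, b):
    """1.0 means the vectors point the same way; cosine distance is 1 - similarity."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def centroid(vectors):
    """Element-wise average of several embeddings."""
    return [sum(values) / len(vectors) for values in zip(*vectors)]


def train(examples):
    """examples: Document Type name -> embeddings of its trained documents.
    Assumption: each type is summarized by the centroid of its examples."""
    return {doc_type: centroid(vectors) for doc_type, vectors in examples.items()}


def classify(embedding, trained):
    """Pick the Document Type with the most similar (least distant) embedding."""
    return max(trained, key=lambda doc_type: cosine_similarity(embedding, trained[doc_type]))


# Toy 3-dimensional embeddings for two hypothetical Document Types
trained = train({
    "Invoice": [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]],
    "Lease": [[0.1, 0.9, 0.2], [0.0, 0.8, 0.3]],
})
print(classify([0.85, 0.15, 0.05], trained))  # Invoice
```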

For more information on embeddings, visit the following OpenAI documentation:

⚠ Please be aware that embeddings have a maximum number of input tokens per request. This means longer documents are cut off at that point. How many input tokens are available depends on the GPT model you're using.

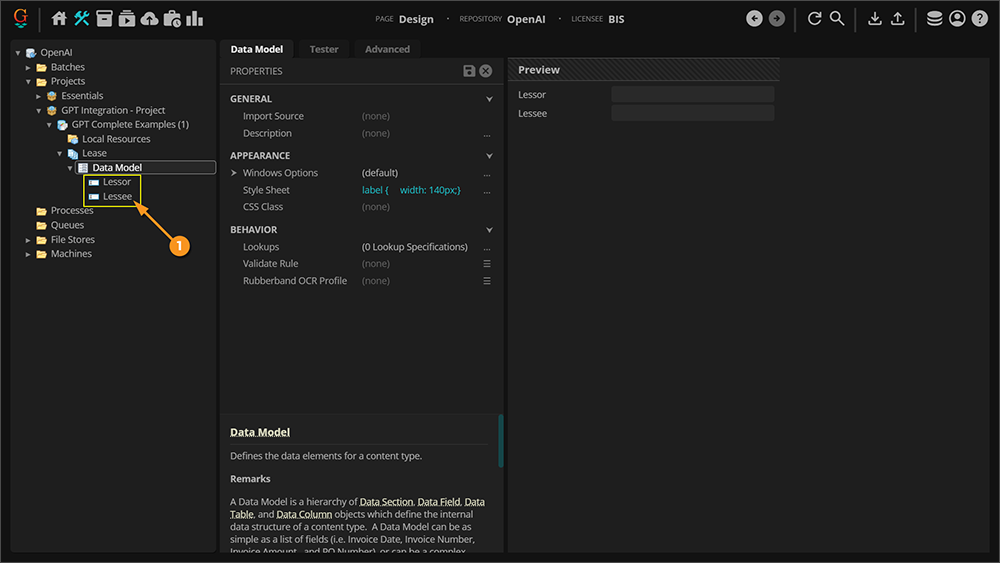

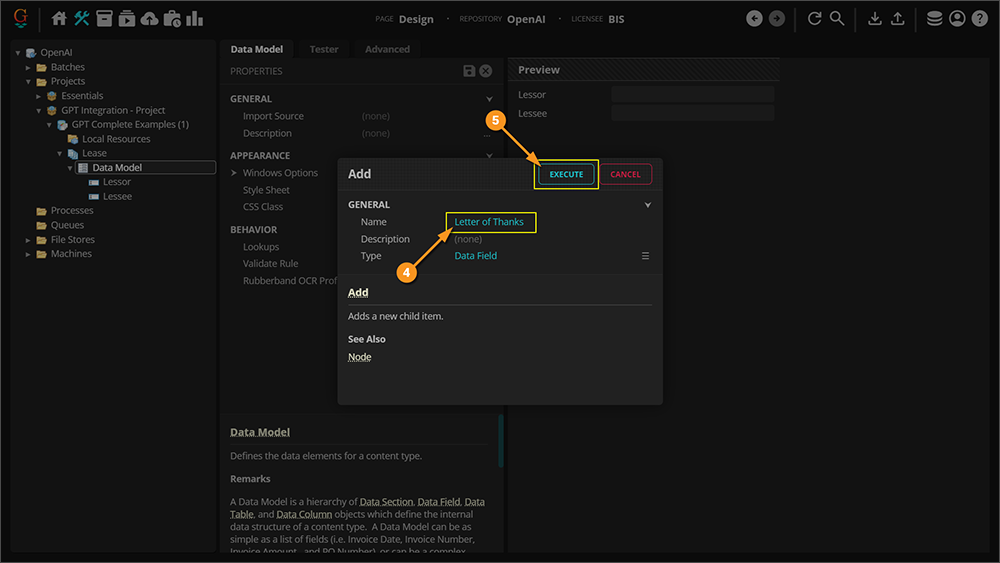

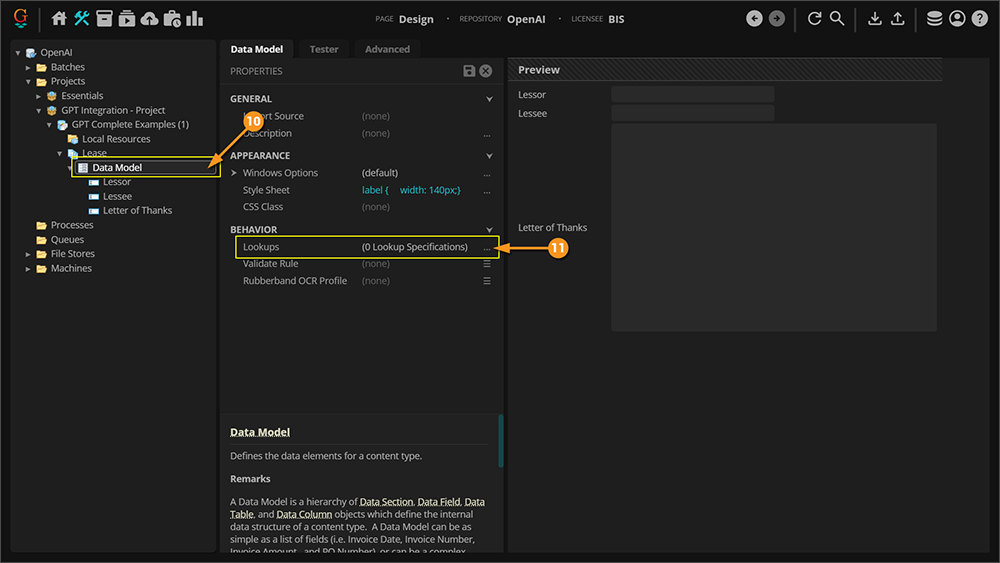

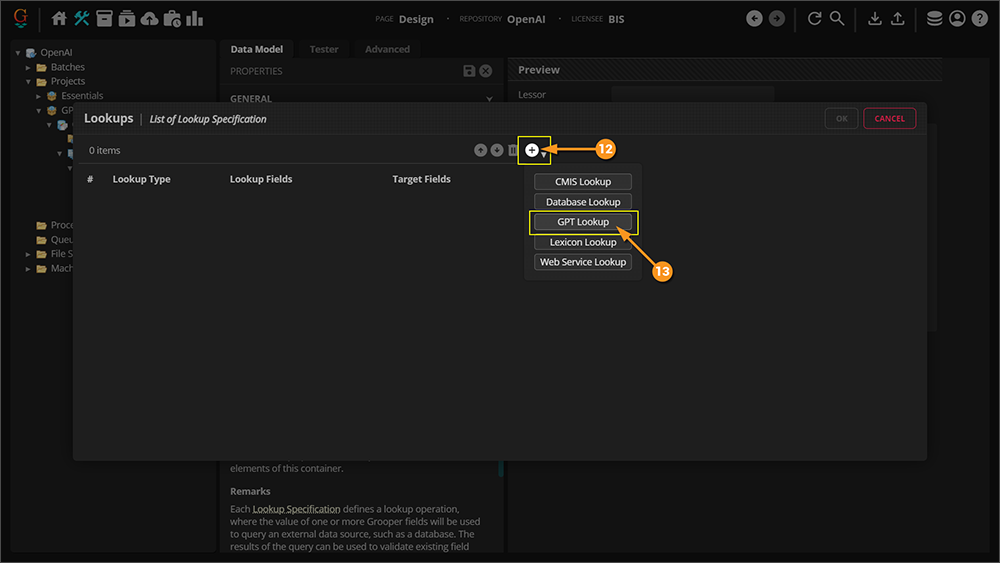

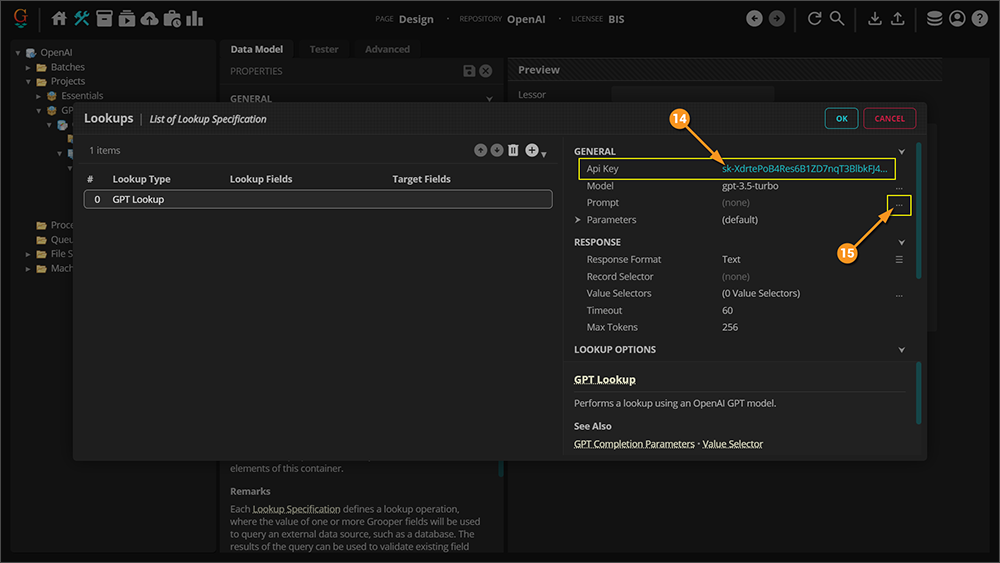

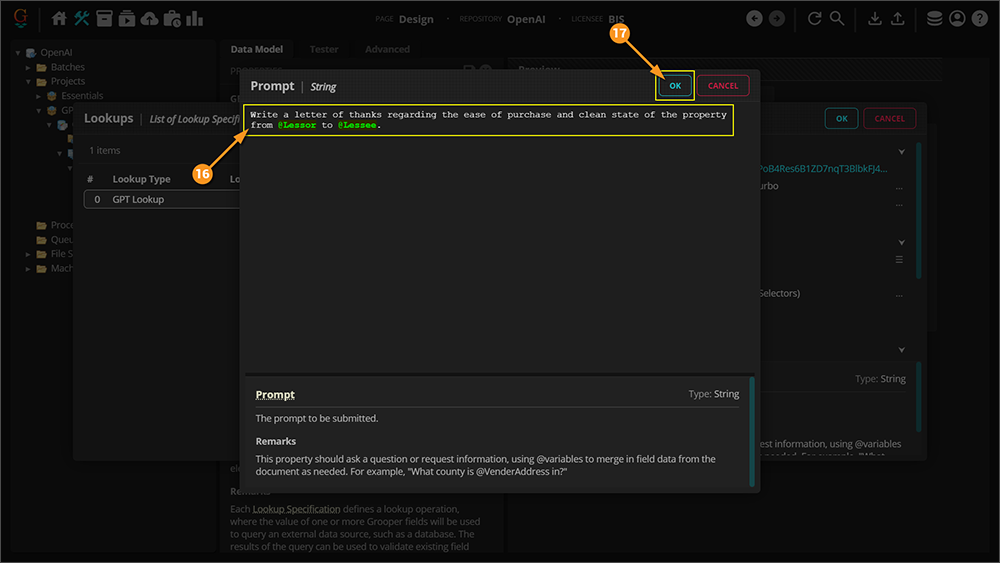

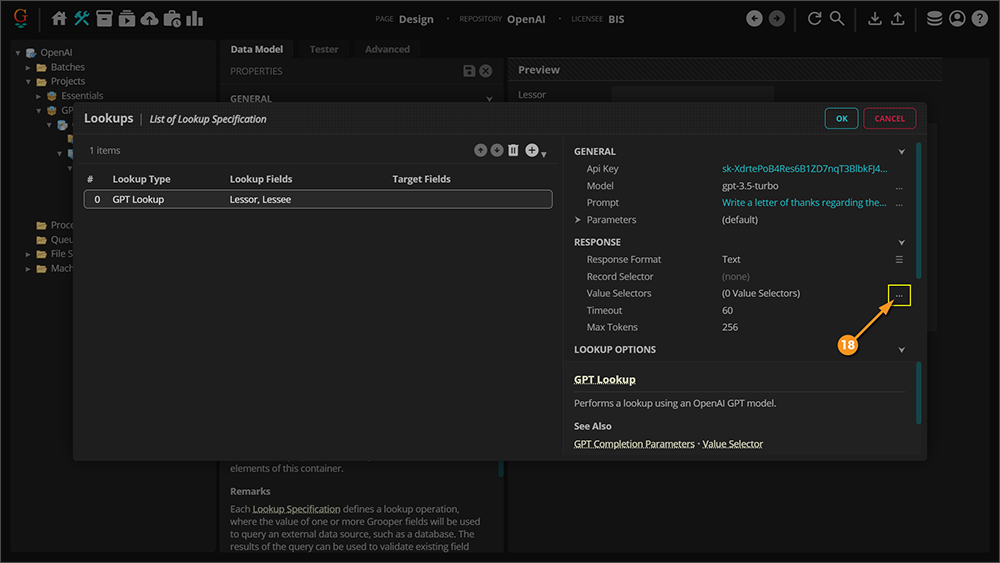

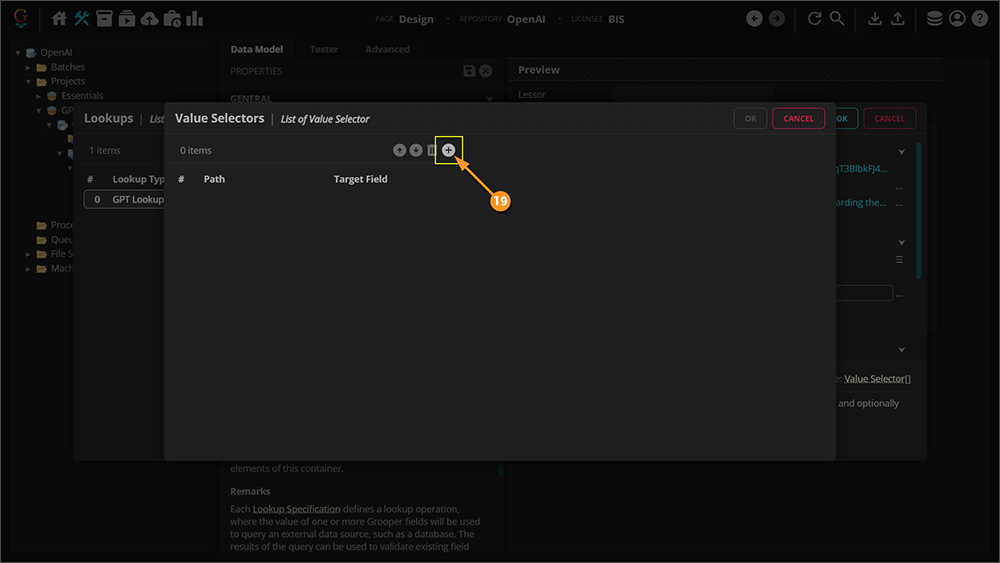

Lookup - GPT Lookup

Following is a simple example that demonstrates how to use the GPT Lookup functionality. As with everything else regarding GPT integration in Grooper 2023, this feature is fairly untested and needs more experimentation to reveal its full potential. If nothing else, this example is intended to give you a basic understanding of how to set up the lookup so you can try things out on your own.
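GPT Lookup fills @variables in the prompt with values collected in Grooper before the prompt is sent. The sketch below shows the general idea of that substitution with a regular expression. The `@Name` matching rules, the `fill_prompt` helper, the field names, and the example prompt are all hypothetical; consult the GPT Lookup property help for Grooper's exact behavior.

```python
import re


def fill_prompt(template, values):
    """Replace @Name placeholders with values from a dict (hypothetical rules:
    a placeholder is "@" followed by letters, digits, or underscores)."""
    def replace(match):
        name = match.group(1)
        if name not in values:
            raise KeyError(f"no value for @{name}")
        return str(values[name])
    return re.sub(r"@(\w+)", replace, template)


prompt = fill_prompt(
    "Return the county in which the city of @City, @State is located.",
    {"City": "Tulsa", "State": "Oklahoma"},
)
print(prompt)
```

Raising an error for a missing value, rather than sending the literal `@City` text to GPT, is a design choice of this sketch; it avoids paying for a request that cannot produce a meaningful answer.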

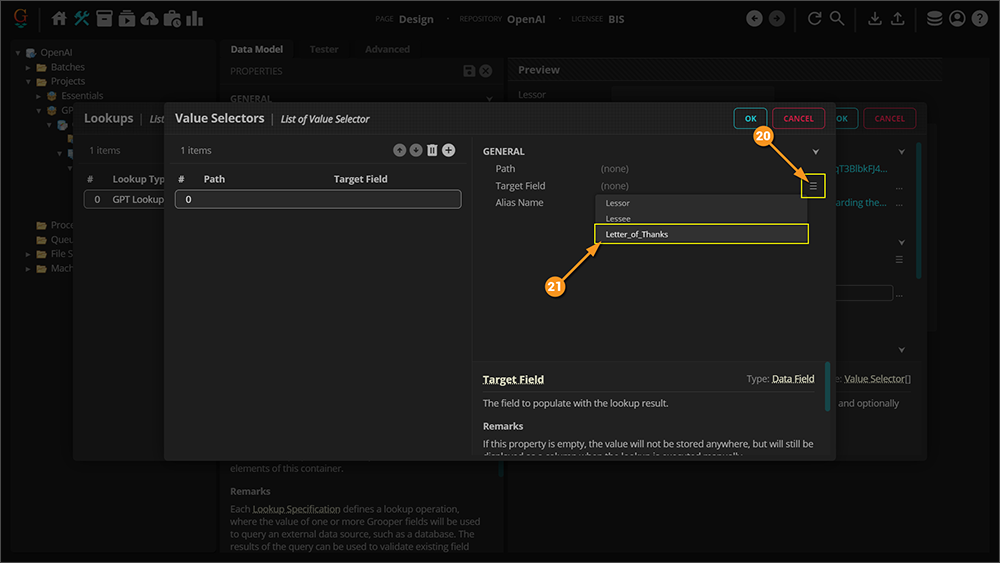

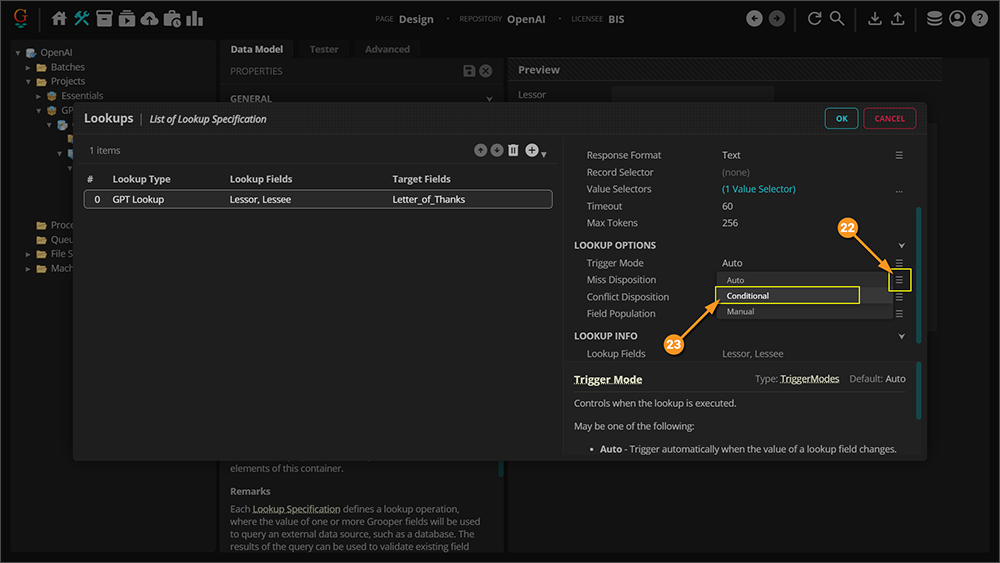

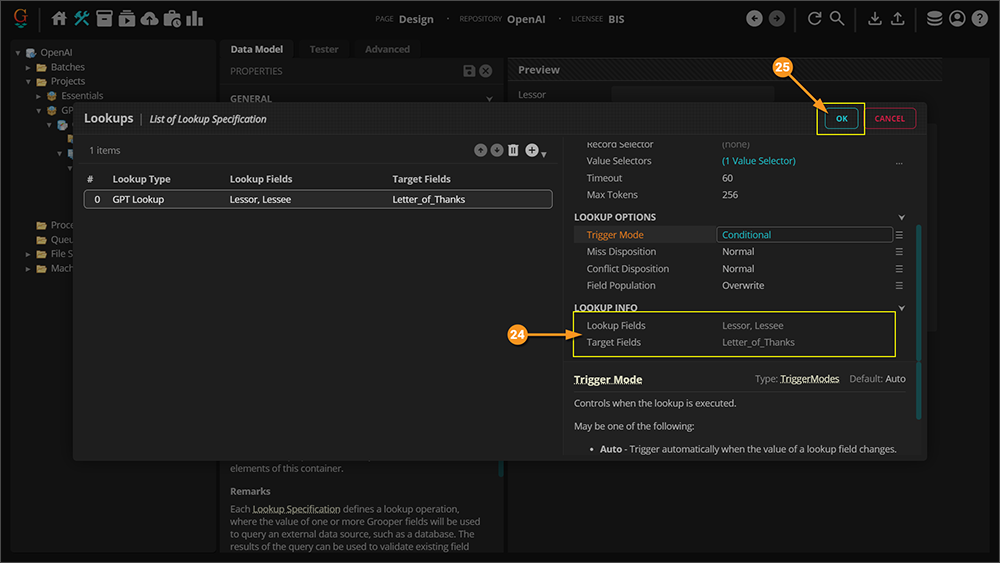

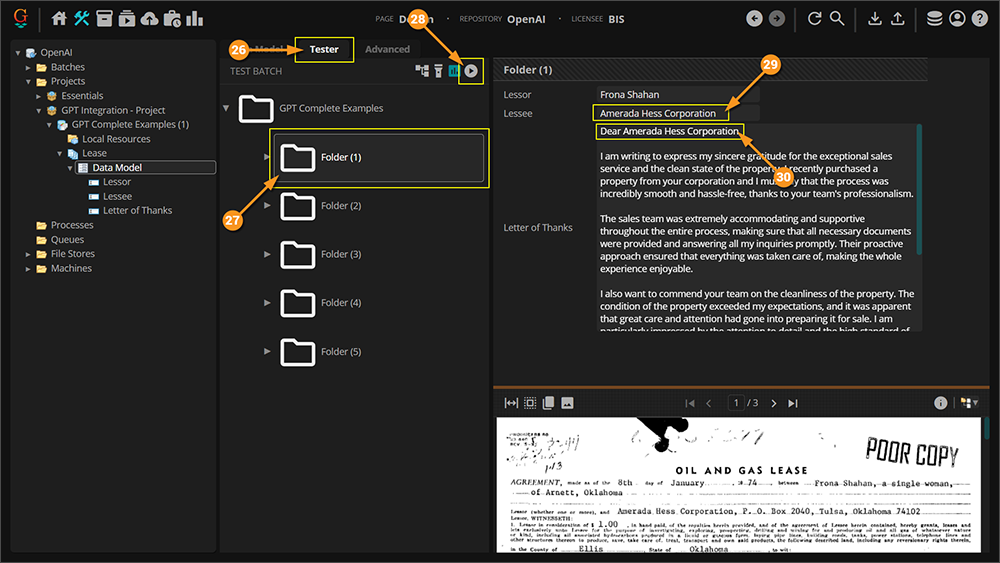


Lookup Properties

Following are brief descriptions of properties that are unique to GPT Lookup. Properties that overlap with those explained previously, or that are self-explanatory, are skipped.

Response Format

This specifies the format in which data will be exchanged with the web service. Can be one of the following values:

- Text - The response will be plain text. Record and value selectors should be specified using regular expressions.

- JSON - The response will be in JSON format. Record and value selectors should be specified using JSONPath syntax.

- XML - The request and response body will be in XML format. Record and value selectors should be specified using XPath syntax.

The format selected here will be used both for sending POST data and interpreting responses. It is currently not possible to send an XML request then interpret the response as JSON, or vice-versa.
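For the Text format, records and values are picked out of the plain-text response with regular expressions. The sketch below shows one way that can work: a record selector finds each record, then value selectors pull named values out of each record. The response text, the patterns, and the assumption that value selectors run within each matched record are all hypothetical illustrations, not Grooper's documented internals.

```python
import re

# Hypothetical plain-text response to a prompt such as
# "List each assignor and its state, one per line."
response = """Assignor: Acme Oil Co. | State: Texas
Assignor: Blue Mesa LLC | State: Oklahoma"""

# Record selector: one record per line that starts with "Assignor:"
record_selector = re.compile(r"^Assignor:.*$", re.MULTILINE)

# Value selectors: a capture group extracts each value from a record
value_selectors = {
    "Assignor": re.compile(r"Assignor:\s*(.+?)\s*\|"),
    "State": re.compile(r"State:\s*(.+)$"),
}

records = []
for record_match in record_selector.finditer(response):
    record_text = record_match.group(0)
    row = {}
    for name, selector in value_selectors.items():
        value_match = selector.search(record_text)
        row[name] = value_match.group(1) if value_match else None
    records.append(row)

for row in records:
    print(row)
```

Asking GPT in the prompt to answer in a fixed, line-oriented shape like this makes the regular expressions far easier to write than parsing free-form prose.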

Record Selector

This is a JSONPath or XPath expression which selects records in the response.

The record selector is used to specify which JSON or XML entities represent records in the result set.

- JSON Notes - In a JSON response, the Record Selector may be used as follows:

  - If the selector matches an array, one record will be generated for each element of the array.

  - If the selector matches one or more objects, one record will be generated for each object.

  - Leave the property empty to select an array or object at the root of the JSON document.

- XML Notes - In an XML response, the Record Selector may be used as follows:

  - One record will be generated for each XML element matched by the selector.

  - Leave the property empty to select a singleton record at the root of the XML.
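The record-generation rules above can be sketched with Python's standard library. For JSON, a hypothetical dotted-path helper stands in for JSONPath (only paths such as `$.results` are supported, not the full syntax); for XML, `ElementTree.findall` accepts a limited subset of XPath. The helper names and the sample responses are illustrative assumptions, not Grooper code.

```python
import json
import xml.etree.ElementTree as ET


def json_records(document, selector=""):
    """Records from a parsed JSON response.

    Hypothetical stand-in for JSONPath: only simple dotted paths such as
    "$.results" are supported. An empty selector uses the root.
    """
    node = document
    for key in [part for part in selector.lstrip("$").split(".") if part]:
        node = node[key]
    if isinstance(node, list):
        return list(node)  # one record per array element
    if isinstance(node, dict):
        return [node]  # one record for the matched object
    raise ValueError("selector must match an array or an object")


def xml_records(root, selector=""):
    """Records from a parsed XML response."""
    if not selector:
        return [root]  # empty selector: a singleton record at the root
    # ElementTree understands a limited subset of XPath.
    return root.findall(selector)  # one record per matched element


json_doc = json.loads('{"results": [{"county": "Tulsa"}, {"county": "Osage"}]}')
xml_root = ET.fromstring("<results><county>Tulsa</county><county>Osage</county></results>")

print(json_records(json_doc, "$.results"))
print(json_records(json_doc))
print([element.text for element in xml_records(xml_root, "./county")])
```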