What's New in Grooper 2024: Difference between revisions

Dgreenwood (talk | contribs) |

Revision as of 13:38, 27 August 2024

2025 BETA

This article covers new or changed functionality in the current or upcoming beta version of Grooper. Features are subject to change before version 2025's GA release. Configuration and functionality may differ from later beta builds and the final 2025 release.

Grooper version 2024 is GrooperAI!

FYI

We have overhauled our "Install and Setup" article for 2024. Information on installing version 2024 can be found in the article below.

Moving Grooper fully into the web

Deploying Grooper over a web server is a more distributable, more secure, and more modern experience. Version 2022 started Grooper's foray into web development with a web client for user-operated Review tasks. Versions 2023 and 2023.1 expanded the web client to incorporate all aspects of Grooper. Version 2024 fully cements our commitment to moving Grooper to a web-based application.

Thick client removal

In 2024, there is no longer a Grooper thick client (aka "Windows client"). There is only the Grooper web client. This opens Grooper up to several advantages for cloud-based app development and cloud-based deployments.

All thick client Grooper applications have an equivalent in the Grooper web client. Most of these are now pages you will navigate to from the web client. For those unfamiliar with the Grooper web client, refer to the table below for the web client equivalent versions of thick client apps in version 2024.

| Former thick client application | Current web client equivalent |
| --- | --- |
| Grooper Design Studio | The Design page |
| Grooper Dashboard | The Batches page |
| Grooper Attended Client | The Tasks page |
| Grooper Kiosk | The Stats page (displaying stats queries in a browser window) |
| Grooper Config | Grooper Command Console (GCC). See below for more information |
| Grooper Unattended Client | Activity Processing services can be hosted in-process using the |

Grooper Command Console

Grooper Command Console (or GCC) is a replacement for the thick client administrative application, Grooper Config. Previous functions performed by Grooper Config can be accomplished in Grooper Command Console. This includes:

- Connecting to Grooper Repositories

- Installing and managing Grooper Services

- Managing licensing for self hosted licensing installations

Grooper Command Console is a command line utility. All functions are performed using command line commands rather than a "point and click" user interface. Users of previous versions may find the change jarring at first, but the command line interface has several advantages:

- Most administrative functions are accomplished with a single command or a small number of commands. In Grooper Config, the same function would take several clicks. Once you are familiar with the GCC commands, Grooper Command Console ends up saving you time.

- Commands can be easily scripted. There was not an easy way to procedurally execute the functions of Grooper Config like creating a Grooper Repository or spinning up new Grooper services. GCC commands allow you to do this.

- Scaling services is much easier. In previous versions of Grooper, we have done proof-of-concept tests to ensure Grooper can scale in cloud deployments (such as using auto-scaling in Amazon AWS instances). However, in older Grooper versions scaling Activity Processing services was somewhat clunky. Using GCC commands to spin up services makes this process much simpler. Grooper Command Console also has specific commands to make scaling with Docker containers simpler.

For more information about Grooper Command Console, visit the Grooper Command Console article.

Improved web UI: New icons!

One of the first things Grooper Design page users will notice in version 2024 is that our icons have changed. Our old icons served us well over the last several years, but were starting to look a little outdated. Part of keeping Grooper a modern platform with modern features is keeping its look modern too. Furthermore, all our new icons are scalable with your browser. They will scale larger and smaller as you zoom in and out without losing fidelity.

See below for a list of new icons. Images of the old icons are included for reference.

Improved integrations with Large Language Models (LLMs)

Innovations in Large Language Models (or LLMs) have changed the landscape of artificial intelligence. Companies like OpenAI have developed LLM-based technologies, like ChatGPT, that are highly effective at natural language processing. Being fully committed to advancing our capabilities through new AI integrations, Grooper has vastly improved what we can do with LLM providers such as OpenAI.

Repository Options: LLM Connector

Repository Options are new to Grooper 2024. They add new functionality to the whole Grooper Repository. These optional features are added using the Options property editor on the Grooper Root node.

OpenAI and other LLM integrations are made by adding an LLM Connector to the list of Repository Options. The LLM Connector provides connectivity to LLMs like OpenAI's GPT models. This allows access to Grooper features that leverage LLM chatbots (discussed in further detail below).

Currently there are two LLM provider types:

- OpenAI - Connects Grooper to the OpenAI API or an OpenAI-compatible clone (used for hosting GPT models on local servers)

- Azure - Connects Grooper to individual chat completion or embeddings endpoints available in Microsoft Azure
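As a rough illustration of what "OpenAI-compatible" means, the sketch below builds (but does not send) a chat completion request in the shape of OpenAI's public REST API. Only the base URL changes between OpenAI itself and a locally hosted compatible server. The local URL, the placeholder key, the local model name, and the helper function are all hypothetical, and this is not a description of how Grooper's LLM Connector is implemented.

```python
import json

OPENAI_BASE_URL = "https://api.openai.com/v1"
# Hypothetical address of a locally hosted, OpenAI-compatible server.
LOCAL_BASE_URL = "http://localhost:8000/v1"


def build_chat_request(base_url, api_key, model, user_message):
    """Return the URL, headers, and JSON body for a chat completion call."""
    url = base_url.rstrip("/") + "/chat/completions"
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    body = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return url, headers, json.dumps(body)


# Same request shape, different base URL.
openai_request = build_chat_request(OPENAI_BASE_URL, "sk-placeholder", "gpt-4o", "Hello")
local_request = build_chat_request(LOCAL_BASE_URL, "unused", "local-model", "Hello")
```

Nothing is sent over the network here. The point is only that both provider targets accept the same request body.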

New and improved LLM-based extraction techniques

First and foremost, in 2024 you will see new and improved ways to extract data from your documents using LLMs. Because LLMs are so good at processing natural language, setup for these new extraction techniques is done in a fraction of the time of traditional extractors in Grooper.

New in 2024 you will find:

- AI Extract: A "Fill Method" designed to extract a full Data Model with little configuration necessary.

- Clause Detection: A new Data Section extract method designed to find clauses of a certain type in a contract.

- Ask AI: This extractor type replaces the deprecated "GPT Complete" extractor, with new functionality that allows table extraction using responses from LLMs.

AI Extract

AI Extract introduces the concept of a Fill Method in Grooper. Fill Methods are configured on "container elements", like Data Models, Data Sections and Data Tables. The Fill Method runs after data extraction. It will fill in the Data Model using whatever method is configured (Fill Methods can be configured to overwrite initial extraction results or only supplement them).

AI Extract is the first Fill Method in Grooper. It uses chat responses from Large Language Models (like OpenAI's GPT models), to fill in a Data Model. We have designed this fill method to be as simple as possible to get data back from a chat response and into fields in the Data Model. In many cases, all you need to do is add Data Elements to a Data Model to return results.

AI Extract uses the Data Elements' names, data types (string, datetime, etc.) and (optionally) descriptions to craft a prompt sent to an LLM chatbot. Then, it parses out the response, populating fields, sections and even table cells. As long as the Data Elements' names are descriptive ("Invoice Number" for an invoice number located on an invoice), that's all you need to locate the value in many cases. With no further configuration necessary, this is the fastest to deploy method of extracting data in Grooper to date.
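To make the idea concrete, here is a minimal sketch of building a prompt from element names, data types, and optional descriptions, then mapping a JSON reply back onto those names. Grooper's actual prompt wording and response parsing are not documented in this article, so the `DataElement` class, both functions, and the sample response are hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass
class DataElement:
    """Hypothetical stand-in for a Data Field in a Data Model."""
    name: str
    data_type: str
    description: str = ""


def build_prompt(elements, document_text):
    """Describe each element by name, type, and optional description."""
    lines = ["Extract the following fields and reply with a JSON object:"]
    for element in elements:
        line = f"- {element.name} ({element.data_type})"
        if element.description:
            line += f": {element.description}"
        lines.append(line)
    lines.append("Document text:")
    lines.append(document_text)
    return "\n".join(lines)


def fill_fields(elements, chat_response):
    """Map a JSON chat response onto the element names; missing keys become None."""
    values = json.loads(chat_response)
    return {element.name: values.get(element.name) for element in elements}


elements = [
    DataElement("Invoice Number", "string"),
    DataElement("Invoice Date", "datetime", "The date the invoice was issued"),
]
prompt = build_prompt(elements, "Invoice No. 8841 issued 2024-08-27")
# A made-up response standing in for what an LLM might return.
filled = fill_fields(elements, '{"Invoice Number": "8841", "Invoice Date": "2024-08-27"}')
```

The sketch shows why descriptive element names matter: the name is most of what the model is told about each field.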

Clause Detection

Detecting contract clauses of a certain type has always been doable in Grooper using Field Classes. However, training the Field Class is a laborious and tedious process. This can be particularly taxing when attempting to locate several different clauses throughout a contract.

Large Language Models make this process so much simpler. LLMs are well suited to find examples of clauses in contracts. Natural language processing, after all, is their bread and butter. Clause Detection is a new Data Section extract method that uses chat responses to locate clauses in a contract. All you have to do is provide one or more written examples of the clause and Clause Detection does the rest. It parses the clause's location from the chatbot's response, which then forms the Data Section's data instance. This can be used to return the full text of a clause, extract information in the clause to Data Fields or both.

Ask AI

Ask AI is a new Grooper Extractor Type in Grooper 2024. It was created as a replacement for the "GPT Complete" extractor, which uses a deprecated method to call OpenAI GPT models. Ask AI works much like GPT Complete. It is an extractor configured with a prompt sent to an LLM chatbot and returns the chatbot's response.

Ask AI is more robust than its predecessor in that:

- Ask AI has access to more LLM models, including those accessed via the OpenAI API, privately hosted GPT clones, and compatible LLMs from Azure's model catalog.

- Ask AI can more easily parse JSON responses.

- Ask AI has a mechanism to decompose chat responses into extraction instances (or "sub-elements"). This means Ask AI can potentially be used for a Row Match Data Table extractor.
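The decomposition idea can be sketched as follows, assuming the chat response is a JSON array with one object per table row. The function name, column names, and sample response are hypothetical. Grooper's own mechanism for producing sub-elements is not described here.

```python
import json


def decompose_rows(chat_response, columns):
    """Turn a JSON array response into one instance per row, keeping only known columns."""
    rows = json.loads(chat_response)
    if not isinstance(rows, list):
        raise ValueError("expected a JSON array of row objects")
    return [{column: row.get(column) for column in columns} for row in rows]


# A made-up chat response listing two table rows.
response = '[{"Item": "Widget", "Qty": 2, "Note": "n/a"}, {"Item": "Gear", "Qty": 5}]'
instances = decompose_rows(response, ["Item", "Qty"])
```

Each dictionary in `instances` plays the role of one row instance whose keys line up with table columns.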

Chat with your documents

Publicly accessible LLM chatbots like ChatGPT are always limited by what content they were trained on. The documents you're processing are probably not part of their training set. If they were, the LLM would be able to process them more effectively. You could even "chat" with your documents. You could ask more specific questions and get more accurate responses.

Now you can do just that! Using OpenAI's Assistants API, we've created a mechanism to quickly generate custom AI chatbot assistants in Grooper that can answer questions directly about one or more selected documents.

Build AI assistants with Grooper AI Analysts

AI Analysts are a new node type in Grooper that facilitate chatting with a document set. Creating an AI Analyst requires an OpenAI API account. AI Analysts create task-specific OpenAI "assistants" that answer questions based on a "knowledge base" of supplied information. Selecting one or more documents, users can chat with the assistant in Grooper about the documents. The text data from these documents form the assistant's knowledge base.

Using this mechanism, users can have a conversation with a single document or a Batch with hundreds of documents. Each conversation is logged as a "Chat Session" and stored as a child of the AI Analyst. These Chat Sessions can be accessed again (either in the Design Page's Node Tree or the Chat Page), allowing users to continue previous conversations.

The process of creating an AI Analyst and starting a Chat Session is fairly straightforward:

- Add an LLM Connector to the Grooper Repository Options.

- Create an AI Analyst.

- Select the documents you want to chat with. This can be done in multiple ways.

  - From a Batch Viewer or Folder Viewer.

  - From a Search Page query (more on the Search Page below).

  - From the Chat Viewer in Review.

- Start a Chat Session. This can also be done in multiple ways.

  - Using the Discuss command.

  - Using the AI Dialogue activity. This is a way of automating chat questions.

  - Using the Chat Viewer in Review.

Chat in Review

The Chat View is a new Review View that can be added to a Review step in a Batch Process. This allows human operators a mechanism to chat with a document during Review. The Chat View facilitates a chat with an AI Analyst. Users may select one document or multiple documents and enter questions into the chat console. The human reviewer can ask questions to better understand the document or help locate information to complete their review.

Furthermore, if there are "stock questions" any Review user should be asking, the new AI Dialogue activity can automate a scripted set of questions with an AI Analyst. AI Dialogue starts a Chat Session for each document. Any "Predefined Messages" configured for the AI Analyst will be asked by the AI Dialogue activity in an automated Chat Session. The responses for the Chat Session are then saved to each Batch Folder. The answers to these questions can be then reviewed by a user during Review with a Chat View. This also allows users to continue the conversation with Predefined Messages getting the conversation started.

Chat Page

The Chat Page is a brand new UI page that allows users to continue previous Chat Sessions. Chat Sessions are archived as children of an AI Analyst. Each Chat Session is organized into subfolders by user name. The Chat Page allows users to access their previous Chat Sessions stored in these folders. Furthermore, since Chat Sessions are archived by user name, users will only have access to Chat Sessions created under their own user name.

AI Search: Document search and retrieval

Traditionally, Grooper has been solely a document processing platform. The process has always been (1) get documents into Grooper, (2) condition them for processing, (3) get the data you want from them, and (4) get the documents and data out of Grooper, then delete those Batches as soon as they are gone. Grooper was never designed to be a document repository. It was never designed to hold documents and data long-term. All that is changing starting in version 2024!

One of our big roadmap goals for Grooper is to evolve its content management capabilities. Our goal is to facilitate users who do want to keep documents in Grooper long term. There will be several advantages to keeping documents in Grooper long term:

- You only need one system to manage your documents. No need to export content to a separate content management system.

- Grooper's hierarchical data modeling allows the documents' full extracted data to be stored in Grooper, including more complex multi-instance data structures like table rows.

- If you need to reprocess a document, you don't have to bring it back into Grooper. It's already present and conditioned for further processing.

In version 2024, we take our first step into realizing Grooper's document repository potential with AI Search. Any content management system worth its salt must have a document (and data) retrieval mechanism. AI Search uses Microsoft Azure's AI Search API to index documents and their data. Indexed documents can be retrieved by searching for them using the new Search Page. This page can be used for something as simple as full text searching or more advanced queries that return documents based on extracted values in their Data Model.

Basic AI Search Setup

Before you can start using the Search page to search for documents, there's some basic setup you need to perform. Some of these steps are performed outside of Grooper. Most are performed inside of Grooper.

Outside of Grooper

- Create an AI Search service in Azure.

  - The following article from Microsoft instructs users how to create a Search Service:

  - Microsoft's full AI Search documentation is found here:

- You will need the Azure AI Search service's "URL" and either "Primary admin key" or "Secondary admin key" for the next step. These values can be found by accessing the Azure Search service from the Azure portal (portal.azure.com).

Inside of Grooper

- Add AI Search to the Grooper Root node's Repository Options. Enter the URL and admin key for the Azure AI Search service (copied from Azure).

- Add an Indexing Behavior on a Content Model.

  - Documents must be classified in Grooper before they can be indexed. Only Document Types/Content Types inheriting an Indexing Behavior are eligible for indexing.

- Create the search index. To do this, right-click the Content Model and select "Search > Create Search Index".

  - This creates the search index in Azure. Without the search index created, documents can't be added to an index. This only needs to be done once per index.

- Submit an "Indexing Job" to index any documents classified using the Content Model currently in the Grooper Repository. To do this, right-click the Content Model and select "Search > Submit Indexing Job".

  - BE AWARE: An Activity Processing service must be running to execute the Indexing Job.

  - This is one of many ways to index documents using AI Search. For a full list (including ways to automate document indexing) see below.

Repository Options: AI Search

Repository Options are new to Grooper 2024. They add new functionality to the whole Grooper Repository. These optional features are added using the Options property editor on the Grooper Root node.

To search documents in Grooper, we use Azure's AI Search service. In order to connect to an Azure AI Search service, the AI Search option must be added to the list of Repository Options in Grooper. Here, users will enter the Azure AI Search URL endpoint where calls are issued and an admin's API key. Both of these can be obtained from the Microsoft Azure portal once you have added an Azure AI Search resource.

With AI Search added to your Grooper Repository, you will be able to add an Indexing Behavior to one or more Content Types, create a search index, index documents and search them using the Search Page.

Indexing documents for search

Before documents can be searched, they must be indexed. The search index holds the content you want to search. This includes each document's full OCR or native text obtained from the Recognize activity and can optionally include Data Model results collected from the Extract activity. We use the Azure AI Search Service to create search indexes according to an Indexing Behavior defined for Content Types in Grooper. Documents are made searchable by adding them to a search index. Once indexed, you can search for documents using Grooper's Search page.

The Indexing Behavior: Defines the search index

Before indexing documents, you must add an Indexing Behavior to the Content Types you want to index. Most typically, this will be done on a Content Model. All child Document Types will inherit the Indexing Behavior and its configuration (More complicated Content Models may require Indexing Behaviors configured on multiple Content Types).

The Indexing Behavior defines:

- The index's name in Azure.

- Which documents are added to the index.

  - Only documents that are classified as the Indexing Behavior's Content Type OR any of its child Content Types will be indexed.

  - In other words, when set on a Content Model, only documents classified as one of its Document Types will be indexed.

- What fields are added to the search index (including which Data Elements from a Data Model are included, if any).

- Any options for the search index in the Grooper Search page (including access restrictions to the search index).
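The eligibility rule for indexing (a document's Content Type must be the Indexing Behavior's Content Type or one of its descendants) can be sketched as a walk up a parent chain. The hierarchy, type names, and function below are hypothetical and only illustrate the inheritance check, not Grooper's internal logic.

```python
# Hypothetical Content Type hierarchy: child name -> parent name (None at the top).
PARENTS = {
    "Invoices Model": None,
    "Vendor Invoice": "Invoices Model",
    "Credit Memo": "Invoices Model",
    "HR Model": None,
    "Resume": "HR Model",
}


def is_eligible(content_type, behavior_type, parents=PARENTS):
    """True if content_type is behavior_type itself or one of its descendants."""
    current = content_type
    while current is not None:
        if current == behavior_type:
            return True
        current = parents.get(current)
    return False
```

An unclassified document (a `content_type` of `None`) never matches, which mirrors the requirement that documents be classified before they can be indexed.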

⚠ BE AWARE: Once an Indexing Behavior is added to a Content Type, you must use the "Create Search Index" command to create the index in Azure. Do this by right-clicking the Content Type and choosing "Search > Create Search Index".

With the Indexing Behavior defined, and the search index created, now you can start indexing documents.

Adding documents to the search index

Documents may be added to a search index in one of the following ways:

- Using the "Add to Index" command.

  - This is the most "manual" way of doing things.

  - Select one or more documents, right-click them and select "Search > Add to Index" to add only the selected documents to the search index.

  - Documents may also be manually removed from the search index in this way by using the "Remove From Index" command.

- Using the "Submit Indexing Job" command.

  - This is a manual way of indexing all existing documents for the Content Model.

  - The Indexing Job will add newly classified documents to the index, update the index if changes are made (to their extracted data for example), and remove documents from the index if they've been deleted.

  - Select the Content Model, right-click it and select "Search > Submit Indexing Job".

  - BE AWARE: An Activity Processing service must be running to execute the Indexing Job.

- Using an Execute activity in a Batch Process to apply the "Add to Index" command to all documents in a Batch.

  - This is one way to automate document indexing.

  - Bear in mind, if documents or their data change after this step runs, they will still need to be re-indexed.

- Running the Grooper Indexing Service to index documents automatically in the background.

  - This is the most automated way to index documents.

  - The Grooper Indexing Service periodically polls the Grooper database to determine if the index needs to be updated. If it does, it will submit an "Indexing Job".

  - The Indexing Job will add newly classified documents to the index, update the index if changes are made (to their extracted data for example), and remove documents from the index if they've been deleted.

  - The Indexing Behavior's Auto Index property must also be enabled for the Indexing Service to sub

  - BE AWARE: An Activity Processing service must be running to execute the Indexing Job(s).
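The three outcomes of an Indexing Job described above (add, update, remove) amount to comparing what is in the repository with what is in the index. The sketch below shows that comparison under simple assumptions: each side is a dictionary from a document id to a last-modified marker. The function, ids, and markers are hypothetical and do not reflect how Grooper tracks changes.

```python
def plan_indexing_job(repository, index):
    """Compare repository documents with indexed ones.

    Both arguments map a document id to a last-modified marker.
    Returns the ids to add, update, and remove, each as a sorted list.
    """
    to_add = sorted(doc for doc in repository if doc not in index)
    to_update = sorted(
        doc for doc in repository if doc in index and repository[doc] != index[doc]
    )
    to_remove = sorted(doc for doc in index if doc not in repository)
    return to_add, to_update, to_remove


repository = {"doc-1": 3, "doc-2": 7, "doc-4": 1}  # doc-3 was deleted
index = {"doc-1": 3, "doc-2": 5, "doc-3": 2}       # doc-2 is stale, doc-4 is missing
plan = plan_indexing_job(repository, index)
```

If all three lists come back empty, the index is already current and no job would be needed.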

The Search Page

Once you've got indexed documents, you can start searching for documents in the search index! The Search page allows you to find documents in your search index.

The Search page allows you to build a search query using four components:

- Search: This is the only required parameter. Here, you will enter your search terms, using the Lucene query syntax.

- Filter: An optional filter to set inclusion/restriction criteria for documents returned, using the OData syntax.

- Select: Optionally selects which fields you want displayed for each document.

- Order By: Optionally orders the list of documents returned.
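For orientation, the four components line up with parameters of the Azure AI Search "Search Documents" REST request body (`search`, `filter`, `select`, `orderby`). The sketch below builds such a request without sending it. How Grooper passes these values to Azure is not stated in this article, so the mapping is an assumption, and the service URL, index name, API version, and field names are placeholders.

```python
# Placeholder values for illustration only.
SERVICE_URL = "https://example-service.search.windows.net"
INDEX_NAME = "invoices"
API_VERSION = "2023-11-01"


def build_search_request(search, filter_expr=None, select=None, order_by=None):
    """Return the URL and JSON-ready body for an Azure AI Search query."""
    url = f"{SERVICE_URL}/indexes/{INDEX_NAME}/docs/search?api-version={API_VERSION}"
    # "full" selects the full Lucene query syntax instead of the simple syntax.
    body = {"search": search, "queryType": "full"}
    if filter_expr:
        body["filter"] = filter_expr
    if select:
        body["select"] = select
    if order_by:
        body["orderby"] = order_by
    return url, body


url, body = build_search_request(
    search="Invoice_No:8*",
    filter_expr="Invoice_Total gt 1000",
    select="Invoice_No,Invoice_Total",
    order_by="Invoice_Total desc",
)
```

Only `search` is required. The other three keys are left out of the body when they are not supplied.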

Search

The Search configuration searches the full text of each document in the index. This uses the Lucene query syntax to return documents. For a simple search query, just enter a word or phrase (enclosed in quotes "") in the Search editor. Grooper will return a list of any documents with that word or phrase in their text data.

Lucene also supports several advanced querying features, including:

- Wildcard searches: `?` and `*`

  - Use `?` for a single wildcard character and `*` for multiple wildcard characters.

- Fuzzy matching: `searchTerm~`

  - Fuzzy search can only be applied to terms. Fuzzy searched phrases should not be enclosed in quotes. Azure's full fuzzy search documentation can be found here: https://learn.microsoft.com/en-us/azure/search/search-query-fuzzy

- Boolean operators: `AND` `OR` `NOT`

  - Boolean operators can help improve the precision of a search query.

- Field searching: `fieldName:searchExpression`

  - Search built-in fields and extracted Data Model values. For example, `Invoice_No:8*` would return any document whose extracted "Invoice No" field starts with the number "8".

- Regular expression matching: `/regex/`

  - Enclose a regex pattern in forward slashes to incorporate it into the Lucene query. For example, `/[0-9]{3}[a-z]/`

    - Lucene regex can only be applied to fielded searches (e.g. `fieldName:/regex/`).

    - Lucene regex must match the whole field.

    - Lucene regex does not use the Perl Compatible Regular Expressions (PCRE) library. Most notably, this means it does not use single-letter character classes, such as `\d` to match a single digit. Instead, enter the full character class in brackets, such as `[0-9]` to match a single digit.

Azure's full documentation of Lucene query syntax can be found here: https://learn.microsoft.com/en-us/azure/search/query-lucene-syntax
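The "must match the whole field" rule is easy to misread, so here is a small illustration of the difference between whole-value and partial matching. It uses Python's `re` module, which is a different regex engine from Lucene's, so it demonstrates the matching semantics only and not Lucene itself. The helper name and sample values are made up.

```python
import re

# The example pattern from above: three digits followed by one lowercase letter.
PATTERN = "[0-9]{3}[a-z]"


def matches_whole_field(pattern, field_value):
    """True only when the pattern covers the entire field value."""
    return re.fullmatch(pattern, field_value) is not None


def matches_anywhere(pattern, field_value):
    """True when the pattern is found anywhere inside the field value."""
    return re.search(pattern, field_value) is not None


whole_ok = matches_whole_field(PATTERN, "123a")         # entire value fits the pattern
whole_rejected = matches_whole_field(PATTERN, "X123a")  # extra leading character
partial_ok = matches_anywhere(PATTERN, "X123a")         # a substring fits the pattern
```

A whole-field rule behaves like `matches_whole_field`: a value with extra characters before or after the pattern does not match unless the pattern itself accounts for them.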

Filter

First you search, then you filter. The Filter parameter specifies criteria for documents to be included or excluded from the search results. This gives users an excellent mechanism to further fine tune their search query. Commonly, users will want to filter a search set based on the field values. Both built in index fields and/or values extracted from a Data Model can be incorporated into the filter criteria.

Azure AI Search uses the OData syntax to define filter expressions. Azure's full OData syntax documentation can be found here: https://learn.microsoft.com/en-us/azure/search/search-query-odata-filter
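A minimal sketch of composing an OData filter string follows. It relies on two OData rules: comparison operators such as `eq` and `gt`, and escaping a single quote inside a string literal by doubling it. The field names `Vendor_Name` and `Invoice_Total` and both helper functions are hypothetical.

```python
def odata_string(value):
    """Quote a string literal for OData, doubling any embedded single quotes."""
    return "'" + value.replace("'", "''") + "'"


def all_of(*conditions):
    """Join conditions with 'and', wrapping each one in parentheses."""
    return " and ".join(f"({condition})" for condition in conditions)


vendor = odata_string("O'Brien Supply")
filter_expr = all_of(f"Vendor_Name eq {vendor}", "Invoice_Total gt 1000")
```

The resulting `filter_expr` string is the kind of text that would be entered in the Filter editor.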

Select

The Select parameter defines what field data is returned in the result list. You can select any of the built-in fields or Data Elements defined in the Indexing Behavior. This can be exceptionally helpful when navigating indexes with a large number of fields. Multiple fields can be selected using a comma-separated list (e.g. Field1,Field2,Field3).

Order By

Order By is an optional parameter that will define how the search results are sorted.

- Any field in the index can be used to sort results.

- The field's value type will determine how items are sorted.

  - String values are sorted alphabetically.

  - Datetime values are sorted by oldest or newest date.

  - Numerical value types are sorted smallest to largest or largest to smallest.

- Sort order can be ascending or descending.

  - Add `asc` after the field's name to sort in ascending order. This is the default direction.

  - Add `desc` after the field's name to sort in descending order.

- Multiple fields may be used to sort results.

  - Separate each sort expression with a comma (e.g. `Field1 desc,Field2`).

  - The leftmost field will be used to sort the full result list first, then it's sub-sorted by the next, then sub-sub-sorted by the next, and so on.
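The multi-field ordering rule (leftmost field first, later fields breaking ties) can be reproduced locally to check what a given Order By expression should produce. This sketch parses an expression such as `Field1 desc,Field2` and sorts a list of dictionaries accordingly. Both functions and the sample rows are hypothetical, and the actual sorting is performed by Azure AI Search, not by code like this.

```python
def parse_order_by(expression):
    """Split 'Field1 desc,Field2' into [(field, descending), ...]."""
    keys = []
    for part in expression.split(","):
        tokens = part.split()
        field = tokens[0]
        direction = tokens[1].lower() if len(tokens) > 1 else "asc"
        if direction not in ("asc", "desc"):
            raise ValueError(f"unknown sort direction: {direction}")
        keys.append((field, direction == "desc"))
    return keys


def sort_results(results, expression):
    """Sort rows so the leftmost field has the highest priority."""
    ordered = list(results)
    # Python's sort is stable, so sorting by the rightmost key first and the
    # leftmost key last leaves the leftmost key as the primary ordering.
    for field, descending in reversed(parse_order_by(expression)):
        ordered.sort(key=lambda row: row[field], reverse=descending)
    return ordered


rows = [
    {"Field1": 1, "Field2": "b"},
    {"Field1": 2, "Field2": "b"},
    {"Field1": 2, "Field2": "a"},
]
ordered = sort_results(rows, "Field1 desc,Field2")
```

Here the two rows with `Field1` equal to 2 come first, and `Field2` decides their relative order.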

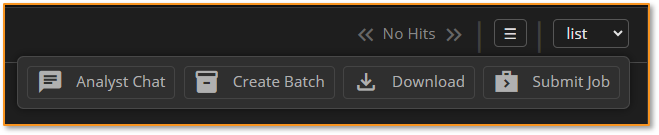

Search page commands: Create Batches, submit tasks, and download documents

There are several new commands users can execute from the Search page. These commands give users a new way of starting and continuing work in Grooper. These commands can be divided into two sets of commands: "result set commands" and "document commands"

Result set commands

These commands can be accessed from a dropdown list in the Search page UI. They can be applied to the entire result set or a selection from the result set.

- Create Batch - Creates a Batch from the result set and submits an Import Job to start processing it.

- Submit Job - Submits a Processing Job for documents in the result set. This command is intended for "on demand" activity processing.

- Analyst Chat - Select an AI Analyst to start a chat session with the result set.

- Download - Download a document, generated from the result set. May be one of the following:

- Download PDF - Generates a single bookmarked PDF with optional search hit highlights.

- Download ZIP - Generates a ZIP file containing each document in the result set.

- Download CSV - Generates a CSV file from the result set's data fields.

- Download Custom - Generates a custom document using an "AI Generator"

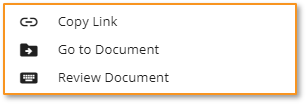

Document commands

These commands can be accessed from the Search page when right-clicking a document in the result list.

- Go to Document - Navigates users with Design page permissions to that document in the Grooper node tree.

- Review Document - Opens the document in a Review Viewer with a Data View, Folder View and Thumbnail View.

- Copy Link - Creates a URL link to the document. When clicking the link users will be taken to a Review Viewer with a Data View, Folder View and Thumbnail View.

Document "Generators"

Simplified Batch architecture

The traditional Batch architecture in Grooper is unnecessarily bloated. All Batches are created with a copy of a published Batch Process, created and stored as one of its children. This creates an exceptional number of duplicated nodes, not only the copy Batch Process but all its child steps too. To make Batches leaner and processing Batches more efficient, we have simplified this structure.

In brief:

- Batches no longer house a copy of a Batch Process. Instead, they simply reference a published Batch Process.

- With the child Batch Process gone, there is no need for a "root folder" node.

- Batches are now more truly just a container for document content. This has the following advantages:

  - It makes them easier to process.

  - It makes it easier for AI Search to index them.

  - It is easier to keep them around in Grooper long term.

Looking towards Grooper's future, this new architecture will help Grooper be a document repository as well as a processing platform. This will allow us to move from a "batch processing" focused design to a "document processing" focused design. If documents are going to hang around in Grooper permanently, it needs to be easier to process them "in place", wherever they are in the repo. The simplified Batch architecture implemented in version 2024 will aid us in this goal.

Good-bye local Batch Process children!

Batches no longer store a local copy of a Batch Process as a child.

In the past, whenever Grooper created a Batch, it stored a read-only copy of the Batch Process used to process it as one of its children. This is inefficient, especially when processing Batches with a single document. Every Batch that came into Grooper had an extra Batch Process and set of Batch Process steps tied to it. These additional nodes clutter up the Grooper database and make querying Batches less efficient than it needs to be.

In 2024, Batches will no longer house a clone of a Batch Process. Instead they will reference a published Batch Process. Each published version of a Batch Process is archived permanently (until a Grooper designer deletes unused processes).
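As a rough illustration of why referencing a published Batch Process is leaner than copying it, the sketch below counts nodes under both designs. The `BatchProcess` class, the function names, and the counting model (one node per Batch, per process, and per step, ignoring folder and page nodes) are assumptions made for illustration, not Grooper's actual object model.

```python
from dataclasses import dataclass


@dataclass
class BatchProcess:
    """Hypothetical stand-in for a published Batch Process and its steps."""
    name: str
    steps: list[str]


def nodes_with_local_copies(process: BatchProcess, batch_count: int) -> int:
    # Older design: each Batch stores its own copy of the process,
    # so every Batch costs 1 Batch node + 1 process node + 1 node per step.
    return batch_count * (1 + 1 + len(process.steps))


def nodes_with_references(process: BatchProcess, batch_count: int) -> int:
    # 2024 design: one shared published process (plus its steps),
    # and each Batch is a single node that merely references it.
    return (1 + len(process.steps)) + batch_count


process = BatchProcess("Invoices", ["Recognize", "Classify", "Extract", "Review", "Export"])
copies = nodes_with_local_copies(process, 1000)
shared = nodes_with_references(process, 1000)
```

Under these assumptions, 1,000 Batches using a five-step process need 7,000 nodes with local copies but only 1,006 when they share one published process.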

Good-bye "root folder" node!

Batches no longer have a "root folder". The result is a simpler, more logical folder hierarchy.

The only reason Batches had a root folder was to distinguish the folder and page content from the local copy of a Batch Process. Because there is no longer a Batch Process child, there is no need for a root folder. So, it's gone!

Instead, the Batch object itself is the root of the Batch. Batches now have all the properties of a Batch Folder as well as a Batch. This makes Batches more lightweight, particularly for single-document Batches.

- For single-document Batches, the Batch is not just a container for documents, but in effect, the document itself!

- For Batches with multiple documents, the Batch now acts as the root folder. This gets rid of a now unnecessary (and previously often confusing) level in the Batch hierarchy.

Hello new Batch testing tools!

There are new tools to help facilitate testing from the Design page.

The only potential drawback to the Batch redesign comes in testing. In the past, Grooper designers would use the local Batch Process copies to test steps in production Batches. If there is no longer a local copy, how are users going to test production Batches in this way?

There are several new tools that make testing production Batches easier.

- Published versions of Batch Processes will now be able to access the production branch of the Batches tree for Batch testing.

- Production Batches have a "Go To Process" button. Pressing this button navigates to the Batch's referenced process and selects the Batch in the Activity Tester.

- Published versions of Batch Processes now have a "Batches" tab. This will show a list of all Batches currently using the selected process. These Batches can then be managed the same way they would be managed from the Batches Page.

Bonus! New Batch naming options

While not directly related to the Batch redesign, we have a new set of Batch Name Option properties in version 2024. These options can be configured for Batches created by Import Jobs (either ad-hoc from the Imports page or procedurally by an Import Watcher). Previously, users could only affix a text prefix to a Batch when importing documents. The Batch would be named using the prefix and a time stamp (e.g. "Batch Prefix 2024-06-12 03:14:15 PM").

Users can now name Batches with a text prefix, one to three "segments", and a text suffix. This gives users a lot more flexibility in what they can name Batches created from imports. The "segments" may be set to one of the following:

- Sequence - The sequence number of the current Batch. The first Batch imported will be "1", then "2", and so on. This sequence may optionally be zero-padded ("00001", then "00002", and so on).

- DateTime - The current date and time.

- Process - The name of the assigned Batch Process.

- ContentType - The name of the Content Type assigned to the Batch.

- Username - The current Windows user's logon name.

- Machine - The name of the current machine.

- BatchId - The integer id number for the batch.
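To make the naming scheme concrete, here is a minimal sketch of how a prefix, one to three segments, and a suffix might be combined into a Batch name. The function `build_batch_name`, the `context` dictionary, the space separator, and the `pad_width` parameter are all hypothetical; only the segment names and the time stamp format shown earlier come from the text above.

```python
from datetime import datetime

SEGMENT_TYPES = ("Sequence", "DateTime", "Process", "ContentType",
                 "Username", "Machine", "BatchId")


def build_batch_name(prefix, segments, suffix, context, pad_width=0, separator=" "):
    """Join prefix, 1-3 segment values, and suffix (assumed separator: a space)."""
    if not 1 <= len(segments) <= 3:
        raise ValueError("expected one to three segments")
    parts = [prefix] if prefix else []
    for segment in segments:
        if segment not in SEGMENT_TYPES:
            raise ValueError(f"unknown segment type: {segment}")
        value = context[segment]
        if segment == "Sequence":
            # Optional zero-padding, e.g. 2 -> "00002" when pad_width is 5.
            value = str(value).zfill(pad_width)
        elif segment == "DateTime":
            # Mirrors the time stamp style of the pre-2024 naming example.
            value = value.strftime("%Y-%m-%d %I:%M:%S %p")
        parts.append(str(value))
    if suffix:
        parts.append(suffix)
    return separator.join(parts)


name = build_batch_name(
    "Invoices",
    ["Sequence", "Process"],
    "scan",
    {"Sequence": 2, "Process": "AP"},
    pad_width=5,
)
# name == "Invoices 00002 AP scan"
```

How Grooper actually separates and formats the segments is configured in the Batch Name Option properties; the sketch only shows the general shape of the composition.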

Miscellaneous

Improvements to Data Model expression efficiency

In this version, we refactored Data Model expressions (Default Value, Calculated Value, Is Valid, and Is Required) to address some longstanding issues. Specifically, we have improved how Data Model expressions are compiled. This will improve performance, particularly for large Data Models with many Data Elements and many expressions.

- In previous versions, a representation of the Data Model was compiled with each expression. This slowed things down and caused expressions to break unnecessarily in certain scenarios.

- In 2024, we resolve this by compiling each Data Model a single time.

- The compiled Data Model is called a "Data Model assembly". Expression assemblies reference Data Model assemblies, rather than defining types internally.

- BE AWARE: When testing expression configurations, the Data Model assemblies must be recompiled when changes to the Data Model, its Data Elements, or their expressions are made.

  - Do this by selecting the Data Model in the Node Tree and clicking the "Refresh Tree Structure" button in the upper right corner of the screen.

  - Example: You change a Data Field's type from "string" to "decimal" so its Calculated Value expression adds numbers together correctly. Before testing this expression, you should select the Data Model and refresh the node to recompile its assembly.
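The compile-once behavior, and the reason a refresh is needed after changes, can be pictured with a toy model. This is only an analogy under stated assumptions: the `DataModel` class, its `refresh` method (standing in for "Refresh Tree Structure"), and `calculated_sum` are hypothetical and do not reflect how Grooper builds its assemblies.

```python
from decimal import Decimal

CONVERTERS = {"string": str, "decimal": Decimal}


class DataModel:
    """Toy Data Model whose 'assembly' is a cached snapshot of its field types."""

    def __init__(self, field_types):
        self.field_types = dict(field_types)
        self._assembly = None
        self.compile_count = 0

    def refresh(self):
        # Recompile the shared assembly from the current field definitions.
        self._assembly = dict(self.field_types)
        self.compile_count += 1

    def assembly(self):
        # Compile once on first use; later expressions reuse the same snapshot.
        if self._assembly is None:
            self.refresh()
        return self._assembly


def calculated_sum(model, values):
    """Toy Calculated Value expression that adds fields using the compiled types."""
    types = model.assembly()
    converted = [CONVERTERS[types[name]](raw) for name, raw in values.items()]
    total = converted[0]
    for item in converted[1:]:
        total = total + item
    return total


model = DataModel({"Subtotal": "string", "Tax": "string"})
values = {"Subtotal": "1.50", "Tax": "2.25"}
before = calculated_sum(model, values)   # strings concatenate: "1.502.25"
model.field_types = {"Subtotal": "decimal", "Tax": "decimal"}
stale = calculated_sum(model, values)    # still "1.502.25": assembly not refreshed
model.refresh()
after = calculated_sum(model, values)    # Decimal("3.75")
```

The middle call is the case the warning above describes: the field type has changed, but the expression still sees the old compiled model until it is refreshed.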

Auto Orient during Recognize

Tabular View in Data Review

Improvements to Grooper Desktop and scanning

New URL endpoint for scanning

http://{serverName}/Grooper/Review/Scan?repositoryId={repositoryId}&processName={processName}&batchName={batchName}
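A small helper can fill in the placeholders of this URL template and percent-encode values that contain spaces or other reserved characters. The function name `build_scan_url` and the sample values are hypothetical, and whether Grooper expects `%20` rather than `+` for spaces is an assumption here.

```python
from urllib.parse import quote, urlencode


def build_scan_url(server_name, repository_id, process_name, batch_name):
    """Fill the scan URL template, percent-encoding each query value."""
    query = urlencode(
        {
            "repositoryId": repository_id,
            "processName": process_name,
            "batchName": batch_name,
        },
        quote_via=quote,  # encode spaces as %20 instead of '+'
    )
    return f"http://{server_name}/Grooper/Review/Scan?{query}"


url = build_scan_url("grooper01", "abc-123", "Invoice Process", "Batch 1")
# url == "http://grooper01/Grooper/Review/Scan?repositoryId=abc-123&processName=Invoice%20Process&batchName=Batch%201"
```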

Data Column Propagation

Better multi-cardinal field support in Review

CMIS Document Link improvements

Overwrite property Move command

New icons

Object icons


Activity icons
