2021:Data Export (Export Definition): Difference between revisions

Dgreenwood (talk | contribs) |

Dgreenwood (talk | contribs) No edit summary |

||

| Line 75: | Line 75: | ||

{|cellpadding=10 cellspacing=5 | {|cellpadding=10 cellspacing=5 | ||

| style="vertical-align:top; width:40%" | | | style="vertical-align:top; width:40%" | | ||

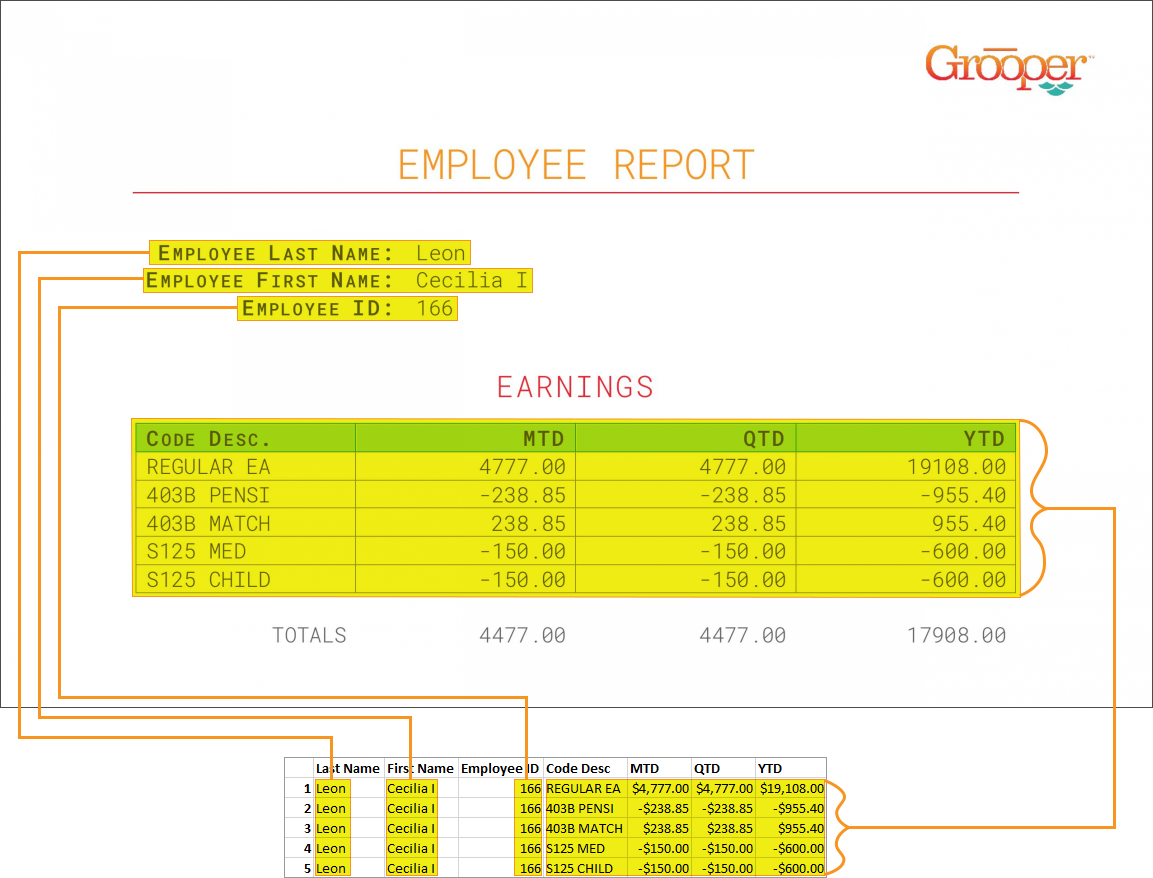

The thing to understand about | === Document 1: Employee Report === | ||

The thing to understand about this document is some of its data share a "one-to-many" relationship. | |||

Some of the data is described as "single instance" data. These are individual fields like "Employee Last Name", "Employee First Name" and "Employee ID". For each document, there is only one value for each of these fields. These values are only listed once, and hence only collected once during extraction. | |||

Some of the data, however, is described as "multi-instance" data. The "Earnings" table displays a dynamic amount of rows, for which there may be a varying number of data for its columns ("Code Desc", "MTD", "QTD", "YTD") depending on how many rows are in the table. There are multiple instances of the "YTD" value for the whole table (and therefore the whole document). | |||

The single instance data, as a result of only being listed once on the document, will only be collected once, but needs to be married to each row of information from the table, in one way or another. The "one" "Employee ID" value, for example, pertains to the "many" different table rows. | |||

This document is meant to show how to flatten data structures. While the single instance data is only collected once, it will be reported many times upon exporting to a database table. | |||

| | | | ||

[[File:database_export_002.png]] | [[File:database_export_002.png]] | ||

|- | |- | ||

| style="vertical-align:top; width:40%" | | | style="vertical-align:top; width:40%" | | ||

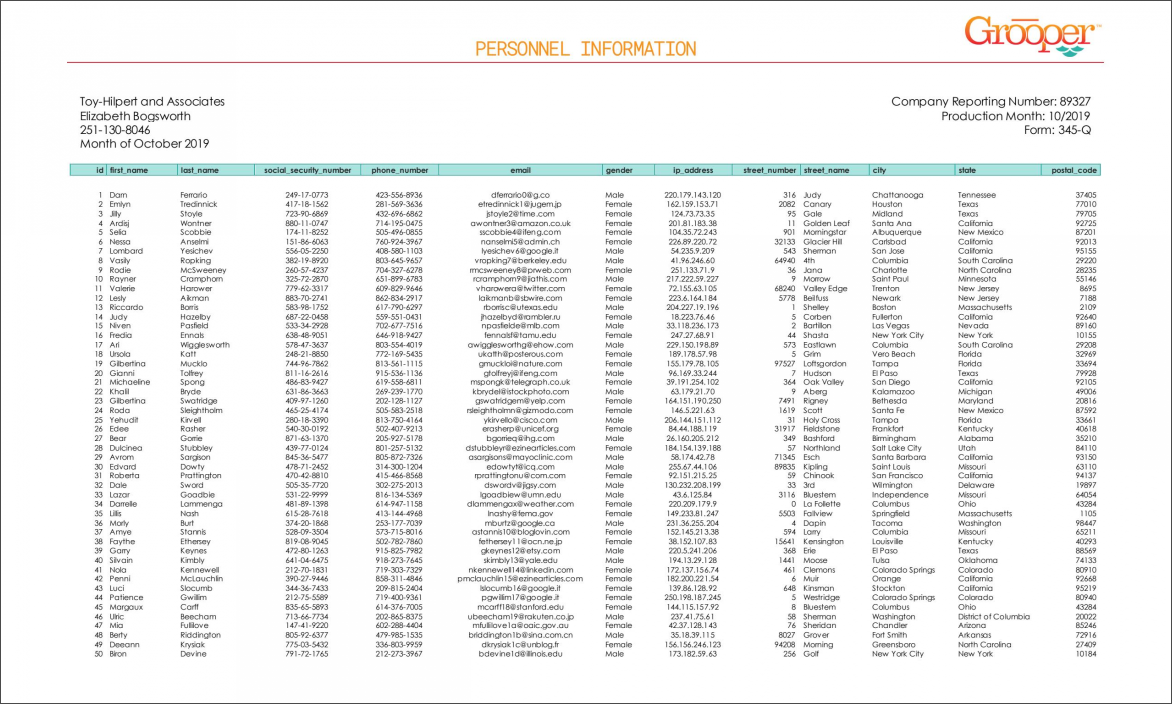

The second document is | === Document 2: Personnel Information Report === | ||

|| [[File:database_export_003.png ]] | |||

The second document is essentially one big table of personnel information (name, address, email, phone number and the like). | |||

While we ultimately want to collect data from all rows in this table, there are potentially two sets of information here. Some of it is generic personnel information, but some of it is "personally identifiable information" or PII. | |||

This information should be protected for legal reasons. | |||

As a result, we will export collected data to two database tables (with the assumption that the second table is "protected".) | |||

This document is meant to demonstrate how to export to multiple tables via one '''''Export Behavior'''''. | |||

{|cellpadding="10" cellspacing="5" | |||

|-style="background-color:#f89420; color:white" | |||

|style="font-size:22pt"|'''⚠'''||DISCLAIMER: The author of this article is not a lawyer. | |||

For educational purposes we divided "Personnel Information" into two sets: "non-PII" and "PII" | |||

* non-PII: Employee ID, Phone Number, E-mail address, IP Address, Gender, ZIP | |||

* PII: First Name, Last Name, SSN, Street Number, Street Name, City, State. | |||

'''''DO NOT TAKE THIS TO MEAN THIS INFORMATION IS OR IS NOT PERSONALLY IDENTIFIABLE INFORMATION.''''' | |||

This division was done purely for educational purposes to demonstrate a concept. | |||

PII gets tricky in the real world. For example, an IP Address would not normally qualify as PII by itself, but could when combined with other personal information. Please consult your own legal department to determine what data you're collecting is PII and should be protected more securely. | |||

|} | |||

| | |||

[[File:database_export_003.png ]] | |||

|} | |} | ||

| Line 142: | Line 177: | ||

</tabs> | </tabs> | ||

<--- | <!--- | ||

<tabs> | <tabs> | ||

<tab name="Configuring a Data Connection" style="margin:25px"> | <tab name="Configuring a Data Connection" style="margin:25px"> | ||

Revision as of 15:24, 6 October 2021

Data Export is one of the Export Types available when configuring an Export Behavior. It exports extracted document data over a Data Connection, allowing users to export data to a Microsoft SQL Server or ODBC-compliant database.

You may download and import the file below into your own Grooper environment (version 2021). It contains Batches with the example document(s) and a Content Model discussed in this article.

About

The most important goal of Grooper is to deliver accurate data to line-of-business systems so the information can inform business decisions. Database tables remain, to this day, one of the main places this information is stored. Data Export is one of the main ways to deliver data collected in Grooper.

There are three important things to understand when using and configuring Data Export to export data to a database:

- The Export activity

- Data Elements

- Data Connections

The Export Activity

Grooper's Export activity is the mechanism by which Grooper-processed document content is delivered to an external storage platform. Export configurations are defined by adding Export Type definitions to Export Behaviors. Data Export is the Export Type designed to export Batch Folder document data collected by the Extract activity to a Microsoft SQL Server or ODBC-compliant database server.

For more information on configuring Export Behaviors, please visit the full Export activity article.

Data Elements

Data Export is the chief delivery device for "collection" elements. Data is collected in Grooper by executing the Extract activity, extracting values from a Batch Folder according to its classified Document Type's Data Model.

A Data Model in Grooper is a digital representation of document data targeted for extraction, defining the data structure for a Content Type in a Content Model. Data Models are objects comprised of Data Element objects, including:

- Data Fields used to target single field values on a document.

- Data Tables and their child Data Columns used to target tabular data on a document.

- Data Sections used to divide a document into sections to simplify extraction logic and/or target repeating sections of extractable Data Elements on a single document.

With Data Models and their child Data Elements configured, Grooper collects values using the Extract activity.
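To make the hierarchy concrete, here is a minimal sketch that models Data Fields, Data Tables with Data Columns, and Data Sections as plain Python dataclasses. This is not Grooper's object model or API; the class names, the `element_paths` helper, and the slash-separated path format are all assumptions made for illustration. The element names are borrowed from the Employee Report example in this article.

```python
from dataclasses import dataclass, field
from typing import List, Union


@dataclass
class DataField:
    name: str  # targets a single value on the document


@dataclass
class DataColumn:
    name: str  # one column of a Data Table


@dataclass
class DataTable:
    name: str
    columns: List[DataColumn] = field(default_factory=list)


@dataclass
class DataSection:
    name: str
    # A section can hold its own fields, tables, and nested sections.
    elements: List["DataElement"] = field(default_factory=list)


DataElement = Union[DataField, DataTable, DataSection]


@dataclass
class DataModel:
    name: str
    elements: List[DataElement] = field(default_factory=list)


def element_paths(elements, prefix=""):
    """List every leaf element (field or column) as a slash-separated path."""
    paths = []
    for element in elements:
        path = f"{prefix}{element.name}"
        if isinstance(element, DataField):
            paths.append(path)
        elif isinstance(element, DataTable):
            paths.extend(f"{path}/{column.name}" for column in element.columns)
        else:  # DataSection: recurse into its child elements
            paths.extend(element_paths(element.elements, prefix=f"{path}/"))
    return paths


# Hypothetical model loosely based on the Employee Report example.
employee_report = DataModel(
    name="Employee Report",
    elements=[
        DataField("Employee ID"),
        DataField("Employee Last Name"),
        DataTable("Earnings", [DataColumn("Code Desc"), DataColumn("YTD")]),
    ],
)
paths = element_paths(employee_report.elements)
```

Listing the leaf paths this way is one simple view of the "data scope" idea: each path is a value, or column of values, that could later be mapped to a database column.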

Depending on the Content Type hierarchy in a Content Model and/or the Data Element hierarchy in a Data Model, a fully extracted Data Model yields a collection, or "set", of values at each data scope in its hierarchy. That may be the full data scope of the Data Model, including any Data Elements inherited from parent Data Models. It may also be a narrower scope of Data Elements, such as a child Data Section comprised of its own child Data Fields.

Understanding this will be important as Data Export has the ability to take full advantage of Grooper's hierarchical data modeling to flatten complex and inherited data structures. Understanding Data Element hierarchy and scope will also be critical when exporting data from a single document to multiple different database tables to ensure the right data exports to the right places.

Data Connections

Data Export uses a configured Data Connection object to establish a link to tables in a Microsoft SQL Server or ODBC-compliant database and populate them. Once this connection is established, collected Data Elements can be mapped to corresponding column locations in one or multiple database tables. Much of Data Export's configuration is assigning these data mappings. The Data Connection presents these mappable data endpoints to Grooper and allows data to flow from Grooper to the database table when the Export activity processes each Batch Folder in a Batch.
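The idea of mapping collected values to database columns can be sketched outside of Grooper as follows. This is only an illustration of the concept, not how Data Export is implemented: the `COLUMN_MAPPINGS` dictionary, the `build_insert` and `export_values` helpers, the `employees` table, and the sample values are all hypothetical, and an in-memory SQLite database stands in for a real SQL Server or ODBC data source.

```python
import sqlite3

# Hypothetical mapping: extracted element name -> database column name.
COLUMN_MAPPINGS = {
    "Employee ID": "employee_id",
    "Employee Last Name": "last_name",
}


def build_insert(table, mappings):
    """Build a parameterized INSERT statement from a mapping."""
    columns = list(mappings.values())
    placeholders = ", ".join("?" for _ in columns)
    return f"INSERT INTO {table} ({', '.join(columns)}) VALUES ({placeholders})"


def export_values(connection, table, mappings, extracted):
    """Write one row, pulling each mapped value from the extracted data."""
    values = [extracted.get(element) for element in mappings]
    connection.execute(build_insert(table, mappings), values)


connection = sqlite3.connect(":memory:")  # stand-in for a real database server
connection.execute("CREATE TABLE employees (employee_id TEXT, last_name TEXT)")
export_values(
    connection,
    "employees",
    COLUMN_MAPPINGS,
    {"Employee ID": "E1001", "Employee Last Name": "Smith"},  # made-up values
)
rows = connection.execute("SELECT employee_id, last_name FROM employees").fetchall()
```

Keeping the mapping as data, separate from the statement that uses it, mirrors the point made above: most of the configuration work is deciding which value goes to which column.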

Furthermore, not only can Grooper connect to existing databases using a Data Connection, but it can create whole new databases as well as database tables once a connection to the database server is established.

We discuss how to create Data Connections, add a new database from a Data Connection, and add a new database table from a Data Connection in the "Configuring a Data Connection" tutorial below.

How To

Understanding the Forms

Document 1: Employee Report

The thing to understand about this document is that some of its data share a "one-to-many" relationship.

Some of the data is "single instance" data. These are individual fields like "Employee Last Name", "Employee First Name" and "Employee ID". For each document, there is only one value for each of these fields. These values are only listed once, and hence only collected once during extraction.

Some of the data, however, is "multi-instance" data. The "Earnings" table displays a dynamic number of rows, so the number of values for its columns ("Code Desc", "MTD", "QTD", "YTD") varies with how many rows are in the table. There are multiple instances of the "YTD" value for the whole table (and therefore the whole document).

The single instance data, as a result of only being listed once on the document, will only be collected once, but needs to be married to each row of information from the table, in one way or another. The "one" "Employee ID" value, for example, pertains to the "many" different table rows.

This document is meant to show how to flatten data structures. While the single instance data is only collected once, it will be reported many times upon exporting to a database table.
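The flattening described for Document 1 can be sketched in a few lines of Python. This is a conceptual illustration rather than Grooper's implementation: the `flatten` helper is hypothetical and the employee and earnings values are made up.

```python
def flatten(single_instance, table_rows):
    """Repeat the single-instance fields on every table row."""
    return [{**single_instance, **row} for row in table_rows]


# Collected once per document (made-up values).
single_instance = {
    "Employee ID": "E1001",
    "Employee Last Name": "Smith",
    "Employee First Name": "Jane",
}

# Collected once per row of the "Earnings" table (made-up values).
earnings = [
    {"Code Desc": "Regular", "MTD": 100.0, "QTD": 300.0, "YTD": 1200.0},
    {"Code Desc": "Overtime", "MTD": 20.0, "QTD": 60.0, "YTD": 240.0},
]

flat_rows = flatten(single_instance, earnings)
```

Each item in `flat_rows` is one database row: the "one" Employee ID now appears on each of the "many" earnings rows.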

Document 2: Personnel Information Report

The second document is essentially one big table of personnel information (name, address, email, phone number and the like).

While we ultimately want to collect data from all rows in this table, there are potentially two sets of information here. Some of it is generic personnel information, but some of it is "personally identifiable information" or PII. PII should be protected for legal reasons.

As a result, we will export collected data to two database tables (with the assumption that the second table is "protected").

This document is meant to demonstrate how to export to multiple tables via one Export Behavior.
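Sending one extracted table to two destinations amounts to projecting each row onto two column sets. The sketch below shows that idea only; the `split_rows` helper, the column groupings, and the sample row are hypothetical, and the grouping is for demonstration purposes and says nothing about what legally counts as PII.

```python
# Hypothetical column groupings for demonstration purposes only.
GENERAL_COLUMNS = ("Employee ID", "Phone Number", "ZIP")
PROTECTED_COLUMNS = ("First Name", "Last Name", "SSN")


def split_rows(rows, general_columns, protected_columns):
    """Project every extracted row onto two column sets, one per target table."""
    general = [{c: row.get(c) for c in general_columns} for row in rows]
    protected = [{c: row.get(c) for c in protected_columns} for row in rows]
    return general, protected


# One made-up row from the personnel table.
extracted_rows = [
    {
        "Employee ID": "E1001",
        "First Name": "Jane",
        "Last Name": "Smith",
        "SSN": "000-00-0000",
        "Phone Number": "555-0100",
        "ZIP": "00000",
    },
]

general_rows, protected_rows = split_rows(
    extracted_rows, GENERAL_COLUMNS, PROTECTED_COLUMNS
)
```

Both result lists keep the same row order, so row N in one list and row N in the other came from the same line of the source table.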

Understanding the Content Model

The Content Model extracting the data for these documents is fairly straightforward. There are two Document Types, each with its own Data Model.

The first Document Type's Data Model is the one representing the one-to-many relationship. Notice that there are Data Fields for the fields represented once in the document. For the tabular data, a Data Table was established.

The second Document Type's Data Model uses one table extractor to collect all the data, but reports it to two different tables.

It should be noted that the documents in the accompanying Batches had their Document Type assigned manually. The Content Model is not performing any classification.

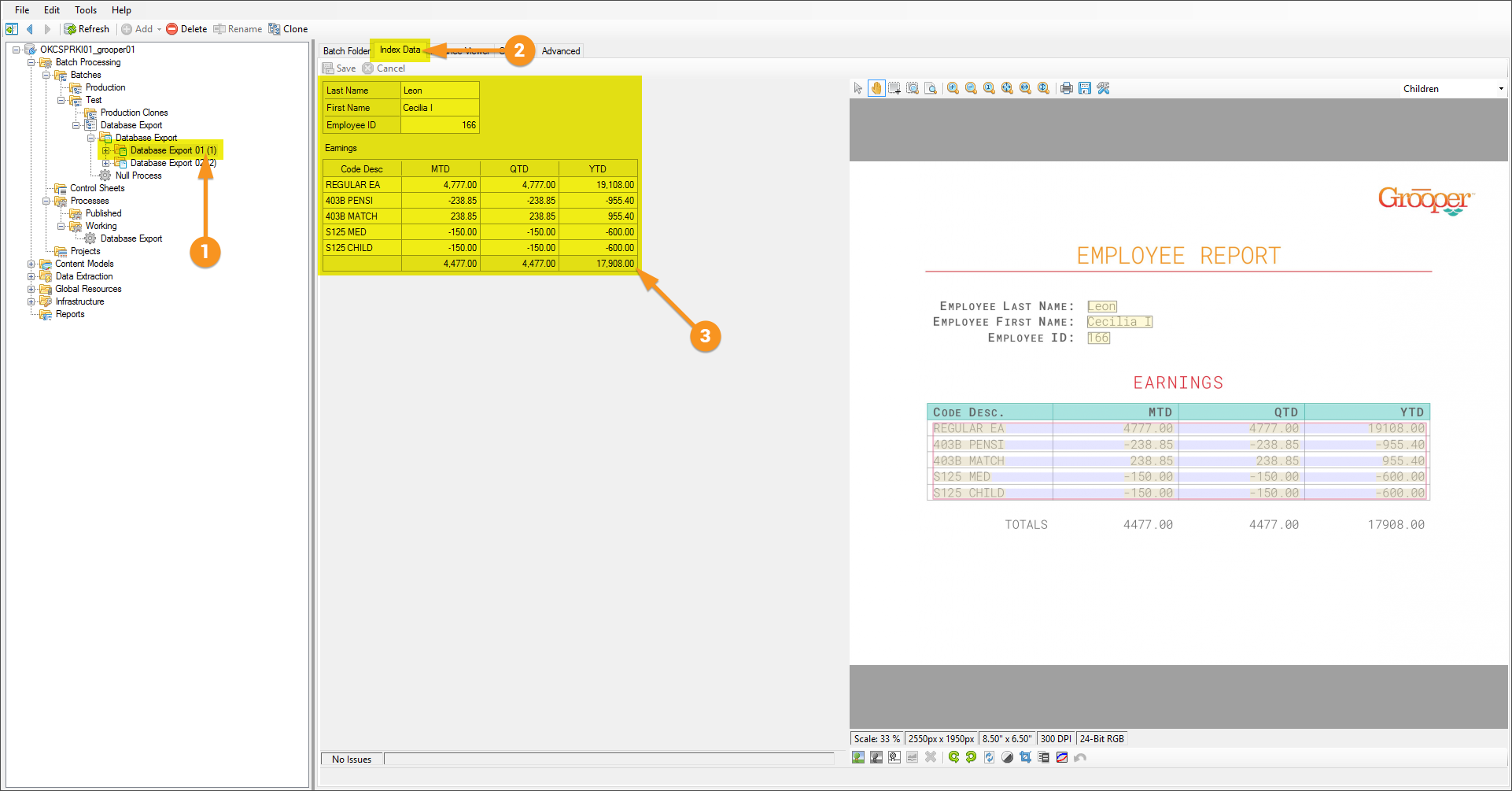

Verifying Index Data

Before Data Export can send data, it must have data!

It's easy to get in the habit of testing extraction on a Data Field or a Data Model and feel good about the results, but it must be understood that the information displayed when doing so is in memory, or temporary. When testing a Data Export configuration, it's a good idea to ensure extracted data is actually present for document Batch Folders whose data you want to export.

When the Extract activity runs, it executes all extraction logic for the Data Model tied to a Batch Folder's classified Document Type. For each Batch Folder document, it creates "Index Data" and marries it to the Batch Folder via a JSON file called Grooper.DocumentData.json.
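If the index data file is reachable on disk, a small script can confirm that it exists and contains something. This is a sketch under assumptions: the source only names the file, so the idea that a Batch Folder corresponds to a file system directory you can point at, and the `has_index_data` helper itself, are hypothetical. Nothing here relies on the internal structure of the JSON.

```python
import json
from pathlib import Path

INDEX_DATA_FILENAME = "Grooper.DocumentData.json"  # file name given in this article


def has_index_data(folder):
    """Return True if the folder holds a parseable, non-empty index data file."""
    path = Path(folder) / INDEX_DATA_FILENAME
    if not path.is_file():
        return False
    try:
        data = json.loads(path.read_text(encoding="utf-8"))
    except (json.JSONDecodeError, UnicodeDecodeError):
        return False  # present but unreadable as JSON
    return bool(data)  # empty objects or lists count as "no data"
```

A call such as `has_index_data("path/to/folder")` returns `False` both when the file is missing and when it is empty, which are the two cases that would leave Data Export with nothing to send.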

A couple of ways to verify its existence are as follows:

Option 1


Option 2
