Grooper and AI: Difference between revisions

Dgreenwood (talk | contribs) |

Dgreenwood (talk | contribs) |

||

| (11 intermediate revisions by the same user not shown) | |||

| Line 25: | Line 25: | ||

== Relevant AI constructs and article links == | == Relevant AI constructs and article links == | ||

Here | Here you will find links to all currently available articles pertaining to Grooper AI functionality. | ||

=== Azure OCR === | === Azure OCR === | ||

: '''[[Azure OCR]]''' – An OCR Engine option for OCR Profiles that utilizes Microsoft Azure's Read API. Azure Read is an AI-based text recognition engine that uses a convolutional neural network (CNN) to recognize text. Compared to traditional OCR engines, it yields superior results, especially for handwritten text and poor-quality images. Grooper supplements Azure's results with those from a traditional OCR engine in areas where traditional OCR performs better. | |||

< | : '''[[Azure DI OCR]]''' – This OCR Engine utilizes Azure Document Intelligence for OCR. All models provided by this service use the Azure Read engine for their baseline OCR. Specialized models perform document analysis including text extraction, layout data collection, and more. | ||

:* Grooper’s current integration focuses on two models: | |||

:** <code>prebuilt-read</code> – Version 4.0 of the Read engine, offering superior machine-printed and handwritten text recognition across nearly any document image. | |||

:** <code>prebuilt-layout</code> – Collects additional layout data Grooper can use downstream, including table lines, checkbox data, and barcode data. | |||

:* ''Note: Azure DI OCR requires the "Azure Document Intelligence" Repository Option to be added to a Grooper Repository. [[#Azure Document Intelligence integration |See below for more information on Azure Document Intelligence]]'' | |||

:* <li class="attn-bullet"> The Azure DI OCR engine will eventually replace the Azure OCR engine in Grooper. Azure OCR uses an older API, with Read v3.2 being the most recent supported model. Microsoft is no longer updating this API and it will be fully retired by September 25, 2028. | |||

=== LLM connectivity and constructs === | |||

====LLM Connectivity==== | |||

: '''[[LLM Connector]]''' – A "Repository Option" configured on the '''Grooper Root''' node. This option provides connectivity to large language models (LLMs) offered by OpenAI and Microsoft Azure. There are currently two connection options: | |||

:* '''OpenAI''' – Connects Grooper to LLMs offered through the OpenAI API or compatible APIs. | |||

* ''' | :** <li class="fyi-bullet"> Compatible APIs must support <code>chat/completions</code> and <code>embeddings</code> endpoints similar to the OpenAI API to interoperate with Grooper’s LLM features. | ||

* ''' | :* '''Azure''' – Connects Grooper to LLMs offered by Microsoft Azure through its Model Catalog, including Azure OpenAI models. | ||
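As a rough illustration of what "compatible" means here, the sketch below builds request bodies in the shape used by the OpenAI API's <code>chat/completions</code> and <code>embeddings</code> endpoints. The function names and the placeholder model names are assumptions made for this example; the exact requests Grooper sends are not documented in this article.

```python
import json


def build_chat_request(model: str, system_prompt: str, document_text: str) -> dict:
    """Build an OpenAI-style chat/completions request body (illustrative only)."""
    return {
        "model": model,
        "messages": [
            # The system message carries instructions; the user message carries content.
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": document_text},
        ],
    }


def build_embeddings_request(model: str, texts: list[str]) -> dict:
    """Build an OpenAI-style embeddings request body (illustrative only)."""
    return {"model": model, "input": texts}


if __name__ == "__main__":
    # "example-chat-model" is a placeholder, not a real model name.
    body = build_chat_request(
        "example-chat-model", "Summarize the document.", "Invoice 1001 ..."
    )
    print(json.dumps(body, indent=2))
```

A service that accepts bodies of this shape at those two endpoints is the kind of API the OpenAI connection option is meant to work with.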

<section begin="llm-constructs" /> | |||

====LLM-enabled extraction capabilities==== | |||

: '''[[Ask AI]]''' – An LLM-based Value Extractor specialized for natural-language responses. This extractor sends the document’s text and a natural-language prompt to a chat completion service. The chatbot’s response becomes the extractor’s result. | |||

: '''[[AI Schema Extractor]]''' – An LLM-based Value Extractor specialized for structured JSON responses. The AI Schema Extractor enables advanced, schema-driven extraction from unstructured or semi-structured documents, supporting scenarios such as tables, line items, and multi-field records. | |||

: '''[[AI Extract]]''' – An LLM-based Fill Method for large-scale data extraction. Fill Methods are configured on '''Data Models''' and '''Data Sections'''. They perform a secondary extraction ''after'' child '''Data Elements''' extractors and extract methods execute, or they may act as the primary extraction mechanism when no other extractors are configured. AI Extract uses an LLM chat completion service to populate Data Models, often requiring only that Data Elements be defined. | |||

:''LLM-enabled Data Section Extract Methods'' | |||

:: '''[[AI Collection Reader]]''' – An LLM-based Section Extract Method for multi-instance section extraction. AI Collection Reader extends AI Section Reader for repeating records and is optimized for large, multi-page documents that must be processed in chunks to avoid exceeding LLM context limits. | |||

:: '''[[AI Section Reader]]''' – An LLM-based Section Extract Method for single-instance section extraction. It enables advanced extraction from complex, variable, or ambiguous document layouts using generative AI. | |||

:: '''[[AI Transaction Detection]]''' – An LLM-based Section Extract Method specialized for transaction-based documents such as payroll reports or EOBs. It automatically segments documents into individual transactions using detected anchors and can extract structured data from each transaction. | |||

:: '''[[Clause Detection]]''' – Designed to locate specified clause types in natural-language documents. Users provide one or more sample clauses, and an embeddings model identifies the most similar document chunks. Detected sections can then use '''AI Extract''' to extract structured data from the clause text. | |||

:'''[[AI Table Reader]]''' – An LLM-based Data Table Extract Method that enables extraction of tabular data from semi-structured or unstructured documents, even when table layouts are ambiguous or inconsistent. | |||
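The similarity search described for Clause Detection above can be pictured with a small sketch. It assumes cosine similarity as the comparison measure and uses tiny hand-written vectors in place of real embeddings; neither the measure nor the helper names are confirmed details of Grooper's implementation.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_chunks(sample_vector, chunk_vectors):
    """Return chunk indices ordered from most to least similar to the sample clause."""
    scores = [cosine_similarity(sample_vector, v) for v in chunk_vectors]
    return sorted(range(len(chunk_vectors)), key=lambda i: scores[i], reverse=True)


if __name__ == "__main__":
    # Toy 3-dimensional "embeddings"; real embeddings have far more dimensions.
    sample_clause = [1.0, 0.0, 1.0]
    document_chunks = [
        [0.0, 1.0, 0.0],
        [0.9, 0.1, 0.8],
        [0.2, 0.9, 0.1],
    ]
    print(rank_chunks(sample_clause, document_chunks))
```

In this toy data the second chunk points in nearly the same direction as the sample clause, so it ranks first.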

====LLM-enabled separation and classification capabilities==== | |||

:'''[[AI Separate]]''' – An LLM-based Separation Provider that evaluates page meaning and structure rather than relying on fixed rules, barcodes, or control sheets. | |||

:'''[[LLM Classifier]]''' – An LLM-based Classify Method that sends document content and candidate Document Types (with descriptions) to an LLM, which selects the best match. | |||

:'''[[Mark Attachments]]''' – Assists document separation by attaching documents to parent documents. When the Generative AI option is enabled, an LLM determines whether a document should be attached to the preceding or following document. | |||
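To make the LLM Classifier description above more concrete, here is a minimal sketch of how document content and candidate Document Types with descriptions might be combined into one prompt, and how a reply could be matched back to a known type. The prompt wording, function names, and sample Document Types are all hypothetical; Grooper's actual prompt is not shown in this article.

```python
def build_classification_prompt(document_text, document_types):
    """Combine candidate Document Types (name -> description) and document text into a prompt."""
    lines = [
        "Choose the single best Document Type for the document below.",
        "Candidate Document Types:",
    ]
    for name, description in document_types.items():
        lines.append(f"- {name}: {description}")
    lines.append("Answer with the Document Type name only.")
    lines.append("")
    lines.append("Document:")
    lines.append(document_text)
    return "\n".join(lines)


def resolve_answer(answer, document_types):
    """Map the model's reply to a known Document Type name, or None if nothing matches."""
    cleaned = answer.strip().lower()
    for name in document_types:
        if name.lower() == cleaned:
            return name
    return None


if __name__ == "__main__":
    # Hypothetical Document Types used only for illustration.
    types = {
        "Invoice": "A bill requesting payment for goods or services.",
        "Purchase Order": "A buyer's request to purchase goods or services.",
    }
    print(build_classification_prompt("INVOICE #1001 ... Amount due: $250.00", types))
    print(resolve_answer(" invoice\n", types))
```

Checking the reply against the candidate list guards against a model answering with a type that was never offered.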

====Other LLM-enabled capabilities==== | |||

AI | :'''[[AI Generator]]''' – Used on the Search page to generate text-based outputs (TXT, CSV, HTML, etc.) such as reports, summaries, or contact lists from search results. | ||

:'''[[AI Productivity Helpers]]''' – A collection of LLM-powered tools that assist Grooper Designers with tasks such as writing regular expressions, building Data Models, and composing AI Search queries. | |||

====VLM capabilities (experimental)==== | |||

Grooper | We are experimenting with Vision-Language Model (VLM) integration in Grooper. The following activities are available in current builds for experimentation but are not yet considered production-ready: | ||

:'''VLM Analyze''' – Analyzes pages or folders using a VLM and saves the response as structured JSON for downstream extraction. This JSON can be accessed by LLM-based constructs using the "JSON File" Quoting Method. | |||

:'''VLM OCR''' – Uses VLM models to recognize text from images, built on the VLM Analyze activity. | |||

=== | ====LLM-related properties and concepts==== | ||

[ | :[[Parameters]] – Properties that adjust how an LLM generates responses (for example, Temperature controls randomness). | ||

:[[Quoting Method]] – Determines what content is provided to an LLM, such as full document text, partial text, layout data, extracted values, or combinations thereof. | |||

:[[Alignment]] – Controls how LLM-based results are highlighted and aligned in the Document Viewer. | |||

:[[Prompt Engineering]] – The practice of designing and refining prompts to obtain desired responses from an LLM. | |||

<section end="llm-constructs" /> | |||

=== AI Search and the Search page === | |||

[ | '''[[AI Search]]''' – Enables document indexing and searching within Grooper. Once an index is created and documents are added, users can search document content and extracted data from the Search page. | ||

Simple and advanced query mechanisms provide flexibility in search criteria. The Search page can be used for research or to execute document processing commands. | |||

The [[AI Search]] article covers: | |||

* [[AI Search and the Search Page#How To|How to integrate Grooper with Azure AI Search and set up a new index]] | |||

* [[AI Search and the Search Page#Index documents and data from Grooper|The different ways to index documents]] | |||

* [[AI Search and the Search Page#Indexing Service|Setting up a background indexing service]] | |||

* [[AI Search and the Search Page#Search Page|Using the Search Page]] | |||

* [[AI Search and the Search Page#Search Page Commands|Available Search Page commands]] | |||

=== AI Assistants and the Chat page === | |||

'''[[AI Assistant]]''' – Enables the Chat page, allowing users to interact conversationally with documents and data. AI Assistants can access AI Search indexes, databases connected via Grooper Data Connections, and web services defined using RAML. | |||

AI Assistants use retrieval-augmented generation (RAG) to build a retrieval plan that determines what content is relevant to a conversation, enabling natural-language interaction with enterprise documents and data. | |||

=== Azure Document Intelligence integration === | |||

Azure Document Intelligence is a Microsoft cloud service that enables OCR and document analysis. Grooper’s integration allows organizations to leverage Azure’s advanced machine learning models for text extraction, layout analysis, and semantic understanding across both machine-printed and handwritten documents. | |||

Grooper connects to Azure Document Intelligence by configuring the '''[[Azure Document Intelligence]]''' Repository Option on the {{IconName|Root}} Root node using an API key and resource name. | |||

: | With the Azure Document Intelligence option configured, Grooper leverages the service in two primary ways: | ||

* '''[[Azure DI OCR]]''' – Used by the [[Recognize]] activity for text extraction and layout data collection. | |||

* '''[[DI Analyze]]''' – Used for comprehensive document analysis leveraged by AI-enabled features such as [[AI Extract]]. | |||

** <li class="fyi-bullet"> This analysis produces JSON data files that are used by the '''DI Layout''' quoting method when configuring AI-enabled features. | |||

== OpenAI and Azure account setup == | == OpenAI and Azure account setup == | ||

| Line 407: | Line 338: | ||

# In the "URL" property, enter the "Target URI" from Azure | # In the "URL" property, enter the "Target URI" from Azure | ||

#:*<li class="fyi-bullet"> For Azure OpenAI model deployments this will resemble: | #:*<li class="fyi-bullet"> For Azure OpenAI model deployments this will resemble: | ||

#:**<code><nowiki>https://{your-resource-name}.openai.azure.com/openai/deployments/{ | #:**<code><nowiki>https://{your-resource-name}.openai.azure.com/openai/deployments/{deployment-name}/chat/completions?api-version={api-version}</nowiki></code> for Chat Service deployments | ||

#:**<code><nowiki>https://{your-resource-name}.openai.azure.com/openai/deployments/{ | #:***<li class="attn-bullet"> At this time, the URL '''must''' take this format. If you copy a URL with a <code>responses</code> endpoint from Azure, it will not work in Grooper. You will need to manually create the URL, filling in your resource's name and deployment name. Be sure to use the deployment's name and '''not''' the model's name. | ||

#:**<code><nowiki>https://{your-resource-name}.openai.azure.com/openai/deployments/{deployment-name}/embeddings?api-version={api-version}</nowiki></code> for Embeddings Service deployments | |||

#:*<li class="fyi-bullet"> For other models deployed in Azure AI Foundry (formerly Azure AI Studio) this will resemble: | #:*<li class="fyi-bullet"> For other models deployed in Azure AI Foundry (formerly Azure AI Studio) this will resemble: | ||

#:** <code><nowiki>https://{model-id}.{your-region}.models.ai.azure.com/v1/chat/completions</nowiki></code> for Chat Service deployments | #:** <code><nowiki>https://{model-id}.{your-region}.models.ai.azure.com/v1/chat/completions</nowiki></code> for Chat Service deployments | ||
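Because the Azure OpenAI URL may need to be assembled by hand, a small helper can reduce copy-and-paste mistakes. The sketch below follows the Azure OpenAI URL patterns shown above; the function name and the sample resource, deployment, and API version values are placeholders chosen for this example.

```python
def azure_openai_url(resource_name, deployment_name, api_version, endpoint="chat/completions"):
    """Assemble an Azure OpenAI deployment URL in the format shown above.

    endpoint must be "chat/completions" (Chat Service) or "embeddings" (Embeddings Service).
    """
    if endpoint not in ("chat/completions", "embeddings"):
        raise ValueError(f"Unsupported endpoint: {endpoint}")
    return (
        f"https://{resource_name}.openai.azure.com/openai/deployments/"
        f"{deployment_name}/{endpoint}?api-version={api_version}"
    )


if __name__ == "__main__":
    # Placeholder values; substitute your own resource, deployment, and API version.
    print(azure_openai_url("my-resource", "my-deployment", "2024-02-01"))
    print(azure_openai_url("my-resource", "my-embeddings", "2024-02-01", endpoint="embeddings"))
```

Note that the second argument is the deployment's name, not the model's name.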

| Line 461: | Line 393: | ||

* [https://learn.microsoft.com/en-us/azure/ai-studio/ Azure AI Studio full documentation] | * [https://learn.microsoft.com/en-us/azure/ai-studio/ Azure AI Studio full documentation] | ||

* [https://azure.microsoft.com/en-us/products/ai-model-catalog Azure AI model catalog] | * [https://azure.microsoft.com/en-us/products/ai-model-catalog Azure AI model catalog] | ||

== OpenAI and Azure OpenAI rate limits == | |||

=== OpenAI rate limits === | |||

[https://platform.openai.com/docs/guides/rate-limits OpenAI's full rate limit documentation] | |||

Rate limits restrict how many requests or tokens you can send to the OpenAI API in a specific time frame. Limits are set at the organization and project level (not per individual user) and vary by model type. Rate limits are measured in several ways: | |||

* RPM - Requests per minute (the number of calls to the "chat/completions" or "embeddings" endpoints per minute) | |||

* RPD - Requests per day (the number of calls to the "chat/completions" or "embeddings" endpoints per day) | |||

* TPM - Tokens per minute (effectively how much text is sent and received per minute) | |||

* TPD - Tokens per day (effectively how much text is sent and received per day) | |||

* Usage limit (OpenAI limits the total amount an organization can spend on the API per month) | |||

OpenAI places users in "usage tiers" based on how much they spend monthly with OpenAI and how old their account is. As usage tiers increase, so do the rate limit thresholds for each model. | |||

{|class="wikitable" | |||

|'''Tier'''||'''Qualification'''||'''Usage limits''' | |||

|- | |||

|Free||User must be in an allowed geography||$100 / month | |||

|- | |||

|Tier 1||$5 paid||$100 / month | |||

|- | |||

|Tier 2||$50 paid and 7+ days since first successful payment||$500 / month | |||

|- | |||

|Tier 3||$100 paid and 7+ days since first successful payment||$1,000 / month | |||

|- | |||

|Tier 4||$250 paid and 14+ days since first successful payment||$5,000 / month | |||

|- | |||

|Tier 5||$1,000 paid and 30+ days since first successful payment||$200,000 / month | |||

|} | |||

Users will automatically move up in usage tiers the more they spend with OpenAI. Users may also contact OpenAI support to request an increase in usage tier. | |||

[https://platform.openai.com/settings/organization/limits You can view your current usage tier and rate limits in the Limits page of the OpenAI Platform.] | |||

*<li class="attn-bullet"> For Grooper users just getting started with the OpenAI API, the low rate limits of the lower usage tiers can cause large volumes of work to time out. Each time Grooper executes one of its LLM-based features (such as an AI Extract operation), it sends a request to OpenAI (these count toward your requests per minute/day), handing it document text and system messages (these count toward your tokens per minute/day). | |||
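A quick back-of-the-envelope calculation shows how these limits translate into processing time. The sketch below estimates the minimum number of minutes needed to send a batch of requests without exceeding RPM or TPM. The function name and all of the numbers are hypothetical; check the Limits page for your real values.

```python
import math


def estimate_minutes(num_requests, avg_tokens_per_request, rpm_limit, tpm_limit):
    """Lower bound on the minutes needed to send a batch within RPM and TPM limits."""
    minutes_by_requests = num_requests / rpm_limit
    minutes_by_tokens = (num_requests * avg_tokens_per_request) / tpm_limit
    # Whichever limit is slower determines the overall duration.
    return math.ceil(max(minutes_by_requests, minutes_by_tokens))


if __name__ == "__main__":
    # Hypothetical batch: 10,000 LLM requests averaging 4,000 tokens each,
    # against placeholder limits of 500 RPM and 200,000 TPM.
    print(estimate_minutes(10_000, 4_000, rpm_limit=500, tpm_limit=200_000))
```

With these placeholder numbers the token limit, not the request limit, determines the duration: the request limit alone would allow the batch in 20 minutes, but the token limit stretches it to 200.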

=== Azure OpenAI rate limits === | |||

[https://learn.microsoft.com/en-us/azure/ai-foundry/openai/quotas-limits?tabs=REST Azure AI Foundry full rate limit documentation.] | |||

Azure OpenAI models are deployed with Azure AI Foundry. This allows users to deploy OpenAI models outside of OpenAI's infrastructure as a service you manage in your Azure environment. They are still rate limited. However, in general, users are able to achieve greater throughput deploying Azure OpenAI models than using the OpenAI API. ''This is especially the case for new OpenAI accounts.'' | |||

Quotas and limits aren't enforced at the tenant level. Instead, the highest level of quota restrictions is scoped at the Azure subscription level. Rate limits are similar to the OpenAI API's rate limits: | |||

* RPM - Requests per minute (the number of calls to the "chat/completions" or "embeddings" endpoints per minute) | |||

* TPM - Tokens per minute (effectively how much text is sent and received per minute) | |||

Several different factors affect your rate limits. | |||

* '''Global vs Data Zone deployments''' | |||

** Global deployments have larger rate limits and are better suited for high-volume workloads. | |||

** Example: Global Standard gpt-4.1 (Default) = 1M tokens per minute & 1K requests per minute | |||

** Example: Data Zone Standard gpt-4.1 (Default) = 300K tokens per minute & 300 requests per minute | |||

* '''Subscription tier''' | |||

** Enterprise and Microsoft Customer Agreement - Enterprise (MCA-E) vs Default | |||

** Enterprise and MCA-E agreements have larger rate limits than the default "pay-as-you-go" style agreement. The Default tier is best suited for testing and small teams, MCA-E for mid-to-large organizations, and Enterprise for large organizations, especially those in regulated industries. | |||

** Example: Global Standard gpt-4.1 (Enterprise and MCA-E) = 5M tokens per minute & 5K requests per minute | |||

** Example: Global Standard gpt-4.1 (Default) = 1M tokens per minute & 1K requests per minute | |||

* '''Region''' | |||

** Rate limits are defined per region (e.g. South Central US, East US, West Europe, etc.) | |||

** However, you are not limited to a single global quota. You can deploy models in multiple regions to effectively increase your throughput (as long as your subscription supports it). | |||

* '''Model''' | |||

** Similar to OpenAI, rate limits differ depending on the model you use. | |||

** Example: Global Standard gpt-4.1 (Enterprise and MCA-E) = 5M tokens per minute & 5K requests per minute | |||

** Example: Global Standard gpt-4o (Enterprise and MCA-E) = 30M tokens per minute & 180K requests per minute | |||
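The example quotas listed above can be compared by asking how many requests per minute each deployment could sustain for a given request size. The sketch below uses the quota figures from the examples in this section; the function name and the 4,000-token average request size are assumptions for illustration, and actual quotas may change over time.

```python
# (tokens per minute, requests per minute), taken from the examples above.
EXAMPLE_QUOTAS = {
    "Global Standard gpt-4.1 (Default)": (1_000_000, 1_000),
    "Data Zone Standard gpt-4.1 (Default)": (300_000, 300),
    "Global Standard gpt-4.1 (Enterprise and MCA-E)": (5_000_000, 5_000),
    "Global Standard gpt-4o (Enterprise and MCA-E)": (30_000_000, 180_000),
}


def sustainable_requests_per_minute(tpm, rpm, avg_tokens_per_request):
    """Requests per minute allowed once both the TPM and RPM limits are respected."""
    return min(rpm, tpm // avg_tokens_per_request)


if __name__ == "__main__":
    avg_tokens = 4_000  # hypothetical average of prompt plus response tokens
    for name, (tpm, rpm) in EXAMPLE_QUOTAS.items():
        rate = sustainable_requests_per_minute(tpm, rpm, avg_tokens)
        print(f"{name}: about {rate} requests per minute")
```

At 4,000 tokens per request, the token quota rather than the request quota is the limiting factor for every example deployment listed.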

== Does OpenAI or Azure AI Foundry save my data or use it for training? == | |||

: ''For OpenAI models'' | |||

:: Grooper integrates with the OpenAI API, ''not'' ChatGPT. When using the OpenAI API, your data (prompts, completions, embeddings, and fine-tuning data) is not used to train or improve OpenAI models (unless you explicitly opt in to share data with OpenAI). Your data is not available to other customers or other third parties. | |||

:: All data passed to and from OpenAI (prompts, completions, embeddings, and fine-tuning data) is encrypted in transit. | |||

:: Data is stored when you supply fine-tuning data for your own custom models. Fine-tuned models are available to you and no one else (without your consent). All stored fine-tuning data may be deleted at your discretion. All stored data is encrypted at rest. The OpenAI API may store logs for up to 30 days for abuse monitoring. However, OpenAI offers a "zero data retention" option for trusted customers with sensitive applications. You will need to contact the [https://openai.com/contact-sales/ OpenAI sales team] for more information on obtaining a zero data retention policy. | |||

::* Relevant links: | |||

::**[https://platform.openai.com/docs/guides/your-data See here for more on data controls in the OpenAI API.] | |||

::** [https://openai.com/policies/data-processing-addendum/ OpenAI Data Processing Addendum] | |||

::** [https://openai.com/enterprise-privacy/ OpenAI enterprise privacy policy] | |||

::** [https://platform.openai.com/docs/models/how-we-use-your-data OpenAI API - How we use your data] | |||

:''For Azure AI Foundry Models (including Azure OpenAI models)'' | |||

:: Azure AI models are deployed in Azure resources in your tenant and operate as a service under your control. Your data (prompts, completions, embeddings, and fine-tuning data) is not available to other customers, OpenAI, or other third parties. Your data is not used to train or improve models by Microsoft, OpenAI, or any other third party without your permission or instruction. | |||

:: All data passed to and from the model service (prompts, completions, embeddings, and fine-tuning data) is encrypted in transit. | |||

:: Some data is saved in certain cases, such as data saved for fine-tuning your own custom models. All stored data is encrypted at rest. All data may be deleted at your discretion. Azure will not store prompts and completions without enabling features that do so. Azure OpenAI may store logs for up to 30 days for abuse monitoring purposes, but this can be disabled for approved applications. | |||

::* Relevant links: | |||

::**[https://learn.microsoft.com/en-us/azure/ai-foundry/how-to/concept-data-privacy See here for Azure AI Foundry's data privacy summary] | |||

::**[https://learn.microsoft.com/en-us/azure/ai-foundry/responsible-ai/openai/data-privacy See here for Azure's OpenAI data privacy policies] | |||

== More information on specific models == | == More information on specific models == | ||

| Line 480: | Line 502: | ||

=== Known issues with certain models === | === Known issues with certain models === | ||

Occasionally, newly released models may not behave the same as previous models when used in Grooper. When this occurs, the table below will be updated with known issues, their impact on Grooper functionality, and any recommended workarounds. | Occasionally, newly released models may not behave the same as previous models when used in Grooper. In other cases, certain Parameters configurations may not be supported. When this occurs, the table below will be updated with known issues, their impact on Grooper functionality, and any recommended workarounds. | ||

{| class="wikitable" style="margin:auto" | {| class="wikitable" style="margin:auto" | ||

| Line 486: | Line 508: | ||

! Model name !! Date reported !! Notes | ! Model name !! Date reported !! Notes | ||

|- | |- | ||

| gpt-5 || | | gpt-5 (and above) || NA || gpt-5 does not support the use of the '''Temperature''' or '''Top P''' parameters. Use the default Temperature and Top P settings in Grooper to avoid a Bad Request error. | ||

:*<li class="fyi-bullet"> gpt-5.2 does support these parameters when reasoning effort is set to ''none''. However, Grooper does not expose a ''none'' option at this time. | |||

|- | |||

| gpt-5.1 (and above) || NA || gpt-5.1 does not support the ''minimal'' option for the '''Reasoning Effort''' parameter. Use ''low'', ''medium'' or ''high'' to avoid a Bad Request error. | |||

|- | |||

| gpt-5.1 (and above) || 1/30/2026 || Grooper does not currently expose a ''none'' option for the '''Reasoning Effort''' parameter. Development is evaluating how to address this best but has not implemented a solution at the time this is written. | |||

|- | |||

| Structured Outputs supported models || NA || OpenAI's Structured Outputs feature is designed to make the model stick to a developer-supplied JSON schema. Paradoxically, there are scenarios where Structured Outputs can cause the model to be thrown in a repetitive loop when creating its JSON response. If you find this issue is affecting you, change the "Use Structured Output" property to ''False''. | |||

|} | |} | ||

| Line 497: | Line 526: | ||

Refer to the documentation for your chosen model to verify supported parameters before modifying default settings. | Refer to the documentation for your chosen model to verify supported parameters before modifying default settings. | ||

|} | |||
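The parameter restrictions in the known-issues table can be expressed as a small pre-flight check. The sketch below encodes only the rules stated in that table. The function names are hypothetical, the version parsing assumes model names of the form <code>gpt-N</code> or <code>gpt-N.M</code> with optional suffixes, and a value of <code>None</code> stands for "left at the Grooper default".

```python
import re


def parse_gpt_version(model):
    """Return (major, minor) for names like 'gpt-5' or 'gpt-5.1', or None if unrecognized."""
    match = re.match(r"gpt-(\d+)(?:\.(\d+))?", model)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2) or 0)


def check_parameters(model, temperature=None, top_p=None, reasoning_effort=None):
    """List conflicts with the known issues above; None means 'left at the default'."""
    version = parse_gpt_version(model)
    problems = []
    if version is None:
        return problems  # Unknown naming scheme: nothing to check in this sketch.
    if version >= (5, 0):
        if temperature is not None:
            problems.append("Temperature is not supported; use the default setting.")
        if top_p is not None:
            problems.append("Top P is not supported; use the default setting.")
    if version >= (5, 1) and reasoning_effort == "minimal":
        problems.append("Reasoning Effort 'minimal' is not supported; use low, medium or high.")
    return problems


if __name__ == "__main__":
    print(check_parameters("gpt-5", temperature=0.2))
    print(check_parameters("gpt-5.1", reasoning_effort="minimal"))
    print(check_parameters("gpt-4.1", temperature=0.2))
```

A check like this only reflects the issues known at the time of writing, so the model's own documentation remains the authority on supported parameters.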

== Grooper before AI and after AI == | |||

<big>This used to be hard</big> | |||

''Less configuration. Better results.'' | |||

AI has fundamentally changed how the Grooper platform is configured — leading to accelerated deployments and systems that are easier to manage. | |||

{| class="wikitable" style="width:100%" | |||

|- | |||

! style="width:50%; background:#eeeeee;" | Old way | |||

! style="width:50%; background:#e1f5ee; color:#085041;" | New way | |||

|- | |||

| style="vertical-align:top; padding:10px;" | | |||

* Relied on lots of manual configuration | |||

* Tedious and time-consuming | |||

* Difficult to manage at scale | |||

* Required deep platform knowledge up front | |||

| style="vertical-align:top; padding:10px; background:#f4fbf8; color:#0f6e56;" | | |||

* AI-driven and largely automatic | |||

* Configured with natural language prompts | |||

* Easier to troubleshoot and maintain | |||

* Accessible without years of platform expertise | |||

|} | |||

=== Image cleanup for OCR === | |||

''Defects on images interfere with traditional OCR engines, leading to poor accuracy and data capture failures.'' | |||

{| class="wikitable" style="width:100%" | |||

|- | |||

! style="width:50%; background:#eeeeee;" | Old way — IP Profiles | |||

! style="width:50%; background:#e1f5ee; color:#085041;" | New way — Azure DI OCR | |||

|- | |||

| style="vertical-align:top; padding:10px;" | | |||

Build IP (Image Processing) Profiles to adjust the image prior to running an OCR engine. | |||

* No universal set of commands works across all image types and sources | |||

* Extensive configuration and testing required per job | |||

* Complexity compounds with every step added to a profile | |||

| style="vertical-align:top; padding:10px; background:#f4fbf8; color:#0f6e56;" | | |||

Uses Azure Document Intelligence to analyze images, providing an AI-based OCR engine trained on millions of images of varied types and quality. | |||

* Requires no pre-OCR cleanup in nearly all cases | |||

* Works out of the box with zero image tuning | |||

* Handles a wide variety of image qualities automatically | |||

|- | |||

| colspan="2" style="background:#f0f8f5; padding:10px; color:#0f6e56;" | | |||

'''Result:''' ''Days of image tuning reduced to zero configuration in most deployments.'' | |||

|} | |||

=== Document separation === | |||

''Scanned or digital page collections need to be split into individual documents — often one of the hardest problems in document processing.'' | |||

{| class="wikitable" style="width:100%" | |||

|- | |||

! style="width:50%; background:#eeeeee;" | Old way — 7 Separation Providers | |||

! style="width:50%; background:#e1f5ee; color:#085041;" | New way — AI Separate | |||

|- | |||

| style="vertical-align:top; padding:10px;" | | |||

Choose from and configure one of seven different Separation Providers. | |||

* Required expertise in each provider's logic and limitations | |||

* Each provider designed for narrow, predictable separation scenarios | |||

* Most providers required heavy configuration | |||

* Using "ESP Auto Separation" adds curated training data complexity | |||

| style="vertical-align:top; padding:10px; background:#f4fbf8; color:#0f6e56;" | | |||

A Separation Provider that uses an LLM to identify document boundaries from natural language instructions. | |||

* Instructions written in plain, human-readable language | |||

* Handles unpredictable separation points with ease | |||

* Optional reasoning output makes troubleshooting simple | |||

|- | |||

| colspan="2" style="background:#f0f8f5; padding:10px; color:#0f6e56;" | | |||

'''Result:''' ''Separation logic that once required training data and specialist knowledge is now written in plain English.'' | |||

|} | |||

=== Document classification === | |||

''Documents of different types need to be identified and routed correctly before data can be extracted from them.'' | |||

{| class="wikitable" style="width:100%" | |||

|- | |||

! style="width:50%; background:#eeeeee;" | Old way — Lexical Classification | |||

! style="width:50%; background:#e1f5ee; color:#085041;" | New way — LLM Classifier | |||

|- | |||

| style="vertical-align:top; padding:10px;" | | |||

Curate sample documents for each Document Type and train a classification model. | |||

* Required curated sample documents per Document Type | |||

* Training sets needed regular maintenance as formats evolved | |||

* New Document Types meant retraining from scratch | |||

* Edge cases and lookalike documents caused misclassification | |||

| style="vertical-align:top; padding:10px; background:#f4fbf8; color:#0f6e56;" | | |||

Classify documents by describing them in natural language — no samples or training required. | |||

* No sample documents or training required to get started | |||

* Handles novel document types immediately | |||

* Reasoning output explains every classification decision | |||

|- | |||

| colspan="2" style="background:#f0f8f5; padding:10px; color:#0f6e56;" | | |||

'''Result:''' ''Adding a new Document Type goes from a multi-day training exercise to writing a paragraph.'' | |||

|} | |||

=== Data extraction === | |||

''Specific fields need to be located and extracted from documents — even when layouts vary across vendors, versions, or sources.'' | |||

{| class="wikitable" style="width:100%" | |||

|- | |||

! style="width:50%; background:#eeeeee;" | Old way — Extractors and templates | |||

! style="width:50%; background:#e1f5ee; color:#085041;" | New way — AI Extract | |||

|- | |||

| style="vertical-align:top; padding:10px;" | | |||

Build rule-based (or worse, zone-based) extractors for each field, table, and section of each document. | |||

* Zone-based extractors broke whenever layouts changed | |||

* Rule-based extractors required regex or other technical expertise | |||

* Each vendor or form variant may need its own template (Document Type) and override logic | |||

* Too much to learn: 25 Value Extractors. 7 Table Extract Methods. 11 Section Extract Methods. | |||

* Maintenance burden grew with every new document source | |||

| style="vertical-align:top; padding:10px; background:#f4fbf8; color:#0f6e56;" | | |||

Describe what to extract in plain language. Works across varied formats automatically. | |||

* Layout-agnostic — works across varied formats without template changes | |||

* One extraction definition handles multiple document variants | |||

* Contextual understanding catches fields rule-based tools miss | |||

|- | |||

| colspan="2" style="background:#f0f8f5; padding:10px; color:#0f6e56;" | | |||

'''Result:''' ''A single natural language prompt replaces dozens of fragile field rules and vendor templates.'' | |||

|} | |||

=== Document analysis === | |||

''Documents are one part image, one part text. Understanding a document means understanding both — not just the characters on the page, but how they're arranged and related.'' | |||

{| class="wikitable" style="width:100%" | |||

|- | |||

! style="width:50%; background:#eeeeee;" | Old way — Raw text | |||

! style="width:50%; background:#e1f5ee; color:#085041;" | New way — DI Analyze | |||

|- | |||

| style="vertical-align:top; padding:10px;" | | |||

Recognize text using OCR and feed raw output to extractors for separation, classification, and data extraction. | |||

* Raw text includes character positions only — no structural context | |||

* Relationships between text segments are invisible to the engine | |||

* Users must define those relationships manually using extractors | |||

* Layout cues like headings, columns, and tables are effectively lost | |||

| style="vertical-align:top; padding:10px; background:#f4fbf8; color:#0f6e56;" | | |||

Uses Azure Document Intelligence to recognize text and analyze layout relationships simultaneously, producing structured output specifically designed to feed Grooper's AI-enabled features. | |||

* Captures document structure without complicated setup | |||

* Handles structured, semi-structured, and unstructured documents natively | |||

* Identifies table structures with high accuracy, including tables that span pages | |||

* Structured output is purpose-built as input for AI Separate, LLM Classifier, AI Extract, and other AI-enabled features | |||

|- | |||

| colspan="2" style="background:#f0f8f5; padding:10px; color:#0f6e56;" | | |||

'''Result:''' ''DI Analyze gives Grooper's AI-enabled features a richer starting point — turning a page of pixels into structured, relationship-aware content that improves separation, classification, and extraction outcomes.'' | |||

|} | |||

=== Image analysis === | |||

''Documents aren't just text. Signatures, photos, charts, checkboxes, stamps — for most of Grooper's history, these visual elements were either ignored or required painstaking configuration to detect. VLMs change that entirely.'' | |||

{| class="wikitable" style="width:100%" | |||

|- | |||

! style="width:50%; background:#eeeeee;" | Old way — IP Profiles | |||

! style="width:50%; background:#e1f5ee; color:#085041;" | New way — VLM Analyze | |||

|- | |||

| style="vertical-align:top; padding:10px;" | | |||

Use IP Commands to locate specific, predefined document features. | |||

* Limited to a narrow set of detectable features: lines, checkboxes, and barcodes | |||

* Commands required configuration and offered no flexibility outside their designed purpose | |||

* Detecting irregular shapes like stamps required a custom image training process | |||

* Photos were unanalyzable — content was invisible to the platform | |||

* Charts and graphs could not be read or interpreted in any meaningful way | |||

* Visual elements outside the supported feature set were simply ignored | |||

| style="vertical-align:top; padding:10px; background:#f4fbf8; color:#0f6e56;" | | |||

Uses Vision-Language Models to analyze document images from a natural language prompt, producing structured output designed to feed Grooper's AI-enabled features. | |||

* Analyze any visual element on a document — photos, signatures, stamps, charts, diagrams, and more | |||

* Describe what you're looking for in plain language — no configuration required | |||

* Extract meaningful information from charts and graphs, not just detect their presence | |||

* Previously supported layout features like checkboxes and barcodes are still collected and available as before | |||

* Opens up entirely new document processing use cases that were simply not possible before | |||

|- | |||

| colspan="2" style="background:#f0f8f5; padding:10px; color:#0f6e56;" | | |||

'''Result:''' ''Image analysis used to mean detecting a handful of predefined shapes. With VLMs, it means understanding anything a human eye can see — described in plain language, no configuration required.'' | |||

|} | |} | ||

Latest revision as of 11:00, 14 April 2026

Grooper's groundbreaking AI-based integrations make getting good data from your documents a reality with less setup than ever before.

Artificial intelligence and machine learning technology has always been a core part of the Grooper platform. It all started with ESP Auto Separation's capability to separate loose pages into discrete documents using trained examples. As Grooper has progressed, we've integrated with other AI technologies to improve our product.

Grooper not only offers Azure's machine learning-based OCR engine as an option, but we improve its results with supplementary data from traditional OCR engines. This gives you the "best of both worlds": highly accurate character recognition and position data for both machine-printed and handwritten text with minimal setup.

In version 2024, Grooper added a slew of features incorporating cutting-edge AI technologies into the platform. These AI-based features accelerate Grooper's design and development. The end result is easier deployments, results with less setup and, for the first time in Grooper, a document search and retrieval mechanism.

In version 2025, we introduced AI Assistants, a conversational AI that is knowledgeable about your documents and more. With AI Assistants, users can ask a chatbot persona questions about resources it is connected to, including documents, databases and web services.

Grooper's AI integrations and features include:

- Azure OCR - A machine learning based OCR engine offered by Microsoft Azure.

- Large language model (LLM) based data extraction at scale

- A robust and speedy document search and retrieval mechanism

- Document assistants users can chat with about their documents

In this article, you will find:

- Relevant links to articles in the Grooper Wiki

- Quickstart guides to set up OpenAI and Azure accounts to integrate functionality with Grooper

Relevant AI constructs and article links

Here you will find links to all currently available articles pertaining to Grooper AI functionality.

Azure OCR

- Azure OCR – An OCR Engine option for OCR Profiles that utilizes Microsoft Azure's Read API. Azure Read is an AI-based text recognition engine that uses a convolutional neural network (CNN) to recognize text. Compared to traditional OCR engines, it yields superior results, especially for handwritten text and poor-quality images. Grooper supplements Azure's results with those from a traditional OCR engine in areas where traditional OCR performs better.

- Azure DI OCR – This OCR Engine utilizes Azure Document Intelligence for OCR. All models provided by this service use the Azure Read engine for their baseline OCR. Specialized models perform document analysis including text extraction, layout data collection, and more.

- Grooper’s current integration focuses on two models:

- prebuilt-read – Version 4.0 of the Read engine, offering superior machine-printed and handwritten text recognition across nearly any document image.

- prebuilt-layout – Collects additional layout data Grooper can use downstream, including table lines, checkbox data, and barcode data.

- Note: Azure DI OCR requires the "Azure Document Intelligence" Repository Option added to a Grooper Repository. See below for more information on Azure Document Intelligence.

- The Azure DI OCR engine will eventually replace the Azure OCR engine in Grooper. Azure OCR uses an older API, with Read v3.2 being the most recent supported model. Microsoft is no longer updating this API and it will be fully retired by September 25, 2028.


LLM connectivity and constructs

LLM Connectivity

- LLM Connector – A "Repository Option" configured on the Grooper Root node. This option provides connectivity to large language models (LLMs) offered by OpenAI and Microsoft Azure. There are currently two connection options:

- OpenAI – Connects Grooper to LLMs offered through the OpenAI API or compatible APIs.

- Compatible APIs must support chat/completions and embeddings endpoints similar to the OpenAI API to interoperate with Grooper’s LLM features.

- Azure – Connects Grooper to LLMs offered by Microsoft Azure through its Model Catalog, including Azure OpenAI models.
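As a rough illustration of what "compatible" means here, the sketch below builds minimal request bodies in the shape the OpenAI API documents for its chat/completions and embeddings endpoints. The helper names and model names are placeholders chosen for this example. They are not Grooper settings, and the exact requests Grooper sends are not described in this article.

```python
import json


def chat_completions_body(model, system_text, user_text):
    """Minimal JSON body for an OpenAI-style POST to chat/completions."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_text},
            {"role": "user", "content": user_text},
        ],
    }


def embeddings_body(model, text):
    """Minimal JSON body for an OpenAI-style POST to embeddings."""
    return {"model": model, "input": text}


# Placeholder model names; substitute whatever your provider exposes.
chat = chat_completions_body(
    "example-chat-model", "You extract invoice data.", "Invoice text here"
)
emb = embeddings_body("example-embeddings-model", "Invoice text here")
print(json.dumps(chat, indent=2))
print(json.dumps(emb, indent=2))
```

A service that accepts bodies of this shape at both endpoints is a reasonable candidate for the OpenAI provider, though compatibility should still be verified against the service's own documentation.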


LLM-enabled extraction capabilities

- Ask AI – An LLM-based Value Extractor specialized for natural-language responses. This extractor sends the document’s text and a natural-language prompt to a chat completion service. The chatbot’s response becomes the extractor’s result.

- AI Schema Extractor – An LLM-based Value Extractor specialized for structured JSON responses. The AI Schema Extractor enables advanced, schema-driven extraction from unstructured or semi-structured documents, supporting scenarios such as tables, line items, and multi-field records.

- AI Extract – An LLM-based Fill Method for large-scale data extraction. Fill Methods are configured on Data Models and Data Sections. They perform a secondary extraction after child Data Elements extractors and extract methods execute, or they may act as the primary extraction mechanism when no other extractors are configured. AI Extract uses an LLM chat completion service to populate Data Models, often requiring only that Data Elements be defined.

- LLM-enabled Data Section Extract Methods

- AI Collection Reader – An LLM-based Section Extract Method for multi-instance section extraction. AI Collection Reader extends AI Section Reader for repeating records and is optimized for large, multi-page documents that must be processed in chunks to avoid exceeding LLM context limits.

- AI Section Reader – An LLM-based Section Extract Method for single-instance section extraction. It enables advanced extraction from complex, variable, or ambiguous document layouts using generative AI.

- AI Transaction Detection – An LLM-based Section Extract Method specialized for transaction-based documents such as payroll reports or EOBs. It automatically segments documents into individual transactions using detected anchors and can extract structured data from each transaction.

- Clause Detection – Designed to locate specified clause types in natural-language documents. Users provide one or more sample clauses, and an embeddings model identifies the most similar document chunks. Detected sections can then use AI Extract to extract structured data from the clause text.

- AI Table Reader – An LLM-based Data Table Extract Method that enables extraction of tabular data from semi-structured or unstructured documents, even when table layouts are ambiguous or inconsistent.
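To make the Clause Detection idea more concrete, the sketch below ranks document chunks by how similar their embedding vectors are to a sample clause's vector. It assumes cosine similarity as the measure and uses toy three-dimensional vectors in place of real embeddings. The article does not state which similarity measure Grooper uses, so treat this as an illustration of the general technique only.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_chunks(sample_vector, chunk_vectors):
    """Return chunk indexes ordered from most to least similar to the sample."""
    scores = [cosine_similarity(sample_vector, v) for v in chunk_vectors]
    return sorted(range(len(chunk_vectors)), key=lambda i: scores[i], reverse=True)


# Toy vectors standing in for embeddings returned by an embeddings model.
sample_clause = [1.0, 0.0, 1.0]
chunks = [
    [0.0, 1.0, 0.0],  # unrelated text
    [0.9, 0.1, 1.1],  # text very close to the sample clause
    [1.0, 1.0, 0.0],  # partially related text
]
ranking = rank_chunks(sample_clause, chunks)
print(ranking)  # [1, 2, 0]
```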

LLM-enabled separation and classification capabilities

- AI Separate – An LLM-based Separation Provider that evaluates page meaning and structure rather than relying on fixed rules, barcodes, or control sheets.

- LLM Classifier – An LLM-based Classify Method that sends document content and candidate Document Types (with descriptions) to an LLM, which selects the best match.

- Mark Attachments – Assists document separation by attaching documents to parent documents. When the Generative AI option is enabled, an LLM determines whether a document should be attached to the preceding or following document.

Other LLM-enabled capabilities

- AI Generator – Used on the Search page to generate text-based outputs (TXT, CSV, HTML, etc.) such as reports, summaries, or contact lists from search results.

- AI Productivity Helpers – A collection of LLM-powered tools that assist Grooper Designers with tasks such as writing regular expressions, building Data Models, and composing AI Search queries.

VLM capabilities (experimental)

We are experimenting with Vision-Language Model (VLM) integration in Grooper. The following activities are available in current builds for experimentation but are not yet considered production-ready:

- VLM Analyze – Analyzes pages or folders using a VLM and saves the response as structured JSON for downstream extraction. This JSON can be accessed by LLM-based constructs using the "JSON File" Quoting Method.

- VLM OCR – Uses VLM models to recognize text from images, built on the VLM Analyze activity.

- Parameters – Properties that adjust how an LLM generates responses (for example, Temperature controls randomness).

- Quoting Method – Determines what content is provided to an LLM, such as full document text, partial text, layout data, extracted values, or combinations thereof.

- Alignment – Controls how LLM-based results are highlighted and aligned in the Document Viewer.

- Prompt Engineering – The practice of designing and refining prompts to obtain desired responses from an LLM.

AI Search and the Search page

AI Search – Enables document indexing and searching within Grooper. Once an index is created and documents are added, users can search document content and extracted data from the Search page.

Simple and advanced query mechanisms provide flexibility in search criteria. The Search page can be used for research or to execute document processing commands.

The AI Search article covers:

- How to integrate Grooper with Azure AI Search and set up a new index

- The different ways to index documents

- Setting up a background indexing service

- Using the Search Page

- Available Search Page commands

AI Assistants and the Chat page

AI Assistant – Enables the Chat page, allowing users to interact conversationally with documents and data. AI Assistants can access AI Search indexes, databases connected via Grooper Data Connections, and web services defined using RAML.

AI Assistants use retrieval-augmented generation (RAG) to build a retrieval plan that determines what content is relevant to a conversation, enabling natural-language interaction with enterprise documents and data.

Azure Document Intelligence integration

Azure Document Intelligence is a Microsoft cloud service that enables OCR and document analysis. Grooper’s integration allows organizations to leverage Azure’s advanced machine learning models for text extraction, layout analysis, and semantic understanding across both machine-printed and handwritten documents.

Grooper connects to Azure Document Intelligence by configuring the Azure Document Intelligence Repository Option on the database Root node using an API key and resource name.

With the Azure Document Intelligence option configured, Grooper leverages the service in two primary ways:

- Azure DI OCR – Used by the Recognize activity for text extraction and layout data collection.

- DI Analyze – Used for comprehensive document analysis leveraged by AI-enabled features such as AI Extract.

- This analysis produces JSON data files that are used by the DI Layout quoting method when configuring AI-enabled features.
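For orientation, the sketch below shows how an API key and resource name typically map onto an Azure Document Intelligence REST request. It assumes the default https://{resource-name}.cognitiveservices.azure.com endpoint form, the v4.0 REST route, and the 2024-11-30 API version. Resources with custom domains or other API versions will differ, the function name is hypothetical, and nothing here describes how Grooper builds its own requests.

```python
def di_analyze_request(resource_name, api_key, model_id="prebuilt-layout",
                       api_version="2024-11-30"):
    """Return (url, headers) for a Document Intelligence analyze call.

    Assumes the resource uses its default endpoint host name.
    """
    url = (
        f"https://{resource_name}.cognitiveservices.azure.com"
        f"/documentintelligence/documentModels/{model_id}:analyze"
        f"?api-version={api_version}"
    )
    headers = {"Ocp-Apim-Subscription-Key": api_key}
    return url, headers


# "my-di-resource" and the key are placeholders.
url, headers = di_analyze_request("my-di-resource", "<your-api-key>", "prebuilt-read")
print(url)
```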

OpenAI and Azure account setup

For Azure OCR connectivity

Grooper can implement Azure AI Vision's Read engine for OCR. "Read" is a machine learning based OCR engine used to recognize text from images with superior accuracy, including both machine printed and handwritten text.

Azure OCR Quickstart

In Azure:

- If you have not done so already, create an Azure account.

- Go to your Azure portal.

- If you have not done so already, create a "Subscription" and "Resource Group."

- Click the "Create a resource" button.

- Search for "Computer Vision".

- Find "Computer Vision" in the list and click the "Create" button.

- Follow the prompts to create the Computer Vision resource.

- Go to the resource.

- Under "Keys and endpoint", copy the API key.

In Grooper:

- Go to an OCR Profile in Grooper.

- For the OCR Engine property, select Azure OCR.

- Paste the API key in the API Key property.

- Adjust the API Region property to match your Computer Vision resource's Region value

- You can find this by going to the resource in the Azure portal and inspecting its "Location" value.
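The sketch below illustrates why the region value matters: Azure's regional Computer Vision endpoints embed the region's short name in the host name. It assumes the regional endpoint form https://{region}.api.cognitive.microsoft.com and the Read v3.2 route, and it assumes a portal "Location" such as "East US" corresponds to the short name "eastus". The function names are hypothetical, and how Grooper's API Region property is represented internally is not covered here.

```python
def normalize_region(location):
    """Turn a display name such as "East US" into the short form "eastus"."""
    return location.replace(" ", "").lower()


def read_analyze_request(location, api_key):
    """Return (url, headers) for submitting an image to the Read v3.2 engine."""
    region = normalize_region(location)
    url = f"https://{region}.api.cognitive.microsoft.com/vision/v3.2/read/analyze"
    headers = {
        "Ocp-Apim-Subscription-Key": api_key,
        "Content-Type": "application/octet-stream",  # raw image bytes in the body
    }
    return url, headers


url, headers = read_analyze_request("South Central US", "<your-api-key>")
print(url)
```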

External resources

- Azure AI Vision overview

- Azure AI Vision full documentation

- Azure OCR (Read) documentation

- Deploy Azure OCR (Read) on prem

For Azure AI Search connectivity

Grooper uses Azure AI Search's API to create a document search and retrieval mechanism.

Azure AI Search Quickstart

In Azure:

- If you have not done so already, create an Azure account.

- Go to your Azure portal.

- If you have not done so already, create a "Subscription" and "Resource Group."

- Click the "Create a resource" button.

- Search for "Azure AI Search".

- Find "Azure AI Search" in the list and click the "Create" button.

- Follow the prompts to create the Azure AI Search resource.

- The pricing tier defaults to "Standard". There is a "Free" option if you just want to try this feature out.

- Go to the resource.

- In the "Essentials" panel, copy the "Url" value. You will need this in Grooper.

- In the left-hand navigation panel, expand "Settings" and select "Keys"

- Copy the "Primary admin key" or "Secondary admin key." You will need this in Grooper.

In Grooper:

- Connect to the Azure AI Search service by adding the "AI Search" option to your Grooper Repository.

- From the Design page, go to the Grooper Root node.

- Select the Options property and open its editor.

- Add the AI Search option.

- Paste the AI Search service's URL in the URL property.

- Paste the AI Search service's API key in the API Key property.

- Full documentation on Grooper's AI Search capabilities can be found in the AI Search article.
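If you want to confirm the URL and admin key before entering them in Grooper, one option is to call the Azure AI Search REST API directly. The sketch below only assembles the request for listing indexes. It assumes the 2023-11-01 API version, and the function name and service name are placeholders. Sending the request with an HTTP client of your choice is left out.

```python
def list_indexes_request(service_url, admin_key, api_version="2023-11-01"):
    """Return (url, headers) for a GET request that lists a service's indexes."""
    base = service_url.rstrip("/")  # tolerate a trailing slash in the copied URL
    url = f"{base}/indexes?api-version={api_version}"
    headers = {"api-key": admin_key, "Accept": "application/json"}
    return url, headers


url, headers = list_indexes_request(
    "https://my-search-service.search.windows.net/", "<admin-key>"
)
print(url)
```

A successful response to this request indicates the URL and key pair is valid. A 403 response usually points to a wrong or revoked key.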

External resources

- Create an AI Search service in the Azure portal

- Azure AI Search full documentation

- Pricing - Note: Grooper's AI Search integration does not currently use any of the "Premium Features" that incur additional costs beyond the hourly/monthly pricing models.

- How to choose a service tier

- Service limits - Note: This documents each tier's limits, such as number of indexes, partition storage, vector index storage and maximum number of documents.

For LLM connectivity

Grooper can integrate with LLMs from OpenAI and Microsoft Azure to implement various LLM-based functionality. Before you can connect Grooper to these resources, you will need accounts set up with these providers. For information on how to set up these accounts/resources, refer to the following links:

OpenAI LLM Quickstart

In OpenAI:

- If you have not done so already, create an OpenAI account.

- Go to the API keys section of the OpenAI Platform.

- Press the "Create new secret key" button.

- Follow the prompts to create a new key.

- Copy the API key. You will not be able to view/copy it again.

In Grooper:

- Connect to the OpenAI API by adding an "LLM Connector" option to your Grooper Repository.

- From the Design page, go to the Grooper Root node.

- Select the Options property and open its editor.

- Add the LLM Connector option.

- Select the Service Providers property and open its editor.

- Add an OpenAI provider.

- Paste the OpenAI API key in the API Key property.

- Refer to the following articles for more information on functionality enabled by the LLM Connector:

External resources

Azure LLM Quickstart

Microsoft Azure offers access to several LLMs through their "Model Catalog". This includes Azure OpenAI models, Mistral AI models, Meta's Llama models, and more. To access a model from the catalog in Grooper, it must first be deployed in Azure from Azure AI Foundry (formerly Azure AI Studio).

This advice is intended for users wanting to deploy the Azure OpenAI models in the Azure model catalog and use them in Grooper. We will instruct you how to use Azure AI Foundry (formerly Azure AI Studio) to deploy OpenAI models. Deploying other chat completions and embeddings models in the Azure model catalog may require additional setup in Azure.

- Please Note: Azure OpenAI integration with Grooper is different from OpenAI. Each model needs to be deployed in Azure before you can access it in Grooper (whereas the OpenAI provider lets you select from all available models in Grooper).

In Azure: You have two options when it comes to deploying OpenAI models in Azure:

- Option 1: Create an Azure AI Foundry project - There are more steps to creating an Azure AI Foundry project, but it will allow you to deploy non-OpenAI models as well. This is also Azure's stated preference for users.

- Option 2: Create an Azure OpenAI service - Many users find this easier to deploy, but you are limited to deploying only OpenAI models.

Option 1: Create an "Azure AI project" in Azure AI Foundry (formerly Azure AI Studio)

- If you have not done so already, create an Azure account.

- If you have not done so already, create a "Subscription" and "Resource Group" in the Azure portal.

- Go to Azure AI Foundry (formerly Azure AI Studio).

- From the Home page, press the "New project" button.

- A dialogue will appear to create a new project. Enter a name for the project.

- If you don't have an Azure AI hub (or "hub"), select "Create a new hub".

- For more information on "projects" and "hubs" refer to Microsoft's hubs and projects documentation.

- Follow the prompts to create a new hub.

- When selecting your "Location" for the hub, be aware Azure AI services availability differs per region. Certain models may not be available in certain regions.

- When selecting "Connect Azure AI Services or Azure OpenAI", you can either:

- Connect to an existing Azure OpenAI or Azure AI Services resource.

- Or, you can create one.

- If you want access to all models from all providers (not just OpenAI) you must use Azure AI Services.

- Select and deploy an LLM model from your Azure AI hub

- From your hub, select "Models + endpoints"

- Press the "Deploy model" button.

- Select "Deploy base model".

- Choose the OpenAI model of your choice.

- Grooper's integration with Azure's Model Catalog uses either "chat completion" or "embeddings" models depending on the feature. To better narrow down your list of options, expand the "Inference tasks" dropdown and select "Chat completion" and/or "Embeddings."

- Embeddings models are required when enabling "Vector Search" for an Indexing Behavior, when using the Clause Detection section extract method, or when using the "Semantic" Document Quoting method in Grooper.

- If you connected with Azure AI Services, you will have access to all models in Azure's model catalog. However, non-OpenAI models (such as Mistral or Llama) may require additional setup.

- Press the "Confirm" button.

- Press the "Deploy" button.

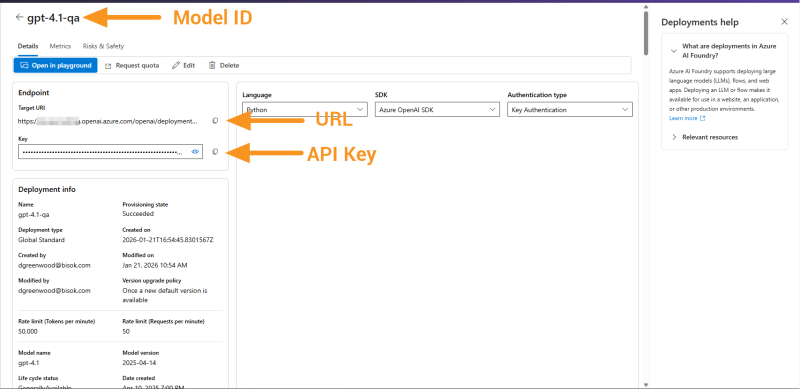

- Get the necessary information you'll need in Grooper

- The model will be deployed in your Azure AI project. In your project, go to "Models + endpoints".

- Select the OpenAI model you deployed.

- Copy the "Target URI". You will need to enter this in Grooper.

- Copy the "Key". You will need to enter this in Grooper.

- Notice as well the "Authentication type" is "Key Authentication". This will be important when configuring Grooper too.

Option 2: Create an Azure OpenAI service

- If you have not done so already, create an Azure account.

- Go to the Azure portal.

- If you have not done so already, create a "Subscription" and "Resource Group" in the Azure portal.

- Search for "Azure OpenAI" and select Azure OpenAI under Services.

- Press the "Create" button and select "Azure OpenAI".

- Fill in the required fields, including a Pricing Tier.

- Press "Next" until you can create the resource.

- Select and deploy a model.

- Go to the newly created Azure OpenAI resource.

- From the Overview section, press the "Go to Foundry portal" button.

- In Azure AI Foundry, go to the Deployments section.

- Press the "Deploy model" button and select "Deploy base model".

- Select the OpenAI model you wish to deploy.

- Using the "Inference tasks" editor, select "Chat completion" for completions models (used for AI Extract and other AI-enabled features in Grooper).

- Using the "Inference tasks" editor, select "Embeddings" for embeddings models (used for AI-enabled features that require embeddings generation such as Vector Search enabled Indexing Behavior configurations).

- Press "Confirm" to confirm your selection.

- Name the deployment (this will be the "Model ID" in Grooper), choose the deployment type and press "Deploy".

- Get the necessary information you'll need in Grooper

- Copy the deployed model's name. You will need to enter this in Grooper.

- Copy the "Target URI". You will need to enter this in Grooper.

- Copy the "Key". You will need to enter this in Grooper.

- Notice as well the "Authentication type" is "Key Authentication". This will be important when configuring Grooper too.

In Grooper:

- Connect to the Azure model deployment by adding an "LLM Connector" option to your Grooper Repository.

- From the Design page, go to the "Grooper Root" node.

- Select the "Options" property and open its editor.

- Add the "LLM Connector" option.

- Select the "Service Providers" property and open its editor.

- Add an "Azure" provider.

- Select the "Deployments" property and open its editor.

- Add a "Chat Service", "Embeddings Service", or both.

- "Chat Services" are required for most LLM-based features in Grooper, such as AI Extract and AI Assistants. "Embeddings Services" are required when enabling "Vector Search" for an Indexing Behavior, when using the Clause Detection section extract method, or when using the "Semantic" Document Quoting method in Grooper.

- In the "Model Id" property, enter the model's name (Example: "gpt-35-turbo").

- In the "URL" property, enter the "Target URI" from Azure

- For Azure OpenAI model deployments this will resemble:

- https://{your-resource-name}.openai.azure.com/openai/deployments/{deployment-name}/chat/completions?api-version={api-version} for Chat Service deployments

- At this time, the URL must take this format. If you copy a URL with a responses endpoint from Azure, it will not work in Grooper. You will need to manually create the URL, filling in your resource's name and deployment name. Be sure to use the deployment's name and not the model's name.

- https://{your-resource-name}.openai.azure.com/openai/deployments/{deployment-name}/embeddings?api-version={api-version} for Embeddings Service deployments

- For other models deployed in Azure AI Foundry (formerly Azure AI Studio) this will resemble:

- https://{model-id}.{your-region}.models.ai.azure.com/v1/chat/completions for Chat Service deployments

- https://{model-id}.{your-region}.models.ai.azure.com/v1/embeddings for Embeddings Service deployments

- Set "Authorization" to the method appropriate for the model deployment in Azure.

- How do I know which method to choose? In Azure, under "Keys and Endpoint", you'll typically see

- "API Key" if the model uses API key authentication (Choose "ApiKey" in Grooper).

- "Microsoft Entra ID" if the model supports token-based authentication via Microsoft Entra ID (Choose "Bearer" in Grooper).

- Azure OpenAI supports both API Key and Microsoft Entra ID (formerly Azure AD) authentication. Azure AI Foundry (formerly Azure AI Studio) models often lean toward token-based (Bearer in Grooper) authentication.


- In the "API Key" property, enter the Key copied from Azure.

- (Recommended) Turn on "Use System Messages" (change the property to "True").

- When enabled, certain instructions Grooper generates and information handed to a model are sent as "system" messages instead of "user" messages. This helps an LLM distinguish between contextual information and input from a user. This is recommended for most OpenAI models, but may not be supported by all compatible services.

- Press "OK" buttons in each editor to confirm changes and press "Save" on the Grooper Root.

- Refer to the following articles for more information on functionality enabled by the LLM Connector:
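Because the Azure OpenAI URL sometimes has to be assembled by hand, a small helper can reduce copy-and-paste mistakes. The sketch below builds the two Azure OpenAI URL formats given in the steps above and shows the HTTP header each authorization style corresponds to in Azure's REST API. The function names are hypothetical, "2024-02-01" is only an example API version, and the mapping of Grooper's "ApiKey" and "Bearer" choices to these headers is an assumption based on their names.

```python
def azure_openai_url(resource_name, deployment_name, api_version, service="chat"):
    """Build the URL for an Azure OpenAI deployment.

    Use the deployment's name, not the underlying model's name.
    """
    paths = {"chat": "chat/completions", "embeddings": "embeddings"}
    if service not in paths:
        raise ValueError(f"service must be one of {sorted(paths)}")
    return (
        f"https://{resource_name}.openai.azure.com/openai/deployments/"
        f"{deployment_name}/{paths[service]}?api-version={api_version}"
    )


def auth_headers(authorization, secret):
    """Return the HTTP header matching an authorization style.

    "ApiKey" sends the key in an api-key header.
    "Bearer" sends a token in an Authorization: Bearer header.
    """
    if authorization == "ApiKey":
        return {"api-key": secret}
    if authorization == "Bearer":
        return {"Authorization": f"Bearer {secret}"}
    raise ValueError("authorization must be 'ApiKey' or 'Bearer'")


# Placeholder resource and deployment names.
chat_url = azure_openai_url("my-resource", "my-gpt-deployment", "2024-02-01")
emb_url = azure_openai_url("my-resource", "my-embed-deployment", "2024-02-01", "embeddings")
print(chat_url)
print(emb_url)
print(auth_headers("ApiKey", "<key-from-azure>"))
```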

Quick way to get configuration info (for models deployed in an Azure AI Foundry project)

- Go to Azure AI Foundry.

- If not already selected, use the dropdown in the top navigation bar to select your project.

- Under "My assets", go to the "Models + endpoints" section.

- Select the model deployment from the list.

- All the info you need will be on this page.

- The Model ID is the name of the deployment you just clicked.

- Copy the full URL under "Target URI"

- Copy the API Key under "Key".

Quick way to get configuration info (for models deployed using Azure OpenAI services)

If you just need the Model ID, URL and API Key for an already deployed model, follow these steps:

- Go to the Azure portal.

- Go to "All Resources".

- Search for "Azure OpenAI"

- Select your Azure OpenAI service from the list.

- From the "Overview" section, press the "Go to Foundry portal" button.

- In Azure AI Foundry, go to the "Deployments" section.

- Select the OpenAI model deployment from the list.

- All the info you need will be on this page.

- The Model ID is the name of the deployment you just clicked.

- Copy the full URL under "Target URI"

- Copy the API Key under "Key".

External resources

- Microsoft's Azure AI Studio quickstart documentation

- Azure AI Studio full documentation

- Azure AI model catalog

OpenAI and Azure OpenAI rate limits

OpenAI rate limits

OpenAI's full rate limit documentation

Rate limits restrict how many requests or tokens you can send to the OpenAI API in a specific time frame. Limits are set at the organization and project level (not per individual user) and vary by model type. Rate limits are measured in several ways:

- RPM - Requests per minute (the number of calls to the "chat/completions" or "embeddings" endpoints per minute)

- RPD - Requests per day (the number of calls to the "chat/completions" or "embeddings" endpoints per day)

- TPM - Tokens per minute (effectively how much text is sent and received per minute)

- TPD - Tokens per day (effectively how much text is sent and received per day)

- Usage limit (OpenAI limits the total amount an organization can spend on the API per month)

OpenAI places users in "usage tiers" based on how much they spend monthly with OpenAI and how old their account is. As usage tiers increase, so do the rate limit thresholds for each model.

| Tier | Qualification | Usage limits |
| --- | --- | --- |
| Free | User must be in an allowed geography | $100 / month |
| Tier 1 | $5 paid | $100 / month |
| Tier 2 | $50 paid and 7+ days since first successful payment | $500 / month |
| Tier 3 | $100 paid and 7+ days since first successful payment | $1,000 / month |
| Tier 4 | $250 paid and 14+ days since first successful payment | $5,000 / month |
| Tier 5 | $1,000 paid and 30+ days since first successful payment | $200,000 / month |

Users will automatically move up in usage tiers the more they spend with OpenAI. Users may also contact OpenAI support to request an increase in usage tier.

You can view your current usage tier and rate limits in the Limits page of the OpenAI Platform.

- For Grooper users just getting started with the OpenAI API, the low rate limits of the starting usage tiers can cause large volumes of work to time out. Each time Grooper executes one of its LLM-based features (such as an AI Extract operation), it sends a request to OpenAI (these count toward your requests per minute/day), handing it document text and system messages (these count toward your tokens per minute/day).
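The qualification rules in the usage tier table can be read as a simple lookup, sketched below. The thresholds are copied from the table in this article and may change on OpenAI's side. The function name is made up for this example, and "Free" is returned on the assumption that the account is in an allowed geography.

```python
# (tier name, minimum USD paid, minimum days since first successful payment)
TIERS = [
    ("Tier 5", 1000, 30),
    ("Tier 4", 250, 14),
    ("Tier 3", 100, 7),
    ("Tier 2", 50, 7),
    ("Tier 1", 5, 0),
]


def usage_tier(total_paid, days_since_first_payment):
    """Return the highest tier whose payment and account-age rules are both met."""
    for name, min_paid, min_days in TIERS:
        if total_paid >= min_paid and days_since_first_payment >= min_days:
            return name
    return "Free"


print(usage_tier(50, 7))     # Tier 2
print(usage_tier(1000, 10))  # Tier 3 (payment qualifies for more, account age does not)
print(usage_tier(0, 0))      # Free
```

The second call shows why a new account can stay in a low tier even after a large payment: the account-age requirement also has to be satisfied.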

Azure OpenAI rate limits

Azure AI Foundry full rate limit documentation.

Azure OpenAI models are deployed with Azure AI Foundry. This allows users to deploy OpenAI models outside of OpenAI's infrastructure as a service you manage in your Azure environment. They are still rate limited. However, in general, users are able to achieve greater throughput deploying Azure OpenAI models than using the OpenAI API. This is especially the case for new OpenAI accounts.

Quotas and limits aren't enforced at the tenant level. Instead, the highest level of quota restrictions is scoped at the Azure subscription level. Rate limits are similar to the OpenAI API's rate limits:

- RPM - Requests per minute (the number of calls to the "chat/completions" or "embeddings" endpoints per minute)

- TPM - Tokens per minute (effectively how much text is sent and received per minute)

Several different factors affect your rate limits.

- Global vs Data Zone deployments

- Global deployments have larger rate limits and are better suited for high-volume workloads.

- Example: Global Standard gpt-4.1 (Default) = 1M tokens per minute & 1K requests per minute

- Example: Data Zone Standard gpt-4.1 (Default) = 300K tokens per minute & 300 requests per minute

- Subscription tier

- Enterprise and Microsoft Customer Agreement - Enterprise (MCA-E) vs Default

- Enterprise and MCA-E agreements have larger rate limits than the default "pay-as-you-go" style agreement. The Default tier is best suited for testing and small teams, MCA-E for mid-to-large organizations, and Enterprise for large organizations, especially those in regulated industries.

- Example: Global Standard gpt-4.1 (Enterprise and MCA-E) = 5M tokens per minute & 5K requests per minute

- Example: Global Standard gpt-4.1 (Default) = 1M tokens per minute & 1K requests per minute

- Region

- Rate limits are defined per region (e.g. South Central US, East US, West Europe, etc.)

- However, you are not limited to a single global quota. You can deploy models in multiple regions to effectively increase your throughput (as long as your subscription supports it).

- Model

- Similar to OpenAI, rate limits differ depending on the model you use.

- Example: Global Standard gpt-4.1 (Enterprise and MCA-E) = 5M tokens per minute & 5K requests per minute

- Example: Global Standard gpt-4o (Enterprise and MCA-E) = 30M tokens per minute & 180K requests per minute
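To see how these limits translate into throughput, the sketch below estimates how many requests per minute a deployment can sustain when both the token and request limits apply. The limit figures are the gpt-4.1 examples quoted above. The 4,000 tokens per request figure is an assumption for illustration; real usage depends on document length, prompts, and response size.

```python
def max_requests_per_minute(tpm_limit, rpm_limit, tokens_per_request):
    """Requests per minute allowed once both TPM and RPM limits are considered."""
    if tokens_per_request <= 0:
        raise ValueError("tokens_per_request must be positive")
    # Whichever limit is reached first caps the throughput.
    return min(rpm_limit, tpm_limit // tokens_per_request)


TOKENS_PER_REQUEST = 4_000  # assumed average of prompt plus response tokens

global_default = max_requests_per_minute(1_000_000, 1_000, TOKENS_PER_REQUEST)
data_zone_default = max_requests_per_minute(300_000, 300, TOKENS_PER_REQUEST)
global_enterprise = max_requests_per_minute(5_000_000, 5_000, TOKENS_PER_REQUEST)

print(global_default)     # 250
print(data_zone_default)  # 75
print(global_enterprise)  # 1250
```

At this request size the token limit, not the request limit, is the bottleneck in all three cases, which is typical for document-heavy workloads.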

Does OpenAI or Azure AI Foundry save my data or use it for training?

- For OpenAI models
  - Grooper integrates with the OpenAI API, not ChatGPT. When using the OpenAI API, your data (prompts, completions, embeddings, and fine-tuning data) is not used for training to improve OpenAI models (unless you explicitly opt in to share data with OpenAI). Your data is not available to other customers or other third parties.
  - All data passed to and from OpenAI (prompts, completions, embeddings, and fine-tuning data) is encrypted in transit.
  - Fine-tuning data for your own custom models is saved. Fine-tuned models are available to you and no one else (without your consent). All stored fine-tuning data may be deleted at your discretion. All stored data is encrypted at rest. The OpenAI API may store logs for up to 30 days for abuse monitoring. However, OpenAI offers a "zero data retention" option for trusted customers with sensitive applications. You will need to contact the OpenAI sales team for more information on obtaining a zero data retention policy.
- For Azure AI Foundry Models (including Azure OpenAI models)
  - Azure AI models are deployed in Azure resources in your tenant and operate as a service under your control. Your data (prompts, completions, embeddings, and fine-tuning data) is not available to other customers, OpenAI, or other third parties. Your data is not used for training to improve models by Microsoft, OpenAI, or any other third parties without your permission or instruction.
  - All data passed to and from the model service (prompts, completions, embeddings, and fine-tuning data) is encrypted in transit.
  - Some data is saved in certain cases, such as data saved for fine-tuning your own custom models. All stored data is encrypted at rest. All data may be deleted at your discretion. Azure will not store prompts and completions unless you enable features that do so. Azure OpenAI may store logs for up to 30 days for abuse monitoring purposes, but this can be disabled for approved applications.

More information on specific models

Model retirements

OpenAI

OpenAI has announced the retirement of several models in ChatGPT, including gpt-4o, gpt-4.1-mini, gpt-5, and others.

- These changes do not affect Grooper’s OpenAI integration at this time.

- Grooper integrates with the OpenAI API, not ChatGPT.

- OpenAI API deprecations and recommended replacements are documented here: OpenAI's Deprecations documentation.

Azure OpenAI

Azure maintains General Availability (GA) models for a minimum of 12 months. Refer to the following for more information:

Known issues with certain models

Occasionally, newly released models may not behave the same as previous models when used in Grooper, or certain Parameters configurations may not be supported. When this occurs, the table below will be updated with known issues, their impact on Grooper functionality, and any recommended workarounds.

| Model name | Date reported | Notes |
|---|---|---|
| gpt-5 (and above) | NA | gpt-5 does not support the use of the Temperature or Top P parameters. Use the default Temperature and Top P settings in Grooper to avoid a Bad Request error. |
| gpt-5.1 (and above) | NA | gpt-5.1 does not support the minimal option for the Reasoning Effort parameter. Use low, medium, or high to avoid a Bad Request error. |
| gpt-5.1 (and above) | 1/30/2026 | Grooper does not currently expose a none option for the Reasoning Effort parameter. Development is evaluating how best to address this but had not implemented a solution at the time of writing. |
| Structured Outputs supported models | NA | OpenAI's Structured Outputs feature is designed to make the model stick to a developer-supplied JSON schema. Paradoxically, there are scenarios where Structured Outputs can cause the model to get stuck in a repetitive loop when creating its JSON response. If you find this issue is affecting you, change the "Use Structured Output" property to False. |

FYI: If you encounter the "Bad Request (HTTP BadRequest – Bad Request)" error when testing extraction, the selected model may not support one or more configured parameters in Grooper. Refer to the documentation for your chosen model to verify supported parameters before modifying default settings.
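The parameter restrictions in the known-issues table can be expressed as a small pre-flight check. The Python sketch below is only an illustration of that logic, not how Grooper or the OpenAI API validates requests. The function names are hypothetical, the parameter keys (`temperature`, `top_p`, `reasoning_effort`) are assumed to follow OpenAI API naming, and parsing the version out of the model name is an assumption about how "and above" might be interpreted.

```python
import re


def model_version(model: str) -> tuple[int, int]:
    """Parse a name such as 'gpt-5.1' or 'gpt-4o' into (major, minor)."""
    match = re.match(r"gpt-(\d+)(?:\.(\d+))?", model)
    if match is None:
        raise ValueError(f"unrecognized model name: {model}")
    # A missing minor version (e.g. 'gpt-5') is treated as 0.
    return int(match.group(1)), int(match.group(2) or 0)


def find_unsupported(model: str, params: dict[str, object]) -> list[str]:
    """List the configured parameters the known-issues table flags for this model."""
    version = model_version(model)
    problems: list[str] = []

    # gpt-5 and above: Temperature and Top P must be left at their defaults.
    if version >= (5, 0):
        for name in ("temperature", "top_p"):
            if params.get(name) is not None:
                problems.append(f"{name} is not supported by {model}")

    # gpt-5.1 and above: the 'minimal' Reasoning Effort option is rejected.
    if version >= (5, 1) and params.get("reasoning_effort") == "minimal":
        problems.append(f"reasoning_effort='minimal' is not supported by {model}")

    return problems


print(find_unsupported("gpt-5", {"temperature": 0.2}))
print(find_unsupported("gpt-4.1", {"temperature": 0.2, "top_p": 0.9}))
print(find_unsupported("gpt-5.1", {"reasoning_effort": "minimal"}))
```

An empty list means none of the table's restrictions apply. Any entry in the list corresponds to a setting that would likely produce the Bad Request error described above.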

Grooper before AI and after AI

This used to be hard.

Less configuration. Better results.

AI has fundamentally changed how the Grooper platform is configured — leading to accelerated deployments and systems that are easier to manage.


Image cleanup for OCR

Defects on images interfere with traditional OCR engines, leading to poor accuracy and data capture failures.

| Old way: IP Profiles | New way: Azure DI OCR |
|---|---|
| Build IP (Image Processing) Profiles to adjust the image prior to running an OCR engine. | Uses Azure Document Intelligence to analyze images, providing an AI-based OCR engine trained on millions of images of varied types and quality. |

Result: Days of image tuning reduced to zero configuration in most deployments.

Document separation

Scanned or digital page collections need to be split into individual documents — often one of the hardest problems in document processing.

| Old way: 7 Separation Providers | New way: AI Separate |
|---|---|
| Choose from and configure one of seven different Separation Providers. | A Separation Provider that uses an LLM to identify document boundaries from natural language instructions. |

Result: Separation logic that once required training data and specialist knowledge is now written in plain English.

Document classification

Documents of different types need to be identified and routed correctly before data can be extracted from them.

| Old way: Lexical Classification | New way: LLM Classifier |
|---|---|
| Curate sample documents for each Document Type and train a classification model. | Classify documents by describing them in natural language, with no samples or training required. |

Result: Adding a new Document Type goes from a multi-day training exercise to writing a paragraph.

Data extraction

Specific fields need to be located and extracted from documents — even when layouts vary across vendors, versions, or sources.

| Old way: Extractors and templates | New way: AI Extract |
|---|---|
| Build rule-based (or worse, zone-based) extractors for each field, table, and section for each document. | Describe what to extract in plain language. Works across varied formats automatically. |

Result: A single natural language prompt replaces dozens of fragile field rules and vendor templates.

Document analysis

Documents are one part image, one part text. Understanding a document means understanding both — not just the characters on the page, but how they're arranged and related.

| Old way: Raw text | New way: DI Analyze |
|---|---|
| Recognize text using OCR and feed raw output to extractors for separation, classification, and data extraction. | Uses Azure Document Intelligence to recognize text and analyze layout relationships simultaneously, producing structured output specifically designed to feed Grooper's AI-enabled features. |

Result: DI Analyze gives Grooper's AI-enabled features a richer starting point, turning a page of pixels into structured, relationship-aware content that improves separation, classification, and extraction outcomes.

Image analysis

Documents aren't just text. Signatures, photos, charts, checkboxes, stamps — for most of Grooper's history, these visual elements were either ignored or required painstaking configuration to detect. VLMs change that entirely.

| Old way: IP Profiles | New way: VLM Analyze |
|---|---|
| Use IP Commands to locate specific, predefined document features. | Uses Vision-Language Models to analyze document images from a natural language prompt, producing structured output designed to feed Grooper's AI-enabled features. |

Result: Image analysis used to mean detecting a handful of predefined shapes. With VLMs, it means understanding anything a human eye can see, described in plain language, with no configuration required.