What's New in Grooper 2023.1

Welcome to Grooper 2023.1!

Grooper version 2023.1 is here! Below you will find brief descriptions of new and changed features. Where available, follow the links to extended articles on a topic.

Expanded web client

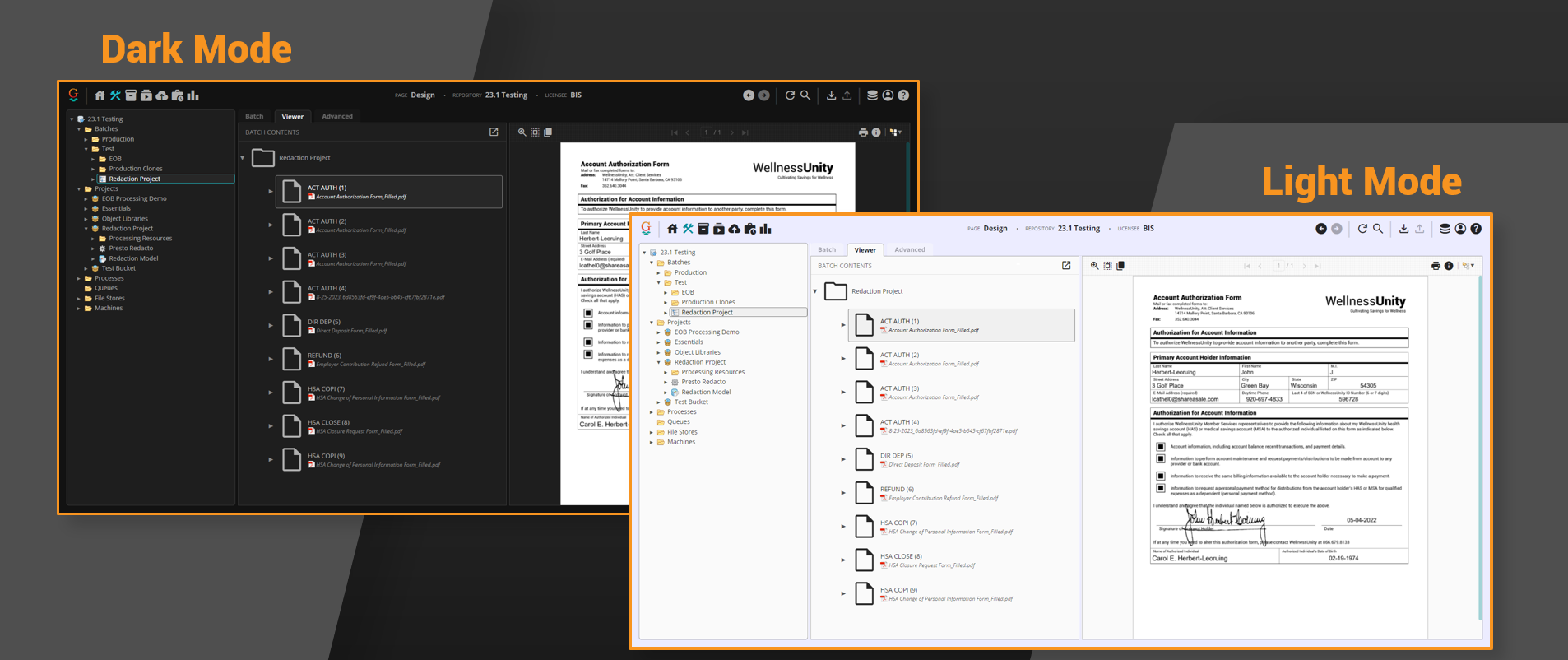

Light mode

Let there be light (mode)! Users can now toggle between dark mode and light mode in the Grooper web client.

Improved scripting for web client

Scripting for the web client is better than ever. Grooper users can now use the web client as their debug target thanks to the GrooperSDK Visual Studio extension.

- The GrooperSDK extension is available for download in the Visual Studio Marketplace.

- This extension allows users to set a web browser as their debug target.

- Users can now download scripts and work on them independently of Grooper, saving changes directly from Visual Studio using the GrooperSDK extension.

Furthermore, users can edit scripts from any machine, not just the Grooper web server, provided the following components are installed:

- Grooper (with a Repository connection made to the Grooper Repository hosted by the web server)

- Grooper Web Client

- IIS

- Visual Studio 2019

- GrooperSDK

For more information, be sure to check out the Remote Scripting Setup article.

New and improved processing features

Secondary Types

Secondary Types is a new property assignable to Batch Folders. This feature allows a single document to be assigned multiple Document Types. Secondary Types can be used with the following activities:

EDI support

Grooper can create Data Models directly from an X12 EDI schema and load data from an EDI file directly into a Grooper document.

- Data Elements adhering to an EDI schema can be created by right-clicking a Data Model, selecting Import Schema... and configuring the EDI Schema Importer.

- Data from an EDI file can be loaded into Grooper using the Execute activity, configured with an EDI File - Load Data command.

Currently, Grooper can process the following EDI schemas natively:

- X12 835

- X12 837 Professional

- X12 837 Dental

- X12 837 Institutional

FYI: X12 refers to the organization that develops and maintains these EDI standards. X12 is the predominant EDI standard in the US and North America.

For more information, be sure to check out the XML Schema Integration article.
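To make the structure of X12 data concrete, here is a minimal Python sketch that splits a raw X12 transaction into segments and elements, which is the layout an imported EDI schema maps onto Data Elements. It is not how the EDI File - Load Data command works internally. It assumes the common `~` segment terminator and `*` element separator (real interchanges declare their delimiters in the ISA header), and the 835 fragment is made up for illustration.

```python
from collections import defaultdict


def parse_x12(raw: str, seg_term: str = "~", elem_sep: str = "*") -> list[list[str]]:
    """Split raw X12 text into segments, each a list of elements.

    Assumes fixed delimiters; real interchanges declare them in the ISA segment.
    """
    segments = []
    for chunk in raw.split(seg_term):
        chunk = chunk.strip()
        if chunk:  # skip the empty text after the final terminator
            segments.append(chunk.split(elem_sep))
    return segments


def group_by_id(segments: list[list[str]]) -> dict[str, list[list[str]]]:
    """Group segments by identifier (first element), e.g. 'CLP' -> all CLP segments."""
    grouped = defaultdict(list)
    for segment in segments:
        grouped[segment[0]].append(segment[1:])
    return dict(grouped)


# Made-up 835 fragment: one BPR (payment) segment and two CLP (claim payment) segments.
sample = "ST*835*0001~BPR*I*150.00*C*ACH~CLP*CLAIM1*1*100.00*90.00~CLP*CLAIM2*1*80.00*60.00~SE*5*0001~"

segs = parse_x12(sample)
by_id = group_by_id(segs)
for clp in by_id["CLP"]:
    print(clp[0], clp[3])  # claim ID (CLP01) and claim payment amount (CLP04)
```

Each CLP segment here would correspond to one repeating claim record in a Data Model built from the 835 schema.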

XML Data Interchange

Grooper users can now ingest and build XML files more effectively with our suite of "XML Data Interchange" capabilities. With this new functionality, users can:

- Create Data Elements from an XML schema file by right-clicking a Data Model, selecting Import Schema... and configuring the XML Schema Importer.

- Load data from an XML file that adheres to an XML schema using the Execute activity, configured with an XML File - Load Data command.

- Validate an XML document against an XML schema using the Execute activity, configured with an XML File - Validate Schema command.

- Generate an XML document according to an XML schema using a new Merge/Export Format (called XML Format).

For more information, be sure to check out the XML Schema Integration article.
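As a rough illustration of the load and generate directions, the Python sketch below maps an XML document onto a simple "data model" (two fields plus a table) and builds XML back from it, using the standard library's `xml.etree.ElementTree`. The element names are hypothetical, and this is not how the XML File - Load Data command or XML Format are implemented; schema validation is also left out, since Python's standard library has no XSD validator.

```python
import xml.etree.ElementTree as ET

# Hypothetical XML document; element names are illustrative, not a Grooper schema.
XML_TEXT = """
<Invoice>
  <InvoiceNumber>INV-1001</InvoiceNumber>
  <Vendor>Acme Corp</Vendor>
  <LineItems>
    <LineItem><Description>Widget</Description><Quantity>2</Quantity></LineItem>
    <LineItem><Description>Gadget</Description><Quantity>5</Quantity></LineItem>
  </LineItems>
</Invoice>
"""


def load_invoice(xml_text: str) -> dict:
    """Map XML elements onto fields and a table of rows."""
    root = ET.fromstring(xml_text.strip())
    return {
        # Single child elements map to simple fields.
        "InvoiceNumber": root.findtext("InvoiceNumber"),
        "Vendor": root.findtext("Vendor"),
        # A repeating element maps to the rows of a table.
        "LineItems": [
            {
                "Description": item.findtext("Description"),
                "Quantity": int(item.findtext("Quantity")),
            }
            for item in root.findall("LineItems/LineItem")
        ],
    }


def build_invoice(model: dict) -> str:
    """Reverse direction: generate XML from the same structure."""
    root = ET.Element("Invoice")
    ET.SubElement(root, "InvoiceNumber").text = model["InvoiceNumber"]
    ET.SubElement(root, "Vendor").text = model["Vendor"]
    items = ET.SubElement(root, "LineItems")
    for row in model["LineItems"]:
        item = ET.SubElement(items, "LineItem")
        ET.SubElement(item, "Description").text = row["Description"]
        ET.SubElement(item, "Quantity").text = str(row["Quantity"])
    return ET.tostring(root, encoding="unicode")


data = load_invoice(XML_TEXT)
print(data["InvoiceNumber"], len(data["LineItems"]))
```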

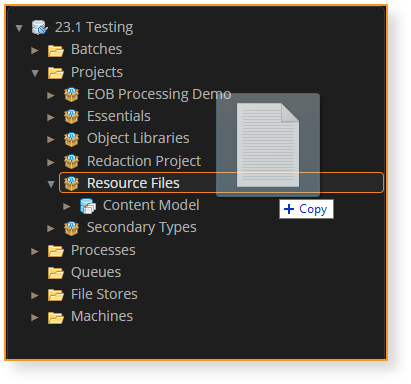

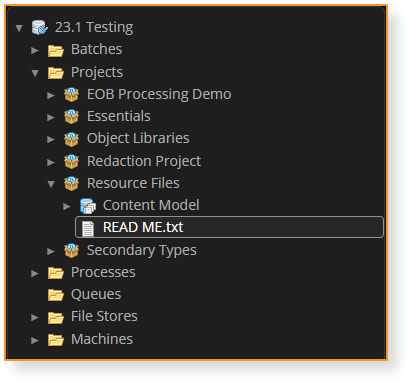

New node type: Resource Files

Resource Files allow users to store any kind of file in a Project. Simply drag a file from your file system and drop it into a Project (or a folder in a Project).

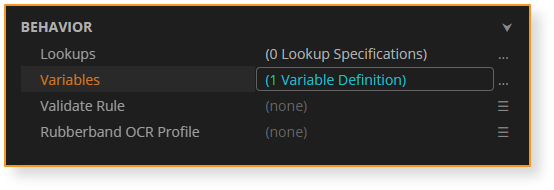

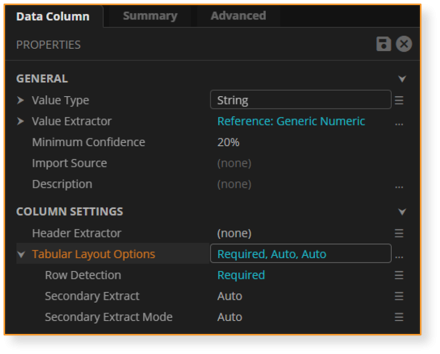

Expression Variables

Simplify expressions with Variables. Data Models, Data Sections and Data Tables have a new Variables property. Users can add a list of expression variables, each having a name, a value type and an expression.
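The idea behind expression variables (define a named, typed value once and reference it from other expressions) can be modeled in a few lines of Python. This is only a conceptual sketch: it is not Grooper's expression syntax, and the `ExpressionVariable` class and the Quantity, UnitPrice and TaxRate fields are hypothetical.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Any, Callable


@dataclass
class ExpressionVariable:
    """Hypothetical model of one entry in a Variables list."""
    name: str
    value_type: type
    expression: Callable[[dict], Any]  # receives fields plus earlier variables


def evaluate_variables(variables: list[ExpressionVariable], fields: dict) -> dict:
    """Evaluate each variable once, in order, converting the result to its value type."""
    scope = dict(fields)
    for var in variables:
        scope[var.name] = var.value_type(var.expression(scope))
    return scope


# Variables for a Data Model with hypothetical Quantity, UnitPrice and TaxRate fields.
variables = [
    ExpressionVariable("Subtotal", Decimal, lambda s: Decimal(s["Quantity"]) * Decimal(s["UnitPrice"])),
    ExpressionVariable("Tax", Decimal, lambda s: s["Subtotal"] * Decimal(s["TaxRate"])),
]


def total_is_valid(scope: dict, extracted_total: str) -> bool:
    """A field-level check that reuses the variables instead of repeating the math."""
    return Decimal(extracted_total) == scope["Subtotal"] + scope["Tax"]


scope = evaluate_variables(variables, {"Quantity": "3", "UnitPrice": "19.99", "TaxRate": "0.08"})
print(scope["Subtotal"], scope["Tax"], total_is_valid(scope, "64.7676"))
```

Because "Tax" refers to "Subtotal", the sketch evaluates variables in list order so that later variables can build on earlier ones.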

Lookup improvements

New Lookup type: XML Lookup

The new XML Lookup allows users to perform a lookup against data in an XML file. It uses XPath selectors with Grooper variables to select data in an XML hierarchy. This gives Grooper users a mechanism for querying data that falls somewhere between a Lexicon Lookup and a Database Lookup. It is more capable than a Lexicon Lookup, because XML data can be more complex than the simple "key-value" list you can query in a Lexicon. However, XML data is not as complex as a relational database. Use the XML Lookup when you need to query fairly static data that sits between a Lexicon and a database in terms of complexity.

Please Note! For most lookups, you reference Grooper Data Elements with the "@" symbol. However, "@" is already part of the XPath syntax. Data Elements are instead referenced with the "%" symbol for XML Lookups.
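The following Python sketch shows why "%" is needed: "@" stays reserved for XPath attributes, while "%FieldName" tokens are swapped for extracted values before the XPath runs. The vendor XML, the `VendorID` field, and the helper names are hypothetical, and Python's `ElementTree` supports only a subset of XPath, so treat this as an illustration of the binding step rather than of Grooper's query engine.

```python
import re
import xml.etree.ElementTree as ET

VENDORS_XML = """<Vendors>
  <Vendor id="V100"><Name>Acme Corp</Name><Terms>Net 30</Terms></Vendor>
  <Vendor id="V200"><Name>Globex</Name><Terms>Net 45</Terms></Vendor>
</Vendors>"""


def bind_variables(xpath: str, fields: dict) -> str:
    """Replace %FieldName tokens with field values; '@' is left alone for XPath."""
    def substitute(match: re.Match) -> str:
        value = str(fields[match.group(1)])
        if "'" in value:
            # No escaping in this sketch, so refuse values that would break the quoted literal.
            raise ValueError(f"cannot embed {value!r} in a single-quoted XPath literal")
        return value
    return re.sub(r"%(\w+)", substitute, xpath)


def xml_lookup(root: ET.Element, xpath: str, fields: dict) -> list[str]:
    """Run the bound XPath and return the text of every matching element."""
    return [el.text for el in root.findall(bind_variables(xpath, fields))]


root = ET.fromstring(VENDORS_XML)
query = "./Vendor[@id='%VendorID']/Terms"
print(xml_lookup(root, query, {"VendorID": "V200"}))  # ['Net 45']
```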

New Trigger mode: Custom

A new Custom option for a lookup's Trigger Mode now allows conditional lookup execution. Selecting Custom exposes a Trigger for the lookup: an expression that defines the conditions under which the lookup executes.

This can make lookups more efficient by preventing a query from executing unless certain conditions are met. This is particularly helpful for Database Lookups that query large databases during Review. If a lookup only needs to run under certain conditions, the new Custom Trigger Mode saves you and your reviewers time by executing the query only when those conditions are met.

This also opens the door to "waterfall" query approaches: if the first (generally more specific) query fails to produce a result, you can fall back on a second (generally looser) query.

Prescan Threshold for Labelset-Based Classification

Before 2023.1, the Labelset-Based classification method had a scaling problem: it became less efficient as more Document Types (and therefore more Label Sets) were added to the Content Model, because every Label Set had to be loaded and checked against each document.

The new Prescan Threshold property available to Labelset-Based classification resolves this by running only the Label Sets that are likely to succeed, rather than every Label Set on each document. Prescan Threshold decides this based on word statistics: a minimum percentage of a Label Set's words must be present on the document for that Label Set to be considered for classification. This has been shown to speed up classification by up to 15x compared to running without a Prescan Threshold, extending the Labelset-Based classification method to Content Models with thousands of Document Types.
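The prescan idea can be sketched as a cheap filter that runs before full Label Set matching. In this Python sketch the "word statistic" is simply the fraction of a Label Set's distinct words found on the document; that measure, the tokenizer, and the sample Label Sets are assumptions for illustration, not Grooper's exact calculation.

```python
import re


def words(text: str) -> set[str]:
    """Distinct lower-cased word tokens (a simplification of real word statistics)."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def prescan(document_text: str, label_sets: dict[str, list[str]], threshold: float) -> list[str]:
    """Return the Document Types whose Label Sets are worth running on this document."""
    doc_words = words(document_text)
    candidates = []
    for doc_type, labels in label_sets.items():
        label_words = words(" ".join(labels))
        if not label_words:
            continue
        # Fraction of this Label Set's distinct words that appear on the document.
        coverage = len(label_words & doc_words) / len(label_words)
        if coverage >= threshold:
            candidates.append(doc_type)
    return candidates


# Hypothetical Label Sets and document text.
label_sets = {
    "Invoice": ["Invoice Number", "Invoice Date", "Bill To", "Amount Due"],
    "Purchase Order": ["PO Number", "Ship To", "Vendor"],
    "W-2": ["Wages, tips, other compensation", "Employer identification number"],
}
doc = "INVOICE Invoice Number: 1001 Invoice Date: 01/05/2023 Bill To: Acme Amount Due: $50.00 Ship To: Acme"

# Only these candidates would go on to full Label Set matching.
print(prescan(doc, label_sets, threshold=0.7))  # ['Invoice']
```

Lowering the threshold admits more candidates (here, 0.5 would also admit "Purchase Order"), trading speed for a lower risk of filtering out the correct Document Type.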

New "Summary" Tabs

There are new and improved "Summary" tabs on Data Models, Data Elements, and Document Types in the web client. These summaries give Grooper Design users useful "at a glance" information about these objects in your Content Models. Furthermore, these summaries have helpful links to associated objects, allowing for quicker navigation through complicated Content Models with large numbers of Data Elements and Document Types.

Data Model "Summary" tabs now list the expressions of every Data Element configured with one (Default Value, Calculated Value, Is Required, etc.).

- Clicking on a listed Data Element will take you directly to that element in the Data Model.

Data Element "Summary" tabs also list any configured expressions for the selected element. This summary also lists any Document Types configured with overrides for the selected element.

- Clicking on a listed Document Type will take you directly to the Document Type.

Document Type "Summary" tabs also have an "Overrides" section, listing all Data Elements being overridden for the selected Document Type.

- Clicking on a listed Data Element will take you directly to that element.

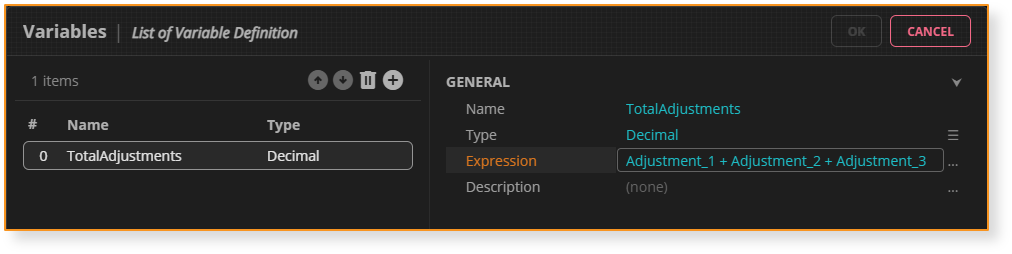

Changes to table extraction

Header-Value is out. Tabular Layout is in

In version 2021, the Tabular Layout table extraction method was created as an improved version of the Header-Value table extraction method. Tabular Layout's logic is similar to Header-Value in that it uses column header labels and value extractors to determine a table's structure, detect columns and rows, and extract cell data. However, Tabular Layout is superior to Header-Value in the following ways:

- Tabular Layout's initial setup is simpler.

- Tabular Layout has better built-in column and row detection.

- Tabular Layout is more fully featured, capable of doing things Header-Value cannot.

- Tabular Layout is Label Set supported.

For these reasons, Tabular Layout will fully replace Header-Value in version 2023.1. Any Data Table using Header-Value will be converted to Tabular Layout upon upgrade.

Tabular Layout will greatly improve your table extraction results, particularly if you familiarize yourself with all Tabular Layout can do for you.

⚠ PLEASE NOTE: If you are currently using Header-Value in production, take extra care when testing your upgrade to ensure your results are consistent. You may need to adjust aspects of your table extraction setup to get the best results possible after upgrading. ALWAYS BACK UP YOUR GROOPER DATABASE BEFORE UPGRADING TO ENSURE YOU CAN REVERT IF NECESSARY.

Known conversion issues

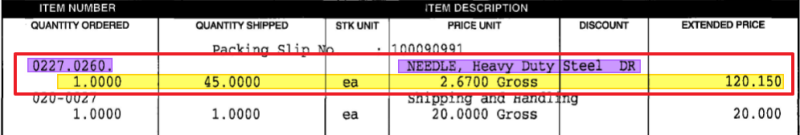

New Tabular Layout property: Find Column Positions

There is a new Tabular Layout property called Find Column Positions.

- This property is found in the Row Detection sub-properties.

- This property was added to Tabular Layout to make the upgrade logic from Header-Value to Tabular Layout possible.

The property enables row detection in cases where required column values do not vertically align with the column header.

- If set to True, Grooper will expand the left or right boundaries of the required column's header if values are detected nearby.

- Additionally, built-in logic ensures Grooper will not adjust a column's bounds if doing so would encroach on other column headers.

- PLEASE NOTE: Enabling this property causes Grooper to expand column headers only if there are no aligned value extractor hits. Column positions will not be expanded if the column's value extractor produces at least one hit.

- "Required" columns are those Data Columns with the Tabular Layout Options > Row Detection property set to Required.

- This property has no impact on column headers whose boundaries have already been expanded due to line detection.

- PLEASE NOTE: Enabling this property can produce unpredictable results, as you have no control over how Grooper looks left or right for nearby values. If using Label Sets, it is best practice to leave this property set to False and adjust the width of a header label manually using the label's Padding properties.
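The rules above can be sketched for a single required column in Python, treating header labels and value hits as horizontal spans. The `max_gap` search distance is a hypothetical stand-in for how far Grooper looks for nearby values (which, as noted, you cannot control), and line-detection expansion is not modeled. This is an illustration of the described behavior, not Grooper's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Span:
    """Horizontal extent of a header label or value hit."""
    left: float
    right: float

    def overlaps(self, other: "Span") -> bool:
        return self.left < other.right and other.left < self.right


def find_column_position(header: Span, hits: list[Span],
                         other_headers: list[Span], max_gap: float) -> Span:
    """Return the (possibly expanded) header bounds for one required column."""
    # Aligned hits exist: the header is left alone.
    if any(hit.overlaps(header) for hit in hits):
        return header
    # Only consider hits within max_gap (an assumed search distance) of the header.
    nearby = [h for h in hits
              if h.right >= header.left - max_gap and h.left <= header.right + max_gap]
    if not nearby:
        return header
    expanded = Span(min([header.left] + [h.left for h in nearby]),
                    max([header.right] + [h.right for h in nearby]))
    # Never expand into another column's header.
    if any(expanded.overlaps(other) for other in other_headers):
        return header
    return expanded


amount = Span(400, 450)  # "Amount" header; values are right-aligned past it
print(find_column_position(amount, [Span(455, 480)], [Span(300, 380)], max_gap=40))
```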

New Tabular Layout property: Merge Multiple Instances

There is a new Tabular Layout property called Merge Multiple Instances.

- This property is found in the Row Detection sub-properties.

- This property was added to Tabular Layout to make the upgrade logic from Header-Value to Tabular Layout possible.

This property changes the standard way Footer extractors/labels work for Tabular Layout.

When set to False, a footer result will behave as it always has for Tabular Layout.

- Grooper will detect rows starting at the table's row of header labels up to the first instance of a footer.

- No rows will be detected after the footer's location.

When set to True, Grooper will combine spans of rows between multiple instances of footer results/labels.

- Grooper will detect rows starting at the table's row of header labels as normal and stop detecting rows at the first instance of a footer. The difference is that if header labels are found on the next page, Grooper will continue detecting rows up to another instance of a footer (or the end of the document if no second footer is present). Row results from the two (or more) pages are then merged into a single table.

- Results from multiple spans of rows between multiple headers and footers will then be merged together in a single table.

- This property is useful for documents like invoices that commonly have footers at the bottom of a line items table on each page of a multipage invoice.
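The difference between the two settings can be sketched in Python over a document's lines in reading order. To keep the sketch short, each line is pre-labeled as `header`, `row`, `footer` or `other`; in Grooper, rows, headers and footers are found by extractors or Label Sets. The invoice lines are hypothetical.

```python
def detect_rows(lines: list[tuple[str, str]], merge_multiple_instances: bool) -> list[str]:
    """Collect table rows from (kind, text) lines in reading order.

    kind is 'header', 'row', 'footer' or 'other' (pre-labeled for this sketch).
    """
    rows: list[str] = []
    in_table = False
    for kind, text in lines:
        if kind == "header":
            in_table = True  # header labels start (or restart) a span of rows
        elif kind == "footer" and in_table:
            if not merge_multiple_instances:
                break  # standard behavior: no rows after the first footer
            in_table = False  # wait for header labels on a later page
        elif kind == "row" and in_table:
            rows.append(text)
    return rows


# Hypothetical two-page invoice with a footer at the bottom of each page's table.
invoice = [
    ("header", "Description Qty Amount"),
    ("row", "Widget"),
    ("row", "Gadget"),
    ("footer", "Continued on next page"),
    ("other", "Page 1 of 2"),
    ("header", "Description Qty Amount"),
    ("row", "Gizmo"),
    ("footer", "Total"),
]
print(detect_rows(invoice, False))  # ['Widget', 'Gadget']
print(detect_rows(invoice, True))   # ['Widget', 'Gadget', 'Gizmo']
```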

Data Element Extensions

"Data Element Extensions" are a new way of handling Data Element properties based on a parent Data Element's configuration. Depending on how a parent Data Element is configured, properties can now extend to its child Data Elements.

Currently, we are implementing this functionality to move certain table extraction properties from the Data Table to relevant child Data Columns.

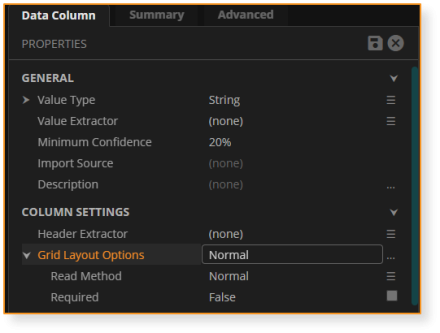

For Tabular Layout:

For Grid Layout:

Batch management & Review enhancements

"FIFO" Batch Priority

Traditionally in Grooper, Batches are created with a set priority (between "1" and "5"). When all Batches have the same priority, processing can bottleneck around processor-intensive activities. As a result, the first Batch imported isn't necessarily the first Batch to finish processing or to reach a Review step.

Batches can now be processed with a "First In, First Out" priority in Grooper. Import Providers have a Batch Creation option called Increment Priority.

Turning this property on sets each Batch's priority to the Batch's Row ID value from the Grooper database. Row IDs are assigned to Grooper nodes sequentially as items are created in the Grooper database. Since lower priority values are processed first, Batches created earlier (with lower Row IDs) will be processed before those created later, achieving FIFO behavior.

Please note the following:

- Enabling Increment Priority will override whatever value is set for the Priority setting in the Batch Creation settings.

- Batch priority values will not increment by exactly "1".

- Row IDs are also generated for Batch Folders created by Import Jobs. This means there may be gaps between the numbers assigned to each Batch's priority.

- Example: Batch #1 is imported with 10 documents. Its Row ID is 100, so its priority is 100. Batch #2 is imported next. Its Row ID is 111, because Batch #1's 10 documents used Row IDs 101 through 110. Therefore, Batch #2's priority is 111.
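The arithmetic in the example above can be reproduced with a small Python simulation. It uses the same simplified model as the example: each Batch node takes the next Row ID, each imported document takes one more, and nothing else in the database consumes Row IDs in between (a real Grooper database may create other nodes, widening the gaps). Function and key names are hypothetical.

```python
from itertools import count


def import_batches(document_counts: list[int], first_row_id: int) -> list[dict]:
    """Assign sequential Row IDs: the Batch node first, then one per imported document."""
    next_row_id = count(first_row_id)
    batches = []
    for number, documents in enumerate(document_counts, start=1):
        batch_row_id = next(next_row_id)
        for _ in range(documents):
            next(next_row_id)  # each document's Batch Folder also takes a Row ID
        # Increment Priority: the Batch's priority is its own Row ID.
        batches.append({"name": f"Batch #{number}", "priority": batch_row_id})
    return batches


batches = import_batches([10, 4, 7], first_row_id=100)
# Lower priority values are processed first, so sorting by priority gives FIFO order.
for batch in sorted(batches, key=lambda b: b["priority"]):
    print(batch["name"], batch["priority"])
```

This prints priorities 100, 111 and 116: the gaps vary with each Batch's document count, but import order is always preserved.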

Dynamic Value Lists

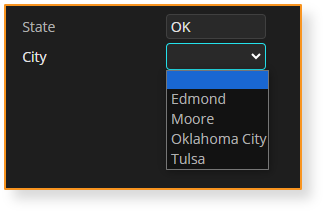

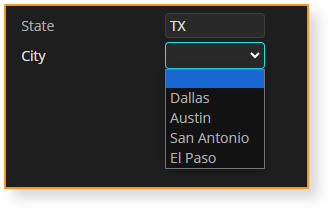

Data Fields have a new List Values option: "Include Lookup Results"

- When enabled, the field will appear as a drop-down list in Review.

- The list will include all distinct values returned from Lookups targeting the field.

- Be Aware! Lookups used to populate value lists in this way should have their Conflict Disposition set to Ignore as the lookup is expected to return multiple results.

In the example here, a Database Lookup is configured to return various cities for a particular value extracted for a "State" field. The "City" field's drop-down list changes according to the lookup's results.
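Building the drop-down from lookup results amounts to collecting the distinct values returned for the target field. This minimal Python sketch assumes the lookup has already returned its rows; the rows and field names are hypothetical. Because several rows come back for one State, a lookup that expected a single result would see a conflict, which is why Conflict Disposition should be set to Ignore.

```python
def value_list(lookup_rows: list[dict], target_field: str) -> list[str]:
    """Distinct, non-empty values returned for the target field, in first-seen order."""
    # dict.fromkeys keeps insertion order and drops duplicates.
    return list(dict.fromkeys(row[target_field] for row in lookup_rows if row.get(target_field)))


# Hypothetical rows a Database Lookup might return for State = "OK".
rows = [
    {"State": "OK", "City": "Tulsa"},
    {"State": "OK", "City": "Norman"},
    {"State": "OK", "City": "Tulsa"},
    {"State": "OK", "City": "Edmond"},
]
print(value_list(rows, "City"))  # ['Tulsa', 'Norman', 'Edmond']
```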

Review improvements

This section is a work in progress and will be expanded at a later date

- Swap panes

- Horizontal/Vertical view toggle

- Double-click capture (alternative to rubberband OCR)

- Data Grid zoom in/zoom out

- Sticky field support in web client

- Display labels (Use “\auto” to use labels in Label Sets!)

Misc other changes

- The Labeled Value extractor can now always function without a Value Extractor configured.

- The Labeled Value extractor now has a configurable Footer Extractor property.

- In the Label Set editor, you can use “Alt + Double Click” to capture custom labels.

- In the Label Set editor, Data Elements with no labels defined display a checkbox. Clicking this checkbox hides these Data Elements in Review.

- This version fixes a long-standing issue with Label Sets and Overrides. When you enable a Labeling Behavior and collect labels for a Data Field or Data Column, those labels will always be used in place of a Label Extractor or Header Extractor. To avoid confusion, you should NEVER both collect a label and override it. Simply choose one or the other, depending on your needs.

- The Extract activity now has a Rules property.

- A new "Change Priority" command can be applied to Batches from the Batches page.

- Documents can now be imported as the root of a Batch.

- CSS improvements.

- The "Publish To Repository" command now works with any descendant of the Projects folder. Note: This functionality was added late in the development cycle. Currently, nodes may only be published one at a time. Future development will include the capability to publish multiple nodes by multi-selecting them.

- New Data Export functionality: Custom Statements. Custom Statements allow users to configure a custom SQL statement using an expression, allowing for more nuanced SQL database exports. For example, a user could modify existing records in a database with an UPDATE command using Custom Statements. Users define two properties to configure each Custom Statement:

Expressions and Scripting Related Changes

The

Next Step expressions no longer require